Alteryx Designer Desktop Discussions


Block Until Done not working as expected

Hi,

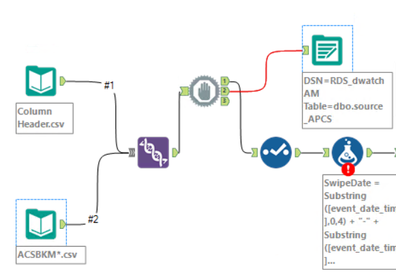

I have a requirement to read a bunch of .csv files at once, process them for some calculations, and then dump the original rows into another source table. For this, I tried to use the Block Until Done tool as shown above, where I expected the flow from output 1 to complete first and the data dump to happen from output 2. I observed that even when there is an error in the processing part, the data still reaches the source table, which is not correct.

Am I missing something here, or is there some other way to achieve this?


How about the Parallel Block Until Done tool from the CReW Macro Pack: http://www.chaosreignswithin.com/p/macros.html

A way to think of the Parallel Block Until Done tool is: the stream into input 1 must be fully complete (with no stopping errors) before the stream to output 2 is processed.
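To make that contract concrete, here is a toy Python model of it. It is not how the CReW macro is built; the function names `parallel_block_until_done` and `process` and the record shapes are hypothetical. A stopping error is modelled as a Python exception: stream 1 is drained completely first, so an exception raised by any step feeding input 1 propagates before a single record from input 2 is read.

```python
from typing import Callable, Iterable, Iterator, List

Record = dict


def parallel_block_until_done(
    input1: Iterable[Record],
    input2: Iterable[Record],
    output2_sink: Callable[[Record], None],
) -> List[Record]:
    """Toy model of the Parallel BUD contract (hypothetical, not the CReW macro).

    Input 1 is consumed completely before anything from input 2 moves. If an
    upstream step raises while producing input 1 (a "stopping error"), the
    exception propagates and input 2 is never read.
    """
    output1 = list(input1)  # forces every upstream step of stream 1 to finish
    for record in input2:
        output2_sink(record)
    return output1


def process(rows: Iterable[Record]) -> Iterator[Record]:
    """Hypothetical calculation step that fails on a negative amount."""
    for row in rows:
        if row["amount"] < 0:
            raise ValueError(f"calculation failed for {row}")
        yield row


if __name__ == "__main__":
    source_table: List[Record] = []
    bad_rows = [{"amount": 5}, {"amount": -1}]
    try:
        parallel_block_until_done(process(bad_rows), iter(bad_rows), source_table.append)
    except ValueError as exc:
        print("stream 1 failed:", exc)
    print("rows written to source table:", len(source_table))  # 0
```

The key design point is that `list(input1)` pulls stream 1 all the way through its upstream steps before the loop over `input2` starts, which is what "fully complete (with no stopping errors)" means in this model.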


Hi Joe,

That worked perfectly. But why didn't the Block Until Done tool help in the above case? My original thought was that this would be doable using the built-in product tools, without any external macro.


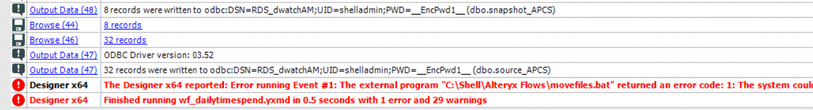

Also, after some more testing, I found a problem with how this works.

I was trying to move the source .csv files to an archive folder after processing the data (after writing to a snapshot table but before writing the data to the source table). I am doing this via a batch file in the Events section of the workflow. So the flow in a nutshell is:

Read data from CSV files -> Process the data -> Write to a final table -> Move the files to an archive folder -> Write the source data to a source table.

But I found that if this batch file returns an error (which is basically the end of stream 1), the data is still written to the source table. Is this happening because it is an event acting on an external file, so Alteryx does not treat it as an error within the flow?

Below is a screenshot of the error:


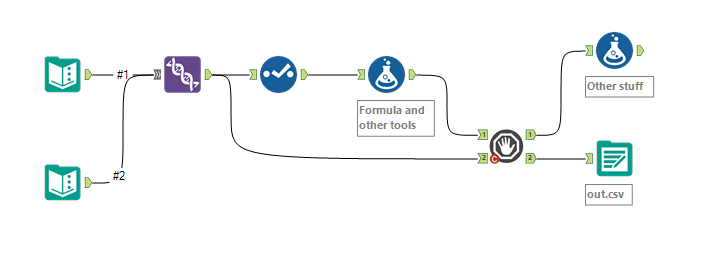

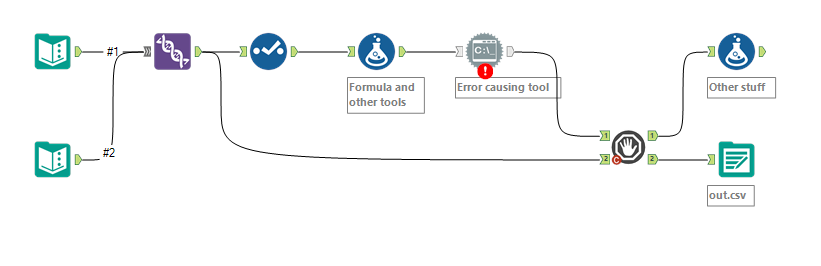

Can you move the step that has the error to happen before the stream goes into input 1?

For example, in the following, the error prevents the output file from being written:

The stream into input 1 must be fully complete (with no stopping errors) before the stream to output 2 is processed.

Stopping errors that happen after output 1 will not prevent the stream of output 2 from running. Only stopping errors that happen before input 1 will prevent output 2 from being run.


There are a couple of things at play here.

- The Block Until Done tool will not wait for Stream 1 to complete before processing Stream 2; it only waits until it has finished feeding all the data out of Stream 1 before it starts feeding data out of Stream 2. In general, tools in Alteryx know about upstream tools but not necessarily about downstream tools.

- Hence, if there is an error downstream of one of the Block Until Done tool's outputs, the BUD tool doesn't care... or even know about it.

That might help explain why you have to move the processing upstream, and why the Parallel BUD tool is still such a valuable add-on.
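A toy Python model of that behaviour may help. It is a simplification, not Alteryx engine code: `block_until_done` and the message `log` are hypothetical, and the model assumes a downstream error is reported to the message log rather than cancelling the whole run. The BUD caches its input, pushes every record out of output 1, then every record out of output 2; an error raised by a tool downstream of output 1 never travels back to the BUD, so output 2 still runs.

```python
from typing import Callable, Iterable, List

Record = dict
Tool = Callable[[Record], None]


def block_until_done(records: Iterable[Record], outputs: List[Tool], log: List[str]) -> None:
    """Toy model of the standard Block Until Done tool (hypothetical).

    All input is cached first, then fed out of output 1, then output 2, and so
    on. The BUD only pushes records; it has no view of what happens downstream.
    """
    cached = list(records)  # wait until the whole input stream has arrived
    for n, downstream in enumerate(outputs, start=1):
        for record in cached:
            try:
                downstream(record)
            except Exception as exc:
                # Assumption: the engine reports the downstream error in its
                # message log; the BUD never sees it and carries on regardless.
                log.append(f"output {n}: {exc}")


if __name__ == "__main__":
    processed: List[Record] = []
    source_table: List[Record] = []
    log: List[str] = []

    def process(record: Record) -> None:
        """Hypothetical calculation tool connected to output 1."""
        if record["amount"] < 0:
            raise ValueError("calculation failed")
        processed.append(record)

    rows = [{"amount": 5}, {"amount": -1}]
    block_until_done(rows, [process, source_table.append], log)
    print(len(source_table), log)  # 2 ['output 1: calculation failed']
```

In this model, moving the failing step upstream changes the outcome: if `records` is a generator that raises, `list(records)` raises before either output is fed, so nothing reaches the source table.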


Hello, I am aware that I am replying to an old post, but I have the same issue currently.

I am not using the CReW macros, as I schedule workflows via our Gallery.

What I have noticed in revisiting the BUD object example is that there is a message object on them that has variable settings.

Can anyone provide an example of how to use the BUD object? I am thinking that I write the records from step 1 to SQL Server.

Could I then have something in the message object on the second step to check that step 1 has written records before continuing with step 2?

cheers

Steve
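The check-then-proceed idea above can be sketched outside Alteryx. This only illustrates the logic; it is not a feature of the BUD or the message object. It uses Python's built-in `sqlite3` as a stand-in for SQL Server, and the table names `final_table` and `source_table` and the functions `load_with_guard`, `count_rows` and `make_db` are hypothetical. Step 1 writes the processed rows, the guard counts how many actually landed, and step 2 runs only when that count matches what was sent.

```python
import sqlite3
from typing import List, Tuple

Row = Tuple[int, float]


def count_rows(conn: sqlite3.Connection, table: str) -> int:
    # Table names come from trusted code in this sketch, never from user input.
    (n,) = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()
    return n


def load_with_guard(conn: sqlite3.Connection, rows: List[Row]) -> bool:
    """Hypothetical two-step load: step 2 runs only if step 1 wrote every row."""
    before = count_rows(conn, "final_table")
    # Step 1: write the processed rows. OR IGNORE silently skips a duplicate id,
    # which stands in for the kind of partial write the guard should catch.
    conn.executemany("INSERT OR IGNORE INTO final_table (id, amount) VALUES (?, ?)", rows)
    conn.commit()
    written = count_rows(conn, "final_table") - before
    if written != len(rows):
        return False  # guard failed: step 2 never runs (step 1's rows stay committed)
    # Step 2: dump the original rows into the source table.
    conn.executemany("INSERT INTO source_table (id, amount) VALUES (?, ?)", rows)
    conn.commit()
    return True


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE final_table (id INTEGER PRIMARY KEY, amount REAL)")
    conn.execute("CREATE TABLE source_table (id INTEGER, amount REAL)")
    return conn


if __name__ == "__main__":
    conn = make_db()
    print(load_with_guard(conn, [(1, 5.0), (1, 3.0)]))  # False: duplicate id dropped in step 1
    print(count_rows(conn, "source_table"))  # 0
```

Comparing the row count against the number of rows sent, rather than only asking whether step 1 raised an error, also catches writes that succeed partially without complaint.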
