Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening! Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think it could be improved:

> by showing the input dimension constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> and finally, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool says it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help many more people understand the many use cases that image recognition makes possible.
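For the second point, a balanced split can be approximated by undersampling: cap every label at the size of the smallest one, then split each label with the same train/validation ratio. Below is a minimal Python sketch, assuming the training set is available as (image path, label) pairs; `balanced_split` and its parameters are hypothetical and not part of the Image Recognition tool.

```python
import random
from collections import defaultdict


def balanced_split(samples, train_fraction=0.8, seed=0):
    """Split (path, label) pairs into train/validation lists with the same
    number of images per label, by undersampling the larger labels."""
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append(path)

    # Every label is capped at the size of the smallest label.
    per_label = min(len(paths) for paths in by_label.values())
    n_train = int(per_label * train_fraction)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, valid = [], []
    for label, paths in sorted(by_label.items()):
        chosen = rng.sample(paths, per_label)
        train += [(p, label) for p in chosen[:n_train]]
        valid += [(p, label) for p in chosen[n_train:]]
    return train, valid
```

Undersampling discards images from the larger labels; oversampling (repeating images of the smaller labels) is the usual alternative when data is scarce.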

Thank you again

Kévin VANCAPPEL (France ;-))


Dynamic Input should either:

(a) have the option of merging files with different field schemas

(b) Return a list of rejected filepaths

One of the problems I have with Alteryx is that I frequently need to input a batch of files, a few of which have an extra or missing field. The extra/missing field is often unimportant to me, but it means that Dynamic Input doesn't work.
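Both behaviours can be sketched in a few lines. Below is a minimal Python sketch, assuming each file has already been read into a list of row dictionaries (for example with `csv.DictReader`); `union_by_field_name` and its `mode` parameter are hypothetical and not a Dynamic Input option.

```python
def union_by_field_name(tables, mode="merge"):
    """Combine per-file row lists whose fields may differ.

    tables: dict mapping file path -> list of row dicts.
    mode="merge":  keep every file; missing fields become None (option a).
    mode="strict": keep only files whose fields match the first file and
                   report the others as rejected (option b).
    Returns (rows, fields, rejected_paths).
    """
    paths = list(tables)
    if not paths:
        return [], [], []

    def fields_of(path):
        rows = tables[path]
        return list(rows[0].keys()) if rows else []

    reference = fields_of(paths[0])
    kept, rejected = [], []
    for path in paths:
        if mode == "strict" and set(fields_of(path)) != set(reference):
            rejected.append(path)
        else:
            kept.append(path)

    # Output schema: the first file's fields, then extra fields in order of appearance.
    fields = list(reference)
    for path in kept:
        for name in fields_of(path):
            if name not in fields:
                fields.append(name)

    rows = [{name: row.get(name) for name in fields} for path in kept for row in tables[path]]
    return rows, fields, rejected
```

In "merge" mode an extra field simply appears as an empty column for the files that lack it, which matches the case above where the extra/missing field is unimportant.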

- API SDK
- Category Developer
- Enhancement

Hello,

I would really appreciate the ability to store our templates in a Teams/SharePoint (or equivalent) folder. However, this doesn't work today.

Best regards,

Simon

- Desktop Experience
- Enhancement
- User Settings

Hello,

There are several dozen data sources in the list... maybe it would be useful to have a search box for it?

Best regards,

Simon

- Category Input Output
- Data Connectors
- Enhancement

A client just asked me if there was an easy way to convert regular Containers to Control Containers. Unfortunately, we have to delete the old container and re-add the tools to the new Control Container.

What if we could just right click on the regular Container and say "Convert to Control Container"? Or even vice versa?!

Hello,

As of today, only a few packages are embedded with the Alteryx Python tool. However:

1/ Python is becoming more and more popular. We will use this tool intensively in the coming years.

2/ Python is built on its ecosystem of existing packages. This is the strength of the language.

3/ In Alteryx, adding a package is not that easy: you need admin rights, and if you want your colleagues to open your workflow, each of them has to install the package as well. In corporate environments, this means losing time, sometimes several days on a project.

Personally, I would like Polars and DuckDB, which are much faster than pandas.

- API SDK
- Category Developer
- Enhancement

Currently, when a Unique tool is used and a field is removed upstream, the workflow fails. If you only have one or two Unique tools, it is no big deal, but in a very complex workflow you have to click into each of those tools to update it. This is very problematic and takes a lot of time, following all the branches connected after the first Unique tool. My suggestion is to make this a warning instead of an error, or to provide an option to choose between error and warning, as the Union tool does. This way, people can decide how they want the tool to react when fields are removed.
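The proposed option can be illustrated with a small sketch. It is written in Python and assumes rows arrive as dictionaries. `unique_rows` and its `on_missing` parameter are hypothetical. They only illustrate "error vs. warning" behaviour and are not the actual Unique tool.

```python
import warnings


def unique_rows(rows, key_fields, on_missing="error"):
    """Split rows into (unique, duplicates) on key_fields.

    on_missing="error":   raise if a key field no longer exists upstream.
    on_missing="warning": warn, then deduplicate on the key fields that remain.
    """
    available = set(rows[0]) if rows else set()
    missing = [f for f in key_fields if f not in available]
    if missing:
        message = f"Unique: fields not found upstream: {missing}"
        if on_missing == "error":
            raise KeyError(message)
        warnings.warn(message)
        key_fields = [f for f in key_fields if f in available]
        if not key_fields:
            # Nothing left to deduplicate on: pass every row through as unique.
            return list(rows), []

    seen = set()
    unique, duplicates = [], []
    for row in rows:
        key = tuple(row[f] for f in key_fields)
        if key in seen:
            duplicates.append(row)
        else:
            unique.append(row)
            seen.add(key)
    return unique, duplicates
```

With `on_missing="warning"`, removing one key field upstream degrades the deduplication instead of stopping every downstream branch.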

- Category Preparation
- Desktop Experience
- Enhancement

Currently, if I have a connection between two tools, as in the example below:

I can drag and drop a new tool onto the connection between these tools to add it in:

And Designer updates the connections nicely. However, if I select multiple tools and try to drop them together onto a connection, it won't allow this, and it moves the connection out of the way to avoid an overlap.

Therefore, as a QoL improvement, it would be great to have a multi-drop option on connections between tools.

- Enhancement
- UX

Dear Alteryx,

One day, when I pass from this life to the next, I'll get to see and know everything! Loving data, one of my first forays into that infinite knowledge pool will be to quantify the time lost and mistakes made because Excel defaults big numbers like customer identifiers to scientific notation. My second foray will be to discover the time lost and mistakes made due to

Unexpanded Mouse Wheel Drop Down Interaction

Riveting right? What is this? It's super simple, someone (not just Alteryx) had the brilliant idea that the mouse wheel should not just be used to scroll the page, but drop down menus as well. What happens when both the page and the drop down menu exist, sometimes disaster but more often annoyance. Case in point, configuring an input tool.

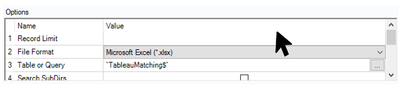

See the two scenarios below, my input is perfectly configured, I'll just flick my scroll wheel to see what row I decided to start loading from

Happy Path, cursor not over drop down = I'll scroll down for you ↓

Sad Path, cursor happened to hover the dropdown sometimes on the way down from a legit scroll = what you didn't want Microsoft Excel Legacy format?

And you better believe Alteryx LOVES having it's input file format value changed in rapid succession., hold please...

Scroll wheels should scroll, but not for drop down menus unless the dropdown has been expanded.

Oh and +1 for mouse horizontal scrolling support please.

- Desktop Experience
- Enhancement
- New Request

For an iterative macro, there are generally two output anchors. One of them is for the iteration, and it normally has no output (whether there is an error or not).

It would be good to have an option to remove this anchor when using the macro in a workflow,

so other users don't need to work out which one is the true output and which one is just the iteration.

Additionally, it would be nice if this could apply to the input anchor as well.

(I just built a macro where I don't need the start input, but the input needs to be the iteration input.)

- Category Interface
- Desktop Experience
- Enhancement

The idea behind encrypting or locking a workflow is good: it helps users maintain the workflow as designed.

However, when a user reaches a level of maturity equal to or greater than that of the builder, or when changes are required, the current practice is to keep both a locked and an unlocked version of the workflow so that it can still be changed in the future.

It would be much simpler if we could lock and unlock workflows with a password. Users could then maintain and keep the passwords so that they can continue working on the workflow.

Not everybody is on Server yet, so this feature would be very helpful for control before a Server migration. Otherwise, the only option is to password-protect a folder containing the workflow package, then re-lock a new saved file each time a change is made or someone new takes over on-prem.

- Desktop Experience
- Enhancement
- User Settings
- UX

Hello,

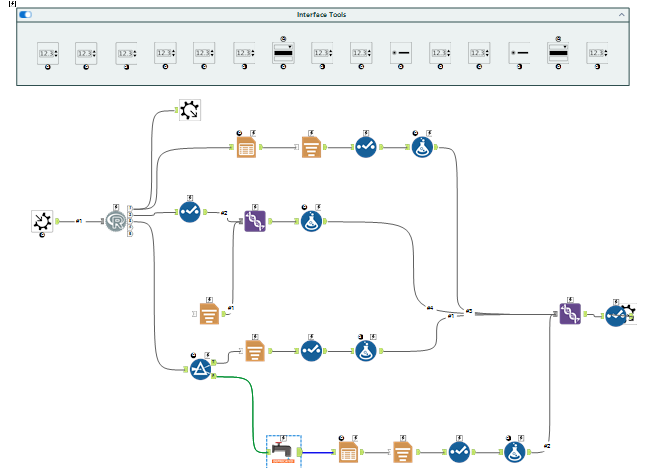

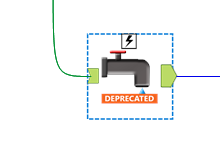

A lot of tools that use R Macro (and not only preductive) are clearly outdated in several terms :

1/the R package

2/the presentation of the macro

3/the tools used

E.g. : the MB_Inspect

Ugly but wait there is more :

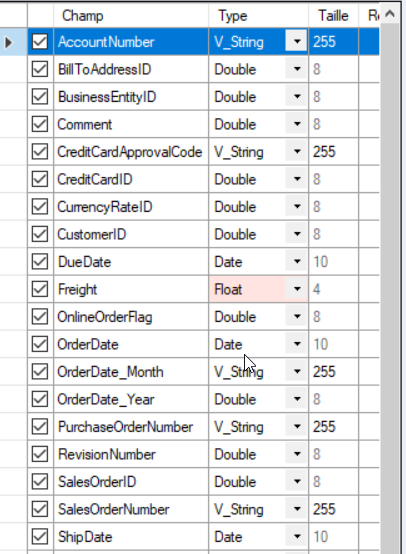

Also ; the UI doesn't help that much with field types.

Best regards,

Simon

- Category Predictive
- Desktop Experience
- Enhancement

Hello all,

We all know that != is the Alteryx operator for inequality. However, I suggest implementing <> as another inequality operator. Why?

<> is a very common operator in many languages and tools such as SQL, Qlik, or Tableau. It's much more intuitive than != and it would help interoperability and copy/pasting expressions between tools, or from In-Database mode to in-memory mode and back.
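To illustrate the copy/paste point, a pre-processing step that rewrites `<>` to `!=` only has to skip quoted strings and bracketed field names. Below is a minimal Python sketch. It assumes Alteryx-style `[Field]` references and single- or double-quoted string literals. `normalize_inequality` is hypothetical.

```python
# Characters that open a region to copy verbatim, mapped to the character that closes it.
OPENERS = {"'": "'", '"': '"', "[": "]"}


def normalize_inequality(expression):
    """Rewrite the SQL-style <> operator as != outside strings and [field] names."""
    out = []
    closer = None  # closing character of the literal/field we are inside, if any
    i = 0
    while i < len(expression):
        ch = expression[i]
        if closer is not None:
            out.append(ch)
            if ch == closer:
                closer = None
            i += 1
        elif ch in OPENERS:
            closer = OPENERS[ch]
            out.append(ch)
            i += 1
        elif expression.startswith("<>", i):
            out.append("!=")
            i += 2
        else:
            out.append(ch)
            i += 1
    return "".join(out)
```

For example, `normalize_inequality("[Region] <> 'West'")` returns `"[Region] != 'West'"`, while a `<>` inside a string literal or a field name is left untouched.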

Best regards,

Simon

- Category Preparation
- Enhancement

I would like a way to disable all containers within a workflow with a single click. It could simply be a disable/enable all option, or a series of checkboxes, one per container, where you can choose to disable/enable all of them or a chosen selection.

In large workflows with many containers, if you want to run a single container while testing, it can take a while to scroll up and down the workflow disabling each container in turn.
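Until something like this exists, one workaround is to edit the workflow XML directly. Below is a minimal Python sketch built on an assumption that is not confirmed here. It assumes each Tool Container in a .yxmd file is a `<Node>` whose `GuiSettings` has `Plugin="AlteryxGuiToolkit.ToolContainer.ToolContainer"` and whose `Properties/Configuration` holds a `Caption` and a `<Disabled value="True|False"/>` element. `set_containers_disabled` is hypothetical. Work on a copy, with the workflow closed in Designer.

```python
import xml.etree.ElementTree as ET

# Assumed plugin identifier of Tool Containers in the workflow XML.
CONTAINER_PLUGIN = "AlteryxGuiToolkit.ToolContainer.ToolContainer"


def set_containers_disabled(xml_text, disabled, captions=None):
    """Set <Disabled value=...> on every container (or only those whose caption is
    in `captions`). Returns (new_xml_text, number_of_containers_changed)."""
    root = ET.fromstring(xml_text)
    changed = 0
    for node in root.iter("Node"):  # iter() also reaches containers nested in ChildNodes
        gui = node.find("GuiSettings")
        if gui is None or gui.get("Plugin") != CONTAINER_PLUGIN:
            continue
        config = node.find("Properties/Configuration")
        if config is None:
            continue
        caption = config.findtext("Caption", default="")
        if captions is not None and caption not in captions:
            continue
        flag = config.find("Disabled")
        if flag is None:
            flag = ET.SubElement(config, "Disabled")
        flag.set("value", "True" if disabled else "False")
        changed += 1
    return ET.tostring(root, encoding="unicode"), changed
```

ElementTree does not keep the original XML declaration or formatting, so check that the rewritten file still opens in Designer before relying on it.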

- Enhancement
- New Request
- UX

Hello all,

As of today, you can only (officially) connect to PostgreSQL through ODBC with the Simba driver.

Help page:

https://help.alteryx.com/current/en/designer/data-sources/postgresql.html#postgresql

You have to download the driver from your license page.

However, there is a perfectly fine official driver for PostgreSQL here: https://www.postgresql.org/ftp/odbc/releases/

I would like Alteryx to support it for several obvious reasons:

1/ I don't want several drivers for the same database

2/ the Simba driver is not supported for the latest PostgreSQL releases

3/ the Simba driver is somewhat less robust than the official driver

4/ well... it's the official driver, and not supporting it leads to unnecessary friction between Alteryx admins/users and PG database admins.

Best regards,

Simon

- Category Input Output
- Data Connectors
- Enhancement

- Desktop Experience
- Enhancement

Hi all,

When I prepare formatted reports for my stakeholders, they want them sent straight to SharePoint, which can be achieved via OneDrive shortcuts on a laptop. However, when the workflow is moved to full automation, the server's C drive is not set up with the appropriate shortcuts, and our admin team does not allow it.

So my request is to upgrade the SharePoint Output tool so it can push formatted files to SharePoint.

Thank you!

- Category Input Output
- Data Connectors
- Enhancement

Hey all,

I don't know about you, but I have always had trouble hovering the mouse over the Results window pane trying to get the resize icon to appear. It seems like you need surgeon-level precision to find the icon! 😷

I love Designer and want to see it be the best it can possibly be. I feel like increasing the clickable/hovering area for this resize would be amazingly helpful!

Just wanted to see if we could get some community momentum going in order to get some developer eyes on this issue. 🙂

Please help by bumping/upvoting this thread!

-K

Migrated this from another thread. Some folks tagged from the original post :)

@cpatrickwk @caltang @afellows @MRod @alexnajm @ericsmalley @MilindG @Prometheus @innovate20

- Enhancement
- UX

Hello all,

It's really frustrating to see an "Alteryx field type" in the In-Database Select tool. It doesn't even make sense, since we're only manipulating data in a SQL database, where those types don't exist. What we should see is the SQL field type.

Best regards,

Simon

- Category In Database
- Enhancement

Hello,

Here is a proposal about an issue that I face frequently at work.

Problem Statement -

Workflows, whether scheduled or run manually on Server, frequently fail because the Excel input file is open by another user, someone forgot to close it before leaving the office, or some other reason.

Proposed Solution -

The Input/Dynamic Input tools should be able to read Excel files even when they are open, so that workflows do not fail. This would save a great deal of time and avoid regular monitoring of the scheduled workflows.
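A common workaround today is to read a snapshot instead of the original. You copy the workbook to a temporary folder, then point the Input tool (or any reader) at the copy. Below is a minimal Python sketch. It assumes the lock held by the other user's Excel session still allows the file to be copied, which is often but not always the case. `snapshot_for_reading` is hypothetical.

```python
import shutil
import tempfile
from pathlib import Path


def snapshot_for_reading(source):
    """Copy a (possibly open) workbook into a new temporary folder and return
    the path of the copy, so the reader never opens the original file."""
    source = Path(source)
    target_dir = Path(tempfile.mkdtemp(prefix="excel_snapshot_"))
    target = target_dir / source.name
    shutil.copy2(source, target)  # copies contents and file metadata
    return target
```

The caller is responsible for deleting the temporary folder once the copy has been read, and the copy reflects the last saved version of the workbook, not unsaved edits in the open session.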

- Category Input Output
- Data Connectors
- Enhancement

Sounds simple:

Best regards,

Simon

- Category Documentation
- Desktop Experience
- Enhancement
- UX
