Alteryx Designer Desktop Ideas


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by showing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (in order to have an equivalent number of images for each of the labels),

> by at least allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
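Until something like this exists in the tool, both points can be approximated before the images reach it. The following is only a minimal sketch in plain Python, not part of Alteryx: the function names, the in-memory pixel layout, and the file names are assumptions made for illustration.

```python
import random
from collections import defaultdict


def grayscale_to_rgb(pixels):
    """Turn rows of 0-255 gray values into rows of (r, g, b) tuples."""
    return [[(value, value, value) for value in row] for row in pixels]


def balanced_sample(labelled_paths, seed=0):
    """Keep the same number of images per label (the size of the rarest label)."""
    by_label = defaultdict(list)
    for path, label in labelled_paths:
        by_label[label].append(path)
    smallest = min(len(paths) for paths in by_label.values())
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    return {label: rng.sample(paths, smallest) for label, paths in by_label.items()}


# Hypothetical inputs: a tiny 1x2 "image" and three labelled files.
rgb_image = grayscale_to_rgb([[0, 255]])
balanced = balanced_sample([("a.png", "0"), ("b.png", "0"), ("c.png", "1")])
```

Repeating the gray value in all three channels keeps the picture visually identical while satisfying an RGB-only input.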

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help a greater number of people understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

I have recently started using blobs, and I've noticed that when I select 'Disable all tools that write output', the blob output is not disabled.

Could it be included in the tools that are disabled please?

@caltang thanks for supporting this.

Thanks

PuffinPanic

- Engine

- Enhancement

Hello everyone,

I have the following situation:

I have a workflow that is used in many different collections because of different users, regions, etc.

Depending on the name of the collection from which the workflow was started, I would like to have different start parameters, different defaults for interface settings, different formulas, filters, etc. Having a system constant like [Engine.WorkflowFileName], but as [Engine.WorkflowCollectionName], would be great!

At the moment, I need to save the workflow n times just because of different settings, instead of having a single one that works dynamically depending on the collection name. It would save a lot of admin time to change just one workflow instead of n.
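To make the request concrete, here is a minimal sketch of the lookup such a constant would enable. It is plain Python rather than Alteryx configuration; [Engine.WorkflowCollectionName] does not exist today, and the collection names and settings below are hypothetical.

```python
# Settings shared by every collection.
DEFAULTS = {"region": "ALL", "row_limit": 1000}

# Hypothetical collection names and overrides, for illustration only.
OVERRIDES = {
    "Sales EMEA": {"region": "EMEA"},
    "Sales APAC": {"region": "APAC", "row_limit": 5000},
}


def settings_for(collection_name: str) -> dict:
    """Merge the defaults with the overrides of the calling collection, if any."""
    return {**DEFAULTS, **OVERRIDES.get(collection_name, {})}


emea_settings = settings_for("Sales EMEA")
unknown_settings = settings_for("Some other collection")
```

With a single workflow reading the collection name, only the overrides table would need maintenance.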

If you have any questions, please get in touch with me!

- Engine

- New Request

We have discussed, on several occasions and in different forums, the importance of providing Alteryx with order-of-execution control, conditional execution, design patterns and even orchestration.

I presented this idea some time ago, but someone asked me if it was posted, and since it was not, I’m putting it here so you can give some feedback on it.

The basic concept behind this idea is to allow us (users) to have:

- Design Patterns

  - Repetitive patterns to be reusable.

    - Select after an Input tool

    - Drop Nulls

    - Get non-matching records from a Join

- Conditional execution

  - Tell Alteryx to execute some logic if something happens.

    - Record count

    - Errors

    - Any other condition

- Order of execution

  - Need to tell Alteryx what to run first, what to run next, and so on…

    - Run this first

    - Execute this portion after the previous one has finished

    - Wait until “X” finishes to execute “Y”

- Orchestration

  - Putting it all together

This approach involves some functionalities that are already within the product (like exploiting Filtering logic, loading & saving, caching, blocking among others), exposed within a Tool Container with enhanced attributes, like this example:

The approach is to extend the Tool Container’s attributes.

This proposal uses functionality we already have in Designer.

So, basically, the Tool Container gets ‘superpowers’ through the addition of capabilities such as accepting input data, saving the contents of the container (to create a design pattern, or a very commonly used sequence of tools chained together), outputting data, and running the tools included in the container, plus a configuration screen like:

- Refers to the actual interface of the Tool Container.

- Provides the ability to disable a Container (and all tools within) once it runs.

- Idea based on actual behavior: When we enable or disable a Tool Container from an interface Tool.

- Input and output data for the container’s logic: allows picking up and/or saving files from a particular container, to be used in later containers or to persist data as a partial result of the entire workflow’s logic (for example, updating a dimensions table).

- Based on actual behavior: Input & Output Data, Cache, Run Command Tools, and some macros like Prepare Attachment.

- Order of Execution: Can be Absolute or Relative. With an Absolute run, we take the containers in order and execute their contents. With Relative, we can configure which container should run before and after, block until the previous container finishes, or wait until this container finishes before executing the next container in the list.

- Based on actual behavior: Block until done, Cache, Find Replace, some interface Designer capabilities (for chained apps for example), macros’ basic behaviors.

- Conditional Execution: In order to be able to conditionally execute other containers, conditions must be evaluated. In this case, the idea is to evaluate conditions within the data, interface tools or Error/Warnings occurrence.

- Based on actual behavior: Filter tool, some Interface Tools, test Tool, Cache, Select.

- Notes: Documentation text that will appear automatically inside the container, with options to place it on top or below the tools, or hide it.
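The Relative ordering and Conditional Execution options described above can be sketched outside Designer as a small dependency-ordered runner. This is only an illustration under assumptions: the container names, the `after`/`when`/`run` keys, and the shared `context` dictionary are hypothetical and not an Alteryx API.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def run_containers(containers, context):
    """Run containers in dependency order, skipping those whose condition is false.

    containers maps a name to a dict with optional "after" (names that must
    finish first), optional "when" (condition on the context) and "run".
    """
    graph = {name: spec.get("after", []) for name, spec in containers.items()}
    executed = []
    for name in TopologicalSorter(graph).static_order():
        spec = containers[name]
        condition = spec.get("when", lambda ctx: True)
        if condition(context):
            spec["run"](context)
            executed.append(name)
    return executed


# Hypothetical containers: "report" and "alert" both wait for "load".
containers = {
    "load": {"run": lambda ctx: ctx.update(record_count=3)},
    "report": {
        "after": ["load"],
        "when": lambda ctx: ctx["record_count"] > 0,
        "run": lambda ctx: ctx.update(reported=True),
    },
    "alert": {
        "after": ["load"],
        "when": lambda ctx: ctx["record_count"] == 0,
        "run": lambda ctx: ctx.update(alerted=True),
    },
}
context = {}
executed = run_containers(containers, context)
```

Here "alert" is skipped because the record count produced by "load" is not zero, which is the kind of data-driven branching the idea asks for.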

This concludes a brief introduction to the idea. Taking it a little further, it would even allow something like an Orchestration layout, where users can drag and drop containers or patterns and orchestrate them into a solution, as we can do with the Visual Layout tool or the Interactive Chart tool:

I'm looking forward to hearing what you think.

Best

Hello all,

Like many software products on the market, Alteryx uses third-party components developed by other teams, providers or entities. This is a good thing, since it means standard features for a very low price. However, these components are upgraded very regularly (usually several times a year), while Alteryx doesn't upgrade them. This leads to missing features, performance issues, bugs left uncorrected or, worse, security flaws.

Among these third-party components:

- cURL (behind the Download tool for APIs): Alteryx ships 7.15 (2006), while the current release is 8.0 (2023)

- Active Query Builder (behind Visual Query Builder): several years behind

- R: Alteryx ships 4.1.3 (March 2022), while the next is 4.3 (April 2023)

- Python: Alteryx ships 3.8.5 (2020), while the current is 3.10 (April 2023)

- etc.

Of course, you can't upgrade every time, but once a year seems a minimum.

Best regards,

Simon

- AMP Engine

- Engine

Hello,

As of now, you can't choose the DCM connections to synchronize. It's either all or none.

However, I have one designer and two servers (Sandbox/Production). Most connections must be common, but not all.

Best regards,

Simon

- Data Connectors

- Engine

- Enhancement

Hello all,

As of today, we can easily copy or duplicate a table with the In-Database tools. This is really useful when you want data from the production environment in the development environment.

But can we really?

Short answer: no, we can't do it in these cases:

- partitions

- any constraints, such as primary or foreign keys

And even if those ideas were implemented, it would still mean setting these parameters manually.

So my proposal is simply a "clone table" tool that would clone the table from the SHOW CREATE TABLE statement and just let you specify the destination path (base.table).
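As a rough illustration of what such a tool might do internally, the sketch below takes the text returned by a SHOW CREATE TABLE statement and points it at a new destination. It is an assumption-laden example in Python, not an Alteryx feature: the function name and the sample DDL are hypothetical, and the pattern expects whitespace after the table name.

```python
import re


def retarget_ddl(show_create_output: str, destination: str) -> str:
    """Swap the table name in a CREATE TABLE statement for `destination`."""
    pattern = re.compile(r"(CREATE\s+(?:EXTERNAL\s+)?TABLE\s+)(\S+)", re.IGNORECASE)
    # A function replacement avoids any escaping issues in the destination name.
    retargeted, count = pattern.subn(
        lambda match: match.group(1) + destination, show_create_output, count=1
    )
    if count == 0:
        raise ValueError("no CREATE TABLE statement found")
    return retargeted


# Hypothetical DDL, as a database might return it for the source table.
source_ddl = "CREATE TABLE prod.sales (id INT) PARTITIONED BY (day)"
cloned_ddl = retarget_ddl(source_ddl, "dev.sales")
```

Because the rest of the statement is left untouched, partitions and constraints declared in the DDL carry over without being configured by hand.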

Best regards,

Simon

Hello all,

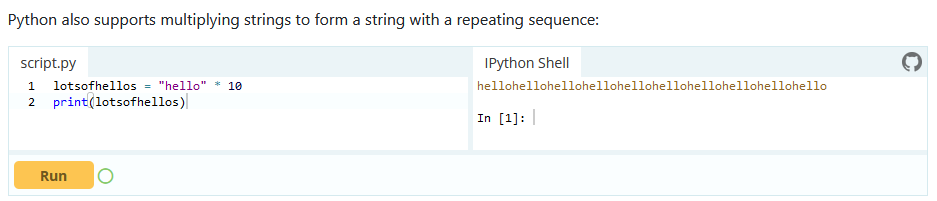

I'm currently learning the Python language and there is this cool feature: you can multiply a string.
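For readers who cannot see the screenshot, this is the standard Python behavior being referred to; the variable names are only illustrative.

```python
# Multiplying a string by an integer repeats it that many times.
separator = "-" * 10
repeated = "ab" * 3

print(separator)
print(repeated)
```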

Pretty cool, no? I would like the same syntax to work for Tableau.

Best regards,

Simon

I've seen this question before and have run into it myself. I'd like to see a new tool that would allow a workflow developer to choose a path of logic based on criteria known only during the execution of a module.

IF LEFT INPUT record count < 10,000 THEN Path 1 (e.g. use a Calgary join)

ELSE Path 2 (e.g. use a standard join)

ENDIF
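The same rule, written as a small Python function to show the run-time decision being requested. The function name and the path labels are hypothetical; only the 10,000-record threshold comes from the example above.

```python
def choose_join_path(left_record_count: int, threshold: int = 10_000) -> str:
    """Pick a path from a record count that is only known at run time."""
    if left_record_count < threshold:
        return "calgary_join"   # Path 1
    return "standard_join"      # Path 2


small_input_path = choose_join_path(2_500)
large_input_path = choose_join_path(250_000)
```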

Thanks,

Mark

- API SDK

- Category Developer

- Engine

- Runtime

Hello,

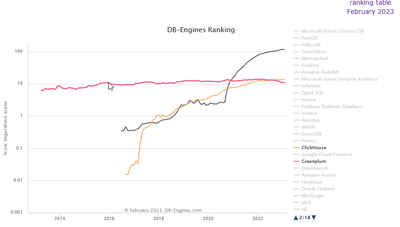

Just like MonetDB or Vertica, ClickHouse is a column-store database that claims to be the fastest in the world. It's available in the cloud (like Snowflake) and on Linux and macOS (for free there, since it's open source). It's also very well ranked among analytics databases (https://db-engines.com/en/system/ClickHouse), and supporting it would be a good differentiator from competitors.

https://clickhouse.com/

It has become more popular than Greenplum, which is supported (black: Snowflake, red: Greenplum, orange: ClickHouse):

Best regards,

Simon

Hello all,

So, right now, we have two very separate products: Alteryx Designer and Alteryx Designer Cloud. But what if you want to go from Alteryx Designer on your desktop to the cloud?

Well, you will have to rewrite every single workflow, because you can't publish or import your current workflows into Alteryx Designer Cloud. You cannot export a Designer Cloud workflow to Alteryx Designer on the desktop either.

This is a huge limitation for cloud implementation and sales, and it is the ONLY product I know of that isn't compatible between on-premises and cloud.

Please, Alteryx, this is a no-brainer if you want to convince your customers!

Best regards,

Simon

- Engine

- New Request

I can't even count how often I have looked at an Excel, CSV or even YXDB file that I KNEW was generated by Alteryx, without being able to remember the workflow. Currently, I simply have to go through all the workflows I have ever built and see if I can find it.

Theoretically, I could use a text search across all workflows and look for the output names. The problem here is that most of my output filenames are generated dynamically at run time.

It would be amazing if Alteryx could simply write the workflow name (maybe even the path) into the metadata of a file.

(Screenshot from Google, as my os is set to German)

How about writing 'This file was created by "Create Controlling Reports.yxmd" on 2023-02-06 with Alteryx Designer 2021.4.298434' in the 'Comments' field?
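A minimal sketch of how such a comment could be assembled, assuming the workflow name, run date and Designer version are available at run time. The function is hypothetical and does not write any file metadata itself.

```python
from datetime import date


def provenance_comment(workflow_name: str, designer_version: str, run_date: date) -> str:
    """Build the text that would go into the file's 'Comments' field."""
    return (
        f'This file was created by "{workflow_name}" on {run_date.isoformat()} '
        f"with Alteryx Designer {designer_version}"
    )


comment = provenance_comment(
    "Create Controlling Reports.yxmd", "2021.4.298434", date(2023, 2, 6)
)
```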

This would make it extremely easy to find which workflow generated the file. Writing the file path instead of the filename would also be an option to discuss, but the path could include the local machine name, which might be GDPR-relevant information.

@Community: Is there any additional information that you'd like to see in the metadata?

Best

Alex

- Engine

- Enhancement

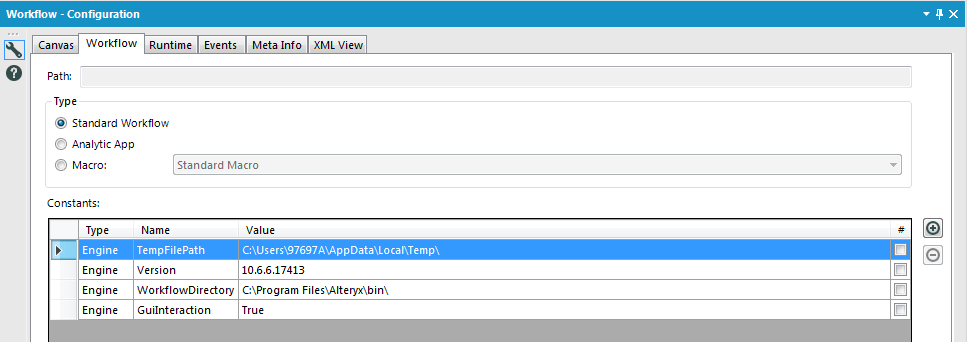

The constant [Engine.GuiInteraction] can be used to determine whether a workflow was run in Designer or in the Gallery. Currently, there is no way to also find out whether a workflow was initiated by a schedule or run manually in the Gallery. The information is available in the Gallery but is not passed into the workflow.

Please introduce a new variable [Engine.ScheduledRun] (or similar) which determines whether the workflow was initiated by a schedule (value "true" if boolean or "schedule" if string type) or manually (value "false" or "manual").

- Engine

- New Request

- Scheduler

Hello all,

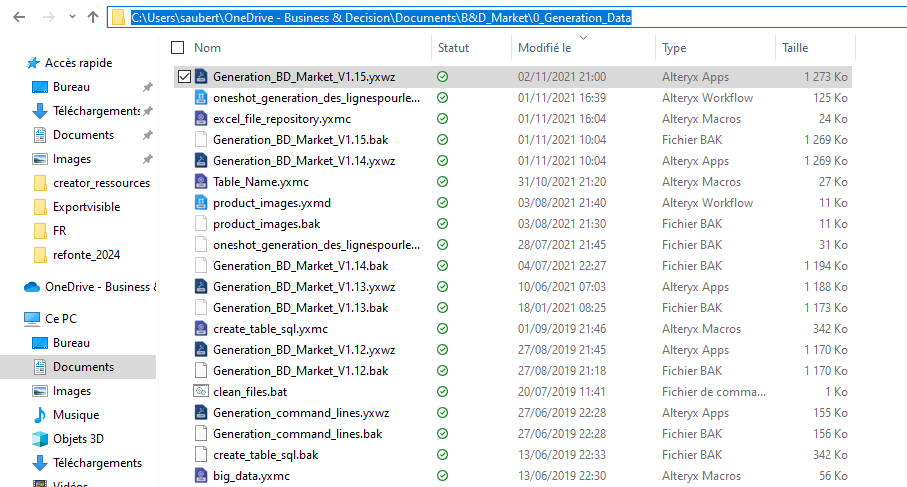

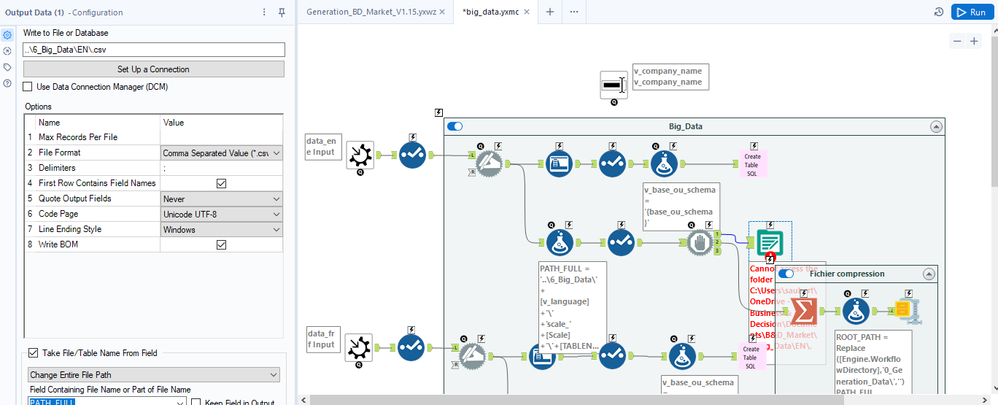

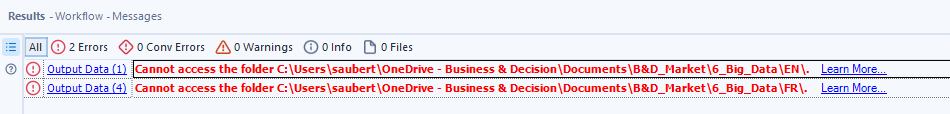

Here is the issue: I have a workflow in my OneDrive folder.

In that workflow, I use a macro that writes a file with a relative path (..\6_Big_Data\EN\.csv ):

Strangely, it doesn't work, and the error message seems to refer to a folder that doesn't exist (and that is also not the one I set):

ErrorLink: Output Data (1): https://community.alteryx.com/t5/*/*/ta-p/724327?utm_source=designer&utm_medium=resultsgrid|Cannot access the folder C:\Users\saubert\OneDrive - Business & Decision\Documents\B&D_Market\6_Big_Data\EN\.

I would really like that to work :)

Best regards,

Simon

- Category Input Output

- Engine

- Enhancement

Additional Dynamic Select Mode for All Native (Non-Macro) Tools with Select Functionality (with or without Data Type Selection)

This is the updated version of an idea I posted a while ago (which only included Multi-Field Formula), and after the release of Alteryx Designer 2025.1, which I found to be very successful from a new tool and functionality perspective, I decided to post about it.

My proposal is to add the Dynamic Select functionality* (at least the Select via a Formula mode) to all native (non-macro) tools, in all tool categories, that include a Select functionality. For tools such as Join and Append Fields, it would be offered as an alternative in which the user accepts not being able to change the field types of the selected fields. The opposite would apply to Multi-Field Formula, where the user could dynamically select which fields the formula is applied to, in addition to changing the data type. The list includes, but is not limited to, the following (to account for any new tool with a Select functionality that might be added in the future):

Preparation Category

- Auto Field

- Data Cleanse Pro (added in 2025.1)

- Multi-Field Formula

- Multi-Row Formula (for Group By option)

- Rank (for Group By option)

- Record ID (for Group By option)

- Sample (for Group By option)

- Tile (for Group By option)

- Unique

Join Category

- Append Fields

- Find Replace

- Join

- Join Multiple

Transform Category

- Arrange

- Cross Tab

- Make Columns (for Grouping Fields (Optional) option)

- Running Total (for both Group By (Optional) and Create Running Total options)

- Transpose (for both Key Columns and Data Columns options; the tool would generate an error if the Dynamic Select formulas written for the two options select the same field(s), as the Transpose tool is not supposed to allow it)

- Weighted Average (for Grouping Fields (Optional) option)

In-Database Category

- Select In-DB

Reporting Category

- Layout (for Group By and Per Column Configuration options)

- Table (for Group By and Per Column Configuration options)

Machine Learning Category

- Transformation (for Select Features mode only, as the other two modes with Select functionality (Clean Up Missing Values and One Hot Encoding) require Method and Missing Category Action specification)

Developer Category

- Download (for And values from these fields option present in Headers and Payload tabs)

- Dynamic Rename (for the Select functionality present in Formula mode)

Spatial Category

- Find Nearest

- Spatial Info

- Spatial Match

Data Investigation Category

- Pearson Correlation

I am skipping the Address and Demographic Analysis categories, as their tools seem to use a static input and therefore do not require a Dynamic Select functionality.

Laboratory Category

- JSON Build (for Grouping Fields (Optional) option)

- Transpose In-DB (with a similar logic to the regular Transpose tool found in Transform category)

*The Dynamic Select functionality added to tools that have more than one input anchor (such as Join and Join Multiple) could expose additional fields for users to reference, such as:

- [Origin] (can have the values "L" or "R" for Join and Append tools)

- [Connection_ID] (can have the values 1, 2, 3 etc. for Join Multiple tool)

- [Unknown] (can have the values "True" or "False" for the Data Columns option of the Transpose tool, or any other tools such as Join that would have the Dynamic or Unknown Columns option as a part of their Select functionality)
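To illustrate how a formula-based selection could use these extra fields, here is a small Python sketch. It models field metadata only and is not Alteryx formula syntax: the `Field` class, the sample field names, and the predicate are assumptions for illustration, while `origin` mirrors the proposed [Origin] field.

```python
from dataclasses import dataclass


@dataclass
class Field:
    name: str
    type: str
    origin: str  # "L" or "R", mirroring the proposed [Origin] field


def dynamic_select(fields, predicate):
    """Return the names of the fields for which the selection formula is true."""
    return [field.name for field in fields if predicate(field)]


# Hypothetical metadata of the two inputs of a Join.
join_fields = [
    Field("CustomerID", "Int64", "L"),
    Field("Name", "V_WString", "L"),
    Field("CustomerID", "Int64", "R"),
    Field("OrderTotal", "Double", "R"),
]

# Keep every left field, plus the numeric fields coming from the right input.
selected = dynamic_select(
    join_fields,
    lambda f: f.origin == "L" or (f.origin == "R" and f.type == "Double"),
)
```

Because the predicate is evaluated against whatever fields arrive, new columns in either input would be picked up without reconfiguring the tool.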

- Desktop Experience

- Engine

- Enhancement

- UX

Hello,

SQLite is:

- free

- open source

- easy to use

- widely used

https://en.wikipedia.org/wiki/SQLite

It also works well with the Alteryx Input and Output tools. 🙂

However, I think In-DB support for SQLite would be great, especially for learning purposes: you don't have to install anything, so it's really easy to set up.
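As an illustration of the "nothing to install" point, the sketch below runs an aggregation inside an in-memory SQLite database using Python's built-in `sqlite3` module. It is independent of Alteryx, and the table and values are made up for the example.

```python
import sqlite3

# An in-memory database: no server, no file, nothing to install.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("EU", 10.0), ("EU", 5.0), ("US", 7.5)],
)

# The aggregation runs inside the database, as an In-DB workflow would.
totals = conn.execute(
    "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
).fetchall()
conn.close()
```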

Best regards,

Simon

Hello all,

MonetDB is a very light, fast, open-source database available here:

https://www.monetdb.org/

I really enjoy it. It works pretty well with Tableau, and it's a good introduction to column-store concepts and analytics with SQL.

It has also gained a lot of popularity in recent years:

https://db-engines.com/en/ranking_trend/system/MonetDB

Sadly, Alteryx does not support it yet.

Best regards

Hello all,

Change Data Capture ( https://en.wikipedia.org/wiki/Change_data_capture ) is an effective way to deal with changes in a database, enabling streaming or delta processing. Several techniques, more or less intrusive, can be applied (and combined), for example log reading.

Qlik : https://www.qlik.com/us/streaming-data/data-streaming-cdc

Talend : https://www.talend.com/resources/change-data-capture/
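To show the delta idea in its simplest form, here is a sketch that compares two keyed snapshots of a table and emits change events. Note that this is snapshot comparison, not the log-reading technique mentioned above, and the function name and sample data are assumptions for illustration.

```python
def capture_changes(previous: dict, current: dict) -> list:
    """Compare two keyed snapshots and list inserts, updates and deletes."""
    changes = []
    for key, row in current.items():
        if key not in previous:
            changes.append(("insert", key, row))
        elif previous[key] != row:
            changes.append(("update", key, row))
    for key, row in previous.items():
        if key not in current:
            changes.append(("delete", key, row))
    return changes


# Hypothetical snapshots keyed by primary key.
yesterday = {1: "a", 2: "b", 3: "c"}
today = {1: "a", 2: "B", 4: "d"}
delta = capture_changes(yesterday, today)
```

Only the three changed rows would need to flow downstream, instead of the full table.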

Best regards,

Simon

- AMP Engine

- Engine

Please add official support for newer versions of Microsoft SQL Server and the related drivers.

According to the data sources article for Microsoft SQL Server (https://help.alteryx.com/current/DataSources/SQLServer.htm), and validation via a support ticket, only the following products have been tested and validated with Alteryx Designer/Server:

Microsoft SQL Server

Validated On: 2008, 2012, 2014, and 2016.

- No R2 releases are mentioned (2008 R2, for instance)

- SQL Server 2017, which was released in October of 2017, is notably missing from the list.

- SQL Server 2019, while fairly new (~6 months old), is also missing

This is one of the most popular data sources, and the lack of support for newer versions (especially a 2+ year old product like SQL Server 2017) is hard to fathom.

ODBC Driver for SQL Server/SQL Server Native Client

Validated on ODBC Driver: 11, 13, 13.1

Validated on SQL Server Native Client: 10,11

- ODBC Driver 17+ is not mentioned, even though it was released in February of 2018. https://docs.microsoft.com/en-us/sql/connect/odbc/windows/release-notes-odbc-sql-server-windows?view...

- SQL Server Native Client is deprecated. It is being replaced by Microsoft OLE DB Driver for SQL Server. However, there is not a mention of Microsoft OLE DB Driver for SQL Server. The latest version of this is 18.3.0. https://docs.microsoft.com/en-us/sql/connect/oledb/release-notes-for-oledb-driver-for-sql-server?vie...

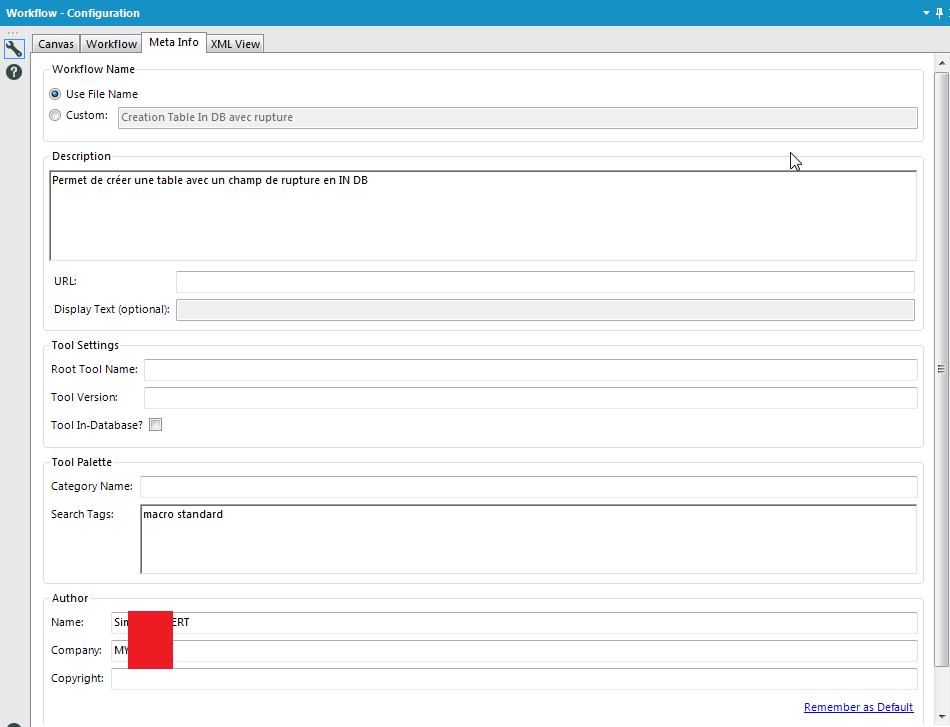

I love the Workflow Meta Info, especially the ability to enter the author, the search tags, the version, the description, etc.

But why can't we use it as Engine constants? It doesn't seem very hard to implement, and it would make development much easier.

- Engine

- Feature Request

When using certain tools, particularly Marketplace tools like the SharePoint Input/Output tools, it would be helpful to have a quick way to find out which version is being used in a workflow. Something along the lines of a right-click option on the tool that displays the current version would be ideal.

This would be helpful in several cases, but primarily when handing over workflows. There are cases where I have multiple versions of the same tool installed so that I don't have any issues inheriting workflows. This does, however, make things confusing when handing workflows back. Tool version labelling would solve this problem.

Regards - Pilsner

- Engine

- Enhancement
