Alter.Nation

Community news, customer stories, and more!- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Alter.Nation

- :

- #SANTALYTICS Part 2: Solution and Behind the Data

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

That’s right, we are officially one week closer to Santa’s arrival.

Solution

If you’re reading this blog post, then you have participated in the second of four exercises that will constitute the Santalytics challenge - an inclusive, non-denominational, festive excuse for some friendly competition using Alteryx. You’ve also solidified your claim as Alteryx pro in your quest to help Santa and acquire timeless Alteryx swag by knocking the dust off of your Spatial Toolset to solve one of the more difficult optimization problems of the year. This question was by no means an easy one and, since Santa will be getting all the credit this year, you deserve some serious congratulations.

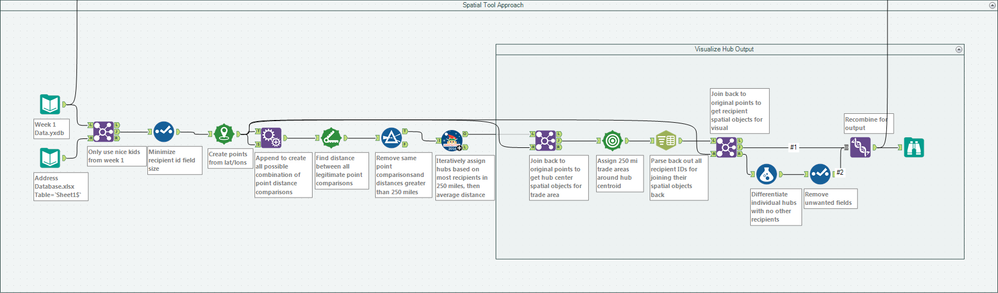

The approach we took to assign hubs to each grouping of nice kids and determine the minimum trip weight is attached (Santalytics Part 2 Solution.yxzp, v10.6), and the details explored below:

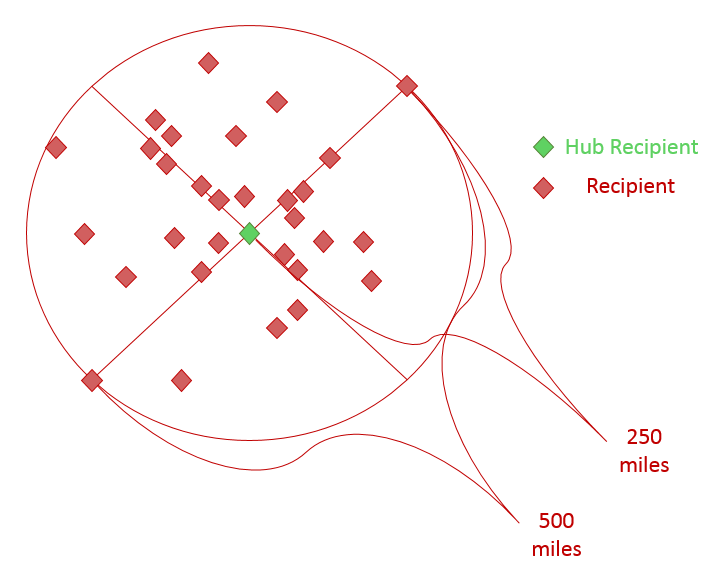

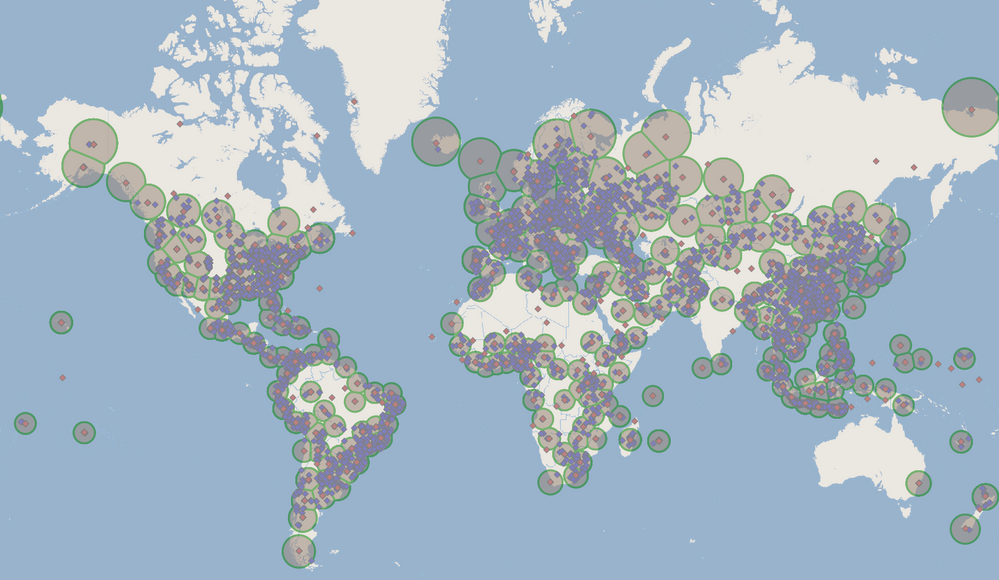

To determine the least number of 500 mile trade area hubs (250 mile radii) that could expedite Santa’s deliveries we measured the distance between every combination of points in our dataset and excluded every distance measurement over 250 miles (below). This was accomplished by appending the dataset to itself and subsequently removing any pair-comparison redundancies. This also excluded 73 points outside trade area distance from any other points and identified outliers that Santa would have to deliver to personally this year. These points are isolated mostly to the remote area of the globe (areas of Newfoundland, Siberia, and scattered islands across the world) - and they had better be leaving cookies out for the trouble.

We then iteratively ranked each point by how many other points lay within a 250 mile radius (visualized below), taking the most inclusive coordinate as our hub centroid and removing its hub constituents from the dataset before repeating the process.

This process is attached as the v10.6 macro Santalytics Part 2 Group Hubs, which grouped the remaining 11,927 recipients to 267 hubs (totaling 12,000 nice kids and 340 stops), and can be seen below.

At this point in our method, all that remained were joins to match each present score and the lightest respective present to the recipients (and centroid recipient) of each hub.

Once each kid was matched with the lightest present of their respective present class, we summarized each hub to sum the weights of every present that needed delivery to a given hub and identified ~422 (421.95) pounds as the minimum towage that Santa would need to distribute reindeer for this year.

While relatively accurate, the approach above is in no way the only right answer; clocking in at just around three minutes in run time, there are alternatives that don’t require as much sheer computation from appending every point to another for pairwise distance calculations. That said, Santa will definitely be getting his computation time back from any attempt to optimize the distribution of presents!

We knew the spatial hub assignment of Part 2 would be challenging and we even asked our CTO, Ned, to take a stab. His response: ‘This is one of those “impossible” type problems. There is no way to directly solve it. So the short answer is a location optimizer or iterative macro is the way to go. Guess and refine.’

We showed him one of our approaches, basically an over complicated Multi-Row Formula Tool that didn’t quite get us there, to which he said, “well, you could just Grid it up, and do a Spatial Match…” and in less than 5 minutes and that many tools, the impossible task with 457 Hubs was done.

It looks like @SophiaF, @MichelKars, @Cooperchu, and @Philip were all thinking like Ned on this one. Very impressive.

@cor used R to establish the hub locations and we won’t even pretend to understand the R script, but they sure do look like convex hull polygons. @JohnJPS used cor's solution to improve upon – improvement that comes at a price of precious runtime. We love this spirit of collaboration!

And then there are the iterative approaches - like @ggruccio’s, @JoeM’s, and the one we highlighted here.

Behind the Data

We mentioned in last week’s post that the Mockaroo realistic data generator supplied us all of our randomly generated kid names and coordinates to use in the exercise, accompanied by the BestBuy Products API’s bulk download list of physical store products for presents. They were chosen primarily for their ease of access, but each had minor shortfalls that we had to work around to fit the exercises – hardly a task that would keep us from staging the greatest holiday themed analytics challenge to date.

Not every latitude and longitude from Mockaroo geocoded so we simply generated an extra dataset of 1,000 records to compensate for the filtered records that didn’t translate to true geocoded locations. Getting the API product data ready was a little trickier, requiring the usual parsing and reshaping of an XML response and some extensive filtering (we actually built a workflow to generate the filter expression from a text file) to slim down their physical products to items that were appropriate gifts. We didn’t want to put Santa in an awkward position by matching a beer fridge to a child - regardless of how good they were this year. Sure, we included batteries for the kids that were barely good this year, but we had to draw the line somewhere.

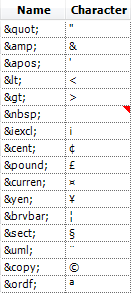

After parsing and filtering, the API data only needed some HTML character substitution to make the product/manufacturer names easier to read. This was easily accomplished using a Text Input Tool as a lookup table (below) for a Find Replace Tool to patch up the strings real pretty like for added readability.

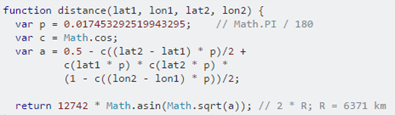

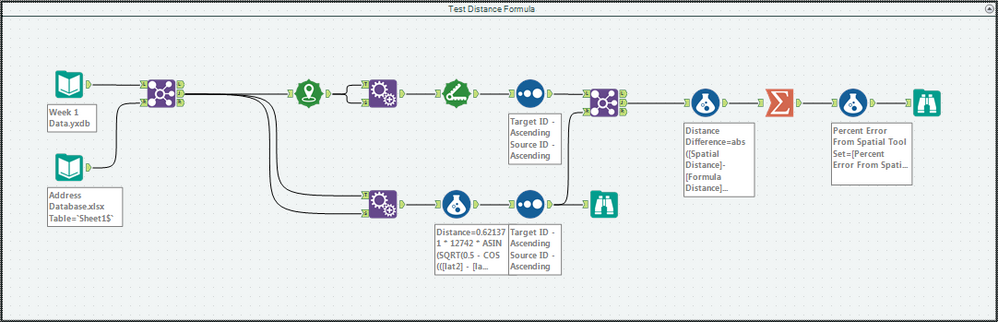

On the topic of data, we also wanted to see if there was another way to handle our data that could reduce run time. Handling millions of computationally expensive spatial objects in our approach, we had our fingers crossed that calculating the distance between lat/lon points, rather than creating spatial points and using the Distance Tool, could be more efficient in a Formula Tool. To do this, we built an alternative workflow arm (Distance Formula Approach) that borrowed a distance calculating function from Stack Overflow:

This translated into Alteryx as follows (note the use of the workflow constant, p):

Although it didn’t save us any processing time, it was a fun way to test just how good the Spatial Tools really are. In addition to proving the tools’ efficiency, the calculation above yielded an average of only 0.05 percent error across the millions of pairwise comparisons – showcasing their accuracy for us as well.

We’re only halfway through the Santalytics gauntlet of challenges and Santa is going to need just a little more help before we can tally this holiday season as a resounding success! Help Santa get around the globe with your Alteryx might and lend some Part 3 insight!

Data Engineer

Former Alteryx, Inc. Support Engineer, Community Data Architect, Data Scientist then Data Engineer. Starting from the old data warehousing paradigm in big pharma, Matt found Alteryx on the first day of the rest of his life. Matt has a MS in Biomedical Engineering & Bioinformatics and is passionate about all things integrated data management and analytics. The Alteryx analytics badassery journey continues here! https://community.alteryx.com/t5/user/viewprofilepage/user-id/375130

Former Alteryx, Inc. Support Engineer, Community Data Architect, Data Scientist then Data Engineer. Starting from the old data warehousing paradigm in big pharma, Matt found Alteryx on the first day of the rest of his life. Matt has a MS in Biomedical Engineering & Bioinformatics and is passionate about all things integrated data management and analytics. The Alteryx analytics badassery journey continues here! https://community.alteryx.com/t5/user/viewprofilepage/user-id/375130

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.