Weekly Challenges

Solve the challenge, share your solution, and summit the ranks of our Community!

Challenge #2: Preparing Delimited Data

done

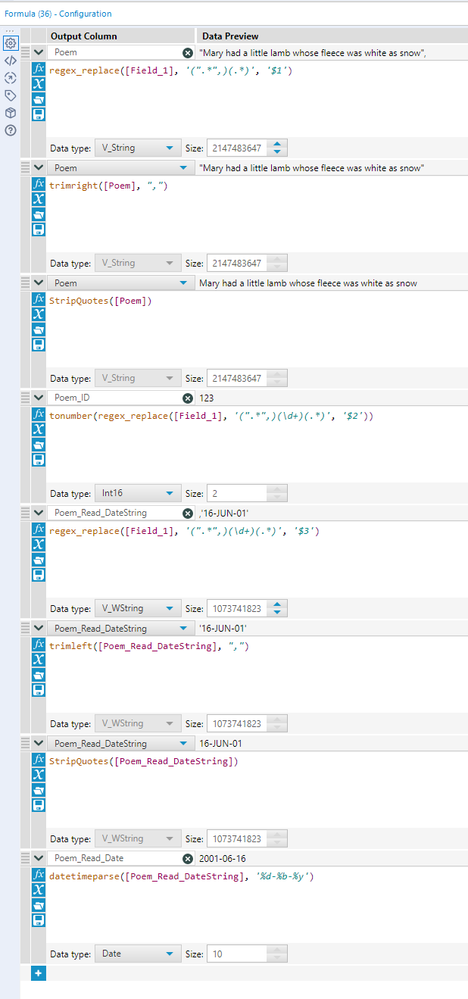

Here is my solution - I just ended up using some back-to-back formulas to get the job done.

A minor clarifying question on the published solution: shouldn't 'Ignore delimiters in quotes' be checked in the Text to Columns tool? The background information assumes that strings such as the Poem may contain delimiters. Neither of the two example records has a comma in the Poem, but if another record in the same theoretical dataset did, wouldn't the current tool configuration split the string at that comma instead of ignoring it as part of a quoted string? Let me know if I am thinking about that incorrectly.
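To make the concern concrete outside of Designer, here is a minimal Python sketch. The record below is hypothetical (it is not from the challenge data), and Python's `csv` module only stands in for the quote-aware behavior being asked about; it is not how the Text to Columns tool is implemented.

```python
import csv
import io

# Hypothetical record: the quoted poem field contains a comma of its own.
record = '"Roses are red, violets are blue",101,"14-Feb-15"'

# A naive split treats every comma as a delimiter, so the poem is cut in two.
naive_fields = record.split(",")

# csv.reader respects the double quotes and keeps the embedded comma.
quoted_fields = next(csv.reader(io.StringIO(record)))

print(len(naive_fields))  # 4 pieces: the poem was split at its own comma
print(quoted_fields)      # 3 fields: poem, number, date
```

The naive split yields four pieces while the quote-aware parse yields the expected three fields, which is the difference the question is pointing at.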

Hello!

Here is my solution -

1) Text to Columns - split the data into columns on the comma delimiter

2) Data Cleansing - removed punctuation from Column1 (Poem) to get rid of the double quotes

3) Text to Columns - split to rows, set the delimiter to a single quote, and checked the 'Ignore delimiters in single quotes' option

4) Select Records - entered row numbers 2 and 4 to display only the required rows

5) DateTime - custom format dd-Mon-yy

6) Select - renamed the columns and checked only the required ones

7) Browse
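For anyone checking the date step outside of Designer, a custom format like dd-Mon-yy corresponds roughly to `%d-%b-%y` in Python. This is only a sketch: the helper name `parse_read_date` and the sample value are hypothetical, and `%b` assumes English month abbreviations.

```python
from datetime import datetime


def parse_read_date(text: str) -> str:
    """Parse a dd-Mon-yy string and return it as an ISO yyyy-mm-dd string."""
    # %d = two-digit day, %b = abbreviated month name, %y = two-digit year
    return datetime.strptime(text, "%d-%b-%y").date().isoformat()


print(parse_read_date("14-Feb-15"))  # 2015-02-14
```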

For this challenge I traded the Text to Columns tool for RegEx and was able to accomplish the parsing in one tool.

Regex Used: ["](.*)["][,](\d{3})[,].(\d{2}.\w{3}.\d{2}).

The square brackets define a set of characters to match literally, in this case the double quote and the comma. The second way I removed quotes is with the period, which matches any single character at that position; because it sits outside the capture groups, the quotes around Poem_Read_Date are dropped from the output. Another good component of this RegEx is the {}, which specifies the exact number of times the preceding token must repeat, in this case digits (\d) and word characters (\w).
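The same pattern can be tried in Python's `re` module as a quick check. This is a sketch under assumptions: the sample record is hypothetical and only mimics the layout the pattern implies (quoted poem, three-digit number, quoted dd-Mon-yy date), and the output names other than Poem_Read_Date are made up for illustration.

```python
import re

# The pattern from the post, unchanged.
PATTERN = r'["](.*)["][,](\d{3})[,].(\d{2}.\w{3}.\d{2}).'

# Hypothetical record; the poem deliberately contains a comma.
record = '"Roses are red, violets are blue",101,"14-Feb-15"'

match = re.match(PATTERN, record)
# Each pair of parentheses becomes one output field.
poem, number, poem_read_date = match.groups()

print(poem)            # Roses are red, violets are blue
print(number)          # 101
print(poem_read_date)  # 14-Feb-15
```

Because `(.*)` is greedy and must be followed by a quote, a comma, and three digits, a comma inside the poem stays in the first group in this sample.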
