Weekly Challenges

Solve the challenge, share your solution and summit the ranks of our Community!

Challenge #116: A Symphony of Parsing Tools!

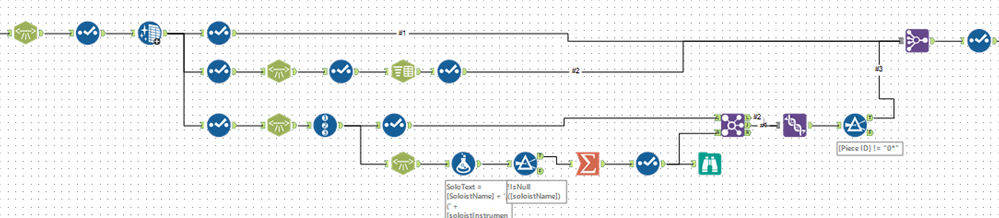

That was a challenge! There are probably ways to do it with fewer tools than I used... I'll have to examine some of the other submissions!

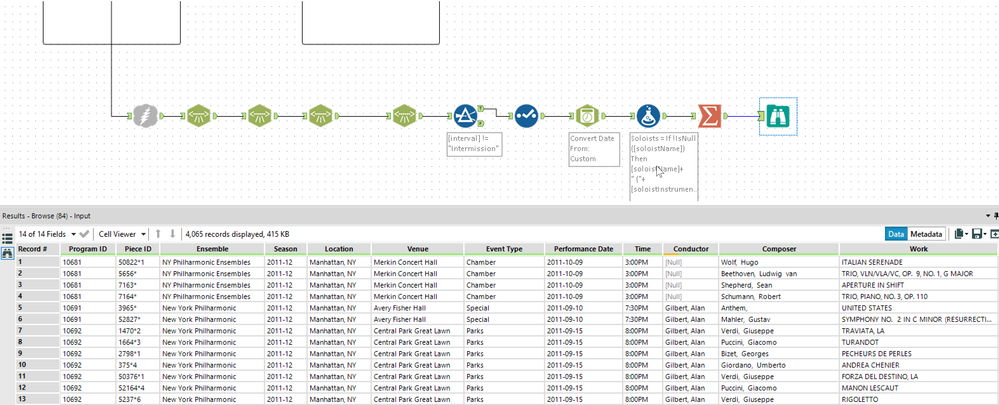

This was more fiddly than difficult: I spent a lot of time aligning fields, changing types, etc.

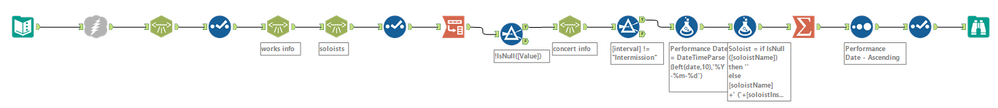

Great practice with the XML parsing tool!

My original workflow treated each instance of a program (e.g., the same program on different days) as a separate record. A simple Unique tool at the end of the workflow took care of that.
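For anyone curious what that Unique step is doing, here's a rough Python analogue (not the poster's actual workflow). It assumes hypothetical rows where each performance of a program is its own record, and keeps the first record for each combination of key fields. `unique_by` and the field names are made up for illustration.

```python
# Hypothetical parsed rows: one row per performance, so a program that ran on
# several days appears several times. Values are made up for illustration.
rows = [
    {"program_id": "P1", "date": "day 1", "work": "Work A"},
    {"program_id": "P1", "date": "day 2", "work": "Work A"},
    {"program_id": "P2", "date": "day 1", "work": "Work B"},
]

def unique_by(records, key_fields):
    """Keep the first record for each distinct combination of key_fields."""
    seen = set()
    kept = []
    for rec in records:
        key = tuple(rec[f] for f in key_fields)
        if key not in seen:
            seen.add(key)
            kept.append(rec)
    return kept

# Deduplicate on the fields that identify a program/work, ignoring the date.
deduped = unique_by(rows, ["program_id", "work"])
for rec in deduped:
    print(rec["program_id"], rec["work"])
```

The choice of key fields is the whole trick: leave the date out of the key and repeat performances collapse into one row.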

Fun fact: I could verify this list with a dear friend's father; he has been the librarian of the NY Phil for many, many years!

When this challenge first came out I had no idea how to solve it, but now I'm slowly starting to feel comfortable with XML Parse :) I could probably do with a few fewer tools!

I took all concerts into account, so I have a few more rows (at least, that's what I think the reason is!)

Great challenge!

I also initially got one fewer record than the published results, relating to Program 12104 Piece 7955*, which is a second intermission.

My filter condition IsNull([Interval]) excluded this, but when I changed the filter condition to [Interval] != "Intermission", my output matched the official results.
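A quick way to see why those two filters can disagree: the first drops every row with *any* interval value, while the second drops only rows whose value is exactly "Intermission". Below is a small Python sketch on hypothetical rows; the value "Pause" is an invented stand-in for "non-null but not exactly Intermission", not what piece 7955 actually contains. It also relies on Python's semantics (`None != "Intermission"` is `True`); how the Alteryx Filter tool treats a Null in `!=` isn't shown here.

```python
# Hypothetical parsed rows; "interval" is None when no interval value was parsed.
rows = [
    {"piece": "A", "interval": None},            # a regular work: no interval value
    {"piece": "B", "interval": "Intermission"},  # a standard intermission
    {"piece": "C", "interval": "Pause"},         # hypothetical non-null value != "Intermission"
]

# Analogue of IsNull([Interval]): keep only rows with no interval value at all.
keep_if_null = [r["piece"] for r in rows if r["interval"] is None]

# Analogue of [Interval] != "Intermission": drop only exact "Intermission" rows.
# In Python, None != "Intermission" is True, so rows without a value are kept too.
keep_if_not_intermission = [r["piece"] for r in rows if r["interval"] != "Intermission"]

print(keep_if_null)              # ['A']
print(keep_if_not_intermission)  # ['A', 'C']
```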

It really helps to open the XML file in a browser before you start, just to see which headings contain the data you want and how many levels you have to parse to get to them.
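If you'd rather script that first look, here's a minimal sketch using Python's standard `xml.etree.ElementTree` that lists each distinct element path once along with its depth. The sample XML is a small hypothetical snippet loosely modeled on a program > work > soloist hierarchy; the element names are assumptions, not the challenge file's exact schema, and `tag_paths` is a made-up helper.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample; element names are assumptions for illustration only.
SAMPLE = """
<programs>
  <program>
    <programID>1</programID>
    <worksInfo>
      <work ID="w1">
        <composerName>Composer A</composerName>
        <soloists>
          <soloist><soloistName>Soloist X</soloistName></soloist>
        </soloists>
      </work>
      <work ID="w2"><interval>Intermission</interval></work>
    </worksInfo>
  </program>
</programs>
"""

def tag_paths(elem, prefix="", paths=None):
    """Collect each distinct element path once, mapped to its depth (number of levels)."""
    if paths is None:
        paths = {}
    path = f"{prefix}/{elem.tag}" if prefix else elem.tag
    paths.setdefault(path, path.count("/") + 1)
    for child in elem:
        tag_paths(child, path, paths)
    return paths

root = ET.fromstring(SAMPLE.strip())
for path, depth in tag_paths(root).items():
    print(depth, path)
```

The deepest paths tell you roughly how many parse steps you'll need to reach a given field.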

Ah! The joys of running on an underpowered (8 GB) machine. I had originally built my workflow linearly, parsing each layer in line with the previous one. By the time I was parsing the work info, it was taking upwards of 3 minutes and generating 10 GB data sets, and this was before I had even started on the soloists. I changed tactics and parsed the major node types in parallel. Now the complete run time is under 10 seconds, including the download, and the largest data set is only 30 MB.
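The core of that divide-and-conquer idea (keep each node type in its own narrow table, carry only the parent keys, and join at the end) can be sketched in plain Python. This is a rough analogue, not Dan's workflow: it uses `xml.etree.ElementTree` on a hypothetical snippet whose element names are assumptions, and it doesn't reproduce Alteryx's parallel branches, only the "narrow tables plus keys" shape.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample; element names are assumptions for illustration only.
SAMPLE = """<programs>
  <program>
    <programID>P1</programID>
    <worksInfo>
      <work ID="w1">
        <composerName>Composer A</composerName>
        <soloists>
          <soloist><soloistName>Soloist X</soloistName></soloist>
          <soloist><soloistName>Soloist Y</soloistName></soloist>
        </soloists>
      </work>
      <work ID="w2"><composerName>Composer B</composerName></work>
    </worksInfo>
  </program>
</programs>"""

root = ET.fromstring(SAMPLE)

# One narrow table per node type; each row carries only its parent keys.
programs, works, soloists = [], [], []
for prog in root.iter("program"):
    pid = prog.findtext("programID")
    programs.append({"program_id": pid})
    for work in prog.iter("work"):
        wid = work.get("ID")
        works.append({"program_id": pid, "work_id": wid,
                      "composer": work.findtext("composerName")})
        for solo in work.iter("soloist"):
            soloists.append({"program_id": pid, "work_id": wid,
                             "soloist": solo.findtext("soloistName")})

# Join only at the end, on the keys, instead of widening every row layer by layer.
composer_by_work = {(w["program_id"], w["work_id"]): w["composer"] for w in works}
joined = [{**s, "composer": composer_by_work[(s["program_id"], s["work_id"])]}
          for s in soloists]

print(len(programs), len(works), len(soloists))  # 1 2 2
print(joined[0])
```

Keeping the tables narrow means no intermediate result carries every program, work, and soloist column at once, which is one plausible reason the linear version ballooned.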

Divide and conquer saves the day

Dan
