Engine Works

Under the hood of Alteryx: tips, tricks and how-tos.- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Engine Works

- :

- Migrating and Scaling MongoDB on Alteryx Server

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

Introduction and Motivation

The Alteryx Server relies on MongoDB as its back-end data persistence layer, storing Server-related data such as Gallery users and sessions, Schedules, Modules and execution results. By default, the Alteryx Server ships with its own "Embedded" MongoDB, a standalone MongoDB instance bootstrapped silently on first usage. The self-contained instance facilitates near-zero configuration of the Alteryx Server, enabling users to stand up a Server quickly and efficiently without concern about the back-end storage details. Many clients continue to use the single-node setup beyond the initial Server deployment, adding a proper backup strategy but otherwise leaving the instance as-is.

With a backup in place, the single-node MongoDB setup is indeed suitable for smaller Server deployments. As an organization's Server usage grows, however, scaling MongoDB, first by moving the database to a dedicated host and then by adding redundancy, are natural points of expansion. In fact, a number of clients have reached out to us recently (both directly and via their Alteryx support contacts) inquiring about guidelines for migrating their standalone, "Embedded" MongoDB instance to a more robust, "User-managed" MongoDB instance, improving the resilience and redundancy of their Alteryx Server installation.

This post is intended to address these recent user requests directly, providing general recipes for scaling MongoDB, first by migrating the standalone database to a dedicated host, and then adding redundancy via a MongoDB Replica Set. This process relies on some basic Server administration concepts, particularly using the Server command line as discussed in a previous post.

References

In the spirit of up-front documentation and addressing the possibility of TL;DR, here are some key references for concepts in this post:

- MongoDB backup and restore: https://docs.mongodb.com/manual/tutorial/backup-and-restore-tools/

- Mongorestore: https://docs.mongodb.com/manual/reference/program/mongorestore/

- Introduction to Replica Sets: https://docs.mongodb.com/manual/replication/

- Replica Sets with Authentication: https://docs.mongodb.org/manual/tutorial/deploy-replica-set-with-auth/

- Granting roles to users: https://docs.mongodb.com/manual/reference/method/db.grantRolesToUser/

- Keyfiles: https://docs.mongodb.com/manual/core/security-internal-authentication/

- Keyfiles and Replica Sets: https://docs.mongodb.com/manual/tutorial/enforce-keyfile-access-control-in-existing-replica-set/

Preparing for a Migration

Before making any modifications to the Alteryx Server it's important to schedule Server downtime, take the Server offline completely, and perform a backup of the existing MongoDB database. Backing up the MongoDB database is straightforward and we provide a command-line option to dump the existing database via the AlteryxService emongodump command. For reference, complete instructions are available in an older post under the section "Backing up and restoring Embedded Mongo."

You will also need the current MongoDB credentials. To collect the credentials, open an Administrator command shell, navigate to the Alteryx installation directory, and use the AlteryxService getemongopassword command-line option. This retrieves both the administrative and non-administrative credentials for the database:

In an Embedded MongoDB instance, both the administrative and non-administrative user have the username "user".

Migrating to a new MongoDB host

There are several ways of migrating the Alteryx Server database to a new MongoDB host and we'll examine a few of the techniques. The first is copying the database directly to the target server, and is applicable to a MongoDB instance hosted in a Windows environment. The second is creating a database backup and restoring on the target server, applicable to a MongoDB instance hosted in either a Linux or Windows environment.

After migrating the database to a new MongoDB host, updating the MongoDB instance to become a full-fledged Replica Set is suprisingly straightforward and follows the same general process regardless of the host operating system. Similarly, configuring the Alteryx Server to consume the new standalone MongoDB instance, be it a single node or Replica Set, is identical irrespective of the MongoDB hosting environment.

Copying to a new Server (Windows)

The first technique for migrating a standalone instance to a new Windows-based server is by copying the source Mongo folder itself to the new target system. This is arguably the easiest method, requiring just a few short steps.

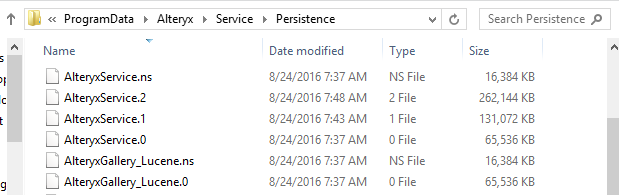

First, locate the MongoDB folder on the source system. By default, the folder is located in C:\ProgramData\Alteryx\Service\Persistence.

Note that the default persistence folder location may be overridden in the System Settings dialog on the Controller | Persistence page.

Copy that folder in its entirety from the source system to the target system. In this example, we're using xcopy on the command line to move the database contents to a shared folder on the new host:

Depending on how large the folder is you may want to compress its contents prior to copying the folder to the new host.

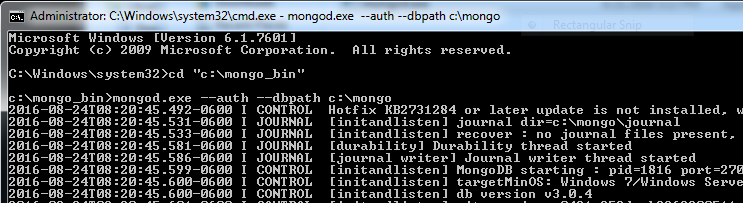

Now, on the new host, you should be able to start MongoDB using that new folder as the database source folder. On the command-line, start mongod.exe using the --auth and --dbpath command-line options, specifying the target folder containing the copied DB for the latter:

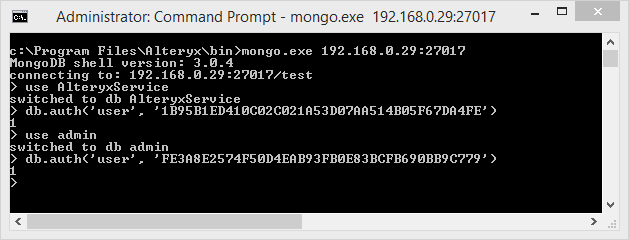

After the new MongoDB server starts, connect to the instance using mongo.exe and authenticate using the credentials collected from the host system. Remember, the username for both the administrative and non-administrative users is "user":

Backing up and restoring to a new server (Windows and Linux)

Another technique for migrating to a new MongoDB server is creating a backup on the source system and then restoring from that backup on the target system. If you haven't already created a backup of the source system, do so now using the AlteryxService emongodump command as described in this post under the section "Backing up and restoring Embedded Mongo":

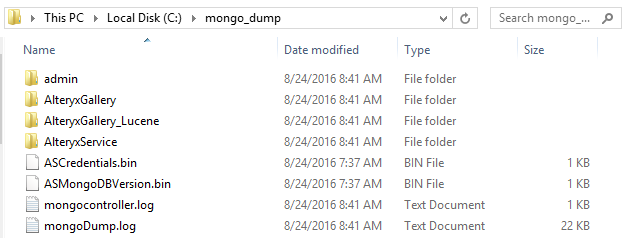

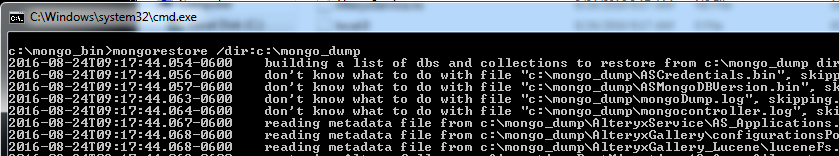

The process may take a while to complete, but in the end it will create a directory containing the contents of the databases plus a few log files:

As with the previous example, copy the contents of the backup to the target system (you may want to compress the contents of this folder first). As before, we're using xcopy here to copy the backup to a shared folder on the new host:

Now, we'll restore the database on the new host using the Mongo tool mongorestore, for which full documentation is available on Mongo's website. The mongorestore tool will restore data into a running database instance, so first we'll start mongod.exe in an empty target directory with authentication disabled (note the lack of the --auth flag):

Then, use mongorestore to populate the new database. Unless specified explicitly, mongorestore will connect to mongod.exe running locally on the default port of 27017:

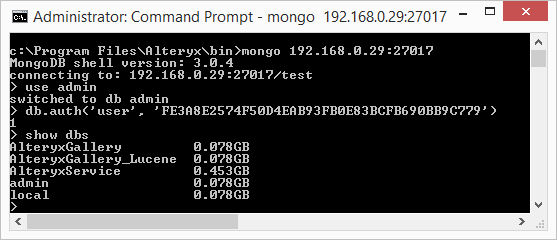

After mongorestore completes its job, the database contents on the new host will be equivalent to that from the old host. As in the previous example, [re]start mongod.exe with auth enabled and test the connection using mongo.exe using the same user and credentials as the original system:

Other methods for migrating data to an existing MongoDB instance (Windows and Linux)

In addition to the two basic techniques presented above, there are several other ways to move data from an Alteryx Server MongoDB instance to a pre-existing MongoDB server. For example, mongorestore can be used to restore only certain databases from a backup into an existing MongoDB instance, creating new databases/collections as necessary. Similarly, the MongoDB copydb command can be executed from the mongo.exe command-line to copy databases from a source instance to a target instance.

Configuring Alteryx Server to use User-managed MongoDB

Regardless of the method you choose to migrate data from the Embedded MongoDB instance to a User-managed MongoDB instance, configuring Alteryx Server to connect to the new instance is trivial.

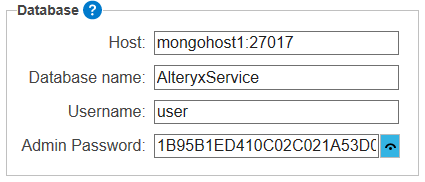

In the System Settings dialog, navigate to the Controller | Persistence page and choose "User-managed MongoDB," updating the connection information with the new MongoDB host and credentials. Note that the password is for the non-administrative user from the Embedded MongoDB instance:

MongoDB Replica Sets

Conceptually, a MongoDB Replica Set is a collection of MongoDB servers configured to provide High Availability (HA) and automatic failover. A single Replica Set comprises one or more MongoDB servers working in unison, and best practices state that the Replica Set should contain at least three nodes (and an odd number of nodes in total). At all times, one of the members of the Replica Set acts as the Primary, or the node responsible for servicing read/write requests from external clients such as the Alteryx Server. The other nodes in the Replica Sets are called Secondaries, and they are responsible for maintaining exact copies of data hosted by the Primary and/or otherwise contributing to the overall health of the Replica Set.

In the unlikely event that the Primary fails, a process called an Election occurs, and one of the Secondaries clicks its heels three times and becomes the Primary. Ideally, the election and subsequent failover to a secondary is transparent to the client, although in practice there may be small "blips" in service.

Replica Sets may also contain other, non-electable nodes including Arbiters and dedicated backup nodes. Arbiters are Replica Set members that do not store any data, but whose sole responsibility is to provide a tie-breaking vote (if needed) when electing a new Primary. Backup nodes are Replica Set members whose sole responsibility is to provide read-only data access for rolling backups, and they may be termed "hidden" or "priority zero" nodes because they are configured to only ever act as a Secondary, contributing to the election process but never being electable to the Primary themselves.

Mongo has excellent documentation available on their website, and I encourage starting with their overview of replication in MongoDB and continuing with the collection of replication-related tutorials. We'll be using several of the basic techniques covered in their tutorials, referring back to specifics from the MongoDB references as needed.

Updating User-managed MongoDB with a Replica Set

Adding resilience and redundancy to our new User-managed MongoDB instance is also reasonably straightforward, following a basic recipe as set forth in Mongo's guidelines for converting a standalone instance. We'll execute the following four steps in sequence, each of which is expanded upon below in more detail:

- Connect to DB and enable cluster administration

- Create keyfile and deploy

- Start Replica Set members

- Connect to Primary and create the Replica Set

Step 1: Connect to DB and enable cluster administration

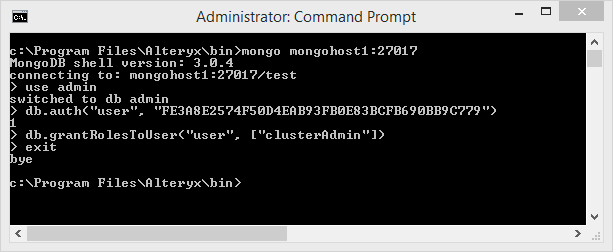

The first step is connecting to the existing database as the administrative user and enabling this user to deploy and administer Replica Sets. Alternatively, a new user can be created with this privilege, but to keep things simple let's use our existing administrator and add the new capability.

Using mongo.exe, we'll connect to the database, authenticate as the administrative user, and then invoke the method "grantRolesToUser" with the new role "clusterAdmin" to give our administrative user the power to control Replica Sets:

Step 2: Create keyfile and deploy

Since we're using a Replica Set with authentication enabled, the Replica Set members must know how to communicate securely with each other. There are several techniques for achieving this goal, and to keep things simple here we're going to use keyfiles. Conceptually, a keyfile contains a bit of pre-shared information that is copied to each member of a Replica Set, and as long as the contents of the files are identical, the Replica Set members can talk to each other.

Following the general suggestions from Mongo, we'll use the openssl command line in Cygwin to generate the keyfile. Contrary to the MongoDB docs, however, we'll specify the -hex option to correctly generate exactly 1k of data in the keyfile (note, however, that for testing purposes any text in the keyfile will work just fine, so open up Notepad and bang away!)

$ openssl rand -hex 512 > /cygdrive/C/mongo/keyfile

After generating the keyfile, copy it onto each node destined to be part of the Replica Set. Since the keyfile contains sensitive information, it should be deployed to a secure location where only the mongod.exe user will have access. Additionally, on Linux, this file must be owned by the same user that runs the mongod process, with 600 file permissions.

Step 3: Start Replica Set members

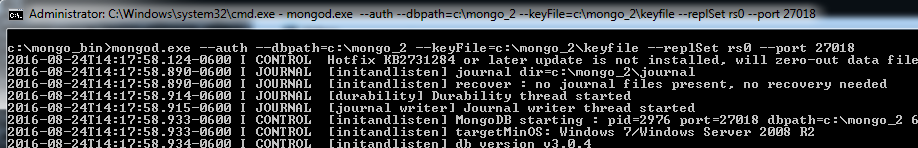

With a keyfile in place on each member, it's now time to start each one in turn. We'll add two new flags to the mongod.exe command line including --keyFile to specify the key file we just generated, and --replSet to specify the name of the Replica Set, which in this example is arbitrarily "rs0":

The other two members are started similarly. Note how the Replica Set name is specified explicitly via the --replSet flag, as is the port via the --port flag in this example from the second member:

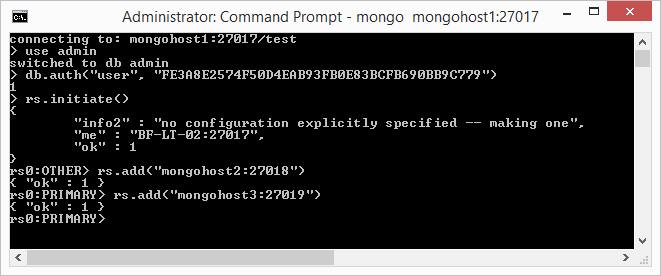

Step 4: Connect to Primary and create the Replica Set

Now that each member is started, we'll log onto the Primary, authenticate, and initialize the Replica Set using the rs.initiate() command. When this command completes, we'll add the other two members using the rs.add() command, explicitly specifying each node and port. The entire sequence of three commands may take a minute or two to complete:

Congratulations! You've just created a three-member Replica Set, and any transaction will now be mirrored across all three nodes instead of just one.

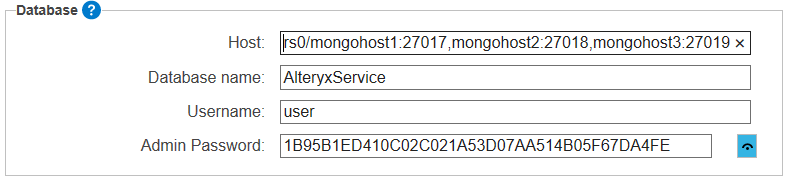

Configuring Alteryx Server to connect to the Replica Set

In the System Settings dialog, navigate to the Controller | Persistence page and choose "User-managed MongoDB." Instead of choosing a single MongoDB node as the Host, however, the Host will comprise the Replica Set name followed by a comma-separated list of the members (as <host1>:<port1>,<host2>:<port2>,<host3>:<port3>).

For our example Replica Set "rs0", the connection string will be as follows:

Note, as with the single-node connection, enter the non-administrative credentials here.

Closing

As shown, the default "Embedded" MongoDB instance used by the Alteryx Server is portable and easily migrated to a standalone instance. Furthermore, updating that standalone instance to support HA and failover via a MongoDB Replica Set requires just a few well-documented steps and perhaps a bit of practice.

Thanks for reading and stay tuned for more Server-related posts. As always, feedback is greatly appreciated so please don't hesitate to reach out directly or via your Alteryx support contact.

Kudos to @TaraM, @GaryS and @DougD (our resident MongoDB Giant!) for assistance.

Steve is a Distinguished Engineer at Alteryx on the Architecture team, working largely in C++ but dabbling in other languages and technologies. His contributions include backend components of the Alteryx Server, Hadoop connectors (HDFS/Avro), JavaScript integration in both the Designer and Engine (CEF), Spark Direct functionality, Cloud execution for desktop (CEfD), etc. He currently lives on the fringe of Desktop and Cloud, and is committed to bringing our Desktop/Server customers forward into the Cloud.

Steve is a Distinguished Engineer at Alteryx on the Architecture team, working largely in C++ but dabbling in other languages and technologies. His contributions include backend components of the Alteryx Server, Hadoop connectors (HDFS/Avro), JavaScript integration in both the Designer and Engine (CEF), Spark Direct functionality, Cloud execution for desktop (CEfD), etc. He currently lives on the fringe of Desktop and Cloud, and is committed to bringing our Desktop/Server customers forward into the Cloud.

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.