Data Science

Machine learning & data science for beginners and experts alike.- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Data Science

- :

- Stop Being in de-NILE: A Code Free & Code Friendly...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

Perhaps you know Alteryx Designer fairly well and have dipped your toes in the water of the R coding language. In this blog, I will mix the two on a single Alteryx canvas, where I’ll analyze a classic and well-discussed dataset – the Nile river flow from 1871 to 1970.

BACKGROUND

People have been fascinated with the Nile for millennia. Much of ancient life in the surrounding area of the Nile was dependent on the river for life itself. It’s not surprising that even in ancient times people were recording data about the Nile.

Fast forward a few thousand years and people are still recording various measurements about the ancient river. They believed for a long time that there was a significant change in the Nile’s water output right around the turn of the last century. There’s a well-known data set that contains the annual water output of the river for each year from 1871 to 1970 that has been used to test this theory.

In 1978, George W. Cobb published “The Problem of the Nile: Conditional Solution to a Changepoint Problem,” in which he used some heavy-duty statistical and mathematical methods to confirm what had long been accepted – that a significant change in the output of the river did indeed occur at the turn of the last century (Cobb, G. W., 1978, The problem of the Nile: conditional solution to a change-point problem. Biometrika 65, 243--51. 10.2307/2335202).

CAN I ALTERYX THE NILE RIVER FLOW CHANGEPOINT?

Of course I can! First, I need to grab the dataset of the Nile output readings from 1871 to 1970. As I said earlier, it’s a classic dataset, and in fact comes preloaded with R, when R is installed. Assuming you loaded the Predictive tools alongside your Designer install, the R library datasets will have been installed, which contains the Nile dataset. I’ll walk through how to access it, read it into Designer, perform the changepoint calculation, and then wrangle it all together.

THE GOAL

I’d like to answer the following 3 questions:

- At what year did the change in the output of the Nile occur?

- What was the median and average output of the river for the years prior to the change?

- What was the median and average output of the river for the years after the change?

THE PROCESS

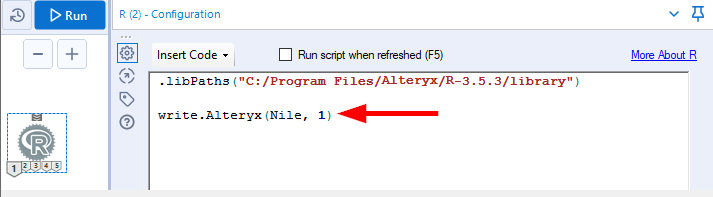

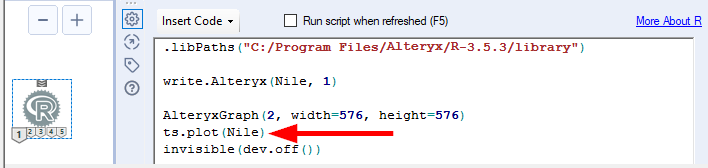

First, I’ll talk about the “code-friendly” phase. I need to read in the dataset. As previously stated, it comes standard with the R (Predictive tools) install. First, I’ll drag an R tool onto a blank canvas and focus in on its configuration pane:

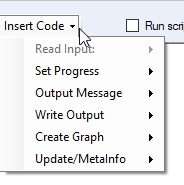

Here I can manually type R code. I can also use the drop down “Insert Code” and have Alteryx auto-insert some very common and useful code for integrating with the Alteryx engine:

More on some of these options shortly.

First, I’ll pull in that Nile dataset. Just for reference, it’s the Annual volume of the Nile River (discharge at Aswan, 108 m3) from 1871 to 1970.

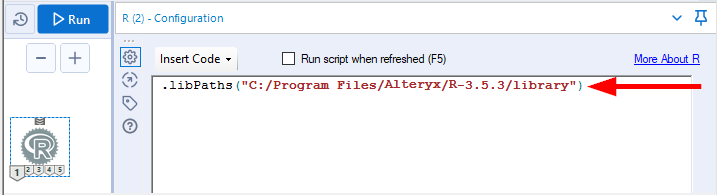

Since it’s a standard dataset in R, I can actually just call it by name (Nile), without declaring it as a separate variable. But first I have to tell R where my R libraries are located. My libraries are located in the default install location that is created when installing the Alteryx Predictive toolset.

To set my library directory, I’ll enter the following code in the configuration pane of the R tool (note that R needs forward slashes instead of backslashes for directory paths):

Easy enough, right? Just “.libPaths” and then in parenthesis the directory to where the R libraries are.

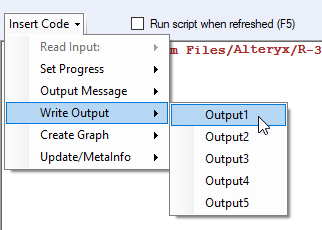

Now that I’ve told R where to look, I can read in the Nile data, and specifically write that data to the R tool’s “1” anchor so I can see it and use it downstream. To write data to one of the output anchors, I use the following option from the “Insert Code” drop down:

This will auto-insert the following code:

The “1” for the second argument refers to the output anchor of the R tool where the data will be written. The “1” is there because I chose “Output1” from the drop-down menu. Had I chosen, say, “Output4,” then that “1” would’ve been a “4”. I can also overwrite it manually to any of the output anchors, if I wanted to. The configuration pane is a fully editable pane.

The “dataVariableToWrite” argument, as you might guess, is generic. I’ll need to overwrite that text with the actual name of what I want to output. For this exercise, it’s the Nile dataset. And as stated before, R already knows what the Nile data is since it’s a pre-loaded dataset, so I don’t need to declare what “Nile” is – I can simply refer to it as-is:

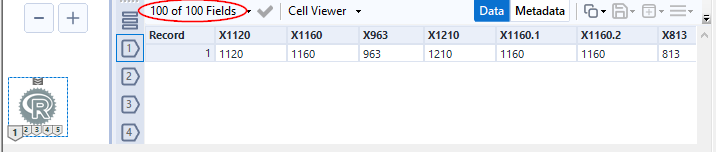

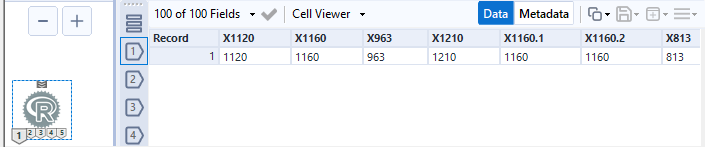

I’ll run this and look at output anchor 1’s output:

You can see there are 100 fields. That’s one field for each year in the dataset (again, 1871 – 1970). R will name the fields according to the value, but with an “X” prefix (and if there’s more than one field with the same value, it will add a suffix, as necessary: .1, .2, etc. So, the first year in the dataset, 1871, has a value of 1,120 108 m3 (recall that’s the output of the Nile river, in units of one hundred million cubic meters). The year 1872 has an output of 1,160 108 m3. And so on.

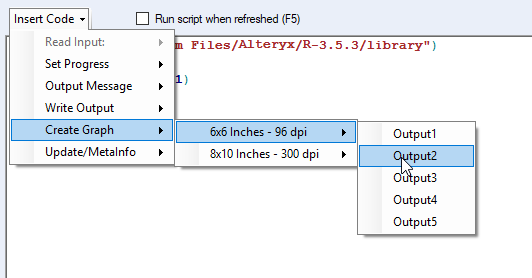

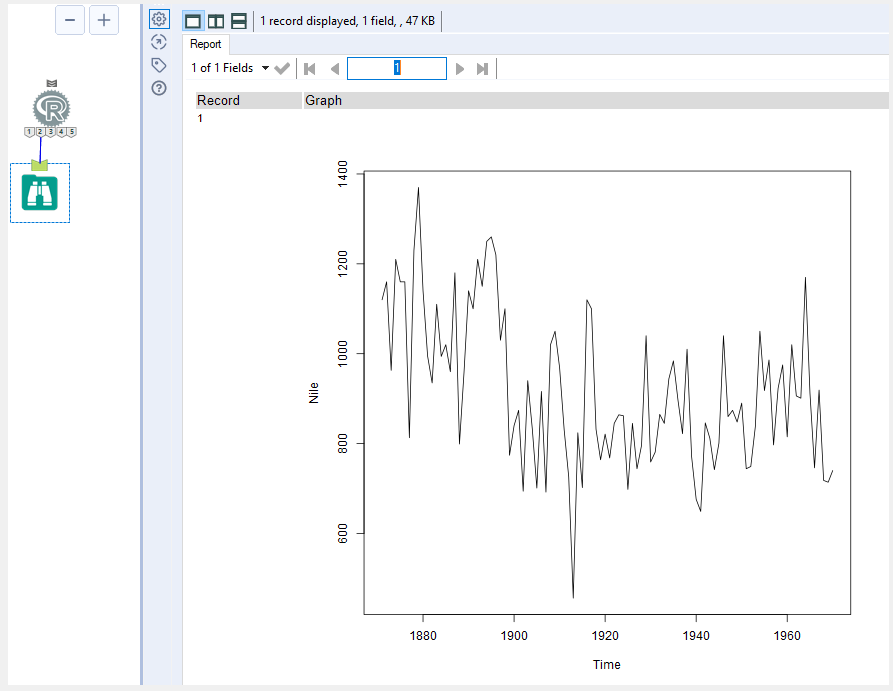

So, there’s my output of the Nile for each of the 100 years in the time series. Remember the first question I’d like answered – in what year did the output significantly change? Before finding the change, I’d like to first look at a plot of the data. I’ll again make use of the Insert Code drop down for this. I can select the following:

This will create a chart of size 6x6 and write it to output anchor 2.

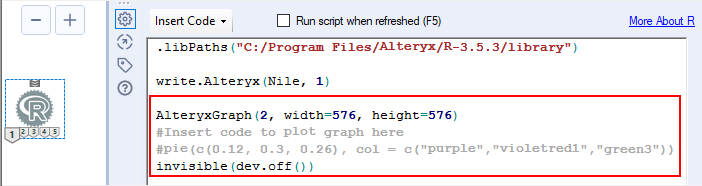

Here’s the code that it auto-inserts:

The first line (AlteryxGraph) tells R where and how to output the chart. The “2” tells R to output to output anchor 2, and the width and height are the size of the chart.

The next two lines of code are commented out (meaning R will ignore them – they are just for the reader’s reference). In R, the comment-out tag is #, which I call the pound sign, because I’m old and don’t like calling it a “hashtag” (insert ‘ok boomer’ here). I’ll have to update the two commented-out lines of code, as applicable. I just want a plain old time series chart, so I’ll remove the second commented-out line of code (the one referring to a pie chart), and overwrite the first commented-out line of code with the following:

The “ts.plot” function is what you’d think – a time series plot of the data in parentheses; here, the Nile data.

If I run the module again, then here’s what output anchor 2 looks like (don't forget to add a Browse tool):

The y-axis is the Nile output volume, and the x-axis is the year. There does indeed seem to be a shift (at least visually) if I look at the trend both before and after the year 1900.

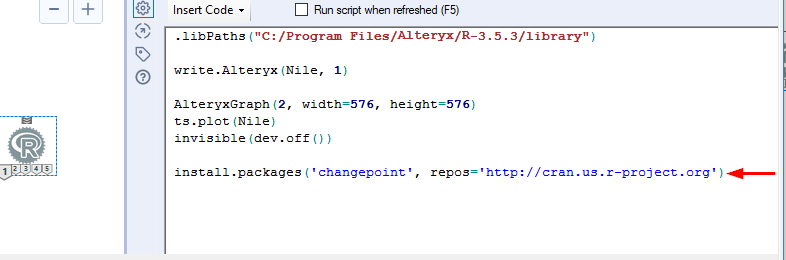

I’ll calculate the statistical “changepoint” in this data. Instead of crunching through some intense formulas and equations, I’ll make use of a particular R package called the changepoint package.

Check out the documentation for this package. On this page, there is a link to a PDF that contains full explanations and details of what’s included in the package.

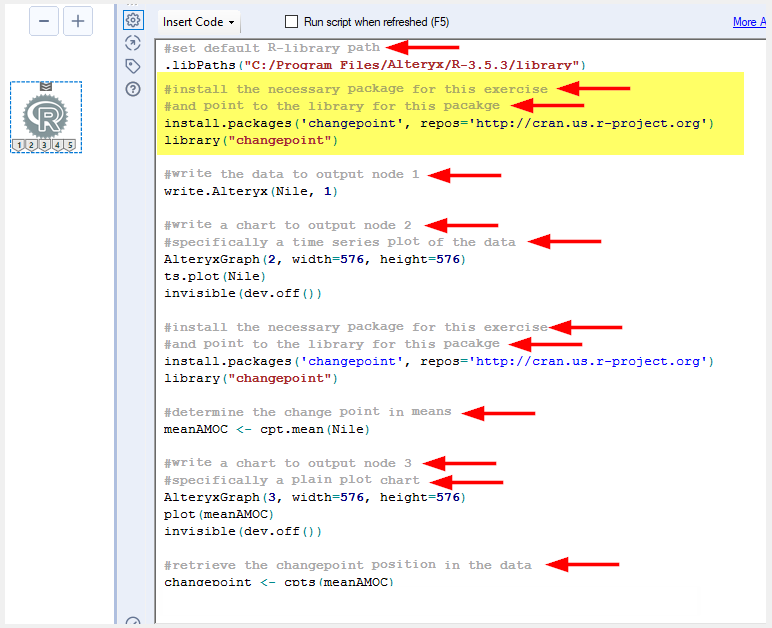

The first thing I’ll need to do is install the changepoint package. Here’s the code to do that:

This will install the package to the library directory of your R install (noted in the “.libPaths” line of code at the top). The install.packages function has 2 arguments - the package name (changepoint), and the standard repository where R packages can be found (cran.us.r-project.org).

To make use of the functions (and anything else) contained in the package, I need to direct R to the actual package library that gets installed:

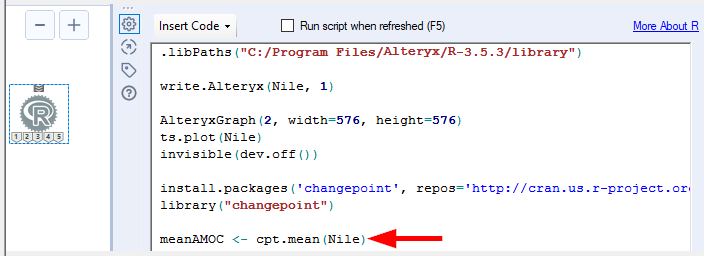

The changepoint package contains (among other things) three wrapper functions: cpt.mean, cpt.var, and cpt.meanvar. They look for changes in the mean, variance, or a combination of both, respectively, in a given dataset. I’m going to make use of the cpt.mean function to look for the change in the mean of the Nile data.

The format of the FULL cpt.mean function with all arguments is as follows:

cpt.mean(data, penalty="MBIC", pen.value=0, method="AMOC", Q=5, test.stat="Normal", class=TRUE, param.estimates=TRUE,minseglen=1)

However, I will employ the function simply as cpt.mean(data), where the other function arguments will be the default. The function will look like this: cpt.mean(Nile). This will look for a single change point in the Nile data, with the “At Most One Change” (AMOC) method, in a normal distribution, using the Modified Bayes Information Criterion (MBIC) penalty.

The code looks like this (note that I’m also going to declare cpt.mean(Nile) as "meanAMOC":

Now, the result of this function will be stored in R, but in order to retrieve it and write it to an output anchor, I’ll need to use another function in the changepoint package, namely, the function cpts (more about that shortly). First, I’d like to visually see the data plotted with the means both before and after the change point.

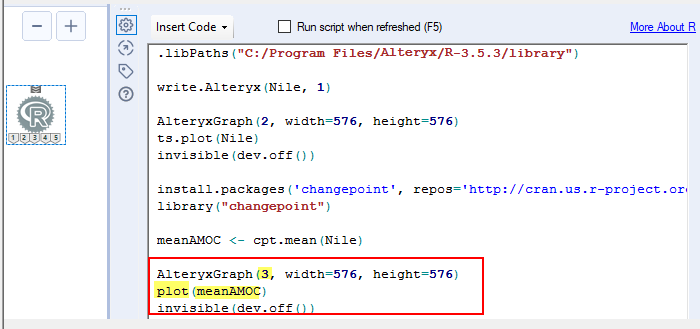

To do this, I’ll just copy and paste the code from my time series plot that I ran earlier, update the output anchor to anchor 3, update the function from a time series plot to just a regular old everyday plot, and update the data that’s being plotted from the original Nile data to the cpt.mean(Nile) results, or as it’s now recognized, meanAMOC:

I’ll run this and look at output anchor 3:

It shows the data with red mean lines representing the means both before and after the identified changepoint. Indeed, they do look significantly different!

Now I’ll retrieve the position in the data of the actual changepoint, which certainly looks to me like somewhere around the turn of the century, just as Cobb also calculated. Recall I stated earlier that in order to do that, I’ll have to take our cpt.mean(Nile) results (now called meanAMOC) and wrap it in the cpts function. I’ll declare that value to be “changepoint.” Finally, I’ll output it to output anchor 4, copying the write.Alteryx line of code from earlier. Here’s what the two lines of code to accomplish this look like:

Before I run my code, I want to mark it up with some notes, or comments, to serve as a reminder to myself (or anyone else) for what’s going on in this code. Here is the same code with some comments added (note too that I moved the install of the changepoint package, along with the library("changepoint") instruction to the top of the code, as this is common R language practice):

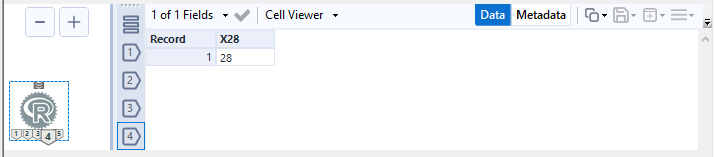

I’ll run this and look at output anchor 4:

Notice that the output is not a year, but rather a position. It’s the position in the chronological data set, which is stored in ascending year order. In order to figure out what the year value is for position 28 (or, in the data, column 28), I’ll go to the next phase of this blog, the “code-free” phase!

SO WHAT'S THE YEAR?

Recall that the data coming out of output anchor 1 looks like this:

I could just scroll over to column 28 and see what value is there, but that value will be the Nile’s output for the year represented by column X28. I don’t know right now what year column X28 represents. I do know, however, that the columns come out of anchor 1 in sequential order from the year 1871 to 1970 (that’s just how R does it with the Nile dataset).

Allow me to think out loud here, and I’m guessing you’re probably forming the same game plan in your head as well. I could flip this data from horizontal to vertical, add the years as a new column, add the row number as another new column, and then dynamically tell Alteryx to split the data appropriately (one group of rows from row 1 to 27, one group for just row 28, and one group of rows from row 29 to the end). I would then just have to take averages & medians for the two groups of rows (recall that in addition to the year, row 28, I also wanted to know what the average & median Nile outputs were before and after the change).

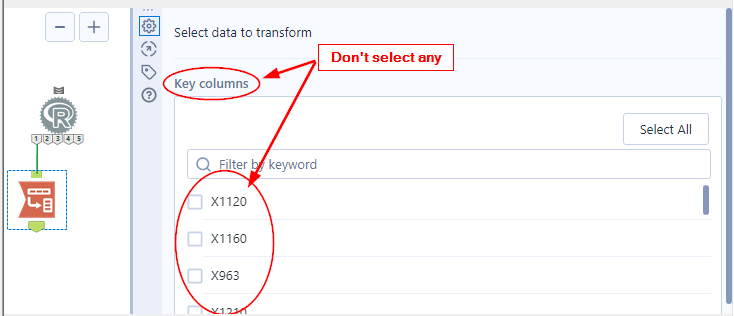

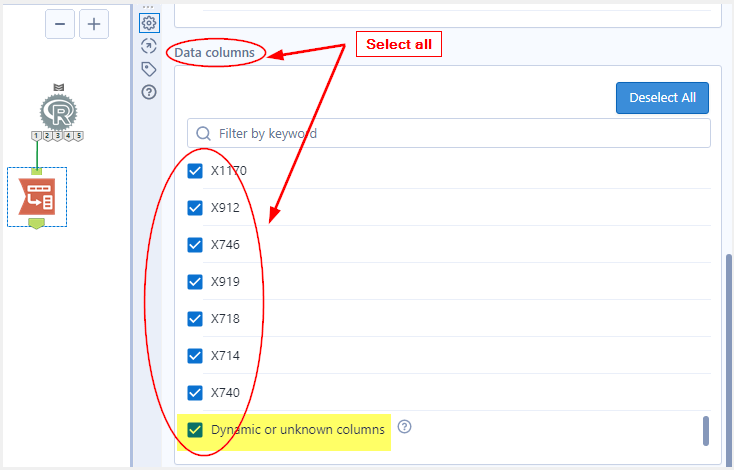

First, I’ll add a Transpose tool to output anchor 1, and won’t select any Key columns, but I will select all Data columns, including Dynamic or unknown columns, as shown below:

Selecting the Dynamic or unknown columns checkbox ensures that this can be reused on any similar incoming set of data. It will simply take however many incoming columns there are and flip them all to rows.

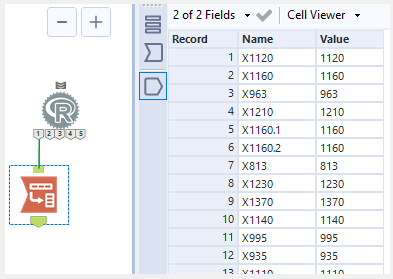

And here’s what the results look like, just as expected:

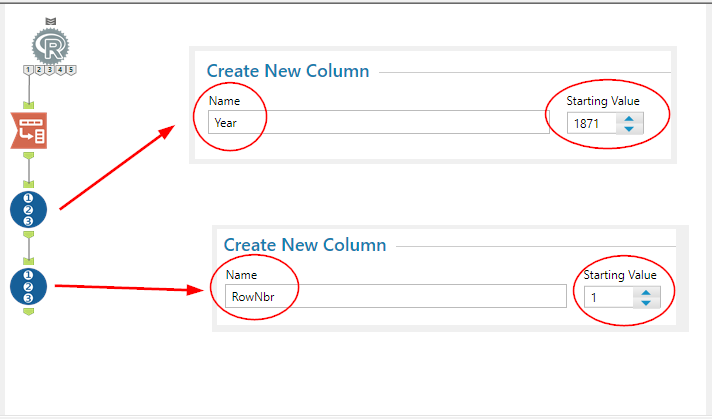

Next, I’ll add a couple Record ID tools – one for the year, and one for the row number:

And here’s what the data now looks like:

Again, I could just scroll down to row 28 and see which year is displayed. But if my dataset had, say, 150,000 rows, scrolling down wouldn’t be too practical, and it wouldn’t be very dynamic. It also would not lend itself to easily calculating the average & median Nile output for the years leading up to the change, and the years after the change.

But, if I could attach or append the row number where the change occurs, I could use filtering and inequality formulas to easily break the data into the groups I need: one group for the years before the change, one group for just the change year, and one group for the years after the change.

First, I’ll append the changepoint position (row number) coming out of the R tool’s anchor 4. Recall that it looks like this:

I’d like to append this value to each record coming out of the R tool’s anchor 1 data stream. However, you can see that the name of the field above is X28. For another dataset, it might be X123456.

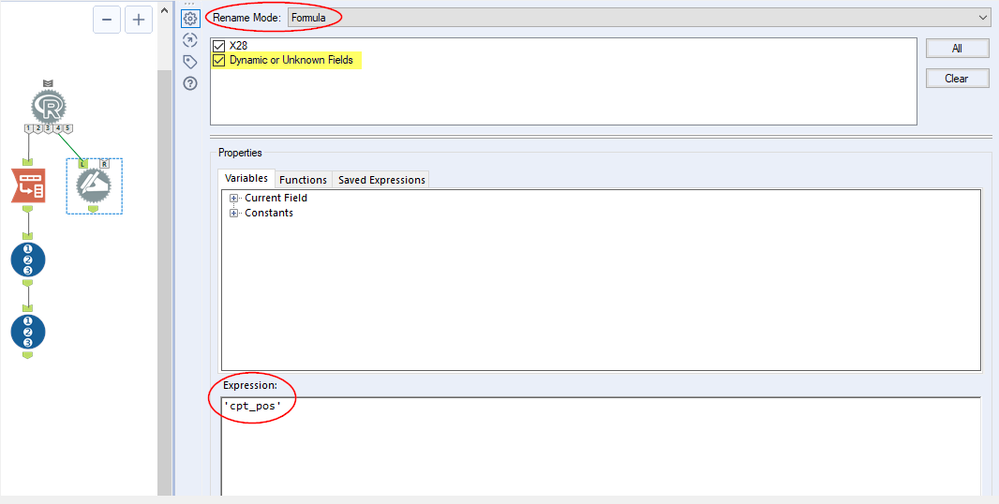

Since there will only ever be one value coming out of output anchor 4 (recall we used the default AMOC method so only one value, or one single changepoint, will be calculated), I can use a Dynamic Rename tool to change whatever comes out of anchor 4 to something more appropriate that could then be easily understood and reused for any dataset. I’ll call it cpt_pos for changepoint position:

Note again that it’s important to check the Dynamic or Unknown Fields value at the top of the configuration. This will ensure that whatever field name comes out of anchor 4 will be renamed to cpt_pos.

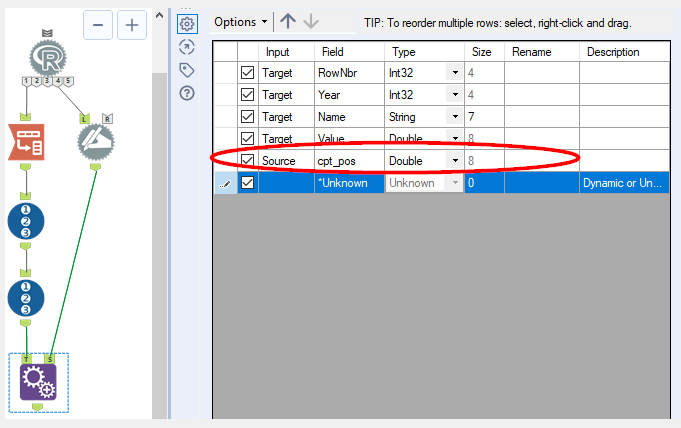

And now I’ll just append cpt_pos to each record:

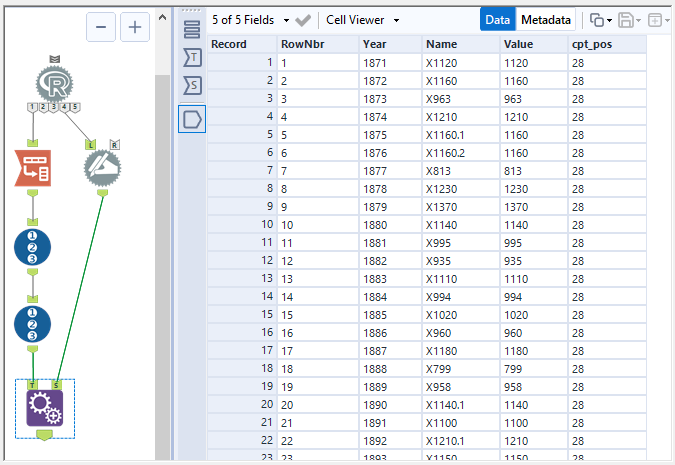

The data now looks like this:

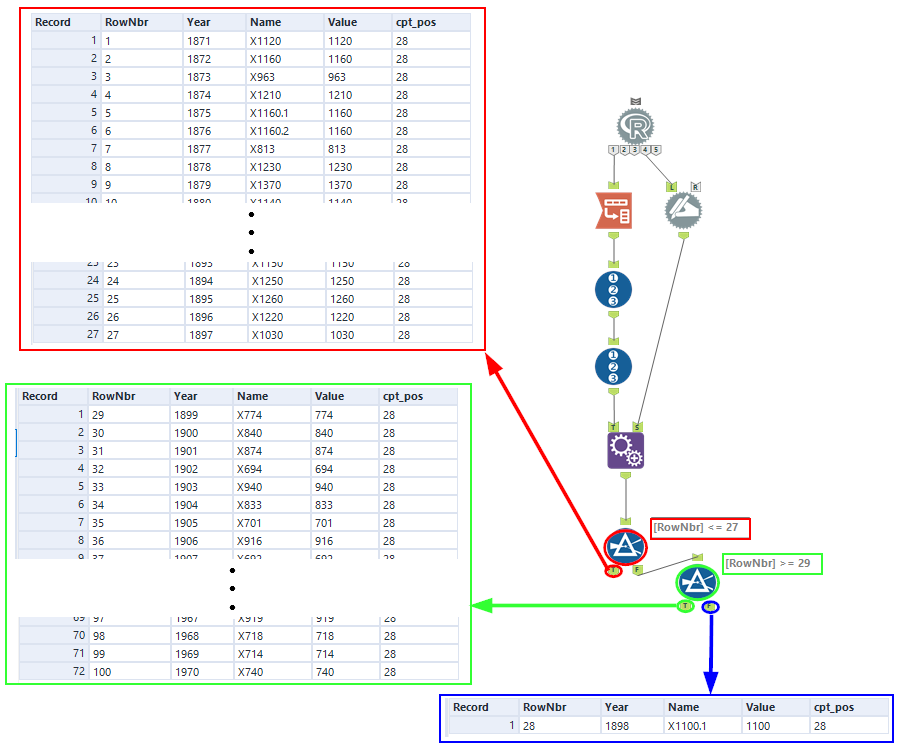

And from here I’ll use a couple Filter tools to split the data into records prior to the change (so records starting with the first record, going all the way up to and including record 27), records after the change (so records starting with record 29, going all the way up to and including the last record), and the changepoint itself:

The data in red is the Nile output prior to the changepoint. The data in green is the Nile output after the changepoint. And the data in blue is, of course, the changepoint itself, which occurred in the year 1898. This indeed matches up to the chart we saw earlier with the mean lines drawn through the time series plot. And it also matches exactly what Cobb calculated in 1978.

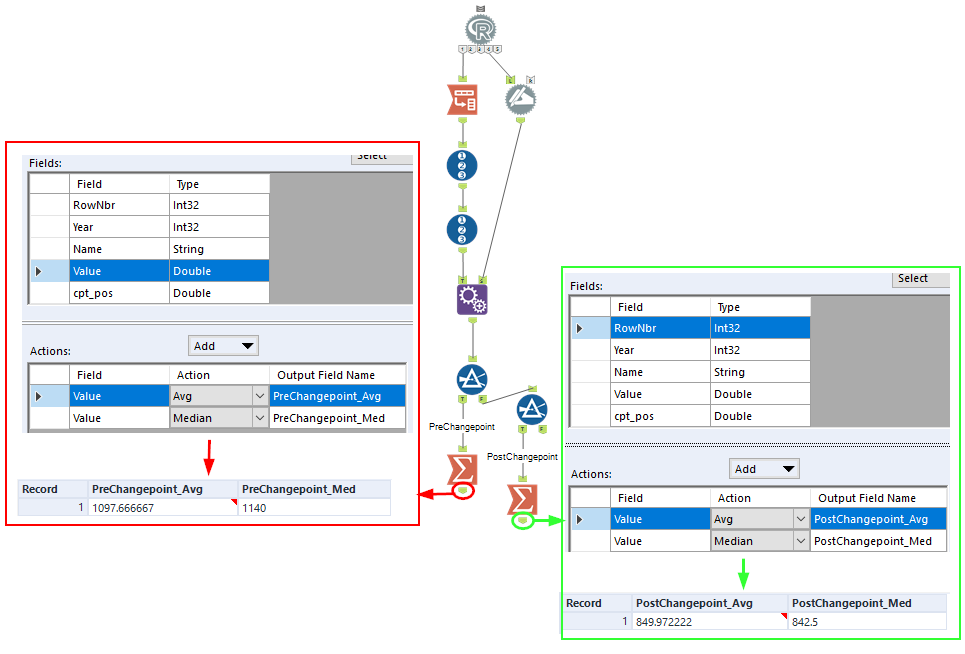

Now I’d like to finish up by calculating the average and median Nile output for the years prior to 1898 and the years after 1898. Easy enough with a couple Summarize tools:

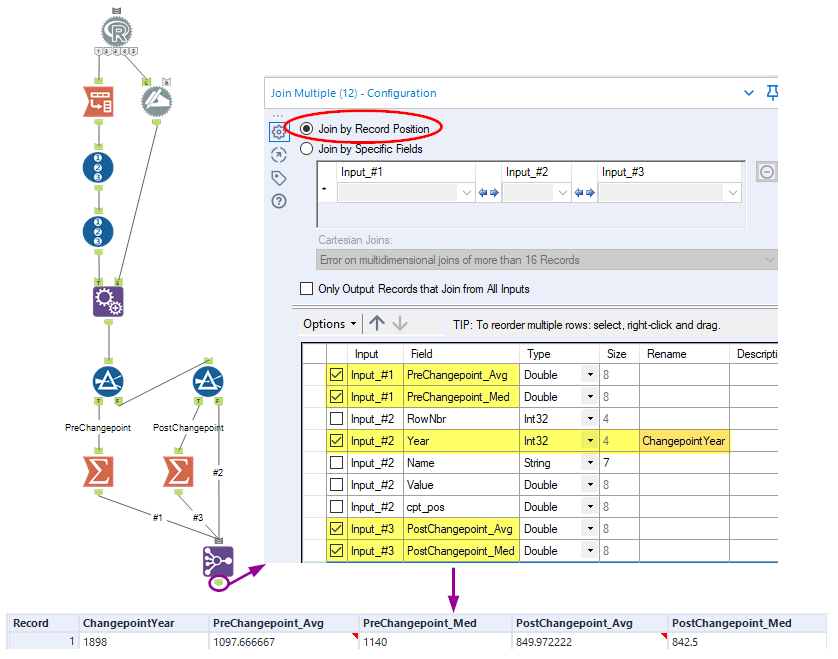

Finally, I’ll bring it all together with Join Multiple tool, where I’ll just join by position:

You could always send this output to one of the report tools and turn it into a nice fancy report as well, but as the authors of so many college text books like to say, “that exercise is left to the reader” . . .

THE WRAP-UP

Here were my original questions with the applicable answers:

- At what year did the change in the output of the Nile occur?

Answer: 1898

- What was the median and average output of the river for the years prior to the change?

Answer: Average = 1,098 108 m3 Median = 1,140 108 m3

- What was the median and average output of the river for the years after the change?

Answer: Average = 850 108 m3 Median = 843 108 m3

CLOSING NOTE

R is just one language that integrates well with Alteryx. Another is Python, and it too can be integrated easily with the use of the Python tool in the Developer category. Just another example of how Alteryx is both code-free, and code-friendly. Hope you enjoyed taking a look at how easy it is to integrate R and Alteryx.

Happy solving!

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.