Support Vector Machine has become an extremely popular algorithm. In this post I try to give a simple explanation of how it works and give a few examples using the Python scikit-learn library (formerly scikits.learn). All code is available on GitHub. I'll have another post on the details of using scikit-learn.

What is SVM?

SVM is a supervised machine learning algorithm which can be used for classification or regression problems. It uses a technique called the kernel trick to transform your data and then, based on these transformations, it finds an optimal boundary between the possible outputs. Simply put, it does some extremely complex data transformations, then figures out how to separate your data based on the labels or outputs you've defined.
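In SVM, the "optimal" boundary is the one with the widest margin: the largest gap between the boundary and the nearest points of each class (the support vectors). The minimal sketch below uses scikit-learn with a linear kernel and four made-up points, purely for illustration. Setting a large `C` approximates a hard margin, so the separating line should land halfway between the two classes at x = 1, with a margin width of about 2.

```python
import numpy as np
from sklearn.svm import SVC

# Four made-up, linearly separable points: class 0 at x = 0, class 1 at x = 2
X = np.array([[0.0, 0.0], [0.0, 1.0], [2.0, 0.0], [2.0, 1.0]])
y = np.array([0, 0, 1, 1])

# A large C approximates a "hard" margin: no point is allowed inside the margin
clf = SVC(kernel="linear", C=1000).fit(X, y)

w = clf.coef_[0]           # normal vector of the separating line w . x + b = 0
b = clf.intercept_[0]
margin = 2 / np.linalg.norm(w)  # width of the gap between the two classes

print("w =", w, "b =", b)
print("margin width:", margin)
print("support vectors:\n", clf.support_vectors_)
print(clf.predict([[0.5, 0.5], [1.5, 0.5]]))
```

The points closest to the boundary are the only ones that define it. This is where the name "support vector" comes from.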

So what makes it so great?

Well, SVM is capable of doing both classification and regression. In this post I'll focus on using SVM for classification. In particular, I'll be focusing on non-linear SVM, or SVM using a non-linear kernel. Non-linear SVM means that the boundary that the algorithm calculates doesn't have to be a straight line. The benefit is that you can capture much more complex relationships between your data points without having to perform difficult transformations on your own. The downside is that training can take much longer, since it's much more computationally intensive.
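To see what a non-linear kernel buys you, here is a small sketch that compares scikit-learn's `SVC` with a linear kernel and with the RBF kernel on `make_circles`, a synthetic dataset of two concentric rings. The dataset and parameter values are illustrative choices and do not come from the original analysis. No straight line can split a ring from the ring inside it, so the linear kernel should score far lower than the RBF kernel.

```python
from sklearn.datasets import make_circles
from sklearn.svm import SVC

# Two concentric rings: the inner ring is one class, the outer ring the other
X, y = make_circles(n_samples=400, factor=0.3, noise=0.05, random_state=0)

linear_clf = SVC(kernel="linear").fit(X, y)  # boundary must be a straight line
rbf_clf = SVC(kernel="rbf").fit(X, y)        # boundary can curve around the inner ring

# score() returns mean accuracy on the given data (training accuracy here)
linear_acc = linear_clf.score(X, y)
rbf_acc = rbf_clf.score(X, y)
print(f"linear kernel accuracy: {linear_acc:.2f}")
print(f"rbf kernel accuracy:    {rbf_acc:.2f}")
```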

Cows and Wolves

So what is the kernel trick?

The kernel trick takes the data you give it and transforms it. In go some great features which you think are going to make a great classifier, and out comes some data that you don't recognize anymore. It is sort of like unraveling a strand of DNA. You start with this harmless-looking vector of data, and after putting it through the kernel trick, it has unraveled and compounded itself until it's now a much larger set of data that can't be understood by looking at a spreadsheet. But here lies the magic: in expanding the dataset, there are now more obvious boundaries between your classes, and the SVM algorithm is able to compute a better separating hyperplane. (Strictly speaking, the kernel never builds this larger dataset explicitly. It computes the similarity between pairs of points as if they had been mapped into that higher-dimensional space, which is what keeps the approach tractable.)
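Below is a minimal sketch of the idea behind the kernel trick. It uses a degree-2 polynomial kernel instead of the RBF kernel that `SVC` uses by default, and the feature map `phi` and the sample points are illustrative assumptions. If you map each 2-D point into 3-D and take a dot product, you get the same number as squaring the 2-D dot product. That means the algorithm can work in the bigger space without ever building it.

```python
import numpy as np


def phi(v):
    """Explicit degree-2 feature map for a 2-D point: (x1^2, sqrt(2)*x1*x2, x2^2)."""
    x1, x2 = v
    return np.array([x1 ** 2, np.sqrt(2) * x1 * x2, x2 ** 2])


def poly_kernel(a, b):
    """Homogeneous degree-2 polynomial kernel, computed in the original 2-D space."""
    return np.dot(a, b) ** 2


a = np.array([1.0, 2.0])
b = np.array([3.0, -1.0])

explicit = np.dot(phi(a), phi(b))  # map both points to 3-D, then take the dot product
implicit = poly_kernel(a, b)       # same quantity, never builds the 3-D vectors
print(explicit, implicit)
```

The RBF kernel works the same way, except that its implicit feature space is infinite-dimensional. For that reason it can only ever be used through the kernel.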

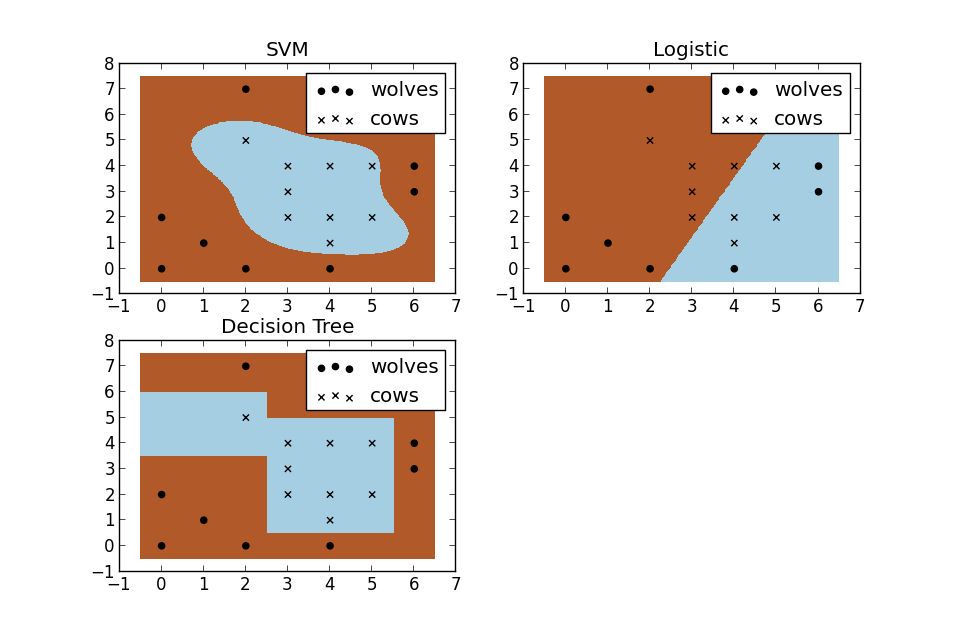

For a second, pretend you're a farmer and you have a problem: you need to set up a fence to protect your cows from packs of wolves. But where do you build your fence? Well, if you're a really data-driven farmer, one way you could do it would be to build a classifier based on the position of the cows and wolves in your pasture. Racehorsing a few different types of classifiers, we see that SVM does a great job at separating your cows from the packs of wolves. I thought these plots also do a nice job of illustrating the benefits of using a non-linear classifier. You can see that the logistic and decision tree models both only make use of straight lines.

Want to recreate the analysis?

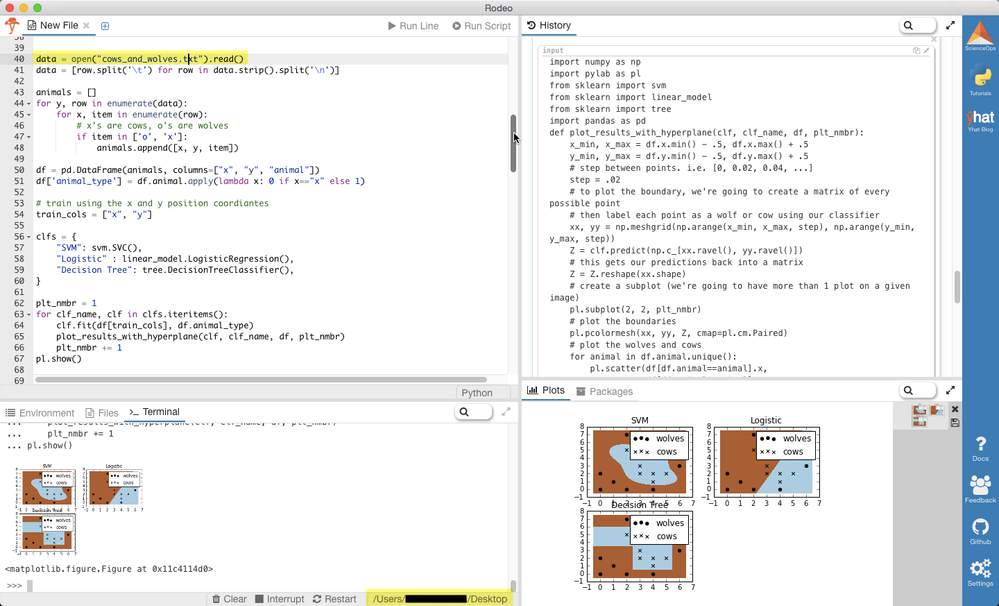

Want to create these plots for yourself? You can run the code in your terminal or in an IDE of your choice, but, big surprise, I'd recommend Rodeo. It has a great pop-out plot feature that comes in handy for this type of analysis. It also ships with Python already included for Windows machines. Besides that, it's now lightning fast thanks to the hard work of TakenPilot.

Once you've downloaded Rodeo, you'll need to save the raw cows_and_wolves.txt file from my GitHub. Make sure you've set your working directory to where you saved the file.

Alright, now just copy and paste the code below into Rodeo, and run it, either by line or the entire script. Don't forget, you can pop out your plots tab, move around your windows, or resize them.

```python
# Data driven farmer goes to the Rodeo
import numpy as np
import matplotlib.pyplot as pl
import pandas as pd
from sklearn import svm
from sklearn import linear_model
from sklearn import tree


def plot_results_with_hyperplane(clf, clf_name, df, plt_nmbr):
    x_min, x_max = df.x.min() - .5, df.x.max() + .5
    y_min, y_max = df.y.min() - .5, df.y.max() + .5

    # step between points. i.e. [0, 0.02, 0.04, ...]
    step = .02
    # to plot the boundary, we're going to create a matrix of every possible point
    # then label each point as a wolf or cow using our classifier
    xx, yy = np.meshgrid(np.arange(x_min, x_max, step), np.arange(y_min, y_max, step))
    Z = clf.predict(np.c_[xx.ravel(), yy.ravel()])
    # this gets our predictions back into a matrix
    Z = Z.reshape(xx.shape)

    # create a subplot (we're going to have more than 1 plot on a given image)
    pl.subplot(2, 2, plt_nmbr)
    # plot the boundaries
    pl.pcolormesh(xx, yy, Z, cmap=pl.cm.Paired)

    # plot the wolves and cows
    for animal in df.animal.unique():
        pl.scatter(df[df.animal == animal].x,
                   df[df.animal == animal].y,
                   marker=animal,
                   label="cows" if animal == "x" else "wolves",
                   color='black')
    pl.title(clf_name)
    pl.legend(loc="best")


with open("cows_and_wolves.txt") as f:
    data = f.read()
data = [row.split('\t') for row in data.strip().split('\n')]

animals = []
for y, row in enumerate(data):
    for x, item in enumerate(row):
        # x's are cows, o's are wolves
        if item in ['o', 'x']:
            animals.append([x, y, item])

df = pd.DataFrame(animals, columns=["x", "y", "animal"])
df['animal_type'] = df.animal.apply(lambda x: 0 if x == "x" else 1)

# train using the x and y position coordinates
train_cols = ["x", "y"]

clfs = {
    # gamma='auto' (1/n_features) was the default in older scikit-learn versions
    "SVM": svm.SVC(gamma='auto'),
    "Logistic": linear_model.LogisticRegression(),
    "Decision Tree": tree.DecisionTreeClassifier(),
}

plt_nmbr = 1
# .items() works in Python 3 (.iteritems() was Python 2 only)
for clf_name, clf in clfs.items():
    clf.fit(df[train_cols], df.animal_type)
    plot_results_with_hyperplane(clf, clf_name, df, plt_nmbr)
    plt_nmbr += 1
pl.show()
```

Let SVM do the hard work

In the event that the relationship between a dependent variable and an independent variable is non-linear, a linear model such as logistic regression is not going to be nearly as accurate as SVM. Transforming variables yourself (log(x), x^2) becomes much less important, since the kernel accounts for that non-linearity within the algorithm. If you're still having trouble picturing this, see if you can follow along with this example.

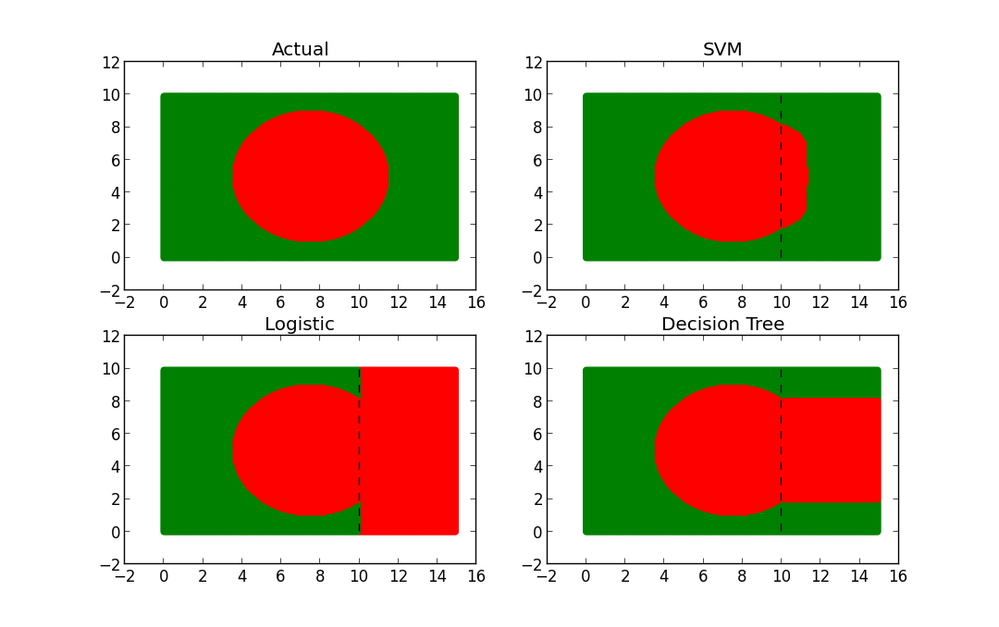

Let's say we have a dataset that consists of green and red points. When plotted with their coordinates, the points make the shape of a red circle with a green outline (and look an awful lot like Bangladesh's flag).

What would happen if somehow we lost 1/3 of our data? What if we couldn't recover it and wanted to find a way to approximate what that missing 1/3 looked like?

So how do we figure out what the missing 1/3 looks like? One approach might be to build a model using the 2/3 of the data we do have as a training set. But what type of model do we use? Let's try out the following:

- Logistic model

- Decision Tree

- SVM

I trained each model and then used each to make predictions on the missing 1/3 of our data. Let's take a look at what our predicted shapes look like...

Follow along

Here's the code to compare your logistic model, decision tree and SVM.

```python
import numpy as np
import matplotlib.pyplot as pl
import pandas as pd
from sklearn import svm
from sklearn import linear_model
from sklearn import tree
from sklearn.metrics import confusion_matrix

x_min, x_max = 0, 15
y_min, y_max = 0, 10
step = .1

# create a matrix of every point on the flag,
# then label each point red or green based on its distance from the center
xx, yy = np.meshgrid(np.arange(x_min, x_max, step), np.arange(y_min, y_max, step))
df = pd.DataFrame(data={'x': xx.ravel(), 'y': yy.ravel()})

# squared distance from the center of the flag, (7.5, 5)
df['color_gauge'] = (df.x - 7.5) ** 2 + (df.y - 5) ** 2
df['color'] = df.color_gauge.apply(lambda x: "red" if x <= 15 else "green")
df['color_as_int'] = df.color.apply(lambda x: 0 if x == "red" else 1)

print("Points on flag:")
print(df.groupby('color').size())
print()

figure = 1

# plot a figure for the entire dataset
pl.subplot(2, 2, figure)
for color in df.color.unique():
    idx = df.color == color
    pl.scatter(df[idx].x, df[idx].y, color=color)
pl.title('Actual')

# everything left of x = 10 is the training set; the right-hand third is "lost"
train_idx = df.x < 10
train = df[train_idx]
test = df[~train_idx].copy()  # ~ negates a boolean mask; .copy() lets us add columns safely

print("Training Set Size: %d" % len(train))
print("Test Set Size: %d" % len(test))

# train using the x and y position coordinates
cols = ["x", "y"]

clfs = {
    # degree only affects kernel='poly', so the original degree=0.5 had no effect;
    # gamma='auto' (1/n_features) was the default in older scikit-learn versions
    "SVM": svm.SVC(gamma='auto'),
    "Logistic": linear_model.LogisticRegression(),
    "Decision Tree": tree.DecisionTreeClassifier(),
}

# racehorse different classifiers and plot the results
for clf_name, clf in clfs.items():
    figure += 1

    # train the classifier
    clf.fit(train[cols], train.color_as_int)

    # get the predicted values from the test set
    test['predicted_color_as_int'] = clf.predict(test[cols])
    test['pred_color'] = test.predicted_color_as_int.apply(lambda x: "red" if x == 0 else "green")

    # create a new subplot on the plot
    pl.subplot(2, 2, figure)

    # plot each predicted color
    for color in test.pred_color.unique():
        # plot only rows where pred_color is equal to color
        idx = test.pred_color == color
        pl.scatter(test[idx].x, test[idx].y, color=color)

    # plot the training set as well
    for color in train.color.unique():
        idx = train.color == color
        pl.scatter(train[idx].x, train[idx].y, color=color)

    # add a black, dotted vertical line at x = 10 to show the boundary between the
    # training and test set (everything to the right of the line is in the test set)
    train_line_y = np.linspace(y_min, y_max)  # evenly spaced array from 0 to 10
    train_line_x = np.repeat(10, len(train_line_y))  # repeat 10 (threshold for training set) n times
    pl.plot(train_line_x, train_line_y, 'k--')

    pl.title(clf_name)

    print("Confusion Matrix for %s:" % clf_name)
    print(confusion_matrix(test.color, test.pred_color))

pl.show()
```

Follow along in Rodeo by copying and running the code above!


Results

From the plots, it's pretty clear that SVM is the winner. But why? Well, if you look at the predicted shapes of the decision tree and GLM (logistic) models, what do you notice? Straight boundaries. Our input model did not include any transformations to account for the non-linear relationship between x, y, and the color. Given a specific set of transformations, we definitely could have made the GLM and the DT perform better, but why waste time? With no complex transformations or scaling, SVM only misclassified 117/5000 points (98% accuracy, as opposed to 51% for the DT and 12% for the GLM)! All of the misclassified points were red, hence the slight bulge.
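You can check the point about transformations on a scaled-down, hypothetical version of the flag: a grid of points labeled by whether they fall inside a circle of radius 3. This sketch measures training accuracy on the full grid rather than on a held-out third, so treat it as an illustration only. Logistic regression on raw `x` and `y` should do little better than always guessing the majority color. Adding hand-made squared columns should fix that, and an RBF `SVC` should get there on the raw coordinates alone. The choice of `gamma="auto"` (that is, 1/n_features) for these unscaled coordinates is an assumption of this sketch.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.svm import SVC

# Hypothetical mini-flag: label 1 inside a circle of radius 3, label 0 outside
grid = np.linspace(-5, 5, 41)
xx, yy = np.meshgrid(grid, grid)
X = np.c_[xx.ravel(), yy.ravel()]
y = (X[:, 0] ** 2 + X[:, 1] ** 2 <= 9).astype(int)

# 1) Logistic regression on raw coordinates: its boundary can only be a straight line
raw_acc = LogisticRegression().fit(X, y).score(X, y)

# 2) Logistic regression after adding hand-made x^2 and y^2 columns
X_sq = np.c_[X, X ** 2]
sq_acc = LogisticRegression(max_iter=1000).fit(X_sq, y).score(X_sq, y)

# 3) RBF-kernel SVM on the raw coordinates, no manual transformation
svm_acc = SVC(gamma="auto").fit(X, y).score(X, y)

print(f"logistic, raw x/y:       {raw_acc:.2f}")
print(f"logistic, plus x^2, y^2: {sq_acc:.2f}")
print(f"SVM (RBF), raw x/y:      {svm_acc:.2f}")
```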

When not to use it

So why not use SVM for everything? Well, unfortunately the magic of SVM is also its biggest drawback. The complex data transformations and the resulting decision boundary are very difficult to interpret. This is why it's often called a black box. GLMs and decision trees are exactly the opposite: it's very easy to understand what they are doing and why, at the expense of performance.
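Here is a rough illustration of the interpretability gap, using made-up data where the label is simply whether the first feature exceeds 5. A decision tree can be printed as if/else rules with scikit-learn's `export_text`, and logistic regression exposes one weight per feature. A fitted RBF `SVC`, by contrast, only offers its support vectors and their dual coefficients. Its `coef_` attribute is only available with a linear kernel.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier, export_text

# Made-up data (illustration only): label is 1 whenever the first feature is > 5
rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(200, 2))
y = (X[:, 0] > 5).astype(int)

tree_clf = DecisionTreeClassifier(max_depth=2).fit(X, y)
logit_clf = LogisticRegression().fit(X, y)
svm_clf = SVC().fit(X, y)

# Decision tree: human-readable if/else rules
rules = export_text(tree_clf, feature_names=["x", "y"])
print(rules)

# Logistic regression: one weight per feature plus an intercept
print("logistic weights:", logit_clf.coef_, "intercept:", logit_clf.intercept_)

# RBF SVM: a set of support vectors and dual coefficients, no per-feature weights
print("number of support vectors:", svm_clf.support_vectors_.shape[0])
print("dual coefficients shape:", svm_clf.dual_coef_.shape)
```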

More Resources

Want to know more about SVM? Here are a few good resources I've come across: