Data Science

Machine learning & data science for beginners and experts alike.- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Data Science

- :

- Life or Death: Feature Engineering for the Titanic...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

Coming from a background of building stats models and performing stats analysis in R and Python, I thought it would be a fun activity to showcase how one could do

all that coding within Alteryx. I was curious as to the ease of understanding the methodology from a visual perspective in Alteryx since coding can become quite cumbersome and unappealing to some. I also thought this could be used as a nice guide into predictive analytics, in terms of the methodology and thought process, for anyone that wants to get more involved in predictive but is hesitant on taking that first step.

I decided to use the well-known Titanic Kaggle dataset, and mimicked an R-blogger’s first crack at it, converting the R code to Alteryx. I’ll be doing the walkthrough in Alteryx in this blog, but if you’re curious about the R code that does the same thing, you can always refer to the R-blog and compare it. I’ll be keeping the Table of Contents numbering convention the same as an ease of reference. This is part 1 or the blog series where I'll cover feature engineering. Part 2 will explore missingness, and part 3 will conclude with prediction.

As a quick setup summary, the two data files are train.csv and test.csv. Train is the dataset we use to build a model and test is the dataset we use to predict. We’ll also create a submission.csv for our final results that we’ll use to upload to Kaggle to obtain our accuracy score. You’ll notice that there is a “Survived” variable in these datasets, which is the target variable. “Survived” exists in the train.csv but not in the test.csv, which is how we can differentiate these files. We’ll try to predict this variable with our model, and then use the submission.csv to see if we predicted Survivability accurately.

To follow along, I’ve attached the workflows at the bottom, but if you would like more of a challenge, build it out while reading to see if you can figure out the proper configurations.

1. Load the Data

Now, looking at the blog, the first thing to do is to load any necessary packages and the data and check out the variables. Fortunately, there are no extra packages you’ll need to load into Alteryx (Just make sure you have Predictive Tools Installed!), and bringing in data is as simple as bringing in an Input Tool. You can throw in a Browse Tool to see what we’re playing with, but we’ll do data exploration in this whole blog, so it’s not necessary. To get a description of the variables, this is what we have:

|

Variable Name |

Description |

|

Survived |

Survived (1) or died (0) |

|

Pclass |

Passenger’s class |

|

Name |

Passenger’s name |

|

Gender |

Passenger’s gender |

|

Age |

Passenger’s age |

|

SibSp |

Number of siblings/spouses aboard |

|

Parch |

Number of parents/children aboard |

|

Ticket |

Ticket number |

|

Fare |

Fare |

|

Cabin |

Cabin |

|

Embarked |

Port of embarkation |

We’ll be playing with the dataset in its entirety, so we’ll use a Union Tool and bring the train.csv and test.csv into one data stream.

2. Feature Engineering

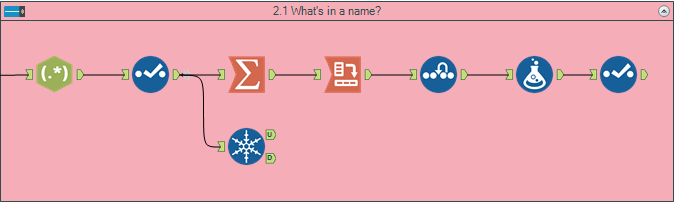

2.1 What’s in a Name?

Now into the fun stuff! We’re going to look at our current variables and see if we can obtain anything meaningful from them. This includes creating additional variables, or simply understanding distributions so we can understand which variables might be valuable for us.

The first thing that pops out is the Passenger Name. With a name, we can see a person’s status (Mr., Mrs., etc.) and their family (Surname). Using Regex, we can split up the variable into two extra fields, one containing the title, and one containing the Surname.

We’ll use a Summarize Tool to add up all the unique titles, and then Crosstab it by gender into a more readable table. Since the Crosstab brings in nulls for any values that are zero, we’ll throw in an Imputation Tool to correct for this. The output table is a bit cumbersome with so many unique titles, so we’ll just use a Formula Tool to combine all these Titles and then use a Select Tool to only choose the aggregated titles.

It looks like we have some elite upper class folk (all male, which is typical of the early 1900s) and can distinguish between married and single women.

Throwing in a Unique Tool, we can get the total records of unique Family Names, which is 875 out of 1309 records. We’ve got a lot of families, so let’s get cracking to see what we can find.

Note: The workflow will create an extra title that was not parsed out due to a data exception (which is corrected when aggregating all the titles together).

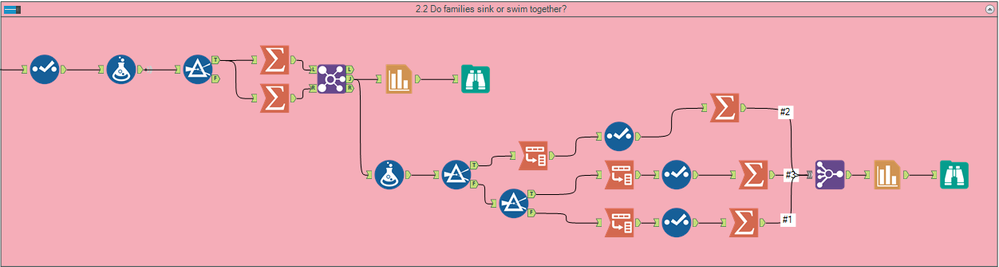

2.2 Do families sink or swim together?

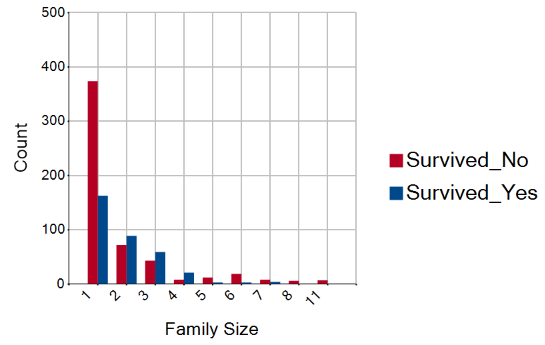

Now that we know that there are a good amount of families, we can create some more variables that look closer into this. First, let’s create a Family Size variable that combines the SibSp and Parch variable (and adding 1 for the individual as well) so we can see how big each person’s family is. To see how it affects survival, let’s plot this against our training data.

We’ll use two other formulas to create tags for if the person survived or not, filter out the test data, and use Summarize Tools to group everything by family size. After Joining them into a single data stream again, we’ll use the Charting Tool to see how Family Size affects survival.

It seems that people with no family (Family Size = 1) are more likely to not survive. Additionally, we can see that larger families don’t survive. To get a better sense of these family sizes, let’s create a discretized family size and group them into three categories: singleton, small, and large.

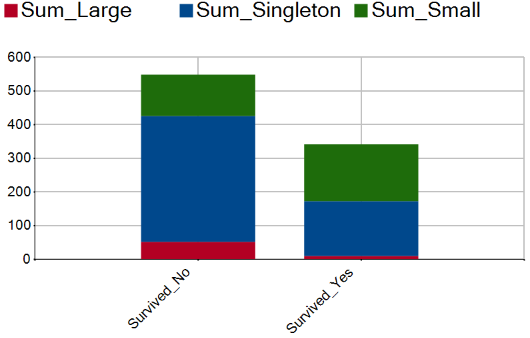

To do this we’ll use a Formula Tool to create the “Discretized Family Size” variable and filter among the three groups. Within each group we’ll use a Cross Tab on Survival and then use a Summarize to total the numbers. Afterwards, we’ll join the three data streams together and Chart it like before.

As we can see, Singleton and Large families have a survival penalty, while those with smaller family sizes have a survival benefit.

Note: You’ll notice the mosaic plot in the R-blog when analyzing the discretized family sizes. While not exactly the same, our stacked column graph tells us the same information.

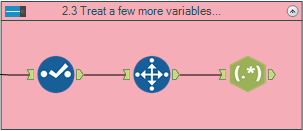

Treat a Few More Variables

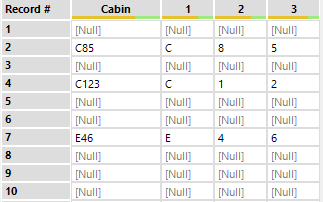

Looking at the Cabin variable, we might be able to infer some information about their deck. Using a Unique Tool, we’ll take a sample of the first 28 rows. We can split the string using Regex to get the deck letter and then room number.

We could try to look more into this, like multiple rooms listed, etc. but considering the sparseness of the field (77% null), we’ll just leave it as is.

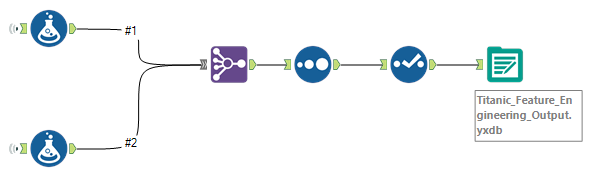

Note: Since we’re doing a multiple workflow problem, let’s create an output for section 2 that we’ll use as an Input for section 3. We’ll use Formulas to recreate the variables we created in previous steps and Union everything together.

Tune in next week when we'll cover Missingness.

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.