Book Review: Machine Learning with R

Machine Learning with R: Expert techniques for predictive modeling, by Brett Lantz, was recently updated to a third edition. My review...

A lot of people wouldn’t start a book called Machine Learning with R with the expectation of it being an engaging read. We pick it up because we need to learn some new modeling techniques, or we want to brush up on our R skills, or we simply need scary-seeming technical books on our desks to justify that expensive online data science course…

That wasn’t the case for me. I actively enjoyed this book.

Maybe it’s the suppressed anthropologist in me, appreciating the author Brett Lantz’s sociology background, which supplied practical examples that are meaningful. It’s probably because Lantz spends time on underserved algorithms like Naive Bayes and on black box methods like neural networks and support vector machines, while still covering bread-and-butter production processes like market basket analysis and time series forecasting.

It is absolutely the realistic, grounded view of the data science lifecycle (from data collection to model evaluation and improvement) and, more importantly, of how to get started with it. If you’re already a citizen data scientist, you will definitely get something out of this book. If you’re a data nerd with aspirations, this is your starting point.

Start with Ethics

It’s presumably the uprising anthropologist in me that believes ethics is the right place to start. Data leads to pattern recognition and pattern recognition leads to machine learning. Lantz covers this, as well as successes and pitfalls. But considering the ethics of machine learning and AI needs to happen first, not afterwards.

“[Machines] are pure intellectual horsepower without direction. A computer may be more capable than a human of finding subtle patterns in large databases, but it still needs a human to motivate the analysis and turn the result into meaningful action.”

Meaningful action.

The meaning comes from you. Whether you are providing value to your organization or researching data from social networking profiles to uncover depression in teenagers, humans are the ones who direct the study and apply the results. Do not blindly apply results!

Do not blindly email expectant mothers with retail offers! Do not let Reddit train your chatbot! Don’t improve economic fortune at the expense of homelessness and displacement!

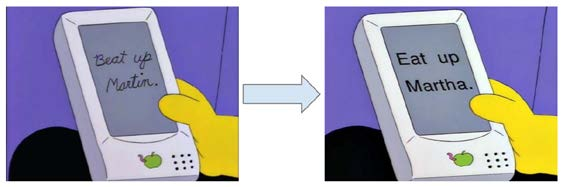

Seasoned statisticians and fresh-faced econometrics graduates all understand and appreciate a Simpsons reference. (It was the one parodying Apple’s “state-of-the-art” handwriting recognition, in which the tablet misinterpreted Dolph’s note to “Beat up Martin” as “Eat up Martha.”)

When building machine learning systems, it is crucial to consider how they may be influenced by a determined individual or crowd. Start there. Then, understand how machines learn, and the abstraction of an idea from data. We now have models that can decide what model is best for your data. That black magic witchery is great in black box applications, but how does it help you know what you’re doing?

Learning ML via R or Learning R via ML?

Why not both? If you’re starting from scratch with machine learning and/or R, this book has you covered. If you have a took-a-class-at-university-20-years-ago skillset, you will ramp up faster.

Without diving too deep into the weeds of R, this book outlines the fundamentals, and Lantz’s pace is spot-on. In every example, he details the nuances of data exploration and prep, model training, and model improvement for each algorithm, knowing that small choices in those steps and their parameters can matter.

Step-by-step R code examples demonstrate how to investigate data to determine the most appropriate method for normalizing variables, while teaching why each method is appropriate in different circumstances. Learning the summary statistics functions used to evaluate model performance reinforces model explainability skills.

What this book covers

Chapter 1, Introducing Machine Learning, presents the terminology and concepts that define and distinguish machine learners, as well as a method for matching a learning task with the appropriate algorithm.

Chapter 2, Managing and Understanding Data, provides an opportunity to get your hands dirty working with data in R. Essential data structures and procedures used for loading, exploring, and understanding data are discussed.

Chapter 3, Lazy Learning – Classification Using Nearest Neighbors, teaches you how to understand and apply a simple yet powerful machine learning algorithm to your first real-world task: identifying malignant samples of cancer.

Chapter 4, Probabilistic Learning – Classification Using Naive Bayes, reveals the essential concepts of probability that are used in cutting-edge spam filtering systems. You'll learn the basics of text mining in the process of building your own spam filter.

Chapter 5, Divide and Conquer – Classification Using Decision Trees and Rules, explores a couple of learning algorithms whose predictions are not only accurate, but also easily explained. We'll apply these methods to tasks where transparency is important.

Chapter 6, Forecasting Numeric Data – Regression Methods, introduces machine learning algorithms used for making numeric predictions. As these techniques are heavily embedded in the field of statistics, you will also learn the essential metrics needed to make sense of numeric relationships.

Chapter 7, Black Box Methods – Neural Networks and Support Vector Machines, covers two complex but powerful machine learning algorithms. Though the math may appear intimidating, we will work through examples that illustrate their inner workings in simple terms.

Chapter 8, Finding Patterns – Market Basket Analysis Using Association Rules, exposes the algorithm used in the recommendation systems employed by many retailers. If you've ever wondered how retailers seem to know your purchasing habits better than you know yourself, this chapter will reveal their secrets.

Chapter 9, Finding Groups of Data – Clustering with k-means, is devoted to a procedure that locates clusters of related items. We'll utilize this algorithm to identify profiles within an online community.

Chapter 10, Evaluating Model Performance, provides information on measuring the success of a machine learning project and obtaining a reliable estimate of the learner's performance on future data.

Chapter 11, Improving Model Performance, reveals the methods employed by the teams at the top of machine learning competition leaderboards. If you have a competitive streak, or simply want to get the most out of your data, you'll need to add these techniques to your repertoire.

Chapter 12, Specialized Machine Learning Topics, explores the frontiers of machine learning. From working with big data to making R work faster, the topics covered will help you push the boundaries of what is possible with R.

A Peek Inside Chapter 7: Black Box Methods – Neural Networks and Support Vector Machines

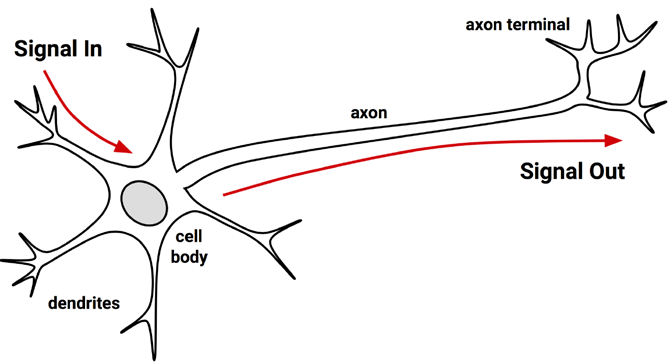

An artificial neural network (ANN) models the relationship between a set of input signals and an output signal, using a model derived from our understanding of how a biological brain responds to stimuli from sensory inputs. Just like a brain uses a network of interconnected cells called neurons to provide vast learning capability, the ANN uses a network of artificial neurons or nodes to solve challenging learning problems.

-Lantz 218

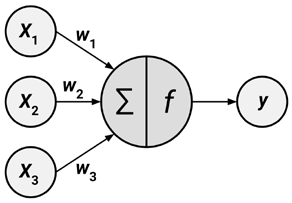

Although there are numerous variants of neural networks, each can be defined in terms of the following characteristics:

- An activation function, which transforms a neuron's net input signal into a single output signal to be broadcasted further in the network

- A network topology (or architecture), which describes the number of neurons in the model as well as the number of layers and manner in which they are connected

- The training algorithm, which specifies how connection weights are set in order to inhibit or excite neurons in proportion to the input signal

-Lantz 220

This is the straightforward, math-light framing of a neural network. Investigating each component brought me closer to a complete conceptual picture of the model. The activation function is the mechanism that determines how each neuron (essentially a computational unit) fires in response to its input. The topology is the pattern and structure of interconnected neurons. Adding layers increases the complexity of what the model can classify: a simple neural network can handle [hotdog, not-hotdog, other] with fewer basic pattern recognition components, while you’d need more layers for [cat, dog, other]. Particular nodes will be better at detecting different features (ears, tail, boop-able noses), so adding layers allows the network to be much more specific about the ears themselves, and tails, and all the adorable noses.
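To make the first two characteristics concrete, here is a minimal sketch of a single forward pass. It is written in Python rather than the book's R, and it is not Lantz's code: the function names, the logistic activation, and every weight are assumptions chosen purely for illustration.

```python
import math


def sigmoid(x):
    """Logistic activation: squashes any net input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def neuron_output(inputs, weights, bias, activation=sigmoid):
    """One artificial neuron: weighted sum of its inputs, then the activation."""
    net_input = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(net_input)


def forward(inputs, layers):
    """Pass a signal through the network, one layer at a time.

    Each layer is a list of (weights, bias) pairs, one pair per neuron,
    so the shape of `layers` is the network topology.
    """
    signal = inputs
    for layer in layers:
        signal = [neuron_output(signal, w, b) for w, b in layer]
    return signal


# Hypothetical topology: 2 inputs -> 2 hidden neurons -> 1 output neuron.
# The weights are made-up numbers; a training algorithm would set them.
layers = [
    [([0.5, -0.4], 0.1), ([0.3, 0.8], -0.2)],  # hidden layer
    [([1.2, -0.7], 0.05)],                     # output layer
]
prediction = forward([1.0, 0.0], layers)
print(prediction)  # a one-element list holding a value between 0 and 1
```

Adding another inner list to `layers` adds a layer, which is all "a more complex topology" means at this level of detail.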

The network topology is a blank slate that by itself has not learned anything. Like a newborn child, it must be trained with experience. As the neural network processes the input data, connections between the neurons are strengthened or weakened, similar to how a baby's brain develops as he or she experiences the environment. The network's connection weights are adjusted to reflect the patterns observed over time.

-Lantz 226
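The idea of weights being strengthened or weakened with experience can be sketched with the simplest possible learner: one linear neuron trained by gradient descent. This is a Python illustration under stated assumptions (made-up data, a squared-error loss, a hypothetical `train_neuron` helper), not the procedure the book or the neuralnet package uses.

```python
def train_neuron(samples, learning_rate=0.1, epochs=1000):
    """Fit y ~ w * x + b with one linear neuron, using gradient descent."""
    w, b = 0.0, 0.0  # the "blank slate": nothing has been learned yet
    for _ in range(epochs):
        for x, y in samples:
            error = (w * x + b) - y
            # For the loss 0.5 * error**2, the gradient is error * x
            # with respect to w and error with respect to b.
            w -= learning_rate * error * x
            b -= learning_rate * error
    return w, b


# Made-up training data generated from y = 2x + 1.
samples = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
w, b = train_neuron(samples)
print(w, b)  # should land close to w = 2 and b = 1
```

Each pass nudges the weight and bias in the direction that shrinks the error, which is the "adjusted to reflect the patterns observed over time" part of the quote.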

The example outlines estimating the strength of concrete. It is difficult to accurately predict the strength of the final product due to the wide variety of ingredients that interact in complex ways. Explains Lantz, “A model that could reliably predict concrete strength given a listing of the composition of the input materials could result in safer construction practices.”

Using data on the compressive strength of concrete, donated to the UCI Machine Learning Repository, Lantz walks the reader through exploring and preparing the data specifically for an artificial neural network (ANN). This includes scaling your data with a normalizing function, with a discussion (in a previous chapter) of which normalizing function to use based on your data. He explains how a technique called gradient descent determines how much each connection weight should change, an adjustment the model repeats over many iterations. For training the model, he recommends the neuralnet package for its easy-to-use implementation and functions to plot the network topology. Because that’s how we do it, right? Train, visualize, iterate.
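As a rough idea of what scaling with a normalizing function looks like, here is a sketch of min-max normalization, one common choice when a network expects inputs in a narrow range. It is Python rather than R; the helper names and the sample values are assumptions, not data from the book or the UCI dataset.

```python
def min_max_normalize(values):
    """Rescale values linearly so the smallest maps to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def denormalize(scaled, lo, hi):
    """Map scaled values back to the original units."""
    return [s * (hi - lo) + lo for s in scaled]


# Made-up ingredient amounts, for illustration only.
cement = [102.0, 540.0, 275.0, 380.0]
scaled = min_max_normalize(cement)
restored = denormalize(scaled, min(cement), max(cement))
print(scaled)
print(restored)
```

Keeping the original minimum and maximum around matters, because predictions made on the scaled data have to be converted back before anyone can read them as real-world units.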

I want to brag here for a moment: I now understand neural networks well enough to actually explain the concept to someone else (in terms of autonomous vehicles, one of Lantz’s interests). I was dying to do that here, actually, because I was so excited working through Lantz’s example, seeing the plots, and customizing a more complex topology capable of learning more difficult concepts.

But I want you to try! This book makes it so easy, providing the data and all the R code (in easily digestible snippets). Simply open up an R console and you’re practically already modeling.

Machine Learning with R wraps up with the not-so-beginner topics, but there are helpful tips and best practices everywhere. There are examples for working with domain-specific data, such as bioinformatics or social networks. He talks about specific R packages to work with very large datasets (data.table, ff and bigmemory) and how to speed up processing.

You know what? Upon reflection, I do need a copy of this book for my desk at work. When colleagues ask me how to get started with machine learning, I can hand them Machine Learning with R by Brett Lantz and be confident that they’ll get a solid foundation in the algorithms, some pro tips in R, and a good analytic mindset. And a refresher on ethics 😉
