Data Science

As a Lyft driver, how can I predict how much my next fare will be?

For my use case, I am a part-time Lyft driver and I want to predict how much I can expect to receive on my next fare. If I can better determine how much I can expect to receive, I can more easily set and reach my goals. Some predictors of how much my next fare will be include things like the ride distance, the time of day, the type of ride (whether it's a regular fare or a line/split fare) and customer demographics such as age and household income. I am guessing the ride distance is the best predictor, but I really want to know which of my predictors are most important or significant so that I can set better goals. For example, if time of day is a significant predictor of fare amount, then I might adjust when I drive so that I get higher fare amounts.

Part I - Starter kits and brain juice to the rescue

Speaking linearly

Okay fellow overachievers, here is a list of my nerd werds and an easy way to remember them.

- Variable - something that varies

- Predictor - something used to predict something else

- Target - something I want to target, like customer spend, or Lyft fare

- Independent - a variable that acts on its own without being changed by another variable, like age, household income, distance, browser type. Another word for predictor.

- Dependent - a variable that changes depending on other variables, like how much a customer spends could depend on their age, their household income, what browser they are using, how far away they are from the store. Another word for target.

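- Significant - unlikely to be a fluke; the relationship between a predictor and the target is probably real rather than due to chance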

- Coefficient - a multiplier

- X - the independent variables or predictors

- Y - the dependent variable or target

- Intercept - the value at which the fitted line crosses the y-axis (also known as the model constant)

Rosemary mint water and other supplies

My laundry list of stuff I need to get before starting my linear regression model:

- The Alteryx Predictive Analytics starter kit from Alteryx.com

- The latest Alteryx Designer and Alteryx Predictive Tools from licenses.alteryx.com.

- A shortcut to the starter kit folder at C:\ProgramData\Alteryx\DataProducts\DataSets\Content\Predictive_Analytics_Starter_Kit_Volume_1\Demand_Forecasting

- Review basic simple linear regression concepts by watching Simple Linear Regression on YouTube

- A crunchy snack and some rosemary mint water

Start simple and Mean it

My use case: how can I predict the next fare amount with a predictor of ride distance? I figure starting with a simple linear regression, before moving on to multiple linear regression, will help me wrap my tiny little monkey brain around the concepts.

Before I can consider one or more independent, or X, variables (like ride distance, attributes such as type of ride and time of day, or demographics including customer age or household income), I want to see if I can make a pretty good guess of what the next Lyft fare might be using fare amounts that I have already collected. To do this, I collect fare amounts from several Lyft rides. The only information I have is the fare amount. If I add an ID to each amount, then I have ID and fare amount. This is enough to build a simple model. If this were the only information I ever had, the best prediction of the next fare amount would be the mean.

To find the mean, or average, I added up all the fare amounts and divided by the number of rides. The result is $10.34, and it is my first guess. Time for munchies! Something about math makes me want to eat an entire pizza!
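Here is a minimal sketch of that first guess in Python. The fare amounts are made-up illustrative values, not my actual ride data, so the result will not match the $10.34 above.

```python
from statistics import mean

# Hypothetical fare amounts in dollars (illustrative only, not the real rides).
fares = [8.50, 12.25, 9.75, 14.00, 7.50]

# With no predictors at all, the mean is the best single guess for the next fare.
baseline_guess = mean(fares)
print(f"Baseline guess for the next fare: ${baseline_guess:.2f}")
```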

The chart shows my plotted data and where the mean falls. The mean is a pretty good guess for predicting my next Lyft fare.

This chart is pretty basic - it's at the zero end of predictive capabilities. I want to apply some g-force and go from zero to 99. Am I ready for the Alteryx hot sauce? Not yet.

To go to the next step, I apply a concept known as Ordinary Least Squares (OLS) linear regression. Why? In building a model, I want to know that my model is a good representation of my data. To validate (also known as score) a model, statistics has some tricks. These tricks have to do with measuring things like differences, also known as variations, residuals, and errors.

Noses and the point of OLS

What is the point of OLS? In my research, I discovered that residuals (the differences between each actual value and the predicted value) can be positive or negative, and if I just add them up they cancel each other out. Squaring fixes that, because multiplying a negative number by itself gives a positive number. Squaring also makes the big misses look super obvious: a value that is far from the prediction ends up with a much bigger squared residual than a value that is close. OLS then picks the line that makes the sum of those squares as small as possible. So like if one of my sisters has a rather large nose, and we squared it, it would be like a really super big nose.

More nerd werds

I lied. There are more nerd werds. Just a few.

- OLS - ordinary least squares linear regression.

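- R squared - a diagnostic metric derived from the sum of squared residuals (SSE). R squared = 1 - (SSE/SST), where SST = total sum of squares. SSE adds up the squared distance between each observed value and the value the model predicts; SST adds up the squared distance between each observed value and the mean. (When the model is just the mean, the two are the same.) A smaller SSE indicates the model fits the data more closely, which pushes R squared toward 1.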

- Variation - In regression models, there will always be differences, or variation, between the numbers we log (observed values) and the numbers the model produces (modeled values). The gaps between the two are the model errors, also called residuals.

- Equation of a straight line - This required a thorough cobweb cleaning of the algebra closet in my brain. The equation for any straight line is Y = a + bX. Applying this to my use case, Y is the Fare Amount (what I'm trying to predict), a is the intercept (or, how much is Y when X is at zero), b is the slope of the line, and X is the Distance.
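To make the R squared formula from the list above concrete, here is a small sketch. The function name `r_squared` and all the numbers are my own illustrative assumptions. It compares predictions from a line against predictions that are just the mean.

```python
def r_squared(observed, predicted):
    """Return 1 - SSE/SST for paired observed and predicted values."""
    mean_y = sum(observed) / len(observed)
    # SSE: squared distance between each observed value and its prediction.
    sse = sum((y - p) ** 2 for y, p in zip(observed, predicted))
    # SST: squared distance between each observed value and the mean.
    sst = sum((y - mean_y) ** 2 for y in observed)
    return 1 - sse / sst


# Hypothetical fares and two sets of hypothetical predictions.
fares = [6.0, 9.0, 11.0, 14.0]
line_predictions = [6.5, 8.5, 11.5, 13.5]  # from some fitted line
mean_predictions = [10.0] * len(fares)     # always guessing the mean

print(r_squared(fares, line_predictions))
print(r_squared(fares, mean_predictions))
```

Guessing the mean every time gives an R squared of zero, which is why the mean works as the "zero end" baseline that a regression line has to beat.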

Curiosity will conquer fear...

It looks like my residual sum is close to zero and my SSE is 406. In statistics, SSE is used to calculate the coefficient of determination (R squared). The slope (the rise over the run, the change in Y over the change in X) tells a related story: a slope that is clearly different from zero means the target changes when the predictor changes. How steep the slope looks depends on the units, though, so it is R squared, not steepness, that says how well the line fits.
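The residual sum and SSE can be checked by hand with a few lines of Python. This is a sketch using made-up fares, so the SSE here is not the 406 from my data.

```python
# Hypothetical fare amounts in dollars (illustrative only).
fares = [8.50, 12.25, 9.75, 14.00, 7.50]

mean_fare = sum(fares) / len(fares)

# Residual: how far each fare is from the mean (positive or negative).
residuals = [fare - mean_fare for fare in fares]

# The raw residuals cancel out, so their sum is (nearly) zero.
residual_sum = sum(residuals)

# Squaring removes the signs and exaggerates the big misses.
sse = sum(r ** 2 for r in residuals)

print(f"Sum of residuals: {residual_sum:.10f}")
print(f"Sum of squared residuals (SSE): {sse:.3f}")
```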

Short summary of simple linear regression

With my fare amount, the mean, the residuals, residuals squared, and a sum of the squares, I can envision a linear model that minimizes the sum of squares. This is like Pac-Man munching his way around a room, eating all of the squares. What I envision is a line that better fits my plotted chart (in statistics, this is known as best fit).
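For a single predictor, the best-fit line has a well-known closed-form solution, sketched below. The helper name `fit_line` and the miles and fare values are hypothetical. The sketch fits Y = a + bX and confirms that the line leaves a smaller sum of squares than the mean does.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical ride distances (miles) and fares (dollars).
miles = [1.0, 2.0, 3.0, 4.0, 5.0]
fares = [5.0, 7.5, 9.0, 12.5, 14.0]

intercept, slope = fit_line(miles, fares)

mean_fare = sum(fares) / len(fares)
sse_mean = sum((y - mean_fare) ** 2 for y in fares)
sse_line = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(miles, fares))

print(f"Fare = {intercept:.2f} + {slope:.2f} * Miles")
print(f"SSE using the mean: {sse_mean:.2f}, SSE using the line: {sse_line:.2f}")
```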

Fortunately, I don't have to take any more math classes or try to figure this out in a spreadsheet. Instead, I will use Alteryx Designer Predictive Analytics tools, specifically the Linear Regression and Score tools to help me with this.

Part II - Time for the top-fuel dragster

It's time to bring in the top-fuel dragster. Go from zero to 99 in .86 seconds! In Part I, I used only Lyft ride distance to determine the Lyft fare amount. There are other factors to consider in addition to distance. Things like time of day, type of ride, and customer demographics such as age and household income. Time to bring in the Linear Regression tool and a data set with multiple predictors (also called variables, or fields).

Sometimes you gotta have a plan

In this section I plan to actually create a data set, create a workflow, prep my data and check to see if it is ready for linear regression, then blend my data sets into a blended data set. Seems easy enough. Then in Part III, I will add new customer data to predict fares.

Ef Why Eye - IMHO - The Alteryx Predictive Analytics starter kit - is a friendly, self-paced, guided workflow that empowered me to feel pretty good about making a pretty good guess. I could not have completed my linear regression without it.

Prepare to face Judge Judy

I prepare my data and my workflow to face the judge (the Linear Regression output report). I want to bring in all possible relevant factors (known as predictors, independent variables, or X's) and let the Linear Regression tool's output report be the judge. But I don't want bad data, incorrect data types, or anything else that will upset the output report. I fear being scolded.

Bring all data sets into one house

The data sets are going to have to live together in one house because the Linear Regression tool is really a macro with a workflow running in the background. It will know what to do with my combined data set that includes all of my customer info and my transaction info.

Perfectionism and paranoia

I am hoping to create a perfect blended data set that will help me predict with confidence what my next Lyft fare will be. For example, if I only have fare amount and ride distance, I won't know if there are other just-as-significant contributing factors, such as time of day, weekday, weekend, what type of phone the customer was using, or even customer age or their household income.

I feel perfectionist paranoia setting in....to get the data blending part right, I simply followed the Alteryx Predictive Analytics starter kit guided workflow on "Preparing your data for linear regression". This is a workflow located in the following folder: C:\ProgramData\Alteryx\DataProducts\DataSets\Content\Predictive_Analytics_Starter_Kit_Volume_1\Demand_Forecasting\

My data set includes data similar to the starter kit (Products Sold, Order Attribution and Customer Information). Only my data is all about Lyft rides and fares. My Order Attribution data includes data that could possibly contribute to fare amount, such as time of day and type of ride. Maybe I will throw in some other less obvious attributes such as the rider's device just to see what happens. It's fun to play with data!

Needy linear regression

Okay, the Alteryx Designer Linear Regression tool might be just a little bit needy. I do some research and find out some basic tips and tricks:

- Speed up the workflow with the Select tool. Nobody appreciates a slow workflow. Data sets usually contain extra information not pertinent to the workflow. I used the Select tool to select only the fields I want and need.

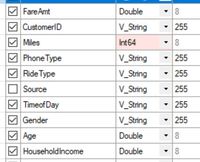

- Select the correct data type. According to this community post, data type Double is most precise. I set "FareAmt", "Age", and "HouseholdIncome" to Double. Since "Miles" is numeric, I set it to Int64 (a fractional distance such as 10.9 miles would need Double instead).

- Group by and sum what you want to predict. For example, my data set might have fare amounts by service type (e.g., SUV vs Limo) but I want total fare amount by transaction instead of service type. To do this, I use the Summarize tool to group my fare data by TransactionID, and then find the Sum or total amount of the transaction including the tip, to be able to fully predict fare amount at the transaction level.

- Pick an attribute. If I have attributes, such as fare amounts due to certain time periods (e.g., weekday vs weekend), and I want to predict my next fare amount by weekday or weekend, so I can decide when I want to drive, then I can use the Filter tool to filter out anything else.
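For anyone curious what those prep steps look like outside of Designer, here is a rough plain-Python equivalent of selecting fields, setting types, summarizing, and filtering. It is only a sketch: the rows are invented, and the "DayType" and "Notes" fields are hypothetical names I made up for the example.

```python
from collections import defaultdict

# Hypothetical raw rows; every value arrives as text, plus a field I don't need.
raw_rows = [
    {"TransactionID": "T1", "FareAmt": "9.50", "Miles": "4", "DayType": "Weekday", "Notes": "fare"},
    {"TransactionID": "T1", "FareAmt": "2.00", "Miles": "4", "DayType": "Weekday", "Notes": "tip"},
    {"TransactionID": "T2", "FareAmt": "15.25", "Miles": "7", "DayType": "Weekend", "Notes": "fare"},
]

# Select: keep only the fields I need and set their types.
selected = [
    {
        "TransactionID": row["TransactionID"],
        "FareAmt": float(row["FareAmt"]),  # like Double
        "Miles": int(row["Miles"]),        # like Int64
        "DayType": row["DayType"],
    }
    for row in raw_rows
]

# Summarize: group by TransactionID and sum the fare amounts (fare plus tip).
totals = defaultdict(float)
for row in selected:
    totals[row["TransactionID"]] += row["FareAmt"]

# Filter: keep only the rows for the attribute I care about.
weekend_rows = [row for row in selected if row["DayType"] == "Weekend"]

print(dict(totals))
print(weekend_rows)
```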

My blended data set looks like this and now I'm ready to go Linear!

This is the exciting part! We get to run our linear regression workflow, check the report to see which variables we should keep, score it, then bring in new customer data to get actual predictions! I see the future!

Part III - Create, tune, and apply a model to predict Lyft fare

My completed linear regression workflow and everything fun in between. Part A describes how I created and tuned my model. Part B describes how I added new customer data to output predicted fare amounts.

A. Create and tune a model to predict Lyft fare

I created my blended data set in Part II and now I want to bring in the Linear Regression tool and create a model.

1 - Show me the data. My first tool on my canvas is the Input Data tool. I connect this tool to my blended data set that includes transaction, attribute, and customer data.

2 - Less baggage; ID check. I want to speed up the workflow and de-select the fields I don't need like TransactionID so I make good use of the Select tool to select specific fields. This is also a second chance to set any of my data types. I check to see if Miles is set as Int64. I also keep CustomerID around in case I need it later.

3 - Fake it til you make it. I am not sure my model is going to work or not, so I use the Create Samples tool as a way to save off a random sample of my data set for validating my model. This will be used by the Score tool.

4 - See what sticks. My Linear Regression (LR) tool has three outputs – model object (O for Object), report output (R for report), and interactive output (I for interactive). These outputs will help me determine if my model is going to work or not. The LR tool is also where I get to select any variables I want – I can even select them all. My strategy is to start with all variables; I can always shed a few. Another fun part is I get to name my model anything I want – I choose NextFareModel. My target variable is always going to be Fare Amount, so everything else (except CustomerID - which goes straight to the bench as an obvious non-predictor) gets thrown in as predictors, or independent variables. Flashback and it's not even Friday! I can't help but think how much more sophisticated this model is compared to starting with a calculation of the average, or mean, of all the fare amounts.

5 - Open the portals. The Browse tools are like my little portals into the other world. Once I run my workflow, I can use the R and I outputs to see how good my predictors are. This is getting exciting! Report output is what I use to check out how good my model is and make it better. I repeat steps 4 and 5 a few times so I can tune it. My tuning process consists of checking out the report to see if my predictors are good. I start with all and end up only keeping a few. Sorry bathwater.
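To get a feel for what fitting a model with several predictors means (step 4), here is a bare-bones multiple regression in plain Python. It is not what the Linear Regression tool runs behind the scenes; it is a sketch that solves the least-squares normal equations directly. The helper names are my own, and the data is invented and generated from a known formula (fare = 2 + 1.5 * miles - 3 for a line ride) so the answer is easy to check.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented copy
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x


def fit_ols(predictor_rows, target):
    """Return [intercept, coef_1, coef_2, ...] minimizing the sum of squares."""
    design = [[1.0] + list(row) for row in predictor_rows]  # add intercept column
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * y for d, y in zip(design, target)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical rides: (miles, is_line_ride) where is_line_ride is 1 for a line/split fare.
predictors = [(2, 0), (4, 0), (6, 1), (8, 1), (10, 0), (3, 1)]
fares = [5.0, 8.0, 8.0, 11.0, 17.0, 3.5]

intercept, miles_coef, line_coef = fit_ols(predictors, fares)
print(f"Fare = {intercept:.2f} + {miles_coef:.2f} * Miles + {line_coef:.2f} * IsLineRide")
```

Ride type is a category, so the sketch turns it into a 0/1 column before fitting.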

Okay........ are my predictors significant - that is, are they any good? How to tell? So many reports, so little time.

Run it once and check for leaks

So I run the workflow then I view the I - Interactive report. Then I scroll down to the advanced statistics:

I use the Confidence column to sort by confidence. I look for the three-star significance code, which marks a p-value between 0 and 0.001. I look at the Coefficients table and I see that I have several variables that I might want to keep and others I probably want to remove from the model. I decide to keep Miles, Ride Type, and Household Income. I decide to remove Time of Day, Phone Type, Gender, and Age because these are not as significant:

It's hard to say good bye

After removing the insignificant variables, I run my workflow again. All I have left are the most significant variables - Miles, Ride Type, and Household Income. Now my model is running in 15.8 seconds – pretty speedy!
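The stars in the report summarize p-values, and behind each p-value is a t-statistic: the coefficient divided by its standard error. The sketch below works this out for a single predictor using invented numbers. It does not reproduce the Alteryx report, and the function name is my own. The bigger the absolute t-statistic, the smaller the p-value and the more stars a predictor earns.

```python
import math


def slope_t_statistic(xs, ys):
    """Return (slope, standard_error, t_statistic) for a one-predictor OLS fit."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    residual_variance = sse / (n - 2)  # two parameters were estimated
    standard_error = math.sqrt(residual_variance / sxx)
    return slope, standard_error, slope / standard_error


# Hypothetical ride distances (miles) and fares (dollars).
miles = [1.0, 2.0, 3.0, 4.0, 5.0]
fares = [5.0, 7.5, 9.0, 12.5, 14.0]

slope, standard_error, t_stat = slope_t_statistic(miles, fares)
print(f"slope={slope:.3f}, standard error={standard_error:.3f}, t={t_stat:.2f}")
```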

6 - Build trust. According to Tool Mastery – Score tool, I know I am going to need to trust my model and know that it is working. The Create Samples tool and the Score tool are good team players - they work well together. The Score tool is all about building better models. I use it to see if my blended data set is going to make a good model. The Score tool in this location is like a QA tester - it uses a random sample to see if new customer data is going to work. The Create Samples tool is explained in the online help documentation. I will have to rely on this Score tool again later on in my workflow, so I want to stay on its good side.

The Score tool has checked the accuracy of my model and predicted the fare amount of the validation set. Looking at the browse output from the Score tool, I see a mostly smooth slope with few outliers.

I'm pretty confident my model will work with new customer data and give me the predictions I seek. So, with my model in place and all tuned up, now I am ready to predict Lyft fare.
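The teamwork between Create Samples and Score boils down to a hold-out check: set aside some rides, fit on the rest, and see how close the predictions land on the rides the model never saw. Here is a minimal sketch of that idea. The 70/30 split, the seed, and the data are my own assumptions, and the fares are generated from an exact line so the result is easy to verify.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical rides as (miles, fare), built from fare = 2.50 + 1.75 * miles.
rides = [(float(m), 2.50 + 1.75 * m) for m in range(1, 11)]

# Like Create Samples: shuffle, then split into estimation and validation sets.
rng = random.Random(42)
shuffled = rides[:]
rng.shuffle(shuffled)
cut = len(shuffled) * 70 // 100
estimation, validation = shuffled[:cut], shuffled[cut:]

# Fit on the estimation rows only.
intercept, slope = fit_line([m for m, _ in estimation], [f for _, f in estimation])

# Like Score: predict the held-out rows and compare with the actual fares.
scored = [(fare, intercept + slope * miles) for miles, fare in validation]
rmse = (sum((actual - predicted) ** 2 for actual, predicted in scored) / len(scored)) ** 0.5

print(f"Validation rows: {len(validation)}, RMSE: {rmse:.6f}")
```

With real, noisy fares the RMSE would not be zero; it would tell me roughly how many dollars off a typical prediction is.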

B. Add new customer data and predict Lyft fare

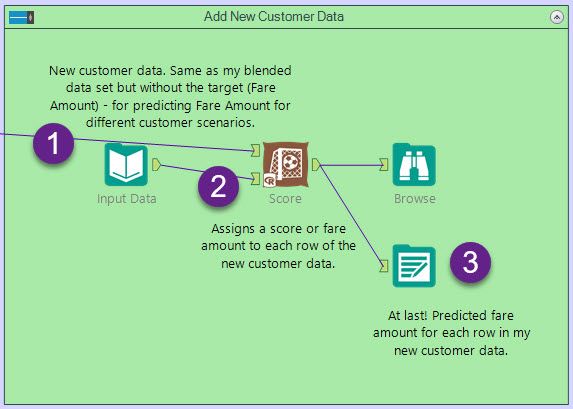

Now I’m ready to bring in new customer data, which is just a data set like my blended data set except that fare amount is unknown; that's what I'm trying to predict. Each row in my new customer data set represents a scenario. For example, for a customer riding 10.9 miles on a regular type fare with a household income of $30,300, I can predict the fare amount I am likely to receive as a driver.

Bring in new customer data to output predictions

1 - Hypothetical rider data. This is just like my blended data set only without the target (FareAmount).

2 - Predict (Score) – produces a predicted amount for each record it gets fed. IT EATS RECORDS!!! In mine, I am hooking it up to the validation data set – this will create a predicted fare amount. I can’t see the “score” or "prediction" for each row until I add another Browse tool. So maybe in the future this Score tool will totally just automatically add its own Browse tool. I said maybe.

3 - Worth the wait - a prediction at last! To start the machine and view the prediction, I run the workflow. It works! Just like a little program. It makes me feel like I've actually built an application. And ta-da! Predictions!
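Under the hood, scoring a new rider is just plugging their values into the fitted equation. The sketch below shows the arithmetic. The intercept and coefficients are made-up placeholders, not the values from my NextFareModel report, so the predicted amounts mean nothing beyond showing the mechanics.

```python
# Hypothetical model values for illustration only; the real ones come from
# the Linear Regression report.
intercept = 2.0
coefficients = {"Miles": 1.5, "RideType_Line": -3.0, "HouseholdIncome": 0.00001}


def predict_fare(rider):
    """Apply the linear equation to one row of new rider data."""
    is_line = 1.0 if rider["RideType"] == "Line" else 0.0
    return (
        intercept
        + coefficients["Miles"] * rider["Miles"]
        + coefficients["RideType_Line"] * is_line
        + coefficients["HouseholdIncome"] * rider["HouseholdIncome"]
    )


# New rider scenarios: same fields as the blended data set, minus the fare.
new_riders = [
    {"Miles": 10.9, "RideType": "Regular", "HouseholdIncome": 30300},
    {"Miles": 4.0, "RideType": "Line", "HouseholdIncome": 55000},
]

predictions = [predict_fare(rider) for rider in new_riders]
for rider, fare in zip(new_riders, predictions):
    print(f"{rider['Miles']} miles, {rider['RideType']}: predicted fare ${fare:.2f}")
```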

And that's linear regression - totally fun!!!!

Alteryx Technical Writer
