Maveryx Success Stories

Learn how Alteryx customers transform their organizations using data and analytics.STORIES WANTED

Showcase your achievements in the Maveryx Community by submitting a Success Story now!

SUBMISSION INSTRUCTIONS- Community

- :

- Community

- :

- Learn

- :

- Success Stories

- :

- Dairy Queen’s Automated Spatial Analysis Saves $70...

Dairy Queen’s Automated Spatial Analysis Saves $700K Over Time

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

Overview of Use Case

American Dairy Queen is a chain of fast-food restaurant with over 4,000 stores in the US. The Restaurant Development Strategy & Analytics team applied the Spatial tools to determine which of vendors were closest to each store location. Converting the procedure to an Alteryx workflow changed a 4-person, 41,000-hour process into a single, repeatable workflow. If we were to compare the previous project hours – over 4,000 hours – to our new process, the saved project hours for this one project pay for my Alteryx license and over 97% savings in project hours.

In our produce program if there is a recall on anything, we must be able to track the produce in order to protect our consumers. In the past, it's been a challenge to do that because we're a franchise organization, so you're relying on the franchisees to properly track their produce.

Within the first couple of months of me arriving to DQ and learning Alteryx, I was asked by an operations leader to talk through a project they were starting. They said, “we want to know how many of the secondary source produce people are nearby each store location.” We needed it to be consistent throughout our franchise system, we needed to make sure that in the event of a recall we can act quickly, and we wanted to make sure that we can update this whenever we needed to.

Original process for proximity analysis

- Create list of existing, operating DQ locations – roughly 4,500 in the US

- Look up a single DQ location on a map

- Manually search the vendor spreadsheet to identify the closest 20 vendors

- Cross check closest suspected vendors on map of the single DQ location

- Manually input each vendor on spreadsheet corresponding to the store they would service

- Repeat this exercise as stores open, close, or relocate

- Total employees required: 4

- Total initial hours: 4,160

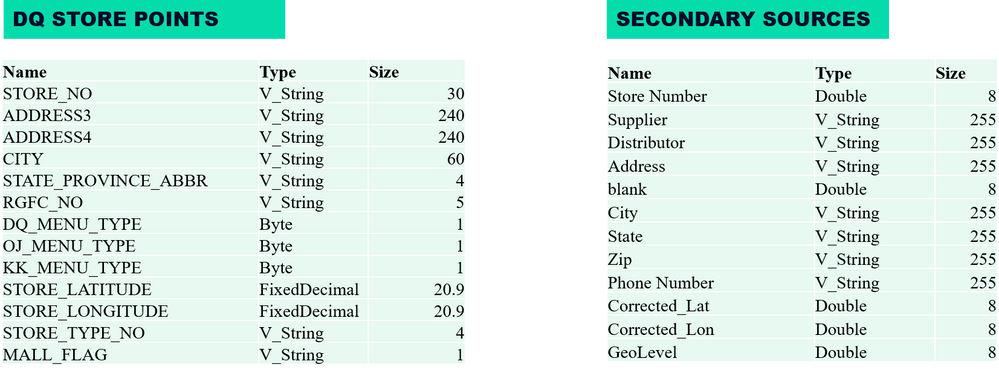

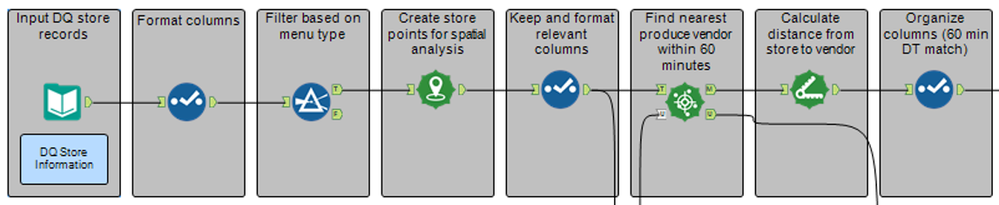

- The input data for the module was relatively basic – DQ store locations and a list of the vendors designated as secondary sources.

- We already had the DQ locations geo-coded and the spreadsheet of vendors was being maintained – since that was the original process for the project.

- The data needs to be turned into points so we can find the nearest vendors.

- This find nearest tool is asking the module to find the closest 25 store points within a 60-minute drive time. Because we want to later sort the data based on drive time and mileage, we next need to calculate the distance between the DQ location and the vendors within that 60-minute drive time.

- Also, notice the unmatched path that comes out of the find nearest tool. These are records that do not fit the criteria- stores that do not fall within a 60-minute drive time of that vendor.

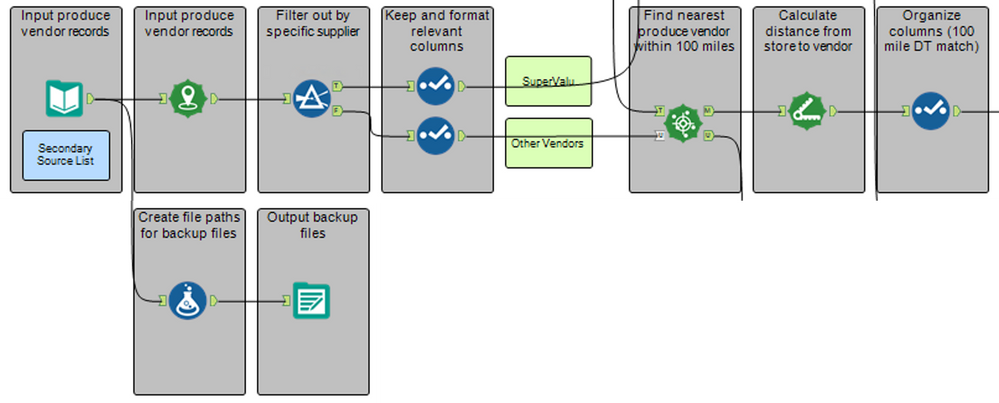

- We pull in the master vendor list and create points for each of the locations.

- Based on the specific supplier, we then filter – one supplier will have that 60-minute drive time we previously viewed, the other vendor will have a 100 mile radius.

- Our end goal is to have one spreadsheet as a master vendor list.

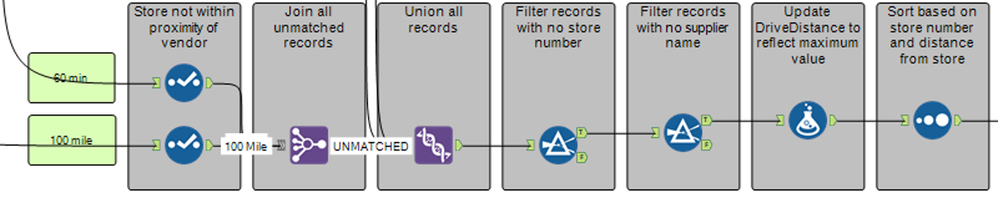

- We need to join both the 60 minute and 100 mile unmatched records into one stream.

- We union the matched and unmatched records into one file and filter out any data abnormalities- no store numbers or supplier names.

- Next, for those records that maxed out the limit in set distance, we set their distance at 100 to allow for a cleaner sorting process.

- The data is then sorted by store number and distance from store- closest to furthest.

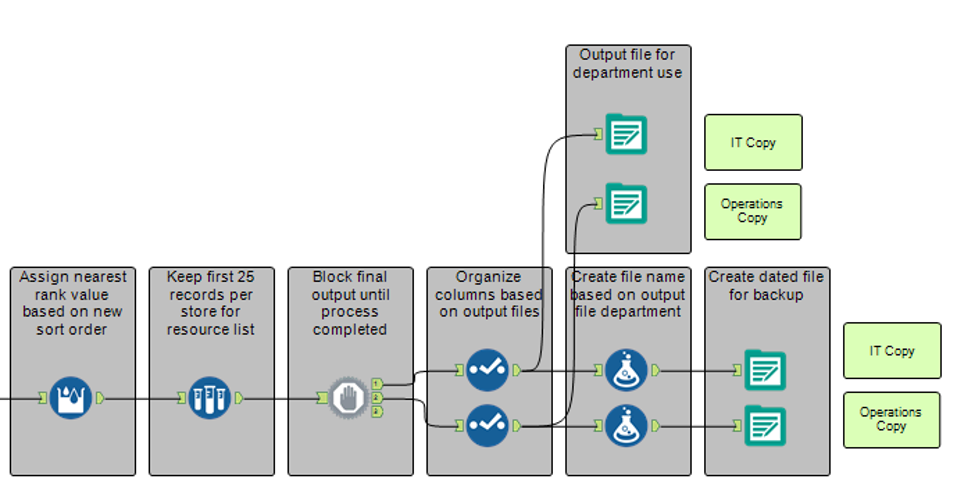

- The final part of the module assigns a rank order to each record by store number.

- Because we only want the 25 closest locations, we need to sample only the top 25 records by DQ store number.

- To prevent any errors while trying to write to the output Excel files, a Block Until Done tool is added in.

- Next the data is organized based on the output audience and then saved as an Excel file.

- I also have this set up to automatically email the group when the files are ready, which saves me from having to remember to send an update to the group.

This was one of my first major modules to build, so it took a few weeks to get it running well. We also loved the flexibility of being able to change the variables as needed. While preparing for another presentation last year, I organized and further optimized the module resulting in a run time of 30 minutes.

|

INITIAL BUILD OUT

|

OPTIMIZED |

|

• Roughly 2-3 weeks to build • Output of over 35,000 records • Originally ran in 4 hours

|

• Optimized module over the years • Final output of over 34,000 records • Now runs in 30 MINUTES!

|

The benefit we saw, even when the module was running for 4 hours, was astounding compared to the previous process. If we were to compare the previous project hours – over 4,000 hours – to our new process, the saved project hours for this 1 project pay for my Alteryx license. We can now run the module quarterly – or as needed. If we round up to 30 minutes for the module to run quarterly – 2 hours total – that is only 122 hours. Over 97% savings in project hours.

The hours that would have been spent over the last 9 years since we’ve been using this workflow:

|

Old Process |

New Process |

|

|

Frequency |

Annually |

Quarterly |

|

Number of People |

4 |

1 |

|

Total Hours Initially |

4,160 |

122 |

|

Total Hours Cumulative |

37,440 |

138 |

If we had been doing it by hand during that time – that is well over $700K in hours spent running this analysis by hand. On the other hand, by automating that process in Alteryx, we’ve only spent 16 additional hours (excludes year 1) running the workflow.

The entire PowerPoint presentation can be found here. Additionally, visit this link to watch the entire recorded session.

-

Adobe

2 -

Alteryx Connect

5 -

Alteryx Designer

202 -

Alteryx for Good

1 -

Alteryx Promote

2 -

Alteryx Server

66 -

Alteryx using Alteryx

29 -

Americas

158 -

Analyst

108 -

Analytics Leader

54 -

Asia Pacific

29 -

AWS

9 -

BI + Analytics + Data Science

100 -

Business Leader

37 -

C-Leader

18 -

Data Prep + Analytics

230 -

Data Science + Machine Learning

113 -

Data Scientist

14 -

Department: Other

14 -

Education

18 -

Energy + Utilities

5 -

Europe + Middle East + Africa

58 -

Experian

2 -

finance

29 -

Financial Services

33 -

Healthcare + Insurance

21 -

Human Resources

19 -

Information Technology

25 -

IT

31 -

Life Sciences + Pharmaceuticals

3 -

Manufacturing

20 -

Marketing

16 -

Media + Entertainment

12 -

Microsoft

52 -

Operations

38 -

Other

10 -

Process Automation

59 -

Professional Services

69 -

Public Sector

15 -

Qlik

1 -

Retail + CPG

32 -

Sales and Service

24 -

Salesforce

9 -

SAP

11 -

Snowflake

6 -

Tableau

71 -

Tech Partner: Other

86 -

Technology

34 -

Telecommunications

5 -

Teradata

5 -

Thomson Reuters

1 -

Transportation + Logistics

25 -

Travel + Hospitality

4 -

UiPath

1

- « Previous

- Next »