Alteryx Server Knowledge Base

Alteryx Server High Availability Environment

07-13-2022 12:37 PM

Introduction

Alteryx Server is a cloud-hosted or self-hosted application for providing self-service analytics to the business. In a Server deployment, users can publish Designer Workflows, Macros, and Analytic Apps to a private Server hosted on your company's server infrastructure, or in the cloud. Once published, these workflows can be scheduled or shared with other users to collaborate and view results.

Alteryx Server environments often support hundreds of users and are relied on to process business critical applications. Therefore, minimizing downtime of the Alteryx Server environment is critical. In this article we will look at options for providing a highly available Alteryx Server environment.

High Availability and Alteryx Server

High availability (HA) is a characteristic of a system intended to maximize uptime and minimize recovery time and data loss from a system failure.

The four primary components within Alteryx Server are the Controller, Worker, Gallery, and Persistence Layer. The Controller serves the role of orchestrator. It manages the job queue and schedules. The Worker executes Alteryx workflows. The Gallery supports a web application, web server, and APIs. The Persistence Layer (MongoDB) stores application data. Implementing Alteryx Server HA implies deploying an architecture that will allow each of these components to continue to function when a system failure occurs.

The techniques used to increase system availability and eliminate a single point of failure for Alteryx Server include replicating nodes (i.e., machines) in an active or passive mode, using third-party technology for Controller node failover, and implementing a distributed Persistence Layer.

While maximizing system availability seems intuitive, deploying system components on multiple redundant nodes comes with increasing costs and administration complexity. Each organization will need to decide the degree of availability for their Alteryx Server environment that is suitable for their needs based on service level agreements (SLAs), and acceptable cost and complexity.

Larger deployments may already consist of horizontally replicated Gallery, Worker, and Persistence Layer nodes in order to meet performance and scalability requirements. In this case, adding HA for the Controller may complete the HA architecture. Conversely, in small environments, implementing a single passive node with automated third-party failover and a distributed Persistence Layer may provide the necessary HA capabilities.

This paper presents two examples for implementing high availability for Alteryx Server. The first example shows a larger deployment with replicated nodes. The second example applies to smaller deployments with fewer nodes. Other variations are possible based on customer-specific requirements. The decision to distribute Alteryx Server components on one or multiple nodes based on processing capacity requirements should be determined through a Server Sizing activity. Contact your Alteryx Representative for more information.

Alteryx Server HA Example for Larger Deployments

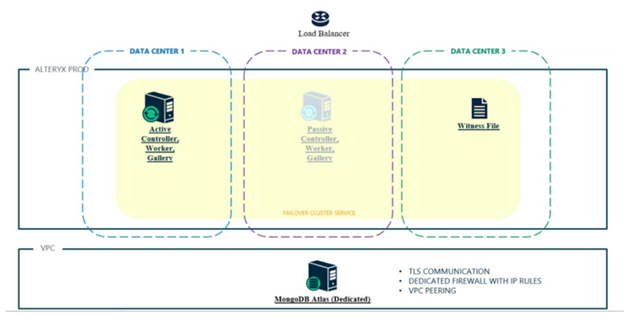

Figure 1 shows an example of distributing Alteryx Server components across multiple replicated tiers and data centers. The need for multiple nodes can be determined based on Server Sizing analysis, HA requirements, or a combination of both. How much protection to build against data center outages is a customer decision.

Figure 1: Distributed Alteryx Server HA deployment utilizing a user-managed MongoDB database

Controller HA is enabled in this example by deploying a primary Controller machine and two standby Controller machines. Standby means the machines are running but the Alteryx Service is not started. Only one Controller may be active at a time. Automated failover from the primary Controller to one of the standby Controllers is performed by third-party software such as Microsoft Failover Clustering. Microsoft recommends three or more members in a cluster to guarantee survival after a node failure. See Understanding cluster and pool quorum for more information. See the Alteryx Community article High-Availability Controller for information on implementing Controller HA with Microsoft Failover Clustering.

Worker HA is enabled by deploying multiple Worker nodes. These can be in the same or different data centers depending on HA requirements. When Worker Tags are used, a best practice is to assign each Worker Tag to at least two Workers to provide redundancy.
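The Worker Tag guideline lends itself to a quick inventory check. The sketch below is illustrative only: the host names, tag names, and the `under_replicated_tags` helper are hypothetical, and the inventory is typed in by hand rather than read from Alteryx Server.

```python
from collections import defaultdict


def under_replicated_tags(worker_tags, minimum=2):
    """Return tags assigned to fewer than `minimum` Workers, sorted by name."""
    workers_per_tag = defaultdict(set)
    for worker, tags in worker_tags.items():
        for tag in tags:
            workers_per_tag[tag].add(worker)
    return sorted(
        tag for tag, workers in workers_per_tag.items() if len(workers) < minimum
    )


# Hypothetical inventory: Worker host name -> Worker Tags assigned to it.
inventory = {
    "worker-01": ["finance", "spatial"],
    "worker-02": ["finance"],
    "worker-03": ["r-tools"],
}

print(under_replicated_tags(inventory))  # ['r-tools', 'spatial']
```

Any tag the check reports would stop being served if its only Worker failed.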

Gallery HA is enabled by deploying multiple Gallery machines. A third-party load balancer is required to direct traffic to the Gallery machines.
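To illustrate why multiple Gallery machines behind a load balancer improve availability, here is a toy round-robin selector that skips nodes marked as down. It is a conceptual sketch, not a substitute for a third-party load balancer; the `RoundRobinBalancer` class and the node names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Toy round-robin selector that skips nodes marked unhealthy."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.healthy = set(self.nodes)
        self._ring = cycle(self.nodes)

    def mark_down(self, node):
        self.healthy.discard(node)

    def mark_up(self, node):
        if node in self.nodes:
            self.healthy.add(node)

    def next_node(self):
        """Return the next healthy node, or raise if none are available."""
        # One full pass over the ring is enough to find a healthy node.
        for _ in range(len(self.nodes)):
            node = next(self._ring)
            if node in self.healthy:
                return node
        raise RuntimeError("no healthy Gallery nodes available")


# Hypothetical Gallery host names.
balancer = RoundRobinBalancer(["gallery-01", "gallery-02"])
balancer.mark_down("gallery-01")
print([balancer.next_node() for _ in range(3)])
# ['gallery-02', 'gallery-02', 'gallery-02']
```

With a single Gallery machine, marking it down would leave no node to route to, which is the single point of failure the replicated tier removes.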

Persistence Layer HA in this example is enabled by deploying a distributed MongoDB with replication using either a user-managed MongoDB or the MongoDB Atlas database service. A user-managed MongoDB instance is installed, configured, and managed on infrastructure remote to Alteryx Server by the customer’s IT team. Conversely, as a database service, MongoDB Atlas eliminates the need to procure infrastructure and can reduce administration. See the MongoDB Atlas documentation for information on potential benefits and cloud deployment options.
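A replicated MongoDB deployment is normally addressed with a connection URI that lists every replica set member, so that clients can still find the database when one member is down. The sketch below builds such a URI in the standard MongoDB format. The host names, the replica set name `rs0`, and the database name `exampledb` are placeholders, and how the resulting string is entered into Alteryx Server is outside the scope of this sketch. MongoDB Atlas provides its own connection string, so this applies mainly to user-managed deployments.

```python
from urllib.parse import quote_plus


def replica_set_uri(hosts, replica_set, database, user=None, password=None):
    """Build a standard MongoDB connection URI listing every replica set member."""
    credentials = ""
    if user is not None and password is not None:
        # Percent-encode credentials so characters such as '@' or '/' are safe.
        credentials = f"{quote_plus(user)}:{quote_plus(password)}@"
    members = ",".join(hosts)
    return f"mongodb://{credentials}{members}/{database}?replicaSet={replica_set}"


# Placeholder hosts for a three-member replica set.
uri = replica_set_uri(
    hosts=[
        "mongo-01.example.com:27017",
        "mongo-02.example.com:27017",
        "mongo-03.example.com:27017",
    ],
    replica_set="rs0",
    database="exampledb",
)
print(uri)
```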

The Alteryx Server for High Availability license is used with standby machines to mirror a production Controller machine or deploy a disaster recovery environment. In this example, each HA Controller node would require one HA license. The Alteryx Service on the standby machines would be started only in the event the production environment becomes unavailable. Microsoft Failover Clustering will start the Alteryx Service on a standby Controller should the primary Controller become inactive. All other Worker and Gallery machines in the production environment use standard licensing. User-managed MongoDB and MongoDB Atlas are licensed from MongoDB, Inc.

Alteryx Server HA Example for Smaller Deployments

Figure 2 shows an example of a single-node Controller/Worker/Gallery environment with a distributed MongoDB. One passive Controller/Worker/Gallery node combined with a witness file and the distributed MongoDB provides the HA capabilities.

Figure 2: Alteryx Server HA example deployment utilizing MongoDB Atlas

In this example, the HA Controller, Worker, and Gallery components are deployed on a single passive node. As with the previous example, Microsoft Failover Clustering is used to switch from the primary to the standby Controller should the primary Controller become inactive. However, in this example, a witness file is used instead of a second passive Controller node. When a witness file is used, the cluster can only survive one Controller node failure; however, the infrastructure and Alteryx HA license for a second standby Controller are avoided. See Understanding cluster and pool quorum for more information on the use of a witness file. A third-party load balancer is required to direct traffic to the Gallery machines.
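The role of the witness can be seen with simple vote counting. The sketch below assumes a static majority model in which each node holds one vote, a witness adds one more, and the cluster stays up while a strict majority of votes remains. The `tolerated_failures` helper is hypothetical, and the model ignores features such as dynamic quorum, so treat it as an illustration of majority voting rather than a description of how Microsoft Failover Clustering behaves in every case.

```python
def tolerated_failures(nodes, witness=False):
    """Votes a static majority-vote cluster can lose at once and stay up.

    Each node holds one vote and an optional witness adds one more.
    """
    votes = nodes + (1 if witness else 0)
    majority = votes // 2 + 1
    return votes - majority


print(tolerated_failures(3))                # 1: three Controller nodes
print(tolerated_failures(2, witness=True))  # 1: two Controller nodes plus a witness
print(tolerated_failures(2))                # 0: two Controller nodes, no witness
```

Under this model, two Controller nodes without a witness cannot lose either vote, which is why the smaller deployment adds the witness file rather than relying on the two nodes alone.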

As in the previous example, a user-managed MongoDB or MongoDB Atlas is required to provide database high availability. In smaller deployments, the MongoDB Atlas database service may be preferred because the customer does not need to deploy machines and install software for the database. Also, some administration tasks such as performing backups are provided through MongoDB Atlas.

The Alteryx Server for High Availability license is used in this example to license the passive Controller/Worker/Gallery node. MongoDB Atlas and user-managed MongoDB are licensed from MongoDB, Inc.

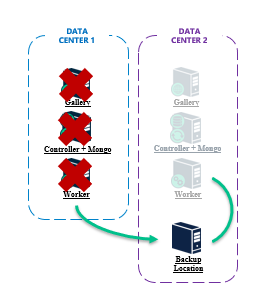

Disaster Recovery (DR)

Disaster recovery is related to high availability but is a different concept. Disaster recovery is a method of regaining access to applications after an outage such as a cyber-attack or hardware failure. This may be part of a larger business continuity plan. Techniques to implement disaster recovery typically involve taking frequent backups of the production environment and storing the backups in a separate location. Should a failure occur in the production environment, the backups are restored to the disaster recovery environment. Although this method can accommodate any failure, downtime will occur while the backups are restored to the DR environment.

Figure 3: Alteryx Server Disaster Recovery example deployment

The Alteryx Server for High Availability license is used with standby machines for disaster recovery. The Alteryx Service on the standby machines would be started only in the event the production environment becomes unavailable. Note that each Alteryx Server for HA license covers one individual machine. Therefore, if you had a 3-machine production deployment and wanted 3 additional machines in a DR data center that are used only in the case of a production data center failure, 3 Alteryx Server for HA licenses would be required.
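The licensing rule above amounts to counting standby machines. A minimal sketch, assuming one HA license per standby machine as described; the machine names and the `ha_licenses_required` helper are hypothetical, and actual licensing should be confirmed with your Alteryx representative.

```python
def ha_licenses_required(machines):
    """Count standby machines, assuming one HA license per standby machine."""
    return sum(1 for machine in machines if machine["standby"])


# Hypothetical 3-machine production deployment mirrored in a DR data center.
deployment = [
    {"name": "prod-controller", "standby": False},
    {"name": "prod-worker", "standby": False},
    {"name": "prod-gallery", "standby": False},
    {"name": "dr-controller", "standby": True},
    {"name": "dr-worker", "standby": True},
    {"name": "dr-gallery", "standby": True},
]

print(ha_licenses_required(deployment))  # 3
```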

Conclusion

Implementing high availability and disaster recovery architectures for Alteryx Server helps ensure that it can continue to serve business-critical needs despite infrastructure failures and disruptions. If you have any questions about implementing HA and DR for Alteryx Server, including licensing, please contact your Alteryx representative.
