Dearest colleagues and comrades (Romans, countrymen?):

Have you ever queued up your jobs only to have them block your regularly scheduled programming? Imagine a world where, assuming you have multiple worker nodes, you can direct and prioritise your jobs on your terms.

This is what I am suggesting.

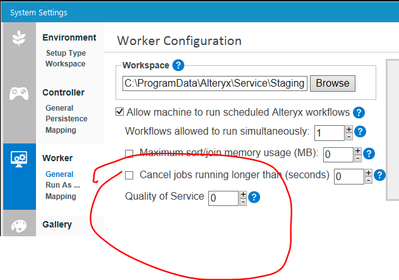

I've recently worked with Alteryx support, and they turned me on to a QoS setting in Alteryx Server settings. Peep this like a marshmallow chick in hot pink:

Thanks to the great Server Master Kevin Powney (blessed be his name), I learnt that there are currently 3 'channels' that this QoS variable governs: 0, the highest priority, is used for workflows; 4 is used for chained apps; and 6 is used for gallery service requests.

This will not do.

Why? Well, for one: hello arcane/memorisation.

Secondly, where is my control? I'm a millennial, dammit! Service me!

So, my idea, which I want you to vote up so highly as to save yourself and myself a lot of hassle, is to allow a custom QoS variable to act as a traffic variable. And here's how it works:

- For each workflow/app, you can assign it whatever QoS/traffic variable you choose.

- This gives the user the power to control which 'lane' is clogged in the queue.
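
For the code-minded, here's roughly what I picture, as a toy Python sketch. To be loud about it: none of this is Alteryx's actual API or internals. `LaneQueue`, `submit`, `next_job` and the job names are all made up by me purely to illustrate one queue per lane.

```python
from collections import defaultdict, deque


class LaneQueue:
    """Hypothetical model: one FIFO queue per user-chosen QoS lane."""

    def __init__(self):
        # lane number -> jobs waiting in that lane, oldest first
        self._lanes = defaultdict(deque)

    def submit(self, job_name, lane=0):
        """Queue a job in the lane its owner picked (0 if they picked none)."""
        self._lanes[lane].append(job_name)

    def next_job(self, lane):
        """Return the oldest waiting job in this lane, or None if it is empty."""
        queue = self._lanes.get(lane)
        return queue.popleft() if queue else None


q = LaneQueue()
q.submit("weekend_db_pull", lane=0)   # network-heavy job
q.submit("heavy_maths", lane=1)       # CPU-heavy job
q.submit("monday_report", lane=0)

first_cpu_job = q.next_job(1)       # only looks at lane 1
first_network_job = q.next_job(0)   # only looks at lane 0
nothing_here = q.next_job(2)        # nobody uses lane 2
```

The point of the design: a pile-up in lane 1 never stands in front of whatever is waiting in lane 0.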

Example time! [cheers, candy thrown]

In my current situation, we have some jobs that are network-intensive (database calls), and jobs that are processing-intensive (CPU hogs doing hard-core maths).

The network-intensive jobs run on the weekends so that we have the data in the morning on Monday. The processing-intensive jobs need to finish, but suck up all the CPU power. For the last couple of weeks, we've tried and failed to run these jobs over the weekend. The problem is that, since we cannot control how traffic flows (and we only have the 0/4/6 options in QoS, of which these jobs only fit in the 0 lane [breath, sorry]), the CPU-heavy jobs have blocked the more critical network jobs.

If we had two paths, we could assign the CPU jobs to lane 1 and the network jobs to lane 0, and they could run in parallel. And then my boss is happy. We like happy bosses, right?
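
To put some (entirely invented) numbers on it, here's a back-of-the-envelope simulation in Python. Assumptions, all mine: two workers, every job submitted at time 0, durations in made-up hours, and a hypothetical `simulate` function where each worker only pulls jobs from the lanes it has been told to serve. It is a sketch of the idea, not how Alteryx Server schedules anything.

```python
import heapq


def simulate(jobs, worker_lanes):
    """Toy scheduler. jobs: (name, lane, duration) in submission order.
    worker_lanes: one set of lanes per worker. Returns {name: finish_time}."""
    pending = list(jobs)
    finish = {}
    # Min-heap of (time the worker becomes free, worker index)
    free_at = [(0, w) for w in range(len(worker_lanes))]
    heapq.heapify(free_at)
    while pending and free_at:
        now, w = heapq.heappop(free_at)
        # Oldest pending job in a lane this worker serves
        idx = next(
            (i for i, job in enumerate(pending) if job[1] in worker_lanes[w]),
            None,
        )
        if idx is None:
            continue  # nothing left for this worker, so it goes idle
        name, _lane, duration = pending.pop(idx)
        finish[name] = now + duration
        heapq.heappush(free_at, (now + duration, w))
    return finish


# Hypothetical weekend: the CPU hogs get queued ahead of the database pulls.
jobs = [("cpu_a", 1, 10), ("cpu_b", 1, 10), ("net_a", 0, 2), ("net_b", 0, 2)]

# Today, in effect: every worker takes whatever is next in line.
shared = simulate(jobs, [{0, 1}, {0, 1}])
# The idea: one worker per lane.
laned = simulate(jobs, [{1}, {0}])

print("shared:", shared)
print("laned: ", laned)
```

In this made-up run the last network job lands at hour 12 with the shared queue and at hour 4 with lanes. The trade-off is that the second CPU job finishes later (hour 20 instead of 10), which is exactly the kind of call I'd like to be allowed to make myself.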

Vote this up! My boss is awesome!

Thanks for your ear,

Hail Caesar or something.

-Cedric