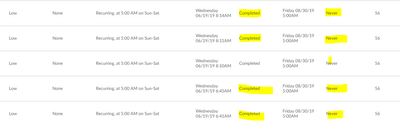

Alteryx issue: Schedule not running at its next scheduled time (it shows State = Completed, Next Run = Never)

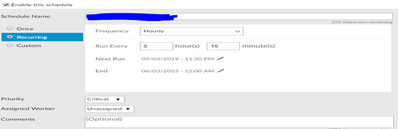

We have Alteryx jobs scheduled to run every 10 minutes. After a few iterations, Next Run suddenly changed to Never and State changed to Completed following the last run.

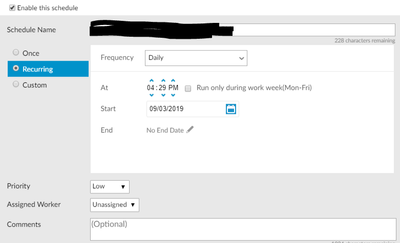

We tried setting an end date, but the issue persists.

Please advise: why is this issue occurring?