
Tool Mastery | Neural Network


09-17-2018 07:19 AM - edited 08-03-2021 03:37 PM

The Neural Network Tool in Alteryx implements functions from the nnet package in R to generate a type of neural network called a multilayer perceptron. A neural network is a predictive model that can estimate continuous or categorical variables. Loosely inspired by brains, neural networks are composed of densely interconnected nodes (called neurons) organized in layers. They pass predictor variables through the connections and neurons that make up the model to create an estimate of the target variable. By design, the neural network models generated by this tool are feed-forward (meaning data flows in only one direction through the network) and include a single hidden layer.
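To make the feed-forward, single-hidden-layer structure concrete, here is a minimal sketch of one forward pass in plain Python. It is an illustration only, not the tool's or nnet's actual code: the function names, the sigmoid hidden activation, and the single linear output node are assumptions chosen for the example.

```python
import math


def sigmoid(x):
    """Logistic activation, assumed here for the hidden nodes."""
    return 1.0 / (1.0 + math.exp(-x))


def forward(inputs, hidden_weights, hidden_biases, output_weights, output_bias):
    """Pass one record through a single-hidden-layer network.

    hidden_weights holds one list of input weights per hidden node.
    The output node is linear, as it might be for a continuous target.
    """
    # Data flows in one direction only: inputs -> hidden layer -> output.
    hidden = [
        sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)
        for weights, bias in zip(hidden_weights, hidden_biases)
    ]
    return sum(w * h for w, h in zip(output_weights, hidden)) + output_bias


# Two predictors, two hidden nodes, one output.
estimate = forward([0.0, 0.0], [[1.0, 1.0], [1.0, 1.0]], [0.0, 0.0], [1.0, 1.0], 0.0)
```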

If you would like to know more about the underlying model, please take a moment to read the Data Science blog post It’s a No Brainer: An Introduction to Neural Networks. You can also read a little bit about the history of neural networks and their general underpinnings in this 2017 MIT News article.

In this Tool Mastery, we will review the configuration of the tool, as well as what is included in the tool's outputs.

Configuration

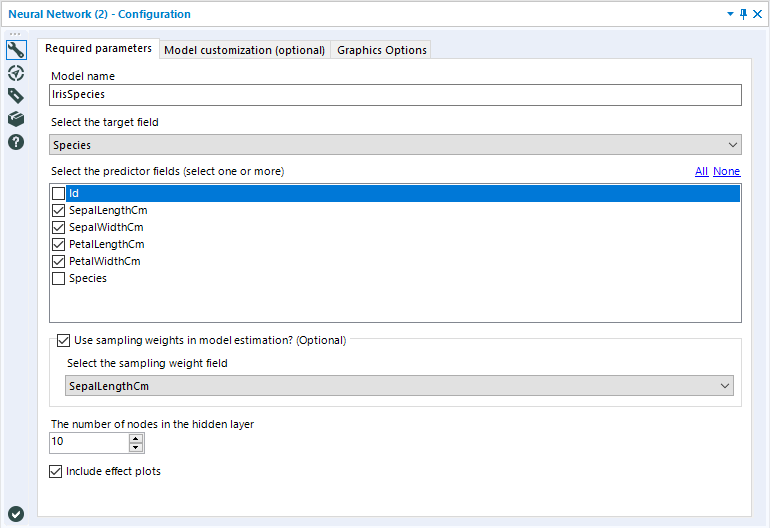

The configuration of the Neural Network Tool consists of three tabs: Required parameters, Model customization, and Graphics Options.

The Required parameters tab is the only mandatory configuration tab, and it is the first one that populates in the Configuration Window.

The Model name argument sets the Model Object’s name. This name must follow R naming rules: starts with a letter, and only contains letters, numbers, and the special characters period (".") or underscore ("_").
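The naming rule as stated above can be checked with a short regular expression. This is a sketch of that rule only; the function name is hypothetical, and R's full naming rules are somewhat broader than what is described here.

```python
import re

# Starts with a letter, then only letters, digits, periods, or underscores.
_NAME_PATTERN = re.compile(r"[A-Za-z][A-Za-z0-9._]*")


def is_valid_model_name(name):
    """Return True if name follows the naming rule described above."""
    return _NAME_PATTERN.fullmatch(name) is not None
```

For example, `is_valid_model_name("nn_model.1")` passes, while a name that starts with a digit or contains a space does not.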

Select the target variable is where you specify which of the variables in your data set you would like to predict (estimate). The target variable for this model can be continuous (numeric) or discrete (categorical).

Select the predictor variables is a checklist of the variables you would like to use to estimate the selected target variable. These variables can also be continuous or categorical.

Use sampling weights in model estimation is an optional argument that you can enable by selecting the checkbox. It allows you to specify a field that provides sampling weights. Sampling weights are helpful in situations where the data set does not represent the population of data it was sampled from.

The number of nodes in the hidden layer is an integer argument that allows you to specify the number of nodes (aka neurons) included in the hidden layer of the neural network model. There is no hard rule for how many nodes should be included in the hidden layer. Often, the best way to determine an optimal number of hidden neurons is to train several neural network models with different settings and compare their performance. Too few hidden neurons can cause underfitting and high statistical bias, whereas too many hidden neurons can result in overfitting. For additional guidance on specifying hidden units in a neural network, please see this FAQ document on hidden units.

Include effect plots is a check option that determines if effect plots will be generated and included in the R (report) output of the tool. These plots graphically show the relationship between the predictor variable and the target, averaging over the effect of other predictor fields.

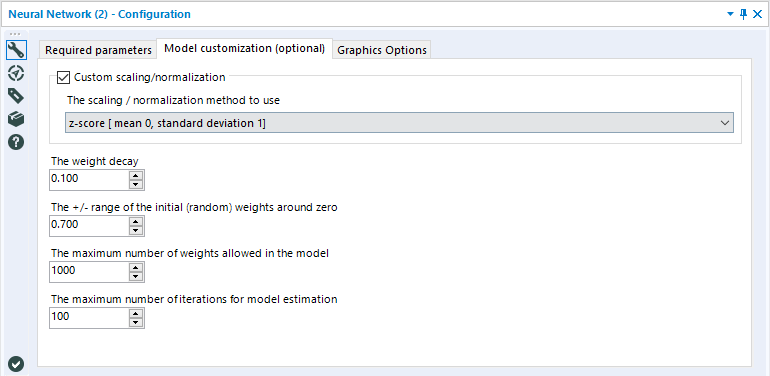

The second tab, Model customization, is optional and allows you to tweak a few of the finer points of your nnet model.

The Custom scaling/normalization argument refers to normalizing your predictor variables prior to generating the Neural Network model. In statistics, standardization refers to transforming your data so that the new values of the data feature are the signed number of standard deviations the individual observation differs from the mean of all the data points. It is a process that rescales the values of each of the variables in your dataset to have a mean of zero and a standard deviation of one.

In theory, it is not necessary to normalize your numeric predictor variables when training a neural network. Because the weights and biases of the model are adjusted during the training process (using a method called backpropagation), they can be scaled to match the magnitude of each predictor variable. However, research has shown that normalizing numeric predictor variables can make the training of the model more efficient, particularly when using traditional backpropagation with sigmoid activation functions (this is the case for the Neural Network Tool in Alteryx), which can, in turn, lead to better predictions. You can read more about this in section 4.3 of the article Efficient BackProp by LeCun et al.

The default configuration is to leave this option unchecked. If you choose to normalize your predictor variables, you have three options to do so: Z-score, Unit Interval, or Zero centered (all predictor fields are scaled so they have a min of -1 and a max of 1).
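The three scaling options can be sketched for a single numeric predictor as follows. The function names are hypothetical, and two details are assumptions rather than documented behavior of the tool: the Z-score uses the sample standard deviation, and Unit Interval is taken to mean rescaling to a minimum of 0 and a maximum of 1.

```python
import statistics


def z_score(values):
    """Rescale to mean 0 and standard deviation 1 (sample SD assumed)."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


def unit_interval(values):
    """Rescale so the minimum is 0 and the maximum is 1 (assumed meaning)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def zero_centered(values):
    """Rescale so the minimum is -1 and the maximum is 1."""
    return [2.0 * u - 1.0 for u in unit_interval(values)]
```

Each function assumes the predictor has at least two distinct values; a constant column would cause a division by zero.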

The weight decay argument limits the movement in the new weight values at each iteration during estimation and can help mitigate the risk of overfitting the model. This value can be set between zero and one. Larger values for this argument place a greater restriction on the possible adjustments of weights during model training. In general, setting a weight decay between 0.01 and 0.2 is recommended.
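One common way to implement weight decay is to add a penalty to the fitting criterion that grows with the squared size of the weights, so that large weights are discouraged. The sketch below shows that formulation; the function name is hypothetical, and whether the tool applies the penalty in exactly this form is an assumption.

```python
def penalized_error(fit_error, weights, decay):
    """Fitting error plus a decay penalty on the squared weights.

    A decay of 0 leaves the error unchanged; larger values penalize
    large weights more heavily.
    """
    return fit_error + decay * sum(w * w for w in weights)


# Same fit error and weights, increasing decay.
no_decay = penalized_error(1.0, [1.0, -2.0], 0.0)
some_decay = penalized_error(1.0, [1.0, -2.0], 0.1)
```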

The +/- range of the initial (random) weights around zero argument limits the range of possible initial random weights in the hidden nodes. Generally, the value should be set close to 0.5. However, if all the input variables are large, setting a lower value for this argument can improve the model. Setting this value to 0 causes the tool to calculate an optimal value given the input data.
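As an illustration of what the +/- range controls, the following sketch draws starting weights uniformly from an interval centered on zero. The function name and the `seed` parameter are hypothetical and exist only to make the example repeatable.

```python
import random


def initial_weights(n_weights, weight_range, seed=None):
    """Draw starting weights uniformly from [-weight_range, +weight_range]."""
    rng = random.Random(seed)
    return [rng.uniform(-weight_range, weight_range) for _ in range(n_weights)]


# 27 starting weights with a +/- range of 0.5.
weights = initial_weights(27, 0.5, seed=1)
```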

The maximum number of weights allowed in the model becomes important when there are a large number of predictor fields and nodes in the hidden layer. There is no hard limit for the maximum number of allowable weights in the code, which can cause models with many predictor fields and hidden layer nodes to take a long time to train. Reducing the number of weights speeds up model estimation. Weights excluded from the model are implicitly set to zero.
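To see how quickly the weight count grows, the number of weights in a fully connected, single-hidden-layer network can be computed directly. This sketch assumes one bias weight per hidden and output node and no direct input-to-output connections; the function name is hypothetical. Note that a categorical predictor typically contributes more than one input node once it is encoded.

```python
def count_weights(n_inputs, n_hidden, n_outputs):
    """Count weights in a fully connected single-hidden-layer network."""
    # Each hidden node has one weight per input plus a bias.
    hidden_layer = (n_inputs + 1) * n_hidden
    # Each output node has one weight per hidden node plus a bias.
    output_layer = (n_hidden + 1) * n_outputs
    return hidden_layer + output_layer


small = count_weights(4, 3, 3)      # 4 inputs, 3 hidden nodes, 3 outputs
large = count_weights(50, 20, 3)    # many predictors and hidden nodes
```

With 4 inputs, 3 hidden nodes, and 3 outputs the count is 27; with 50 inputs and 20 hidden nodes it is already 1,083.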

The maximum number of iterations for model estimation argument sets the maximum number of attempts the algorithm can make to find improvements in the model weights. The default setting is 100. The algorithm will stop iterating before the maximum is reached if the weights are no longer improving. If your model reaches the maximum without converging, it can help to increase this value, at the cost of processing time.
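The interplay between the iteration cap and early stopping can be sketched with a generic optimization loop. This is not the optimizer the tool uses; `step`, `error`, and `tolerance` are hypothetical stand-ins, and the toy problem below simply minimizes a one-dimensional quadratic.

```python
def minimize(step, error, weights, max_iterations=100, tolerance=1e-8):
    """Iterate until the error stops improving or the cap is reached.

    Returns (weights, iterations_used, converged).
    """
    previous = error(weights)
    for iteration in range(1, max_iterations + 1):
        weights = step(weights)
        current = error(weights)
        if previous - current < tolerance:
            # No meaningful improvement: stop before the cap.
            return weights, iteration, True
        previous = current
    # Cap reached while the error was still improving.
    return weights, max_iterations, False


def toy_error(w):
    return (w - 3.0) ** 2


def toy_step(w):
    # One gradient-descent step on toy_error with a learning rate of 0.1.
    return w - 0.1 * 2.0 * (w - 3.0)


_, used_default, converged_default = minimize(toy_step, toy_error, 0.0)
_, used_capped, converged_capped = minimize(toy_step, toy_error, 0.0, max_iterations=5)
```

With the default cap the toy problem stops early because the error is no longer improving; with a cap of 5 it runs out of iterations first, which is the situation where raising the maximum helps.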

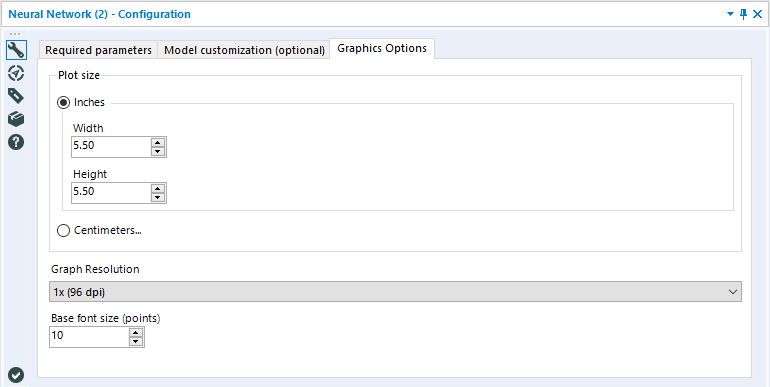

The third and final configuration tab, Graphics Options, can be used to specify the size and resolution of the output plots.

These options impact the size, resolution, and font of the plots generated for the R output.

Outputs

The Neural Network Tool has two output anchors, the object anchor (O) and the report anchor (R).

The O anchor returns the serialized R model object, with the model’s name. Serialization allows the model object to be passed out of the R code and into Designer. This object can be used as an input for the Score Tool, the Model Comparison Tool, or even the R Tool where you can write code to unserialize the model object and use it to perform additional analysis.

The R anchor is the report created during model training. It includes a basic model summary as well as effect plots for each class of the target variable.

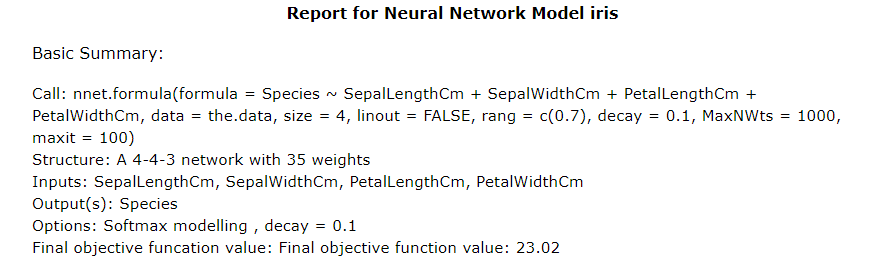

The first part of the Report returned in the R anchor is a basic model summary.

The Call is the actual code used in R to generate the model. It includes all of the configuration options that were set prior to running the Tool.

The Structure is a summary of the Neural Network model’s structure. The sequence of numbers is the number of nodes in each layer (Input-Hidden-Output), and the weights value describes the weighted connections between nodes.

Inputs in this report is a list of the predictor variables used to construct the model, and Output(s) is the name of the target variable.

Options lists a few of the specific configurations included in the model. In this case, Softmax describes the output layer’s activation function and decay refers to the argument set for the weight decay parameter (specified in the Tool’s configuration under Model customization).
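For readers unfamiliar with softmax, it converts the raw scores of the output nodes into class probabilities that sum to one. The sketch below is a standard formulation written for illustration; the function name is not taken from the tool.

```python
import math


def softmax(scores):
    """Convert raw output-node scores into probabilities that sum to 1."""
    # Subtracting the maximum keeps the exponentials numerically stable.
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Three output nodes, one per class; the largest score gets the largest probability.
probabilities = softmax([2.0, 1.0, 0.1])
```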

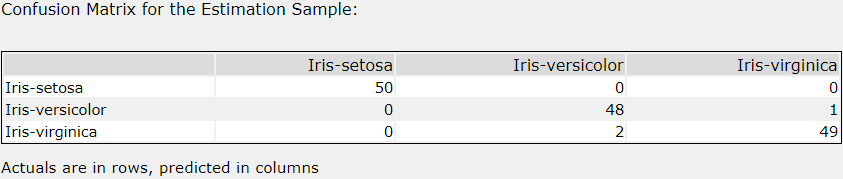

The report for a classification neural network will include a confusion matrix. As noted in the report, actual values are in rows, and predicted values are in columns.
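The layout of the confusion matrix (actual values in rows, predicted values in columns) can be reproduced with a few lines of Python. The function name and the example labels are made up for illustration.

```python
def confusion_matrix(actual, predicted, labels):
    """Count records by actual class (rows) and predicted class (columns)."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for a, p in zip(actual, predicted):
        matrix[index[a]][index[p]] += 1
    return matrix


actual = ["a", "a", "b"]
predicted = ["a", "b", "b"]
matrix = confusion_matrix(actual, predicted, ["a", "b"])
```

Here the first row shows that, of the two records that are actually "a", one was predicted as "a" and one as "b".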

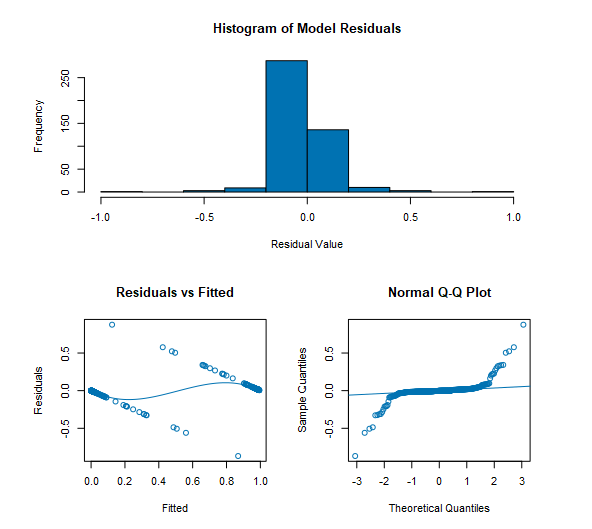

Both classification and regression neural networks will include a series of plots for interpreting model residuals, including a Histogram of residuals, a plot of Residuals vs. Fitted values, and a Normal Q-Q Plot.

For regression models, the residuals are calculated as the difference between the estimated value created by the neural network model, and the actual value for each record in the training data.

For classification models, each possible classification (target value) is given a probability that a given record belongs to that class. Residuals are calculated as the difference between the probability of the predicted value and the actual value (a 1 or 0, depending on whether the classification is true or false) for that record.
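Both residual calculations can be sketched as follows. The function names and example values are hypothetical, and the sign convention (actual minus fitted) is an assumption; the text above only says the residual is the difference between the two.

```python
def regression_residuals(actual, fitted):
    """Residual per record for a continuous target (actual minus fitted)."""
    return [a - f for a, f in zip(actual, fitted)]


def classification_residuals(actual_labels, class_probabilities, target_class):
    """Residual per record for one class of a categorical target.

    The actual value is 1 if the record belongs to target_class and 0
    otherwise; the fitted value is the estimated probability of that class.
    """
    return [
        (1.0 if label == target_class else 0.0) - probs[target_class]
        for label, probs in zip(actual_labels, class_probabilities)
    ]


reg = regression_residuals([3.0, 5.0], [2.5, 5.5])
cls = classification_residuals(
    ["setosa", "virginica"],
    [{"setosa": 0.9, "virginica": 0.1}, {"setosa": 0.2, "virginica": 0.8}],
    "setosa",
)
```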

The histogram depicts the frequency for residual values for estimated versus true classes for the training data.

The Residuals vs. Fitted plot depicts a point for each record used to train the model, where the X value is the “fitted value” or probability a record belonged to its target class, and the Y-Value is the Residual of that record. A loess smooth line is plotted along with these points. This plot is helpful for understanding how residuals may be impacted by fitted values.

The Normal Q-Q plot is used for comparing two distributions by plotting their quantile values against each other. Quantiles, often expressed as percentiles, are points in your data below which a given percentage of your data falls. The Normal Q-Q Plots included in the Neural Network Tool reports plot the Sample Quantiles (quantiles of the estimates) against the Theoretical Quantiles (here, those of a normal distribution). For each point, the X-value depicts the Sample Quantile value and the Y-value is the corresponding Theoretical Quantile value. The Normal Q-Q Plot can be helpful for checking that the distribution of a set of data matches a theoretical distribution. In a case where the sample distribution is identical to the theoretical distribution, the points would fall along a straight line at a 45-degree angle. For more help understanding and interpreting a Q-Q Plot, please see this helpful resource from the University of Virginia Library.
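The points of a Normal Q-Q plot can be computed by pairing each sorted sample value with the matching quantile of a standard normal distribution. In this sketch the function name is hypothetical, the plotting positions (i + 0.5) / n are one common convention chosen as an assumption, and the pairs are returned in the (sample, theoretical) order used in the description above.

```python
from statistics import NormalDist


def normal_qq_points(sample):
    """Pair each sample quantile with a standard normal quantile."""
    ordered = sorted(sample)
    n = len(ordered)
    standard_normal = NormalDist()  # mean 0, standard deviation 1
    theoretical = [standard_normal.inv_cdf((i + 0.5) / n) for i in range(n)]
    return list(zip(ordered, theoretical))


points = normal_qq_points([2.0, -1.0, 0.5])
```

If the sample is roughly normal, these points fall close to a straight line when plotted.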

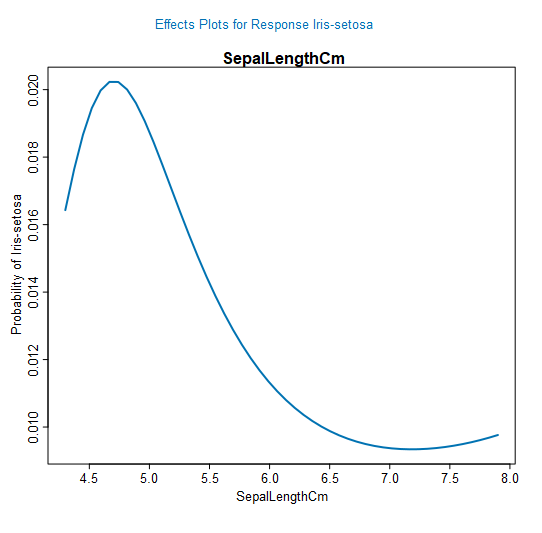

Finally, the effect plots will be included in the Report if the option was checked in the configuration. In a classification model, an individual plot will be created for each target class (e.g., Iris Setosa, Iris Virginica, and Iris Versicolor) and each individual predictor variable (e.g., Sepal Length, Sepal Width, Petal Length, Petal Width).

For each predictor variable and each classification, the plots show how the probability of a record belonging to that specific class changes with the value of the predictor variable. In this case, we see that the probability of a record being Iris Setosa increases when Sepal Length is between 4.5 and 5.0 cm, but drops pretty quickly after 5.5 cm. These effect plots can help make a neural network a little less opaque by visualizing how the classification probability or estimated value is impacted by each individual predictor variable.
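One way to compute a curve of this kind is to fix the predictor of interest at each value on a grid, score every record with that value substituted in, and average the results. The sketch below follows that idea under stated assumptions: `predict` is a hypothetical stand-in for a fitted model, records are plain dictionaries, and the tool's own effect plots may be computed differently.

```python
def effect_curve(predict, records, predictor, grid):
    """Average prediction at each grid value of one predictor.

    For each grid value, every record is scored with the chosen
    predictor replaced by that value, which averages over the other
    predictor fields.
    """
    curve = []
    for value in grid:
        predictions = [predict({**record, predictor: value}) for record in records]
        curve.append((value, sum(predictions) / len(predictions)))
    return curve


def toy_predict(record):
    # Hypothetical stand-in for a fitted model's scoring function.
    return record["x"] + 2 * record["z"]


curve = effect_curve(toy_predict, [{"x": 0, "z": 1}, {"x": 0, "z": 3}], "x", [0, 1])
```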

By now, you should have expert-level proficiency with the Neural Network Tool! If you can think of a use case we left out, feel free to use the comments section below! Consider yourself a Tool Master already? Let us know at community@alteryx.com if you’d like your creative tool uses to be featured in the Tool Mastery Series.

Stay tuned with our latest posts every #ToolTuesday by following @alteryx on Twitter! If you want to master all the Designer tools, consider subscribing for email notifications.
