Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think it could be improved:

> by showing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> by at least allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
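The two preprocessing requests above can be sketched outside the tool. This is a minimal Python sketch, not how the Image Recognition tool works internally: `balanced_sample` and `gray_to_rgb` are hypothetical helper names, the input is assumed to be a list of `(path, label)` pairs, and downsampling to the rarest label is only one of several ways to balance a training set.

```python
import random
from collections import defaultdict


def balanced_sample(items, seed=0):
    """Downsample so that every label keeps the same number of items.

    `items` is a list of (path, label) pairs (an assumed layout).
    """
    by_label = defaultdict(list)
    for path, label in items:
        by_label[label].append(path)
    # The rarest label sets the per-label quota.
    quota = min(len(paths) for paths in by_label.values())
    rng = random.Random(seed)
    balanced = []
    for label, paths in sorted(by_label.items()):
        for path in rng.sample(paths, quota):
            balanced.append((path, label))
    return balanced


def gray_to_rgb(pixels):
    """Turn a 2-D grid of gray values into RGB by repeating each value three times."""
    return [[(v, v, v) for v in row] for row in pixels]
```

Repeating the gray channel three times is a common way to feed single-channel images such as MNIST to a model that expects RGB input.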

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


It would be great if we could add example workflows to our macros, accessible in the same way as from the original tools (example hyperlink shown after single-clicking on a tool in the tool palette or when searching in the search bar).

There is a post on how to do it for custom tools: "How to add an example link in the custom tool" (alteryx.com). The approach described there has limitations and does not seem to work on macros: I was able to get the link to show up, but nothing happens when I click it.

My suggestion: make it as easy to add an example workflow to a macro as it is to change the logo or add a help link.

- Category Macros
- Enhancement

Hi!

Just thought up a simple improvement to the US Geocoder macro that could potentially speed up the results. I'm doing an analysis on some technician data where they visit the same locations over & over again. I'm doing a full-year analysis (200k+ records) & the geocoder takes a while to churn through that much data. In my data, though, it's the same addresses over & over again, & the geocoder goes through each one individually.

What I did in my process, & what could be added to the macro, is to put a Unique tool into the process based on address, city, state, zip, then geocode the reduced list, then simply join back to the original data stream using a Join on the address, city, state, zip fields (or use a Record ID tool to create a unique process ID to join on).

In my case, the 200k records were reduced to 25k, which Alteryx completed in under a minute, then joined back so my output was still the 200k records (all geocoded now).
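The dedupe-then-join-back idea can be sketched in Python as a cache keyed on the address fields. This is only an illustration of the pattern, not the US Geocoder macro itself: `geocode_unique`, the `geocode` callable, and the field names are all assumptions.

```python
def geocode_unique(records, geocode,
                   key_fields=("address", "city", "state", "zip")):
    """Geocode each distinct address once, then attach the result to every record.

    `records` is a list of dicts; `geocode` is any callable that takes the
    key fields as positional arguments (a stand-in for the real geocoder).
    """
    cache = {}
    results = []
    for rec in records:
        key = tuple(rec[f] for f in key_fields)
        if key not in cache:
            # Only the first occurrence of an address pays the geocoding cost.
            cache[key] = geocode(*key)
        # Every record, duplicate or not, gets the cached location.
        results.append({**rec, "location": cache[key]})
    return results
```

The output has the same number of rows as the input, while the geocoder is called once per distinct address, which mirrors the Unique, geocode, Join sequence described above.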

Not everyone will have this many duplicates, but I'd bet most data has a few, & every little bit of time savings helps when management is waiting on the results haha!

- Category Address
- Category Macros
- Category Spatial

Hi,

It would be helpful to have Input and Output tools for ProjectOnline, like the SharePoint and OneDrive tools.

This way we can read the projects in a tabular form and automate our project management tasks.

Thank you.

- Category Macros
- New Request

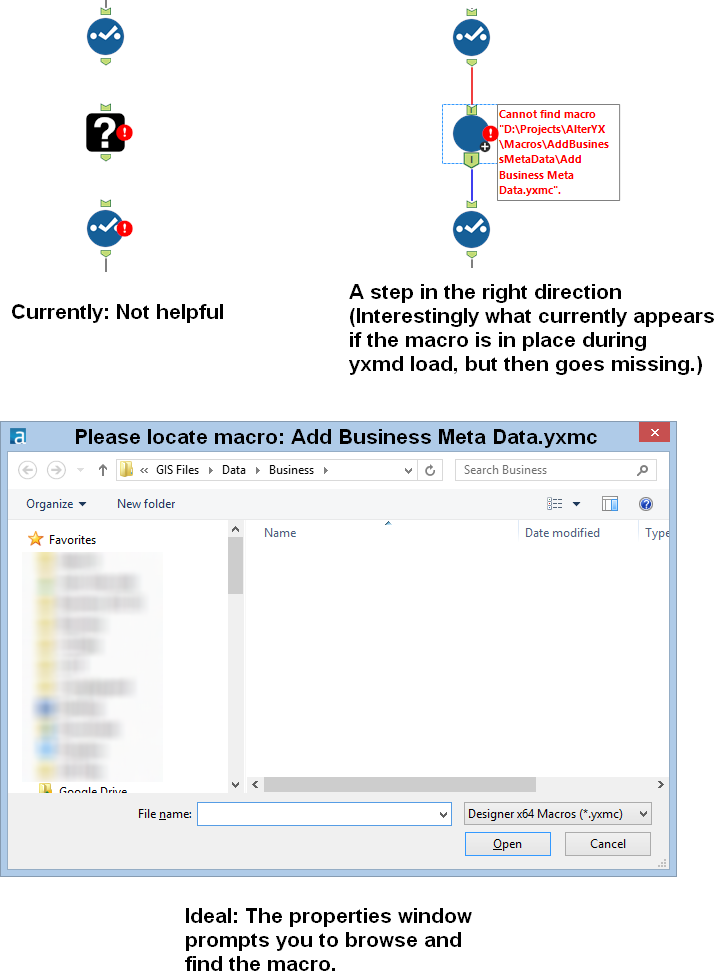

Idea: Prompt the user to find a missing macro instead of the current UX of a question mark icon.

Issue: When a macro referenced in a workflow is missing, then there is no way to a) know what the name of the macro was (assuming you were lazy like me and didn't document with a comment) and b) find the macro so you can get back to business.

When this happens to me now, I have to go to the XML view, search for macros, and cycle through them until I find the one that's missing. Then I have to either copy the macro back into that location or manually edit the workflow XML. Not cool, man.
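Until something like this exists in Designer, the manual XML search could be scripted. The sketch below is hedged: it assumes that macro tools are stored as `<Node ToolID="...">` elements with a child `<EngineSettings Macro="path.yxmc" />`, and that relative paths resolve against the workflow's folder. `find_missing_macros` is a hypothetical helper, not an Alteryx API.

```python
import os
import xml.etree.ElementTree as ET


def find_missing_macros(workflow_xml, base_dir="."):
    """Return (ToolID, macro path) pairs whose macro file cannot be found.

    Assumes each macro tool is a <Node> with a child
    <EngineSettings Macro="..."/> element.
    """
    root = ET.fromstring(workflow_xml)
    missing = []
    for node in root.iter("Node"):
        settings = node.find("EngineSettings")
        if settings is None:
            continue
        macro = settings.get("Macro")
        if not macro:
            continue  # a regular tool, not a macro reference
        # Workflow paths use backslashes; normalise for the local OS.
        path = macro.replace("\\", os.sep)
        if not os.path.isabs(path):
            path = os.path.join(base_dir, path)
        if not os.path.exists(path):
            missing.append((node.get("ToolID"), macro))
    return missing
```

Passing the workflow file's text and its folder as `base_dir` would list the tool IDs and original macro names, which covers point a) above.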

Solution: When a macro is missing, the image below at the right should be shown. In the properties window, a file browse tool should allow the user to find the macro.

- API SDK
- Category Developer
- Category Macros
- Desktop Experience

Hey all,

The join tool currently does not allow case-insensitive joins, but the find/replace tool does. Additionally, even if both sides are identical, the join tool will not join "Sean's house" to "Sean's house" because of the non-letter character in the middle. Finally, if one side is a string(2) and the other is a vString(200), then even if you have a single identical character on both sides you get uncertain outcomes unless you force the type.

Please could you consider amending the join tool to include 3 new options or capabilities:

- Case insensitive join

- Allow full Unicode character set in join

- Full match across text types (irrespective of string size) - this would allow a string(2) value to match to a string(100) value as long as the string(100) value only has the same 2 characters in it as the string(2) value
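As a rough illustration of the three options above, here is a Python sketch of a join that normalises keys before matching. It does not describe how the Join tool behaves internally: `join_key` and `inner_join` are hypothetical names, and stripping padding is only a guess at what a width-independent match would need.

```python
import unicodedata


def join_key(value):
    """Build a comparison key: Unicode-normalised, unpadded, case-insensitive."""
    text = unicodedata.normalize("NFKC", str(value))
    return text.strip().casefold()


def inner_join(left, right, field):
    """Inner-join two lists of dicts on `field`, comparing normalised keys."""
    index = {}
    for row in right:
        index.setdefault(join_key(row[field]), []).append(row)
    joined = []
    for lrow in left:
        for rrow in index.get(join_key(lrow[field]), []):
            joined.append((lrow, rrow))
    return joined
```

With this key, "Sean's House " on one side matches "sean's house" on the other, whatever the declared width of the field.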

That would remove a load of work from every text-join that's being done on every canvas we do.

Thank you

Sean

- Category Connectors
- Category Macros
- Category Parse
- Category Preparation

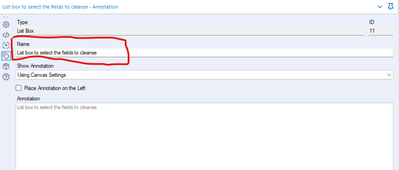

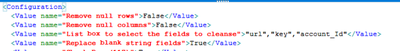

This idea arose from a conversation with a colleague, @Carlithian, in which we were trying to work out a way to remove tools from the canvas that might be redundant. For example, have you added a Select tool to the canvas that hasn't been configured to change a data type or rename a field? So we were looking for ways to identify, in the workflow XML, tools that have no configuration applied to them.

This highlighted to me an issue with something like the data cleanse tool, which is a standard macro.

The xml view of the data cleanse configuration looks like this:

    <Configuration>
      <Value name="Check Box (135)">False</Value>
      <Value name="Check Box (136)">False</Value>
      <Value name="List Box (11)">""</Value>
      <Value name="Check Box (84)">False</Value>
      <Value name="Check Box (117)">False</Value>
      <Value name="Check Box (15)">False</Value>
      <Value name="Check Box (109)">False</Value>
      <Value name="Check Box (122)">False</Value>
      <Value name="Check Box (53)">False</Value>
      <Value name="Check Box (58)">False</Value>
      <Value name="Check Box (70)">False</Value>
      <Value name="Check Box (77)">False</Value>
      <Value name="Drop Down (81)">upper</Value>
    </Configuration>

Because it is a macro, the default labels of the interface tools (such as "Check Box (135)") are what appear in the XML. If you wanted to do something useful with it, wouldn't it be much nicer if the interface tools were named properly, such as:

So when you look at the xml of the workflow it's clearer to the user what is actually specified.
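For the redundant-tool check that started this idea, a script has to work with the generic names as they stand. The Python sketch below assumes that a Data Cleansing tool with every "Check Box" set to False and an empty "List Box" does nothing; that rule is an assumption drawn from the XML shown in this post, and `looks_unconfigured` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET


def looks_unconfigured(config_xml):
    """Return True if no check box is ticked and no list box has a selection.

    Works only on generic names like "Check Box (135)", which is exactly
    why descriptive interface-tool names would make this easier.
    """
    root = ET.fromstring(config_xml)
    for value in root.iter("Value"):
        name = value.get("name", "")
        text = (value.text or "").strip()
        if name.startswith("Check Box") and text == "True":
            return False
        if name.startswith("List Box") and text not in ("", '""'):
            return False
    return True
```

Because the names carry no meaning, the script cannot tell which option a ticked check box controls, only that something is ticked.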

- Category Interface
- Category Macros
- Desktop Experience
- Engine

With the growing demand for data privacy and security, synthetic data generation is becoming an increasingly popular technique for producing datasets that can be shared without compromising sensitive information, especially in the healthcare industry.

While Alteryx provides a range of tools, I believe that a custom tool could help meet the specific needs of a lot of healthcare organizations and customers.

Some potential features of a custom synthetic data generation tool for Alteryx could include:

Integration with other Alteryx tools: The tool could be seamlessly integrated with other Alteryx tools to provide a comprehensive data preparation and analysis platform.

Customizable data generation: Users could set parameters and define rules for generating synthetic data that accurately represents the statistical properties of the original dataset.

Data visualization and exploration: The tool could include features for visualizing and exploring the generated data to help users understand and validate the results.

I believe that a custom synthetic data generation tool could help our organization and customers generate high-quality synthetic datasets for testing, model training, and other purposes.

- Category Macros
- Desktop Experience

We all love seeing this. And, it's fairly easy to fix, just go find the macro and insert a new copy. But, then you have to remember the configuration and hope that it was simple.

With the tool that's there, the XML still contains the configuration, all that's missing is the tool path. It would be great to be able to right click and repair the path from the context of the missing macro.

- Category Macros
- Desktop Experience

A common problem with the R tool is that it outputs "False Errors" like the following: "The R.exe exit code (4294967295) indicted an error"

I call this a false error because data passes out of the R script the same as if there were no error. As such, this error can generally be ignored. In my use case, however, my R tool is embedded within an iterative macro, and the error causes the iterator to stop running.

I was able to create a workaround by moving the R tool to a separate workflow and calling it from the CReW runner macro within my iterator, effectively suppressing the error message, but this solution is a bit clumsy, requires unnecessary read/writes, and uses nonstandard macros.

I propose the solution suggested by @mbarone (https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Boosted-Model-Error/td-p/5509) to only generate an error when the R return code is 1, indicating a true error, and to either ignore these false errors or pass them as warnings. This will allow R scripts and R-based tools to be embedded within iterative macros without breaking.
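The proposed rule can be written down as a small Python sketch. It only encodes the behaviour requested in this post (code 1 is an error, anything else non-zero is a warning); whether every other exit code is truly harmless is the poster's observation, not something the sketch verifies. The function names are hypothetical.

```python
def as_signed32(code):
    """Reinterpret an unsigned 32-bit exit code as a signed value."""
    return code - 2**32 if code >= 2**31 else code


def classify_r_exit(code):
    """Map an R exit code to 'ok', 'error', or 'warning' per the proposal."""
    if code == 0:
        return "ok"
    if code == 1:
        return "error"  # treated as the only true error
    return "warning"    # reported, but does not stop an iterative macro
```

Note that 4294967295 is simply -1 read as an unsigned 32-bit number, so `as_signed32(4294967295)` gives `-1` and `classify_r_exit(4294967295)` gives `"warning"`.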

- Category Macros
- Desktop Experience
- Tool Improvement

This has probably been mentioned before, but in case it hasn't....

The dynamic input tool is useful for bringing in multiple files / tabs, but quickly stops being fit for purpose if schemas / fields differ even slightly. The common solution is to then use a dynamic input tool inside a batch macro and set this macro to 'Auto Configure by Name', so that it waits for all files to be run and then can output knowing what it has received.

It's a pain to create these batch macros for relatively straightforward and regular processes - would it be possible to have this 'Auto Configure by Name' as an option directly in the dynamic input tool, relieving the need for a batch macro?
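What "Auto Configure by Name" achieves can be sketched in Python as a union that first collects every field name and then fills the gaps. This is an illustration of the idea only, with each file assumed to be already loaded as a list of dicts; `union_by_name` is a hypothetical name.

```python
def union_by_name(tables):
    """Stack tables with differing schemas, aligning columns by name.

    `tables` is a list of tables, each a list of dicts. Fields missing
    from a given row are filled with None.
    """
    columns = []
    for rows in tables:
        for row in rows:
            for name in row:
                if name not in columns:
                    columns.append(name)  # keep first-seen order
    combined = [
        {name: row.get(name) for name in columns}
        for rows in tables
        for row in rows
    ]
    return columns, combined
```

The full column list is only known after every input has been seen, which is why the batch-macro version has to wait for all files before it can output.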

Thanks,

Andy

- API SDK
- Category Developer
- Category Macros
- Desktop Experience

I would like to suggest a Batch Macro Container (alongside the existing Container) that acts as a batch macro within a workflow and is stored within that workflow.

I understand that having a batch macro available as a separate tool can be very powerful and reduces redundant work. However, batch macros are very often set up for one specific workflow and are of no use to other workflows. Creating a batch macro in a container would significantly reduce the time needed to deploy it and would keep the macro folder clean of one-time batch macros.

Attached is a picture of how this could look.

Thanks

Manuel

- Category Macros
- Desktop Experience

There are many circumstances where you have to build an iterative macro in which it's not just the iterating data set that needs to change on every iteration, but also a second data set.

Think of this as a loop where two different variables are updated on every iteration, not just the control variable of the For loop.

The way users work around this is to use a temporary yxdb file: instead of a macro input, you read from the yxdb and then write back to the same yxdb. This lets you pretend that you can adjust 2 different data sets on every cycle of the loop. There are 4 downsides to this:

a) User complexity - this breaks the conceptual simplicity of macro inputs, since users now have to understand that in situation X they use macro inputs and in situation Y they have to use some other type of tool.

b) Speed penalty - writing to disk is between 1,000x and 1,000,000x slower than working with data in memory (especially if it's in cache), so forcing this through a yxdb file incurs a speed penalty that is just not needed.

c) Blocking penalty - because you can't write to a file that you're still reading from, you need to pepper this with Block Until Done tools, and you need to initialize the macro with a first write to the yxdb file outside the macro, which further hurts speed. Given the nuanced behaviour of Block Until Done, this also introduces user complexity issues.

d) Not self-contained - because you have to initialize these files outside the macro, the macro is no longer self-contained and portable (which breaks the principle of Information Hiding, a key pillar of good modular, decoupled software design).

The other way users work around this is to serialize their entire second recordset into a field, which then gets tacked onto the iterating data set of the iterative macro. This is HIGHLY wasteful because you then have to build a serialize & deserialize process for the second recordset. It fixes the speed and blocking penalties above, but it introduces computational overhead that generates no value, makes this even more complex for users, and is a further blocker to using macros.

Recommendation:

We could make this simpler by allowing users to create multiple pairs of macro input / macro outputs so that 2 or 3 or n different data sets can be updated with every iteration.
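In ordinary code, the requested behaviour is just a loop that carries two pieces of state. The Python sketch below uses a graph walk as a stand-in problem: the frontier plays the role of the iterating data set and the visited set is the second data set updated on every pass. The names `iterate_two_streams`, `expand`, and `GRAPH` are illustrative, not part of Alteryx.

```python
def iterate_two_streams(frontier, visited, step, max_iterations=1000):
    """Repeat `step` until the iterating data set is empty.

    Both data sets are passed in and handed back on every iteration,
    like two macro input / macro output pairs.
    """
    for iteration in range(max_iterations):
        if not frontier:
            return frontier, visited, iteration
        frontier, visited = step(frontier, visited)
    raise RuntimeError("iteration limit reached")


GRAPH = {"a": ["b", "c"], "b": ["d"], "c": ["d"], "d": []}


def expand(frontier, visited):
    """One pass: mark the frontier as visited, then move to unseen neighbours."""
    visited = visited | set(frontier)
    neighbours = {n for node in frontier for n in GRAPH[node]}
    return sorted(neighbours - visited), visited
```

Calling `iterate_two_streams(["a"], set(), expand)` keeps both data sets in memory throughout, with no temporary file and no serialization step.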

Below is a screenshot demonstrating this, from an Advent of code challenge - the details of the problem are not important - the issue at hand is that there are 2 record sets which both need to be updated on every iteration.

cc: @NicoleJohnson @Samanthaj_hughes @SteveA

- Category Interface
- Category Macros
- Desktop Experience

Hi all,

Is it possible to add an option to 'Add an Image' in the settings option of Interface Designer while building Apps or macros?

Currently, we can add Group Box, Link, Tab, and Label. It would be really helpful if we could add static images as well!!

This would enable a developer to add an explanatory image, just as a link or label is used to communicate with the end user.

I am attaching a screenshot for reference.

Thanks in advance!!

Regards,

Shreyansh Rathod

- Category Macros
- Desktop Experience

Hi there,

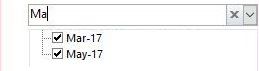

My idea came up when I built an application where the user selects a filter value from a drop-down list. However, the list contains thousands of records, so it takes a lot of time to find the desired record.

In Excel and MS Access, when you use a filter you can type several letters and the filter shows the rows that match the input. In Alteryx the user can only type the first letter, which is a huge drawback for my users.
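The requested behaviour amounts to a case-insensitive "contains" match on whatever the user has typed so far. A minimal Python sketch, with `filter_options` as a hypothetical name:

```python
def filter_options(options, typed):
    """Keep the drop-down entries that contain the typed text, ignoring case."""
    needle = typed.casefold()
    return [option for option in options if needle in option.casefold()]
```

Typing "ber" against a list of city names would keep both "Berlin" and "Aberdeen", whereas first-letter matching would only jump to entries starting with "b".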

This is how it works in Excel:

Hope you like it!

- Category Apps
- Category Macros
- Desktop Experience

It would be great if, any time a tool is copied (macro tools in particular, such as the "Data Cleansing" tool), all settings from the copied tool were retained in the pasted version. For the Data Cleansing tool, for example, instead of dropping the fields that were selected in the original tool, I would expect the pasted tool to keep them and flag them as "Missing", as similar tools do. Then, once the pasted tool is attached to a similar data source (likely the same data source elsewhere), the fields would appear checked or unchecked as before and no longer show as "Missing".

This would allow these tools to be copied, pasted, and repurposed instead of having their configuration wiped, since a pasted tool is not connected to a data stream until it is manually moved into place in the new or updated workflow.

- Category Macros
- Desktop Experience

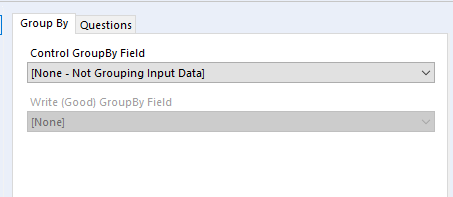

I rarely use the Group By tab on batch macros, but unfortunately it's always the first tab that pops up. When a batch macro has a Questions tab, it would be great if that tab appeared first (i.e., I should see the Questions tab when I click on my batch macro). Thanks!

- Category Macros
- Desktop Experience

If the workflow configuration had a "run for 'x' iterations" option, it would make debugging macros a lot easier. My current method consists of copying results, changing inputs, and repeating until I find my problem, which feels very manual.

- Category Macros
- Desktop Experience
- User Settings

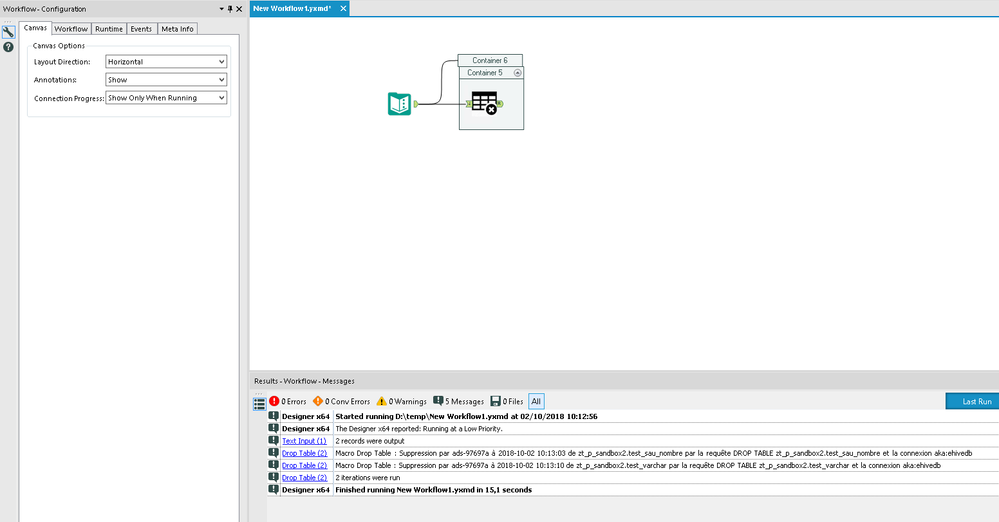

Today, the behaviour of batch macros can be strange.

According to https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Batch-Macro-not-looping-after-running-...

there can be big differences in behaviour between:

- workflow and app

- Designer and Scheduler

The example here shows a batch macro that runs for all lines in Designer but for only one line in Scheduler.

I know the workaround (just use a message box), but it's not natural, and I think:

- at the very least, the behaviour should be the same in every use case

- if you want to do some optimization, OK, but make it an option!!

- Category Macros
- Desktop Experience

Thank you to @JoeM for the recent training on macros, and to @NicoleJohnson for pointing out some of the challenges.

When writing an iterative macro, it is a little difficult to debug, because when you run it in designer mode it only does one iteration and stops.

Could we add the capability to the designer itself to be able to run the second and third iteration using the test data built into the macro input tool? Even something as simple as an option to run X iterations; or when it's run the first iteration allow me to look at what happened and trigger iteration 2 (or to trigger a run-through to completion) would be immensely helpful.

While you can do this with a test-flow wrapped around a macro, macro development is a bit of a black box because Alteryx doesn't natively have the ability to step into a macro during run-time and pause it to see what's happening on iteration 1 or 2 or n and why it's not terminating etc. So putting the ability to run in a debug mode would be HUGELY helpful.
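To make the request concrete, here is a Python sketch of the kind of stepping a debug mode could offer: run at most X iterations, and expose the data after each one. It models the idea with a generator and says nothing about how Designer would implement it. `run_iterations` and its parameters are hypothetical.

```python
def run_iterations(data, step, done, max_iterations=None):
    """Yield (iteration number, data) after each pass of an iterative process.

    Stops when `done(data)` is true or after `max_iterations` passes,
    whichever comes first. With no limit, it runs through to completion.
    """
    iteration = 0
    while not done(data):
        if max_iterations is not None and iteration >= max_iterations:
            return
        data = step(data)
        iteration += 1
        yield iteration, data
```

For example, `list(run_iterations(10, lambda x: x // 2, lambda x: x == 0, max_iterations=2))` returns `[(1, 5), (2, 2)]`, so the state after iterations 1 and 2 can be inspected before deciding whether to continue.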

- Category Macros
- Desktop Experience
