Alteryx Designer Desktop Knowledge Base

Definitive answers from Designer Desktop experts.- Community

- :

- Community

- :

- Support

- :

- Knowledge

- :

- Designer Desktop

- :

- Upload to SFTP/FTP

Upload to SFTP/FTP

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

on 05-13-2016 08:08 AM - edited on 07-27-2021 11:40 PM by APIUserOpsDM

In a previous article, we've shown you how you can upload to FTP using the Download tool. With the release of Alteryx 10.5, the Download tool now supports uploading to SFTP. With this addition, we'll take the opportunity to show you some more examples of uploading data to SFTP/FTP and show you how seamless it can be.

In this article, I'll show two examples of uploading to an SFTP server:

- a file from local disk or network share

- workflowdata directly from within Alteryx as a csv file

If, like me, you don't have and SFTP server to work with but you still would like to test this functionality, you can download and run an SFTP server locally - I'm testing with this free self-contained one. Let's get started!

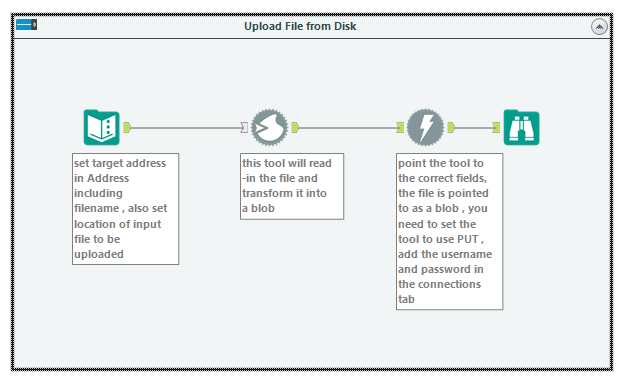

1. Upload a file from local disk or network share

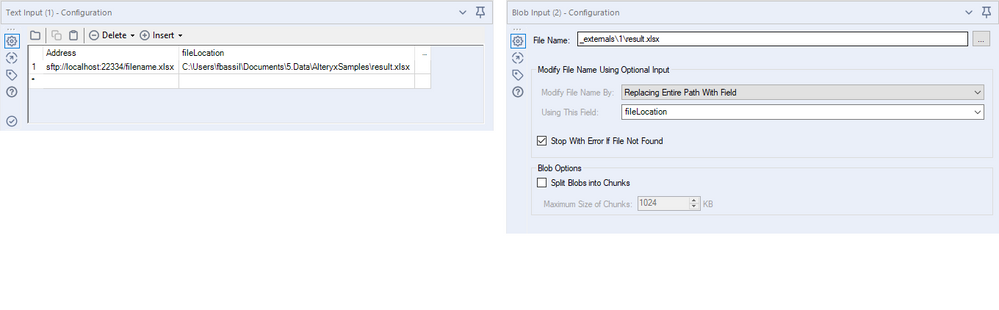

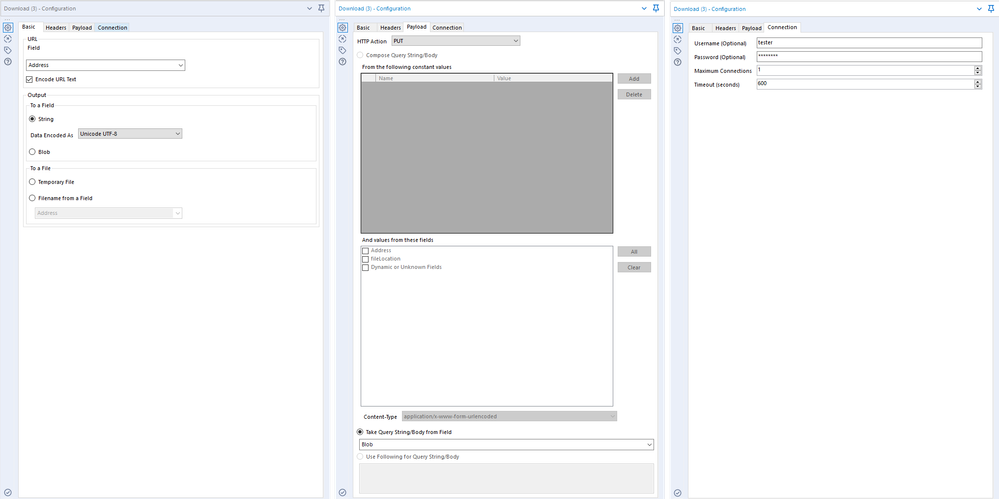

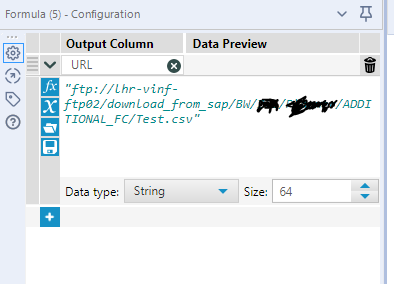

In the first example, I will point Alteryx to load a file from a path on local disk and use the Download tool to upload it. I start by using a Text Input tool to input the URL (the address of the ftp/sftp server) including the port number and the full file path with the file name of where I want to save the file . I'm running my SFTP server on localhost port 22334 and I want to save my file on the root directory of the SFTP server therefore my URL is sftp://localhost:22334/filename.xlsx. Don't forget to start the URL with sftp:// or ftp:// depending on what protocol the server is running. I also add the file location in the second field. Of course you can use a Formula tool to create more dynamic URLs and file names but I'm keeping it simple for this example.

I haven't mentioned any special requirements for uploading data to SFTP/FTP so now is a good time to do so. For the Download tool to upload data, the data must be transformed into a Blob object and the tool has to be configured to send a PUT command. I will read-in the file and transform its content into a blob in one go using the Blob Input tool. In the Blob Input tool, I'll put a placeholder file name, configure the tool to Replace Entire Path With Field, and point to the field that has the input file path. The output of the Blob Input tool is a blob object.

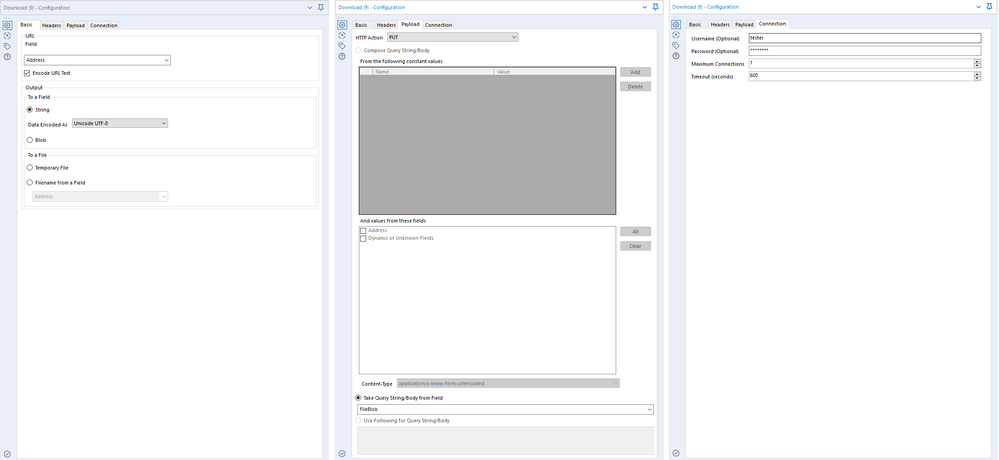

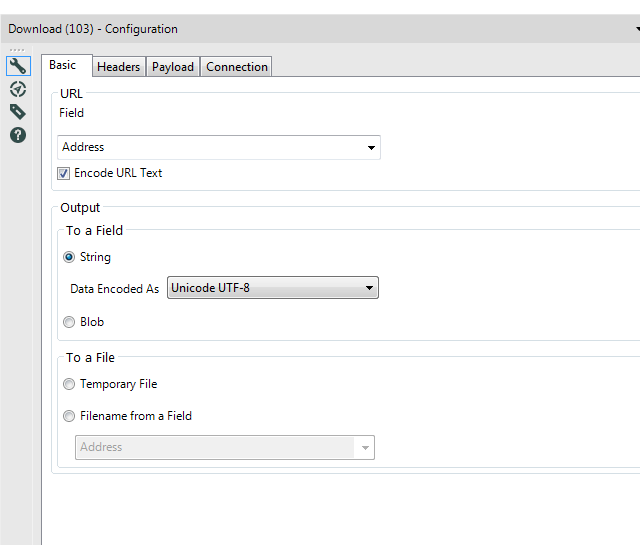

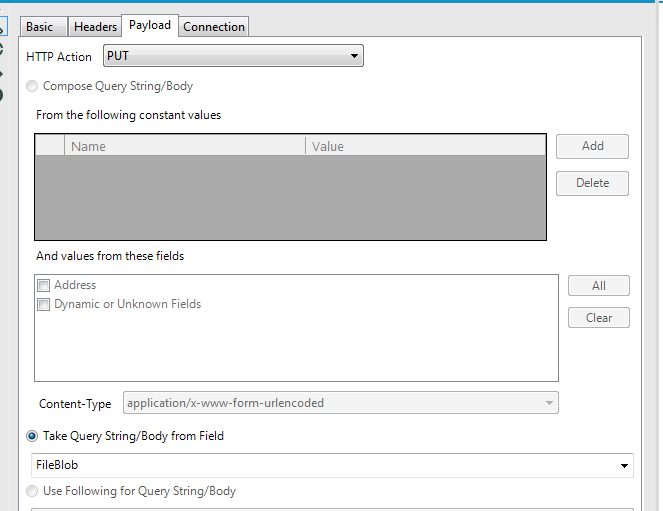

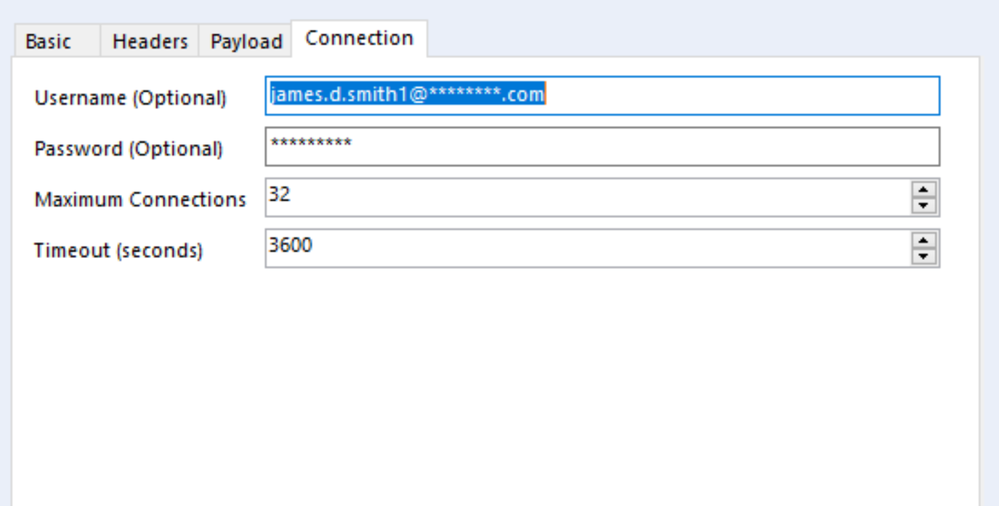

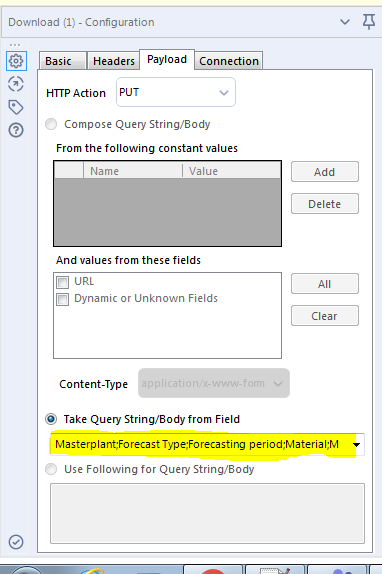

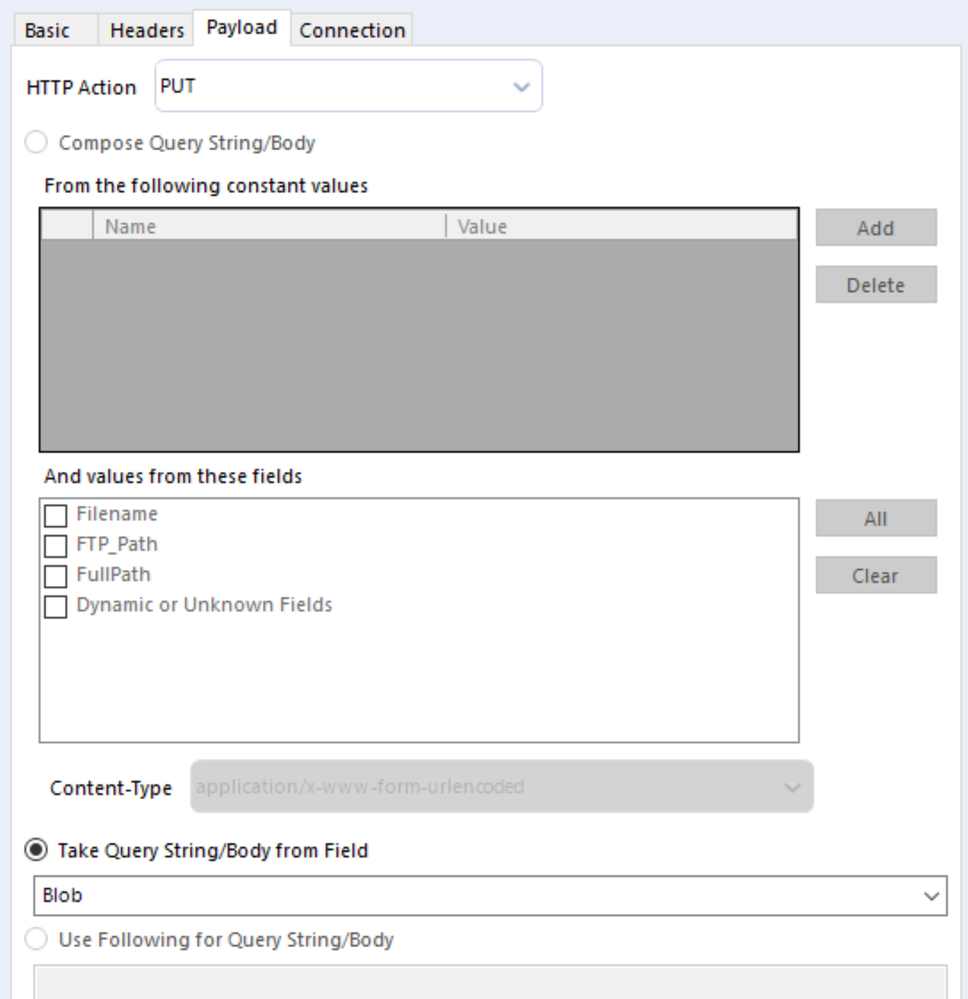

In the Download tool on the Basic tab, I point the URL to the field that has the server details - "Address" in my case. On the Payload tab, I set the HTTP Action to PUT and set the tool to Take Query String/Body from Field and point it to the field that has the Blob object. On the connection tab, I set the username and password to the correct credentials. Now, I run the workflow and Alteryx will read the file, create the blob object and upload it to the SFTP server.

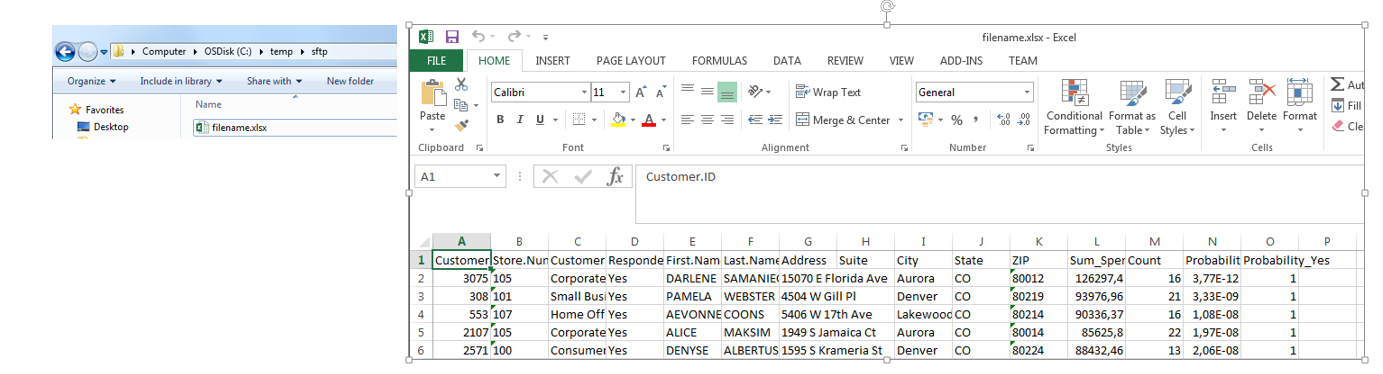

My SFTP server root folder is set to C:\temp\sftp and there I can see the filename.xlsx file that I just uploaded.

2. Upload workflow data directly into a csv file on the SFTP server

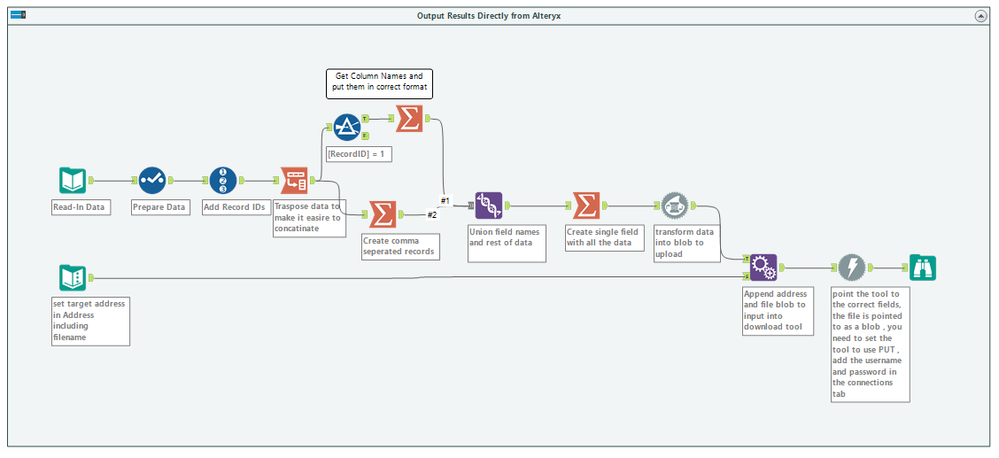

In the second example, I'll read-in data from an Excel file, deselect some columns and then upload the result into a csv file using SFTP. You can think of the Select tool as the part of your workflow that is processing data and from the Record ID tool to the Download tool as the part to prepare the upload. The upload process is very similar to the first example and the majority of the workflow is for preparing the data and creating the correct object to upload.

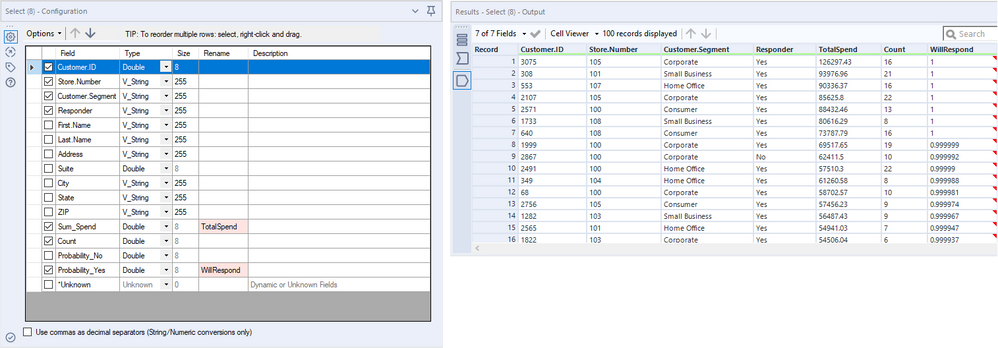

Now that I have read-in, cleaned, and prepared my data, this is how it looks.

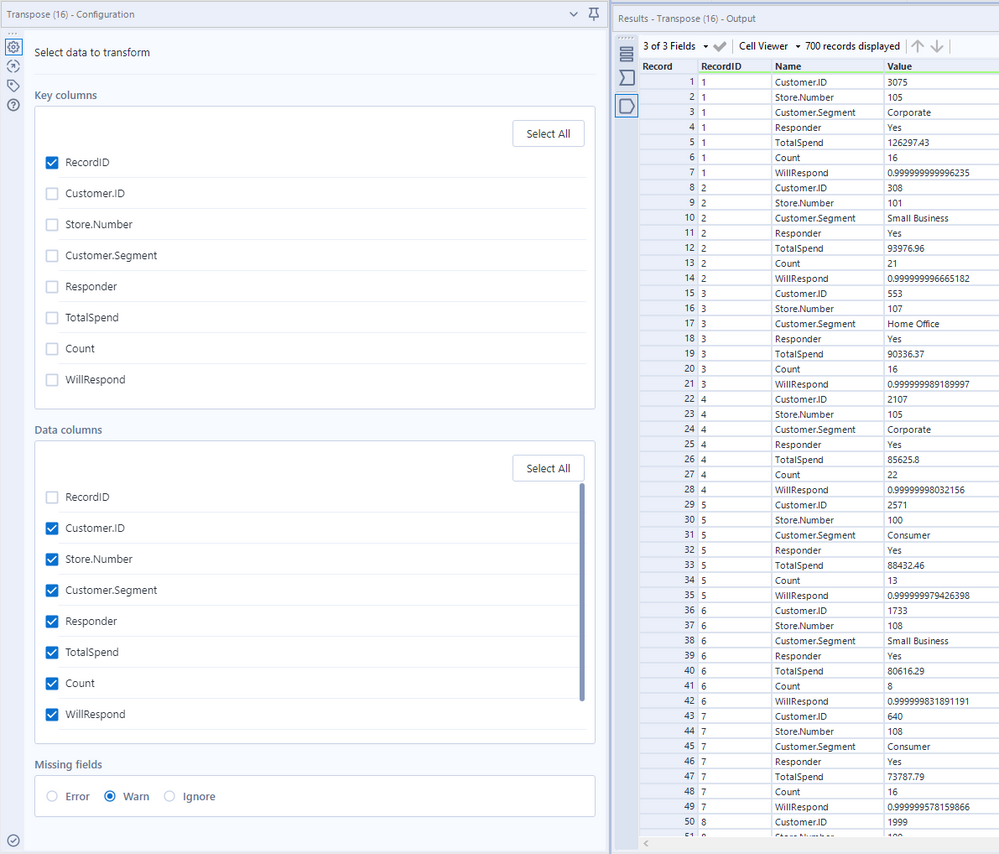

As a reminder, we will need a blob object to feed into the Download tool with the Put command. The next section of the workflow will take the values in the columns and rows of my tableand concatenate theminto a single field containing comma-separated data. I will feed this field into the Blob Convert tool and the output tothe Download tool to upload it to the server.Since I will be transposing and filtering data, I will need to have a point of reference to the original details and therefore I use the Record ID tool to create this point of reference. I then transpose the table using the Transpose tool with the Record ID as a pivot point (the key field) to work with a single column insteadof replicatingwork across multiple columns - in general, transposing dataand working with Name-Value columns tend to simplify transforming and manipulating tables.

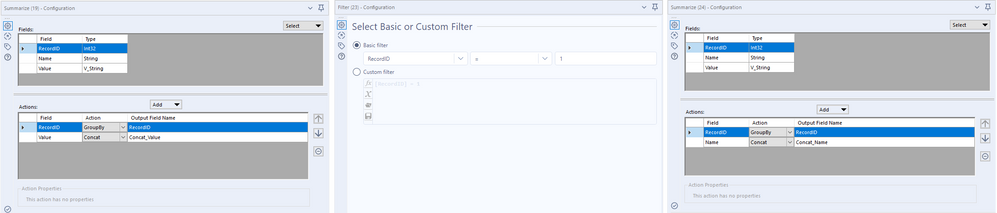

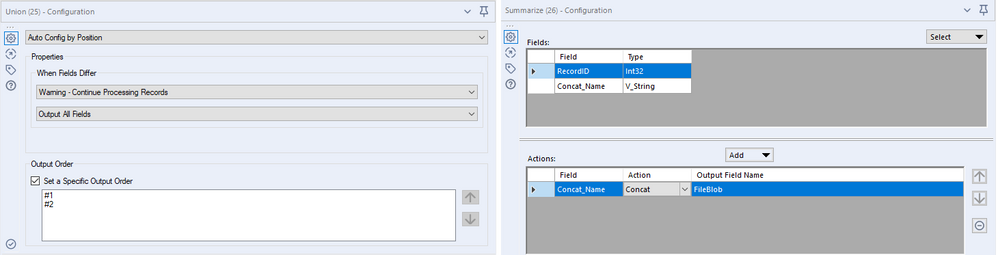

Now that I have my data in a single column, I will use a Summarize tool to create the CSV rows by grouping on RecordID and concatenating the values with "," as the separator. I will also need to create the first row of the CSV file - the field names - so I take the first Record (Record ID = 1) with a Filter tool and concatenate the values in the Name column with "," as the delimiter. The result is each record in my data has one row of comma separated data all in one column.

With the two tables ready, the next step is to union the field names and the values into a single table with the Union tool and making sure that the tool is configured to Set a Specific Output Order. That way you get the field name before the field values in the output. The resulting table is then concatenated into a single field with the Summarize tool using " " (newline) as the delimiter.

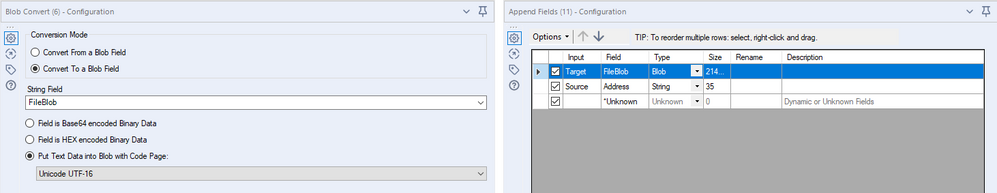

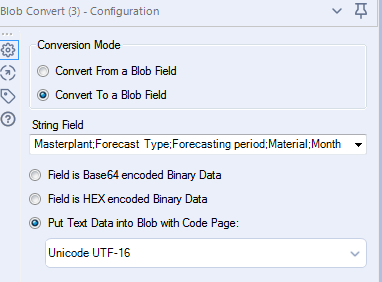

The resulting data is then fed into the Blob Convert tool to create the Blob object. In addition, the target URL is appended to the table using an Append Fields tool. the Download tool is setup with a similar configuration to the first example.

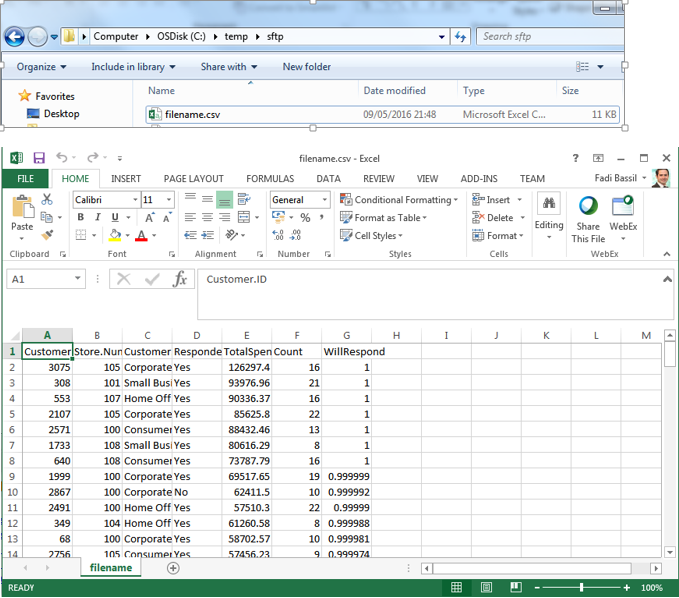

Execute the workflow and, voila, we have the file uploaded to the server as filename.csv.

Both the workflow used in this article and the RebexTinySFTPServer configuration file(rename file from .log to .exe.config) is attached to this post. Please note that you must change the configuration of the Download tool (address and credentials) for the workflow to work on your setup. These examples were built with Alteryx 10.5 (10.5.9.15014).

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I have several scenarios where this will be helpful! So glad I found this. In earlier versions of Alteryx I had experimented with using the Run command and some freeware apps that did SFTP uploads. If this works it will be MUCH easier.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

This is great, thank you, do you know if the only way to upload via the Download tool is to convert the data to a Blob?

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

@AnthonyJ this is @TashaA's comment on a similar question:

"The help shows that we only accept data from a field that is in BLOB format for the "PUT" request.

Based on my research, this is likely due to the library that we use to execute requests in the Download Tool (libCurl) has specific parameters for using the PUT. One the parameters required is a file, so we use BLOB. Let me know if I can add any additional information. "

Thanks @TashaA

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hello - any thoughts on how to get around the string size limitations? I'm attempting to push a 20 million row output through this SFTP solution and the summarize tool that concatenates all values to a single record (before data is transformed to blob format) is causing the error:

Designer x64 Tool #3: Tool #26: Error String size limit reached: Strings are limited to 2147483648 bytes

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi @-Daniel- for your use case it might be easier to use the Run Command to write out and then upload the file using a 3rd party application. something similar to this post: https://community.alteryx.com/t5/Data-Preparation-Blending/ZIP-and-SFTP-ToFile-ToFileToZIP-ToFileToS...

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

![]()

![]() Thank you so much for this, love it.

Thank you so much for this, love it.![]()

![]()

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I have a similar situation but the catch is that we never provide Port number while logging into SFTP . Since you also mentioned port number in your address url is it mandatory to provide it?

Thanks,

Nancy

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi @nchikara

It is not mandatory to add the port numbers if you're using standard port numbers. Alteryx will assume port 21 for FTP and port 22 for SFTP. If you use different ports then you'll have to add them.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi @FadiB,

Thank you for your reply!

Regards,

Nancy

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Great to find this and it worked for me connecting to a test SFTP with userid and password. However the SFTP site I need to connect to requires Private Key as part of the authentication - do you know if this is still possible or not supported?

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

@cbridge, at the moment, the Download tool doesn't support PK certificates for authentication. You might want to try using Winscp with Alteryx, similar to this post: https://community.alteryx.com/t5/Data-Preparation-Blending/ZIP-and-SFTP-ToFile-ToFileToZIP-ToFileToS...

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Thank you for this very helpful guide. I have pretty much replicated your method. However I need to upload the file using a specific port and to a specific location: I am currently using the following format for the Address:

sftp://ip_address:port/folder/subfolder/filename.csv

Is this format correct? I am trying to make sure this format is correct so I can isolate if there is some other authentication problem.

Thanks

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I have am using the scenario 2 version, but when the file gets to the FTP site it is not encoded as Unicode UTF-8? Has anyone dealt with this before or have any ideas on what I am doing wrong? Below are screen shots of my set up.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

very informative...my issue using the SFTP was getting verification that the file AND data was uploaded. Unlike when doing a FTP upload and you get the completed transfer verbiage in the cell viewer...uploading to a SFTP site doesn't give you that information. Working with Alteryx Support, we confirmed that the SFTP upload worked by downloading the free app "FileZilla" and copying the file that was uploaded to the SFTP site to my hard drive and opening it there, and validating that the complete data did indeed upload to the SFTP site.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi - will also echo Kiarash on uploading to a different folder

…. I need to upload the file to a specific location: I am currently using the following format for the Address:

sftp://domain:port/Home/filename.csv

~~ however my login for sFTP takes me to the default folder of Root not Root/Home - logicaly I would need to

cd /Home before putting my file and after establishing the sFTP connection with the tool.

Thanks

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Thank you so Much!!!! Perfect!!!!

Best Regards.

Carlos Tomé

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I have a workflow that upload the data to SFTP. But does anyone know how to automate this process of loading the alteryx o/p to SFTP. Thanks

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi @fadib ,

I was able to successfully emulate the workflow you've laid out that uploads workflow data directly into a csv file on the SFTP server.

However, I'd like to dynamically rename the output .CSV file (that will land in the SFTP) to include the day it was uploaded.

Would you be able to provide direction here?

Thanks

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi @fadib ,

I have a file that writes output data to a location dynamically and the extension it has is <File Name>.<Today's Date> and no .xlsx extension. The file name is Myfile.20190529 . Even though the output file has .xlsx extension, using formula tool I get the output file name as Myfile.20190529. This action takes place the day I run the file. So the variable out here is 20190529. It might change to 20190530.

With respect to the file you uploaded, could you please show how to dynamically upload the file to a server with extension changed.

For server, I have the user name and password.

Your support will be appreciated.

Thanks.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

This great and what I exactly needed. Thank you so much.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

@fadib Thanks for sharing your guidance. Your instructions was very helpful. I need your thoughts on this. I successfully uploaded but i do not get back any success message. I get a warning saying "No Data Received"

Warning: Download (3): No data received from "sftp://<CompanyName>:<Port>/User2.txt"; Check the headers for details.

Even the header is empty. Any advise?

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I'm trying to replicate option 2 to upload data directly to the sftp server but in my specific case I need to do the "ftps" protocol wrapped in tls. When using the "run" command and WinSCP this meant I had to add the "-explicittls" option. I can't seem to find or add that analogue here. Am I missing something? Thanks, -Hayes

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hello,

I tried this option and it worked fine for files which are less than 1 GB but it did not move the files which is of 4GB size. We tried selecting the option to split the blobs into Chunks and workflow was successful but it moved only 1GB of data as the max size of Chunks size in alteryx is 1048576 KB. Is there any other setting which I have to do to SFTP large files ( 4GB or more )

Appreciate any advice on this.

Thanks,

Harish

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi!

I have a file I have to transfer to an SFTP location M-F, and the file has ~5 million records in it (total file size is around 500MB). I'm using Option 2 above, and when I get to the BLOB Convert tool, I get an "out of memory" error.

Is there an alternate solution to doing a transfer like this for large files? I'm assuming there has to be a limit on how much a BLOB can hold?

I thought about breaking up the file into multiple streams, but I'm not sure how to get all of the streams to append to one another on the SFTP server using the Download Tool, since it doesn't specify file create vs append to file.

Thanks in advance!

Kim

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Has anyone encountered the following when attempting to upload to the SFTP:

SSH-2.0-OpenSSH_7.6

Protocol mismatch.

A few things to note:

- My workflow runs successfully without errors but does not actually transfer data

- I am positive my credentials are correct

- The above is what is passed through as a value in the "DownloadData" field after the download tool

- I know my IP is whitelisted and have proper write permissions (i am able to access the sftp and drop files to it manually via WinSCP)

Does anyone have any suggestions for troubleshooting? I also persisted this question on another thread, which i thought had answered before realizing the file wasnt actually dropping

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I followed this and have it working correctly locally. When I posted it to my company's gallery it completed instantly with no errors but it only transferred the very first file to my ftp. The Download Headers turned into "229 Entering Extended Passive Mode" after the maximum number of connections is reached. It turns out that I was flooding the connection. I had to throttle the stream way down. I hope this saves someone else a headache..

Great Post! Very helpful, thanks!

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

@ MKAV9893.

Did you get a resoltion? I am having the same issue, and although there is no failure there is a warning about Using blob and not supported?

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I ended up having to throttle the flow to around 1000 calcs per minute. It was blowing right through the processes otherwise and drowning the system. Hope that helps you too.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I tried this and it almost worked except that I can only see 1 file out of 3 files crated out of the Blob conversion tool.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi @fadib , Thanks a lot for the workflow you shared.

The upload workflows runs fine for the csv file (but,it has limitation). I am having data of 3 million records, for which i am getting error (exceeds the limit) for .csv , For that i want to upload the output in .yxdb format inplace of .csv file. I am really stucked, how can i do? Please, can anybody help me out with this.

Thanks

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Thanks!

It has a lot of potential scenarios where it can helps me.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

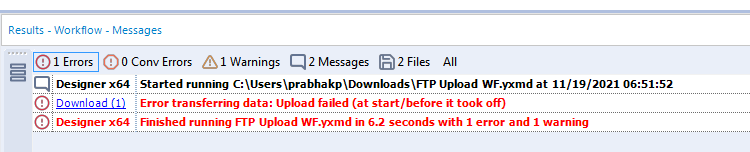

Hello All,

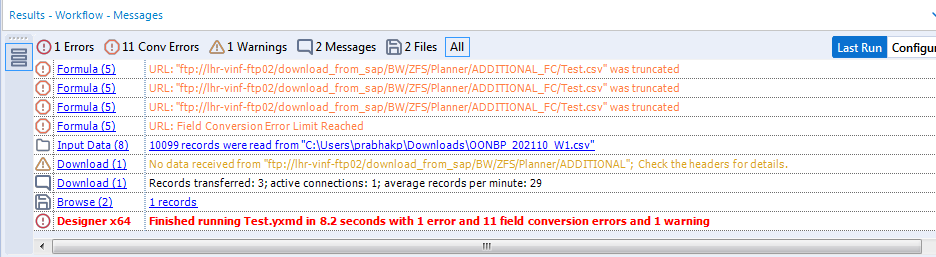

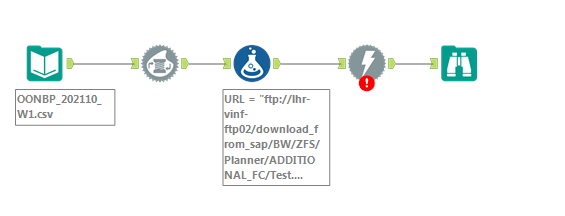

We are trying to upload a CSV file to a FTP server location using BLOB Convert and Download tool. We are getting an error and looks like we are doing something incorrect with the BLOB configuration part.

Please see attached Workflow screenshots and the error message for test run. Please advise.

Thanks,

Piyush

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hi Piyush,

You haven't actually posted the error message. Can you click on the icon that says "1 error" and post a screen shot of the actual error message? I do see that your URL field is being truncated. That means that the full URL isn't being supplied to the download tool. That's enough by itself. You'll need to make the field larger to accommodate the full URL. Additionally, in the download tool, on the payload tab, the query string/body drop down needs to be set to the blob field that you created. I would start out using one blob field until you have it working.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

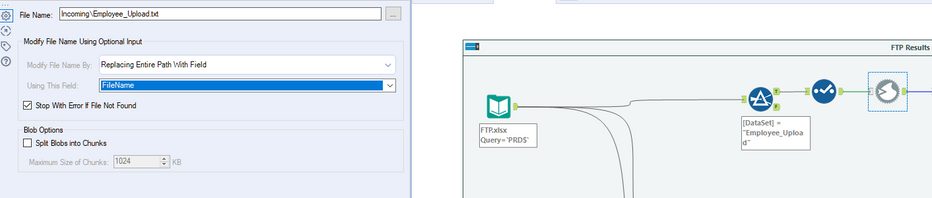

I do this a little differently. The input file contains the fields data required for the blob

FTP.xlsx is below

| DataSet | Filename | URL |

| File1 | Actual location\Filename1.txt | SFPT Location/Filename1.txt |

| File2 | Actual location\Filename2.txt | SFPT Location/Filename2.txt |

| File3 | Actual location\Filename3.txt | SFPT Location/Filename3.txt |

Step 2 is I filter the first file

Step 3 I select only the Filename and Url

Step 4 is the BLOB details - see below

Step 5 FTP

Set up your connection details as well

Step 6 union all output results into and excel output.

Hope this helps.

AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

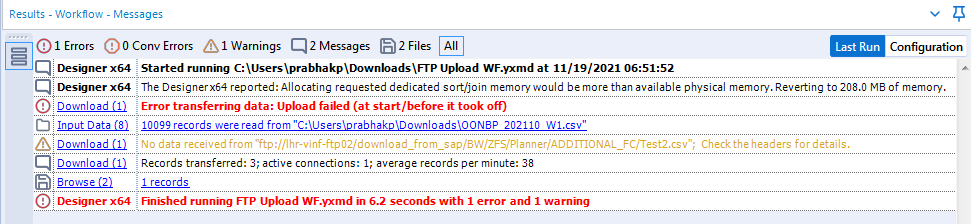

Thanks @jdsmith777

We are not getting truncated field warning after increasing the field size from Formula tool. However, we are still having issues while running the workflow correctly.

Please see attached screenshots of the error messages. Also, Can you please suggest how to attach the Alteryx workflow itself.

Thanks,

Piyush

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

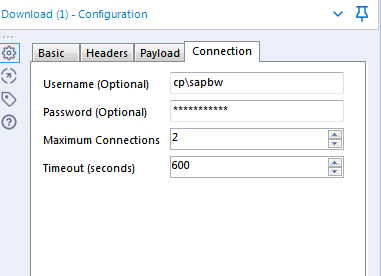

I think you highlighted the issue in your download tool. You're still not passing the BLOB of the files. It looks like you are passing Fields. Also, what does the Connection tab look like in the download tool. Are you supplying your credentials? You should be able to drag and drop the workflow file into the comment section that you type in to respond.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Hello @jdsmith777 ,

Thanks for your response.

Yes, we are entering credentials for FTP Server connection in the Connection Tab (Download Tool). Please see attached screenshot.

Sorry, I am still not able to upload the workflow here as it is showing an error message ' The file type (.yxmd) is not supported'.

Please advise.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

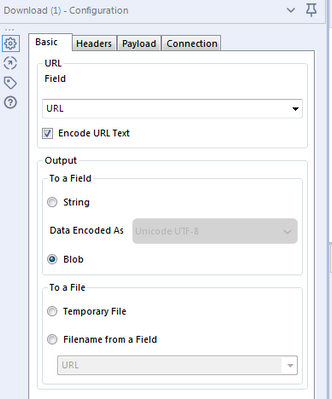

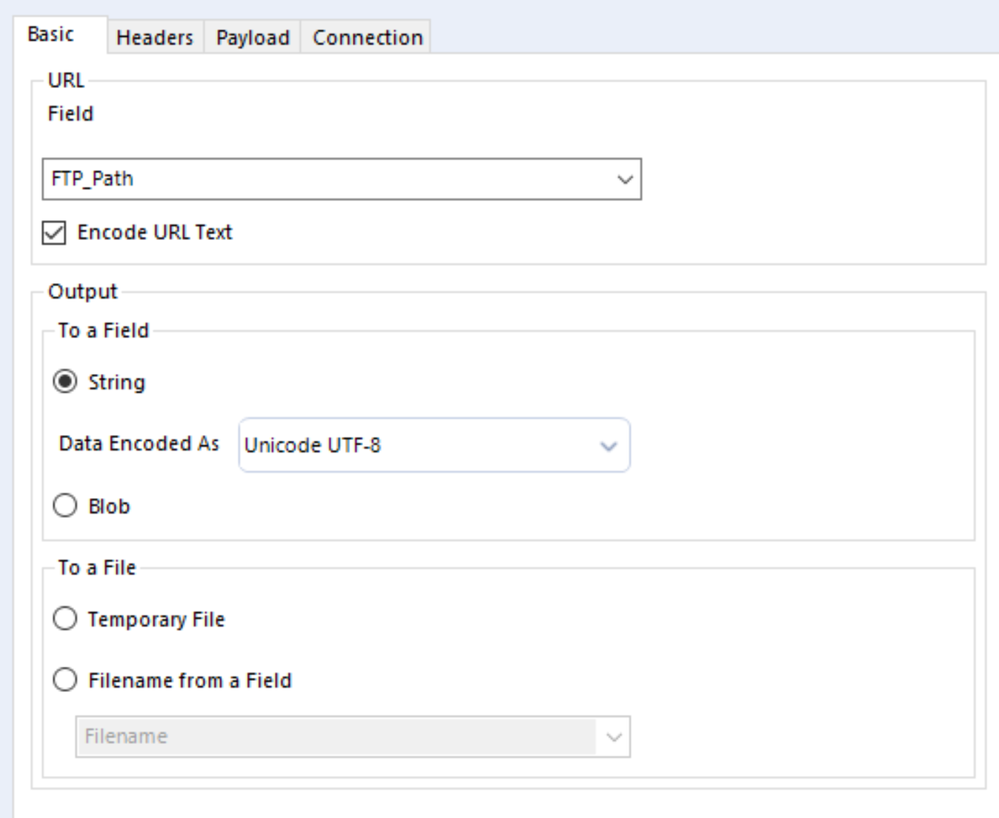

Hello @jdsmith777 ,

I am not sure what you meant by 'passing Fields' here. We are selecting BLOB here in the download tool - Basic Tab.

Could you please elaborate here ?

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

@PiyushPrabhakar Try zipping it. Sometimes that doesn't help, but often you can upload a .zip.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Set your Output to be a string.

This is what your Payload and Connection tabs should look like.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

I am getting the "No data received from... Check the headers for details" error. The file is not being uploaded and when I look at the browse tool, Download Data is NULL and Download Headers is empty. Not sure how to troubleshoot this. Suggestions? I was following the upload file from disk option.

Update... Turns out the file was actually getting uploaded properly and I just needed to refresh to see it. I guess the warning threw me off. Not sure what that warning message really means but it is working.

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Notify Moderator

Sounds like something might be wrong with the URL you're trying to use.

-

2018.3

17 -

2018.4

13 -

2019.1

18 -

2019.2

7 -

2019.3

9 -

2019.4

13 -

2020.1

22 -

2020.2

30 -

2020.3

29 -

2020.4

35 -

2021.2

52 -

2021.3

25 -

2021.4

38 -

2022.1

33 -

Alteryx Designer

9 -

Alteryx Gallery

1 -

Alteryx Server

3 -

API

29 -

Apps

40 -

AWS

11 -

Computer Vision

6 -

Configuration

108 -

Connector

136 -

Connectors

1 -

Data Investigation

14 -

Database Connection

196 -

Date Time

30 -

Designer

204 -

Desktop Automation

22 -

Developer

72 -

Documentation

27 -

Dynamic Processing

31 -

Dynamics CRM

5 -

Error

267 -

Excel

52 -

Expression

40 -

FIPS Designer

1 -

FIPS Licensing

1 -

FIPS Supportability

1 -

FTP

4 -

Fuzzy Match

6 -

Gallery Data Connections

5 -

Google

20 -

In-DB

71 -

Input

185 -

Installation

55 -

Interface

25 -

Join

25 -

Licensing

22 -

Logs

4 -

Machine Learning

4 -

Macros

93 -

Oracle

38 -

Output

110 -

Parse

23 -

Power BI

16 -

Predictive

63 -

Preparation

59 -

Prescriptive

6 -

Python

68 -

R

39 -

RegEx

14 -

Reporting

53 -

Run Command

24 -

Salesforce

25 -

Setup & Installation

1 -

Sharepoint

17 -

Spatial

53 -

SQL

48 -

Tableau

25 -

Text Mining

2 -

Tips + Tricks

94 -

Transformation

15 -

Troubleshooting

3 -

Visualytics

1

- « Previous

- Next »