Alteryx Designer Desktop Knowledge Base

Definitive answers from Designer Desktop experts.

Web Scraping

on 06-29-2016 12:11 PM - edited on 07-27-2021 11:39 PM by APIUserOpsDM

Web scraping, the process of extracting information (usually tabulated) from websites, is an extremely useful way to gather web-hosted data that isn’t supplied via APIs. In many cases, if the data you are looking for is stand-alone or captured completely on one page (no need for dynamic API queries), scraping is even faster than developing a direct API connection to collect it.

With the wealth of data already published on websites, easy access to it can be a great supplement to your analyses, whether to provide context or to supply the underlying data for asking new questions. Although there are a handful of approaches to web scraping (two detailed on our community, here and here), there are a number of great, free tools online (parsehub and import.io, to name a few) that can streamline your web scraping efforts. This article details one approach that I find particularly easy: using import.io to create an extractor specific to your desired websites, then integrating calls to it into your workflow via the live query API link the service provides. You can do this in a few quick steps:

1. Navigate to their homepage, https://www.import.io/, and “Sign up” in the top right hand corner:

2. Once you’re signed up to use the service, navigate to your dashboard (a link can be found in the same corner of the homepage once logged in) to manage your extractors.

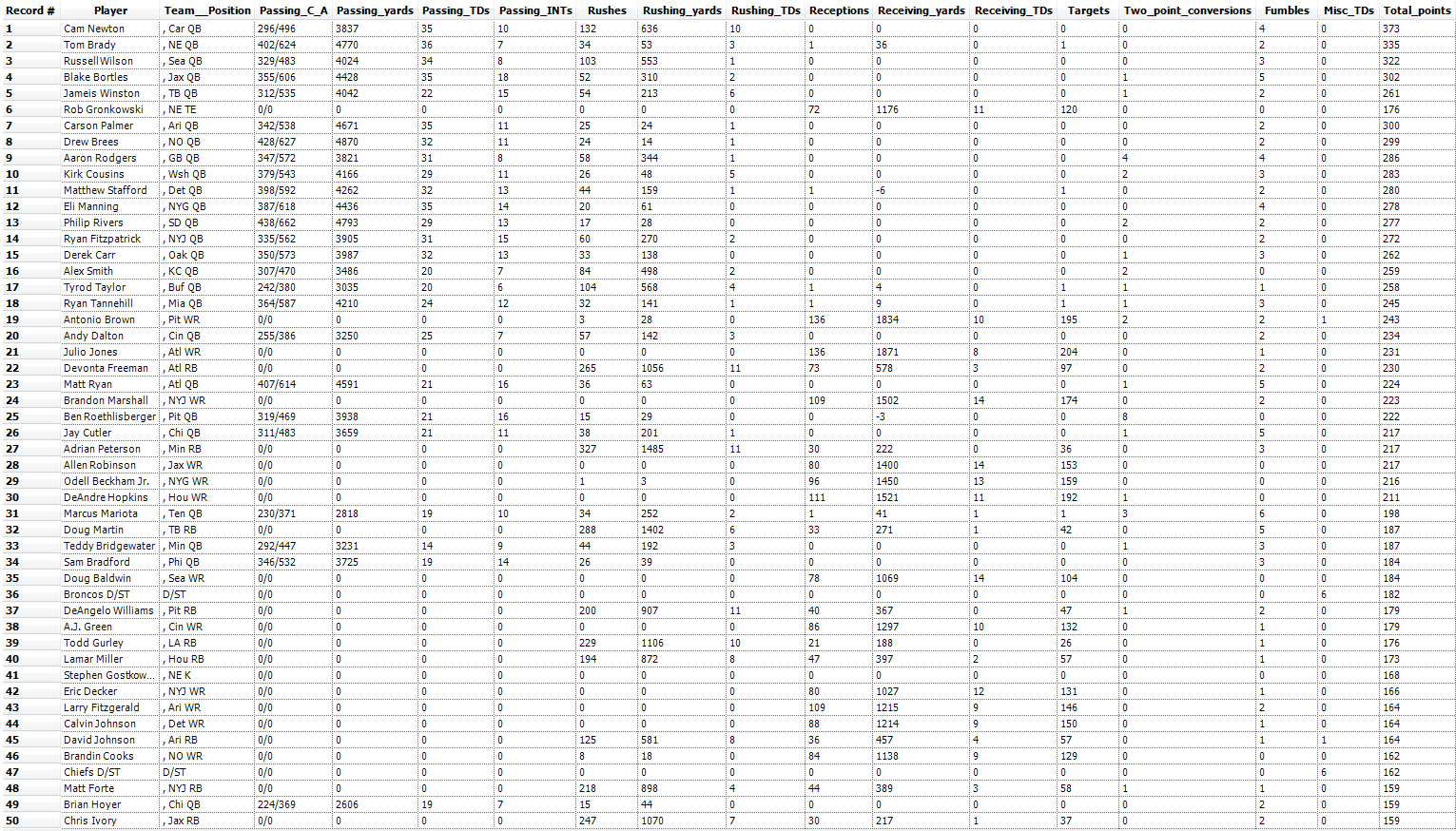

3. Click “New Extractor” in the top left hand corner and paste the URL that contains the data you’re trying to scrape into the “Create Extractor” pop-up. Since fantasy football drafting season is just ahead of us, we’ll use as an example the tabulated data on last year’s top scorers provided by ESPN, so you don’t end up like this guy (thank me later). We know our users go hard and the stakes are probably pretty high, so we want to get this right the first time, using an approach that is reproducible enough to supply the information needed to keep us among the top teams each year.

4. After a few moments, import.io will have scraped all the data from the webpage and will display it in their “Data view.” Here you can add, remove, or rename columns in the table by selecting elements on the webpage. This is an optional step that can help you refine your dataset before generating your live query API URL; you can just as easily perform most of these operations in the Designer. For my example, I renamed the columns to reflect the statistic names on ESPN and added the “Misc TD” field that escaped the scraping algorithm.

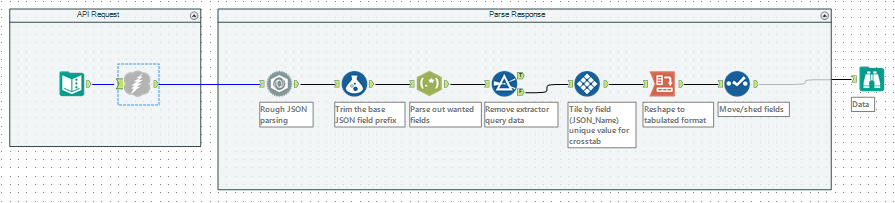

5. Once your data is ready for import, click the red “Done” button in the top right hand corner. You’ll be redirected back to your dashboard, where you can now see the extractor you created in the last step. Select this extractor and look for the puzzle piece “Integrate” tab just below the extractor name in your view. You can copy and paste the “Live query API” URL listed here (there’s also an option to download a CSV file of your data) into a browser window to copy the JSON response that contains your data, or you can call it directly from your workflow using the Download Tool (just be sure to de-select “Encode URL Text” when you specify the URL field).
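
For readers who want to see what step 5 amounts to outside the Designer, here is a minimal Python sketch. It is not import.io's client or documented API: `LIVE_QUERY_URL` is a made-up placeholder, and `fetch_json` and `flatten` are hypothetical helper names. It illustrates two things: why an already-encoded URL should not be encoded a second time (the reason for de-selecting “Encode URL Text”), and how a nested JSON response can be flattened into name/value rows, whatever shape the service actually returns.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

# Hypothetical placeholder: substitute the live query URL copied from your own dashboard.
LIVE_QUERY_URL = "https://example.com/live-query?url=https%3A%2F%2Fexample.org%2Fstats"


def fetch_json(url):
    """Request the URL exactly as given (no re-encoding) and decode the JSON body."""
    with urlopen(url, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


def flatten(value, prefix=""):
    """Yield (dotted_name, scalar) pairs for every leaf in a nested JSON value."""
    if isinstance(value, dict):
        for key, child in value.items():
            yield from flatten(child, f"{prefix}.{key}" if prefix else str(key))
    elif isinstance(value, list):
        for index, child in enumerate(value):
            yield from flatten(child, f"{prefix}.{index}" if prefix else str(index))
    else:
        yield prefix, value


# Encoding an already-encoded URL a second time turns each "%" into "%25",
# so the embedded target address no longer matches the one you copied.
double_encoded = quote(LIVE_QUERY_URL, safe=":/?=")
```

Calling `list(flatten(fetch_json(LIVE_QUERY_URL)))` on a real URL would give one row per leaf value, which can then be filtered and pivoted much like any other name/value table.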

That’s it! You should now have an integrated live query API for your webpage, along with an extractor that can be reused to pull data from other pages on that website. If you’d like to learn more about the approach, or how to customize it with external scripts, try the import.io community. The sample I used above is attached here in the v10.5 workflow Webscrape.yxmd; you just have to update the live query API with one specific to your account, extractor, and webpage URL. If you decide to give it a try with the example above, be sure to let us know if we helped your fantasy team win big!

I love ScrapingHub as well to get data-- free with awesome support.

Harbinger, I agree & I would give you a star if I could! :)

On a side note - is webscraping legal? We had an internal discussion about it & I did some research and findings are mixed...

@simon

My company is also investigating the legal risks behind webscraping. This came after my team had built a really powerful webscrape process with Alteryx! Based on what we've found, the answer depends on the website you are scraping from and what you are doing with the data you scrape. Take a look at any Terms and Conditions that may be present on the webpage that contains the data, or on the website that governs that webpage/data. Your legal team will hopefully be able to gauge the legality of scraping and storing such data based on what is written there.

You've wandered into a grey area. Generally, I do a good amount of reading of the website's legal documentation before I scrape. You may also want to reach out to your company's counsel before you scrape, if you have one! If you don't, ask a lawyer buddy; they should be able to help.

Often, the act of scraping is not what gets you in legal trouble (though it may be in a few cases); it is what you use the data for. For instance, if you simply want to make an informed purchase based on Amazon comments, you could scrape the site for a set of particular products, say coffee makers, run sentiment analysis on the data and choose which one you'd like waking your family up at 5:30AM each morning ;). However, if you were to scrape all of the possible coffee makers from Amazon and use that data for your own profit... say, to build a database of coffee makers that you then resell, that probably isn't cool. My general rule of thumb is: if you're using data from a web scraper to profit, it isn't cool. If you're using said data to inform a personal or purely academic decision, that's probably cool. Now, these are my opinions and not those of my company or their clients, and I am in no way any legal counsel; I'd recommend you talk to a legal expert either from the site you're scraping or from your own organization. I hope this helps!

--JH

I agree with both of you. It's definitely a grey area and maybe not everyone is aware of this!!! I've read some cases of Facebook vs, Ebay vs, and airline vs. Legality 'seems' to boil down to whether you're in a competitive space and making a profit. Each case/situation can be different...

So I feel scraping store addresses would be fine. Scraping stores with products and prices (can't get API access) maybe. In my case, this would be used to help the same company increase profits by porting data to FB ads. Either way, I'm glad I'm not the only one wondering about this. When in doubt - look at terms and talk to counsel.

Thanks for your input!

I think the integrate tab is not free anymore. On clicking the integrate tab it asks you to upgrade to the paid services. So I am guessing you can't get the "Live query API" for free on import.io

@princejindal Yes, I found the same when clicking the 'integrate' button.

@princejindal and @VegasBeans, thank you for bringing that change in the service to our attention! We'll start working on documenting alternative methodologies :)

Every website should tell you what you can scrape and what you cannot. You must refer to robots.txt on the server for details.
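
As an illustration of that check, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content, the `my-scraper` user agent, and the example.com URLs are made up for the example; a real file is served from the root of the site you intend to scrape. Note that robots.txt only expresses the site's crawling preferences, so the terms-of-use questions raised earlier in this thread still apply.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt content; a real file lives at https://<host>/robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# "my-scraper" is a hypothetical user-agent name; it falls under the "*" rules.
allowed_public = parser.can_fetch("my-scraper", "https://example.com/public/page.html")
allowed_private = parser.can_fetch("my-scraper", "https://example.com/private/page.html")
```

To check a live site instead of a pasted string, `RobotFileParser` also offers `set_url(...)` followed by `read()`, which downloads the file before you call `can_fetch`.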

@MattD (or any other user) I am trying to update my weekly challenge tracker and learn web scraping as well. I can't seem to get page 4 of my posts. This part of my workflow uses a Download tool pointed to the URL https://community.alteryx.com/t5/forums/recentpostspage/user-id/39450/page/4. The other pages (e.g. https://community.alteryx.com/t5/forums/recentpostspage/user-id/39450/page/3) come in fine, but on this page I am getting the following error after the dropdown data:

| Your request failed. Please contact your system administrator and provide the date and time you received the error and this Exception ID: 713EC718. Click your browser's Back button to continue. |

I sent an email to support@alteryx.com as well, as I think this is a webpage XML issue, but I'm not an expert in that area. The rest of the web scraping from the Alteryx Community in the workflow is working fine.
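
One way to narrow down a problem like this is to fetch each page separately and record which ones fail, rather than letting one bad page stop the run. The sketch below is only an illustration in Python, not a fix for the Community page itself: the URL pattern and the "Your request failed" text come from the post above, while `http_get`, `fetch_pages`, and the page range you pass in are assumptions.

```python
from urllib.error import HTTPError, URLError
from urllib.request import urlopen

# URL pattern taken from the post above.
BASE_URL = "https://community.alteryx.com/t5/forums/recentpostspage/user-id/39450/page/{page}"

# Text of the error page quoted in the post; a page can "succeed" at the HTTP
# level and still contain this message in its body.
FAILURE_MARKER = "Your request failed"


def http_get(url):
    """Return the decoded response body; raises HTTPError/URLError on failure."""
    with urlopen(url, timeout=30) as response:
        return response.read().decode("utf-8", errors="replace")


def fetch_pages(pages, get=http_get):
    """Fetch each page, keep going on errors, and record what went wrong."""
    results = {}
    for page in pages:
        url = BASE_URL.format(page=page)
        try:
            body = get(url)
        except (HTTPError, URLError) as exc:
            results[page] = {"ok": False, "error": str(exc)}
            continue
        if FAILURE_MARKER in body:
            results[page] = {"ok": False, "error": "error page returned in body"}
        else:
            results[page] = {"ok": True, "body": body}
    return results
```

Calling `fetch_pages(range(1, 6))` would then show at a glance whether only page 4 is affected, which is useful detail to include in a support request.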

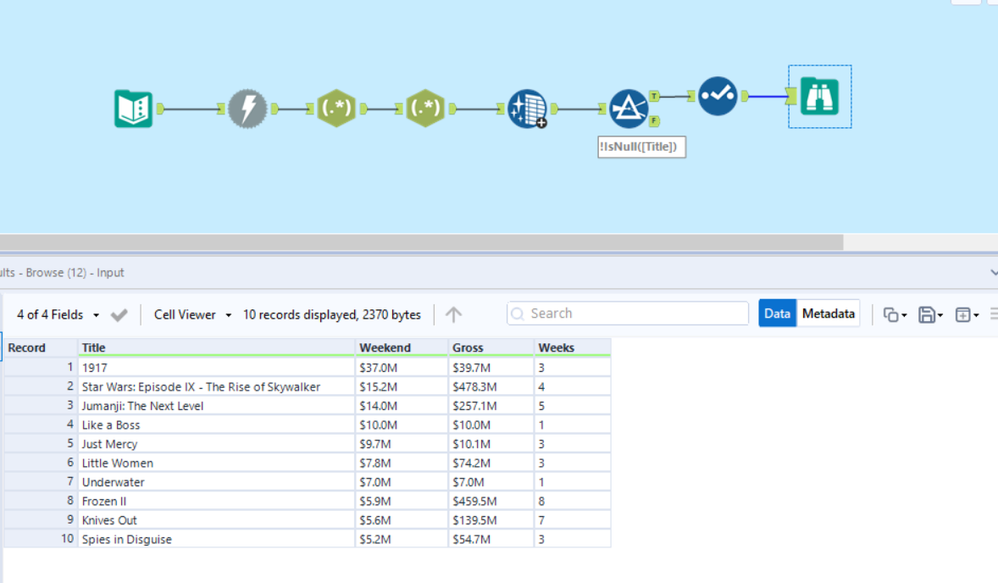

How do I import data from this link and get the columns Title, Weekend, Gross, and Weeks?

https://www.imdb.com/chart/boxoffice/?ref_=nv_ch_cht
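
As a general illustration of pulling named columns out of an HTML table, here is a minimal Python sketch using only the standard library. `SAMPLE_HTML` is made-up placeholder data that borrows the column names from the question; it is not the markup of the linked page, which may be structured differently or rendered by JavaScript. `TableParser` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collect the text of every <th>/<td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
            self._cell = None
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None
            self._cell = None


# Placeholder markup and values, for illustration only.
SAMPLE_HTML = """
<table>
  <tr><th>Title</th><th>Weekend</th><th>Gross</th><th>Weeks</th></tr>
  <tr><td>Example Film</td><td>$1.0M</td><td>$2.5M</td><td>2</td></tr>
</table>
"""

parser = TableParser()
parser.feed(SAMPLE_HTML)

# Treat the first row as the header and pair it with each following row.
header, *records = parser.rows
table = [dict(zip(header, record)) for record in records]
```

The same idea carries over to a workflow: download the page, isolate the table rows, split them into cells, and use the header row to name the fields.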

Hi @marwan1023,

Are you asking how to scrape this data? If yes, this is a pretty easy data pull. I already mocked up a workflow, but this thread does not allow workflows to be attached. Send me a private message and re-post this question in Designer Discussions so I can attach the workflow there.

Hi, I need help!

I am trying to fetch exchange rates (currency conversion, e.g. US vs. EUR, and so on) for 25 different countries. I am trying to get the data from the Yahoo website.

I would like to get this data into Excel, ideally the conversion rates for the 25 countries for today, last week, last month, and one year back.

Please advise... Thanks for your input!

| Conversion to US |
| --- |
| ARSUSD |
| AUDUSD |
| AYXUSD |
| CHFUSD |
| CLPUSD |
| CNYUSD |
| COPUSD |

See the URLs below for a solution that is marked as solved. You can use it as a starting point for your needs.

https://community.alteryx.com/t5/Alteryx-Designer/Yahoo-Web-Scraping-for-Stock-Prices/td-p/562581

https://community.alteryx.com/t5/Weekly-Challenge/Challenge-69-Web-Stock-Data/td-p/58944

Just FYI, import.io does not have free accounts anymore.
