Alteryx Designer Desktop Knowledge Base


Error with Simba Hive ODBC Driver: Invalid column reference


10-30-2020 11:42 AM - edited 04-12-2023 05:42 AM

Environment Details

The user gets the following error while reading data from Hadoop Hive with the Input tool, or while streaming it out with the In-DB Data Stream Out tool:

Input Data (1) Error SQLPrepare: [Simba][Hardy] (80) Syntax or semantic analysis error thrown in server while executing query. Error message from server: Error while compiling statement: FAILED: SemanticException [Error 10002]: Line 1:33 Invalid column reference 'a.storenum'

Alternatively, the user gets the following error when trying to write to Hadoop Hive with the Output tool:

Output Data (2) Unable to find field "test" in output record.

- Alteryx Designer

- All versions

- Simba Hive ODBC Driver with DSN configured

- Version 2.6 or greater

Diagnosis

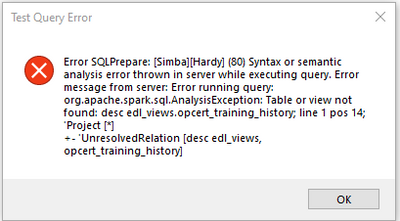

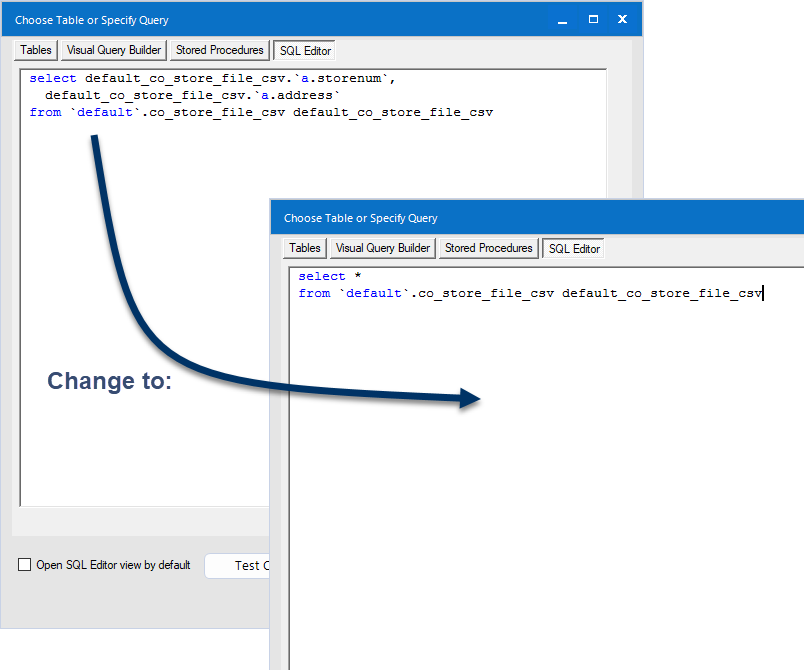

In the Visual Query Builder, the column names are prefixed with an 'a':

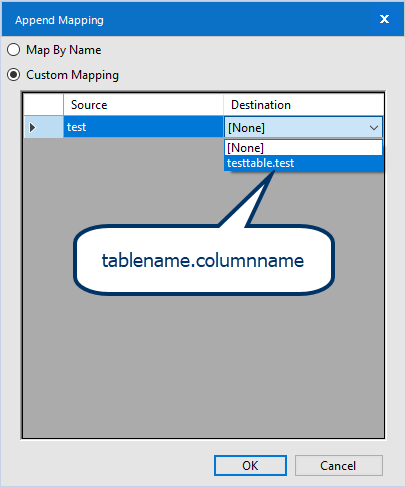

In the Output tool, you can see the table name precede the column name in the field map:

In the Select In-DB tool, the table name is part of the column name in the format tablename.columnname:

Note

The below resolutions apply only if the error appears in the context of this change to the column names.

Cause

Simba introduced a new feature in their driver that ensures that all column names are unique when reading in data. As a result, Alteryx cannot recognize the column names. It is a hidden feature that must be turned off if the user can't edit the query to avoid the error.
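To illustrate the mismatch described above, here is a minimal Python sketch that compares prefixed names with the bare names a workflow would expect. The function name `strip_table_prefix` and the sample column names are hypothetical; this is not part of Alteryx or the Simba driver, only an illustration of the tablename.columnname format.

```python
def strip_table_prefix(column_name: str) -> str:
    """Return the column name without a leading 'tablename.' prefix."""
    # rpartition splits on the last dot; when there is no dot,
    # the whole input ends up in the last element of the tuple.
    _, _, bare = column_name.rpartition(".")
    return bare


# Hypothetical names as they might appear with unique column names enabled.
prefixed = ["a.storenum", "a.test", "region"]
bare_names = [strip_table_prefix(name) for name in prefixed]
print(bare_names)
```

With the driver feature turned off, the names arrive without the prefix, so no such cleanup should be needed.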

Resolution

Solution A: Use Select * [...] in the query

1. In the Alteryx workflow, open the Input tool that is throwing the error.

2. Go to the SQL Editor tab.

3. Remove references to individual columns from the query and use the wildcard asterisk (*) instead to read in all columns in the table.

Solution B: Add a Server Side Property to the DSN

Note: This is not a global setting. It must be set for each DSN individually.

- Go to the Windows ODBC Data Source Administrator

- Open the DSN that is being used to connect for editing

- Click on Advanced Options

- Click on Server Side Properties

- Add the property hive.resultset.use.unique.column.names and set it to false

- Hit OK on all the windows to save the changes
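For connections that do not go through a saved DSN, the same property can possibly be passed in the connection string instead. The sketch below only builds such a string. It assumes the `SSP_` prefix that the Simba Hive ODBC documentation describes for server-side properties; the helper name `build_connection_string`, the host, and the port are placeholders. Check the documentation for your driver version before relying on it.

```python
def build_connection_string(base: dict, server_side_properties: dict) -> str:
    """Join key=value pairs with ';', prefixing server-side properties with SSP_."""
    parts = [f"{key}={value}" for key, value in base.items()]
    # Assumption: the driver reads keys of the form SSP_<property> as
    # server-side properties. Verify this for your driver version.
    parts += [f"SSP_{key}={value}" for key, value in server_side_properties.items()]
    return ";".join(parts)


conn_str = build_connection_string(
    # Placeholder connection details, not taken from the article.
    {"Driver": "Simba Hive ODBC Driver", "Host": "hive.example.com", "Port": 10000},
    {"hive.resultset.use.unique.column.names": "false"},
)
print(conn_str)
```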

Solution C: Add a Server Side Property to the Driver Configuration

Note: This is a global setting. It applies to ALL DSNs using the driver.

- Browse to the install folder for the Simba Hive ODBC driver. The default location is C:\Program Files\Simba Hive ODBC Driver

- Open the /lib folder inside the install folder

- Double-click DriverConfiguration64.exe to open the driver configuration dialog

- Click on Advanced Options

- Click on Server Side Properties

- Add the property hive.resultset.use.unique.column.names and set it to false

- Hit OK on all the windows to save the changes


Thanks for the solution, I went for the 2nd option, and it solved my problem immediately.

Before I understood the behaviour and changed the setting, I had to remove the prefix for every table I brought in.


So glad this helped!!


Hi Henriette,

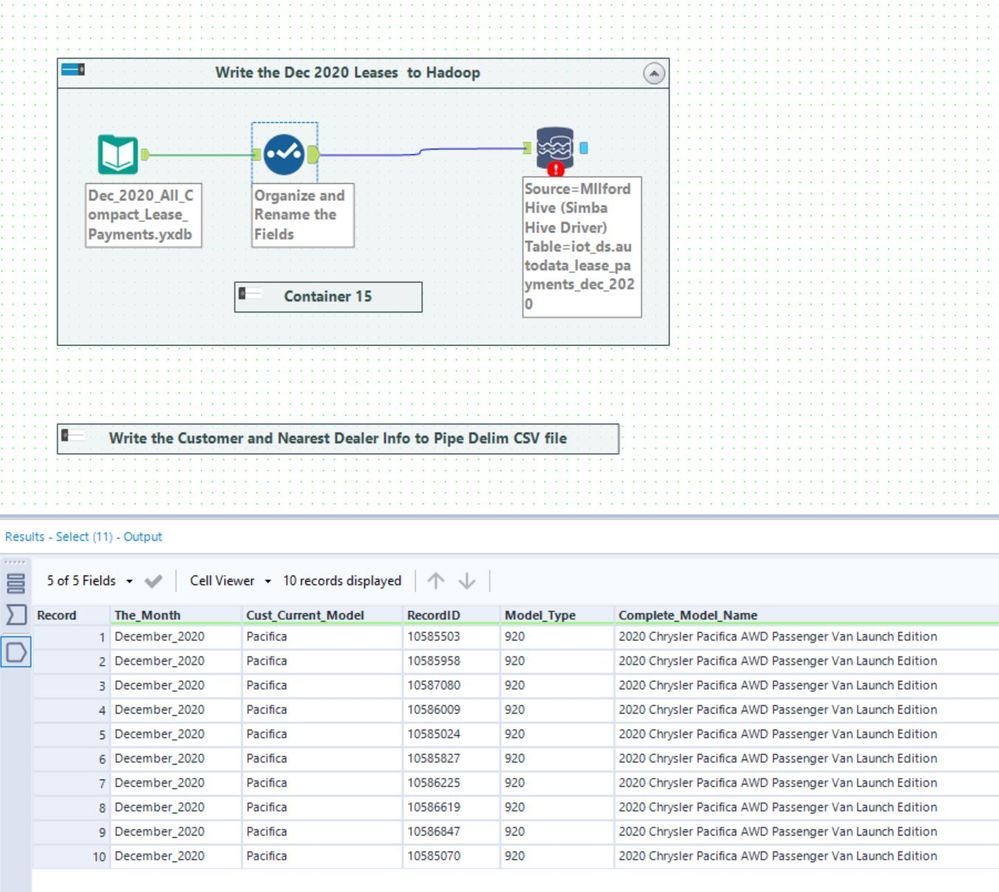

I'm having a similar problem. I'm trying to write a simple table to Hadoop. The table is getting created fine but the content of the data stream is being considered as "?".

To greatly simplify the situation, I've chosen only 10 records and one field. The error message shown in the second graphic is when I tried to write the first five fields. The same error happens whether I'm writing 1 field or all of them.

Do you have any insights? I've used this style of workflow in the past to write to Hadoop without any trouble.

Thanks,

Ken


Hi @Ken_Black

The (?,?,?,?,?) are placeholders for the data which is being sent in batches for faster loading. So it is ok that they are showing up.

I would start by pulling some ODBC logs (see here for instructions) and see if you can find something in there. If not, you can reach out to support@alteryx.com to have them help you.

Another thing you could check is the Advanced Options in the driver. We recommend leaving the "Use Native Query" option unchecked. With the option unchecked, the driver converts the query to HiveQL; otherwise, the driver assumes that the query is already in HiveQL, and you might get errors.


@Ken_Black

I ran into that error message in a different context, but what I did to resolve it was to add another Server Side Property:

hive.stats.autogather=false


I was getting the same error, so I applied Solution B provided by @Henriette, but I am still facing the same error. Below is the workflow I have created.

In the workflow above, the database has many columns, which I filter using the In-DB Filter tool. In the Formula tool I apply one date format rule, which I then want to group by using the Summarize tool.

But while doing this, I am getting the error below:

Error: Formula In-DB (75): Error SQLPrepare: [Simba][Hardy] (80) Syntax or semantic analysis error thrown in server while executing query. Error message from server: Error while compiling statement: FAILED: ParseException line 1:6901 cannot recognize input near 'LIMIT' '100' ';' in limit clause

Can someone please help me get this resolved?

Thanks in advance,

Rishabh


Hi @jainrishabh

Looking at the error message, there is a LIMIT clause in the formula tool that is breaking the syntax of the query and causing the error. I would review the content of the formula tool.


Hi Rishabh @jainrishabh,

I think it is because of the ";". If you delete it, it should work.

Steven


Thank you, steven4320555 and HenrietteH.

I have removed ';' and 'limit 100' from the query builder, and it worked for me.
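The cleanup described in this reply can be sketched in Python as follows. This is only an illustration of removing a trailing `LIMIT n` and `;` from query text before pasting it into a tool; the function name `strip_limit_and_semicolon` and the sample query are hypothetical, and the sketch does not parse SQL, so it only handles a clause at the very end of the string.

```python
import re

# Matches an optional trailing "LIMIT <number>", an optional ";" and any
# surrounding whitespace, anchored at the end of the query text.
_TRAILING = re.compile(r"(\s+LIMIT\s+\d+)?\s*;?\s*$", re.IGNORECASE)


def strip_limit_and_semicolon(query: str) -> str:
    """Remove a trailing LIMIT clause and/or semicolon from the query text."""
    # count=1 keeps the substitution to the single match at the end.
    return _TRAILING.sub("", query, count=1)


# Hypothetical query for illustration.
cleaned = strip_limit_and_semicolon("SELECT storenum FROM stores LIMIT 100;")
print(cleaned)
```

A LIMIT inside a subquery is left alone, because the pattern only matches at the end of the text.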


Hi HenrietteH,

I'm having a similar problem. I'm trying to import a table in Data Services Designer, but the import fails with a syntax or semantic analysis error (cannot recognize input near 'SHOW' 'INDEX' 'ON').

I tried to use server side properties to disable indexes, but it still doesn't work.

Do you have any idea?

Thanks,

Wassim


@FADLI

It would be easier to offer you assistance if you included the full text of the query along with the error message in context. If you don't want to include the details, then you can either edit it or show an image where any sensitive strings are replaced by xxx or blurred. You are trying to show the syntax, though, so try to do it in a way such that the reserved words (commands) and punctuation and operators are visible. For example, using the image in the article above,

is vastly more helpful than

I know this example is absurd, but I hope you get the idea.


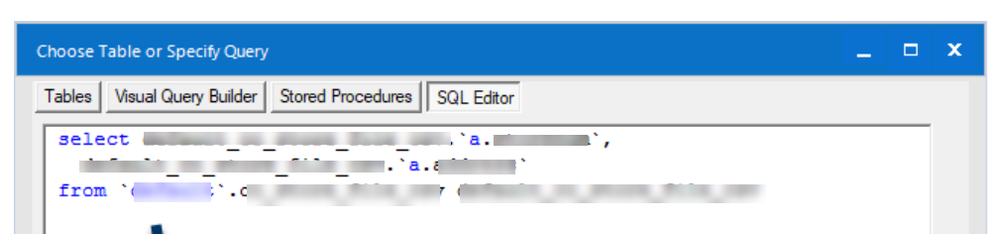

Hi @HenrietteH

Can you please help me understand why I am getting a similar error? I am connecting to a data lake via Simba, and I am able to extract all tables and columns with whatever query combination I like. But when I try to get table information by using DESC/DESCRIBE, this error pops up. Any idea why? I am new to Alteryx and not able to understand what the issue might be here.

Thanks in advance for your help here!


Hello,

Just for your information, we solved that issue by writing directly to HDFS instead of using ODBC.
