Alteryx Designer Desktop Ideas


Featured Ideas

Hello,

After using the new "Image Recognition" tool for a few days, I think it could be improved:

> by showing the image dimension constraints next to each of the pre-trained models;

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images);

> and at least by allowing the tool to use black-and-white images (I wanted to test it on MNIST, but the tool says it requires RGB images).
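The balanced split requested above could look roughly like the following Python sketch. It assumes each training example is a (path, label) pair. The function `balanced_split` and its parameters are hypothetical and are not part of the Image Recognition tool. The sketch undersamples every label to the size of the rarest one, then takes the same number of validation images from each label.

```python
import random
from collections import defaultdict


def balanced_split(samples, validation_fraction=0.2, seed=0):
    """Split (path, label) pairs so that every label contributes the same
    number of training images and the same number of validation images.

    Every label is undersampled to the size of the rarest label.
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append(path)
    if not by_label:
        return [], []

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    per_label = min(len(paths) for paths in by_label.values())
    n_validation = int(per_label * validation_fraction)

    train, validation = [], []
    for label in sorted(by_label):
        paths = list(by_label[label])
        rng.shuffle(paths)
        kept = paths[:per_label]  # drop surplus images of over-represented labels
        validation += [(path, label) for path in kept[:n_validation]]
        train += [(path, label) for path in kept[n_validation:]]
    return train, validation
```

Undersampling throws data away. Oversampling the rare labels or weighting the loss are common alternatives when images are scarce.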

Question: will you allow users to choose between CPU and GPU processing in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


Hello all,

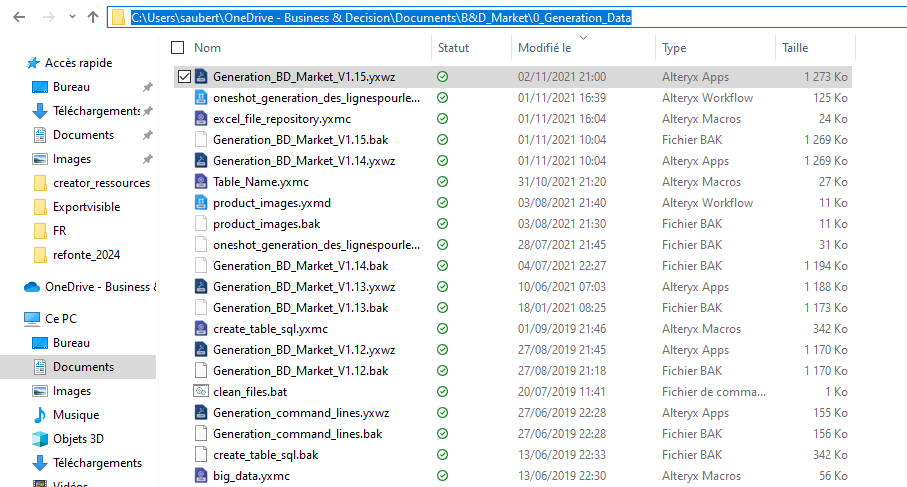

Here is the issue: I have a workflow in my OneDrive folder:

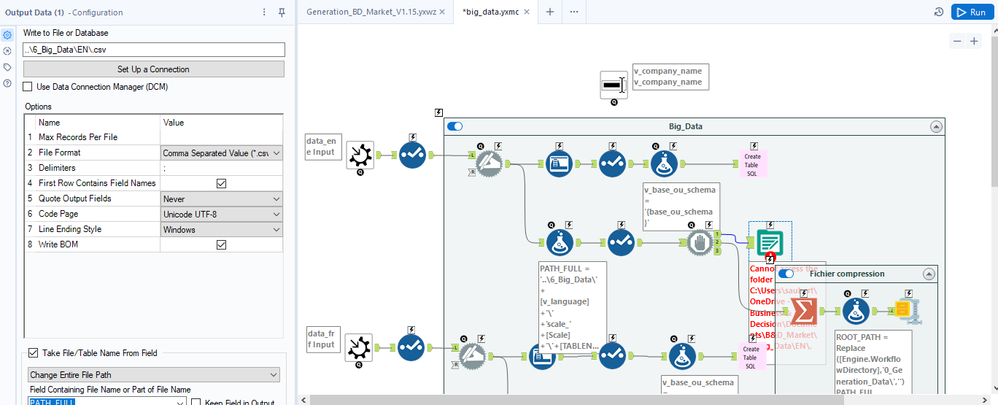

In that workflow, I use a macro that writes a file to a relative path (..\6_Big_Data\EN\.csv):

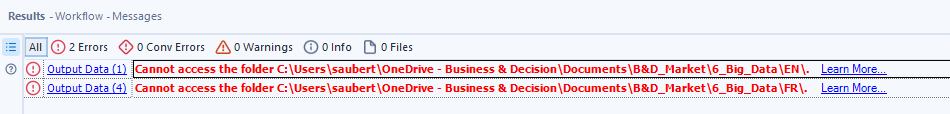

Strangely, it doesn't work, and the error message refers to a folder that doesn't exist (and that is not the one I set):

ErrorLink: Output Data (1): https://community.alteryx.com/t5/*/*/ta-p/724327?utm_source=designer&utm_medium=resultsgrid|Cannot access the folder C:\Users\saubert\OneDrive - Business & Decision\Documents\B&D_Market\6_Big_Data\EN\.
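One plausible explanation is that the relative path is resolved against a different base folder than the one expected, for example the macro's own folder rather than the workflow's. The error alone does not confirm this. The Python sketch below uses `ntpath` to show how the same `..\` path lands in different folders depending on the base. The folder names and the file name `output.csv` are hypothetical.

```python
import ntpath


def resolve_relative(base_dir, relative_path):
    """Resolve a Windows-style relative path against base_dir,
    collapsing '..' segments the way the file system would."""
    return ntpath.normpath(ntpath.join(base_dir, relative_path))


relative = r"..\6_Big_Data\EN\output.csv"  # hypothetical file name

# Hypothetical locations: the workflow's folder and a macro subfolder.
workflow_dir = r"C:\Users\me\OneDrive\Documents\Project\Workflows"
macro_dir = r"C:\Users\me\OneDrive\Documents\Project\Workflows\Macros"

print(resolve_relative(workflow_dir, relative))
print(resolve_relative(macro_dir, relative))
```

If the two printed folders differ, the same relative path points to a different place depending on which file it is evaluated from.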

I really would like that to work :)

Best regards,

Simon

I've been using the RegEx tool more and more. In one use case, it parses text only when the text matches a certain pattern, so sometimes it returns no results, and that is by design.

Having the warnings pop up so many times is not helpful when the miss is genuine and expected.

Just as the Union tool (and Dynamic Rename) can ignore warnings, could we have an ignore option for all Parse tools?

That’s the idea in a nutshell.

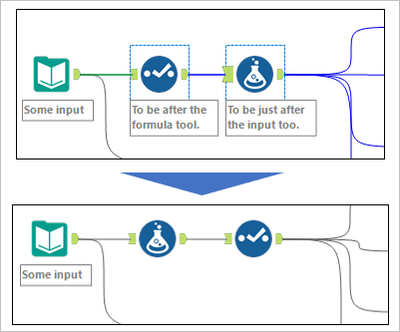

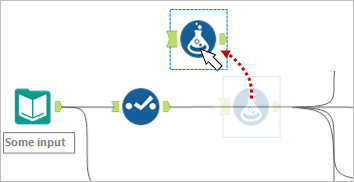

I sometimes have to swap (change the order of) two tools in a flow. It is a tedious task, especially when there are many connections around them. I would like to suggest two new features for this situation; either one would help.

Swap tools

Select two tools, right-click, and choose a "Swap" option.

Move and connect around

Drag a tool while holding down the Alt key (or similar) to lift it out of the stream and reconnect the tools around it. We can then drag and drop the tool into the right place.
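The following is a minimal sketch of what a "Swap" action could do internally. It assumes the workflow is modeled as a list of (source, target) connections between tool names. Real Alteryx connections also carry named input and output anchors (for example, the Filter tool's True/False outputs), which this sketch ignores. `swap_tools` is a hypothetical name.

```python
def swap_tools(connections, a, b):
    """Swap the positions of tools a and b in a workflow graph.

    connections is a list of (source, target) pairs. Every connection that
    touched a now touches b and vice versa, so an edge a -> b becomes b -> a.
    """
    def swap(tool):
        if tool == a:
            return b
        if tool == b:
            return a
        return tool

    return [(swap(src), swap(dst)) for src, dst in connections]
```

Relabeling both endpoints keeps every surrounding connection in place. This is why the swap stays a one-step operation however many connections the two tools have.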

I have been building tools that access API data requiring a valid token, and the token expires. I use iterative macros because I sometimes need to apply offsets and loop, but I also need to confirm that the token is still valid. There is a limit on how many times you can generate a token in a run, so I don't want to regenerate the token on each iteration. I can sometimes use the Filter tool to accomplish this, but I have to add awkward placeholder records so it does not error when no data is coming through. It would be nice to configure this the way you configure a Radio Button input: if the value is "YES", keep this part of the workflow on; if the value is "NO", turn this section off.
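Outside Alteryx, the usual pattern here is a token cache that requests a new token only when the cached one is missing or about to expire. This hedged Python sketch makes several assumptions. `fetch_token` is a hypothetical callable standing in for the real token request and returns `(token, lifetime_seconds)`. The 60-second safety margin is an arbitrary choice. The `clock` parameter exists only so the behavior can be tested.

```python
import time


class TokenCache:
    """Reuse an API token across iterations until it is about to expire.

    fetch_token is a caller-supplied function returning (token, lifetime_seconds);
    it is only called when no token is cached or the cached one is expiring.
    """

    def __init__(self, fetch_token, safety_margin=60, clock=time.monotonic):
        self._fetch_token = fetch_token
        self._safety_margin = safety_margin
        self._clock = clock
        self._token = None
        self._expires_at = 0.0
        self.fetch_count = 0  # how many tokens were actually requested

    def get(self):
        expiring = self._clock() >= self._expires_at - self._safety_margin
        if self._token is None or expiring:
            self._token, lifetime = self._fetch_token()
            self._expires_at = self._clock() + lifetime
            self.fetch_count += 1
        return self._token
```

Each loop iteration calls `get()`. Only the iterations that find the token expiring pay for a new request, which keeps the run under a per-run token limit.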

Hi, I have used various tools for some time and recently started using Alteryx. It would help if you could provide a feature to select particular components of a workflow so that, on clicking Run, only the selected components are executed. This would save a lot of configuration time and resources. If nothing is selected, the workflow would execute all tools, as it does today. I am open to testing this feature if the Alteryx team approves it.
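Running only a selection implies also running everything upstream of it, since a selected tool needs its inputs. The hedged sketch below assumes the workflow is a list of (source, target) connections. `tools_to_run` is a hypothetical helper that walks the connections backwards from the selection.

```python
from collections import defaultdict, deque


def tools_to_run(connections, all_tools, selected):
    """Return the tools that must execute so the selected tools can run.

    A selected tool needs every tool upstream of it, so we walk the
    connections backwards from the selection. With an empty selection,
    every tool runs, as it does today.
    """
    if not selected:
        return set(all_tools)
    upstream = defaultdict(list)
    for src, dst in connections:
        upstream[dst].append(src)
    needed = set()
    queue = deque(selected)
    while queue:
        tool = queue.popleft()
        if tool in needed:
            continue
        needed.add(tool)
        queue.extend(upstream[tool])
    return needed
```

In the test data, selecting Formula runs Input, Filter, and Formula, but skips Output and an unrelated Summarize branch.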

Alteryx offers the ability to add new functions (e.g., the Abacus add-in) and new tools (e.g., from the Marketplace, custom macros, etc.), which is a very valuable way to extend the capability of the platform.

However, if you add a new function or tool with the same name as an existing one, this can lead to a confusing user experience (a namespace conflict).

Would it be possible to add capability to Alteryx to help with this? Two potential approaches are listed below:

- Check for name conflicts when loading tools or when loading Alteryx, and warn the user, e.g., "The Coalesce function in package CORE Alteryx conflicts with the same function name in XXX package; this may cause mysterious behaviors."

- Potentially allow prefixes to address a function when names collide, e.g., CoreAlteryx.Coalesce or Abacus.Coalesce. If a function is used in an expression in an ambiguous way (e.g., "Coalesce"), give the user a simple dialog to pick which one they meant, and Alteryx can then clean up the expression itself.
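Both approaches above could be sketched as follows, assuming each package is described by the set of function names it defines. The package contents in the test are illustrative. `find_conflicts` and `resolve` are hypothetical helpers, not Alteryx APIs. The `LookupError` raised for an ambiguous bare name marks the point where a UI could show the "which one did you mean?" dialog.

```python
from collections import defaultdict


def find_conflicts(packages):
    """packages maps a package name to the function names it defines.
    Returns {function_name: [packages...]} for names defined more than once."""
    owners = defaultdict(list)
    for package, functions in packages.items():
        for name in functions:
            owners[name].append(package)
    return {name: sorted(pkgs) for name, pkgs in owners.items() if len(pkgs) > 1}


def resolve(name, packages):
    """Resolve 'Package.Function' or a bare 'Function' to (package, function).

    A bare name that several packages define raises LookupError, which is
    where the user would be asked to pick a package."""
    if "." in name:
        package, function = name.split(".", 1)
        if function in packages.get(package, ()):
            return package, function
        raise LookupError(f"{name} is not defined")
    matches = [pkg for pkg, functions in packages.items() if name in functions]
    if len(matches) == 1:
        return matches[0], name
    if not matches:
        raise LookupError(f"{name} is not defined")
    raise LookupError(f"{name} is ambiguous: defined in {', '.join(sorted(matches))}")
```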

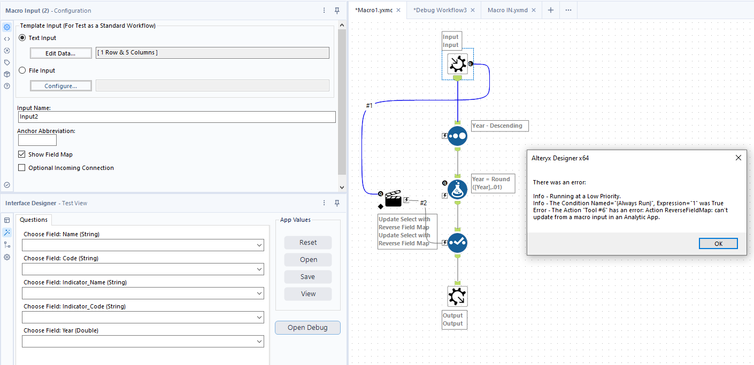

Hello,

Frequently, when using the 'Show Field Map' option in a Macro Input tool, I use an Action tool with the 'Update Select with Reverse Field Map' action later in the workflow. When I try to use the workflow debugger to test the macro, Designer throws the error 'Action ReverseFieldMap: can't update from a macro input in an Analytic App.' This makes sense, since there are no user-supplied field names in the input data stream to use for the reverse field mapping. However, it prevents me from using the workflow debugger. The workaround is to manually delete the Action tool before using the debugger; I can then test the macro to ensure it works properly. I don't expect the field names to be anything other than those I supplied as Template Inputs to the Macro Input. This workaround is cumbersome, especially when the workflow requires multiple reverse mapping actions, not to mention that I have to remember to undo the deletion when updating the workflow after testing.

I suggest an automated process that removes any Action tools using the reverse field map action when the debug workflow is built for testing, perhaps with a prompt indicating that they were removed. This would allow a smoother transition between macro development and debugging.
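The proposed automation amounts to filtering the debug copy of the workflow. This sketch models tools as plain dicts with hypothetical `id`, `type`, and `action` keys, and connections as (source, target) pairs. It does not reflect the real .yxmd XML schema. `REVERSE_FIELD_MAP` is likewise a made-up identifier. The returned list of removed IDs is what a prompt could display.

```python
# Hypothetical identifier for the 'Update Select with Reverse Field Map' action.
REVERSE_FIELD_MAP = "UpdateSelectReverseFieldMap"


def build_debug_copy(tools, connections):
    """Drop Action tools that use the reverse field map action, plus any
    connections touching them; return (tools, connections, removed_ids)."""
    removed = {
        tool["id"]
        for tool in tools
        if tool.get("type") == "Action" and tool.get("action") == REVERSE_FIELD_MAP
    }
    kept_tools = [tool for tool in tools if tool["id"] not in removed]
    kept_connections = [
        (src, dst) for src, dst in connections if src not in removed and dst not in removed
    ]
    return kept_tools, kept_connections, sorted(removed)
```

Because the original workflow is never modified, there is nothing to undo after testing.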

Please allow users to disable or ignore conversion errors in the SharePoint List Input tool.

In the SharePoint List Input, I see the same conversion error about 10 times. Then...

"Conversion Error Limit Reached".

Could you simply show the error once, or allow users to choose to ignore it? (The Union tool allows users to ignore errors.)

I am not using that SharePoint column in my workflow, yet I have to show my workflow to a third party within the company. It is SO annoying to see errors that do not apply to my workflow.
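The "show it once" behavior amounts to grouping identical messages and reporting a count. This is a hedged Python sketch. The message strings and the `ignore` flag are hypothetical and are not the SharePoint List Input's actual output or options.

```python
from collections import Counter


def summarize_errors(messages, ignore=False):
    """Collapse repeated conversion errors into one line per distinct message,
    in order of first occurrence. Returns an empty list when ignored."""
    if ignore:
        return []
    counts = Counter(messages)  # preserves first-seen order (Python 3.7+)
    return [f"{msg} (occurred {n} times)" if n > 1 else msg for msg, n in counts.items()]
```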

Just as there is a search bar in the Select tool, there should be one in the Data Cleansing tool.

Currently there are forecasting tools under Time Series (predicting the future). Could a backcasting function or tool be added to estimate historical data points?
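One common way to backcast is to reverse the series in time, forecast it with an ordinary forecasting method, and reverse the result. The sketch below applies that idea with Holt's linear-trend exponential smoothing, a simple method chosen here for illustration. It is not based on Alteryx's Time Series tools, and the smoothing parameters `alpha` and `beta` are arbitrary defaults.

```python
def holt_forecast(series, horizon, alpha=0.5, beta=0.3):
    """Holt's linear-trend exponential smoothing: forecast `horizon` steps ahead."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]
    for value in series[1:]:
        previous_level = level
        level = alpha * value + (1 - alpha) * (level + trend)
        trend = beta * (level - previous_level) + (1 - beta) * trend
    return [level + h * trend for h in range(1, horizon + 1)]


def backcast(series, horizon, **kwargs):
    """Estimate `horizon` points before the first observation by forecasting
    the time-reversed series, then restoring chronological order."""
    reversed_forecast = holt_forecast(series[::-1], horizon, **kwargs)
    return reversed_forecast[::-1]
```

For a perfectly linear series such as 10, 12, 14, 16, 18, the three backcast points continue the line backwards (4, 6, 8). Any forecasting model could be substituted for Holt's method in the reversal step.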

Hello all,

The reasons why I would like the cadence to go back to quarterly releases:

- For customers, a quarterly cadence means less waiting to benefit from new Alteryx features, so more value.

- A quarterly cadence is now an industry standard for data software.

- The current, unusual cadence creates a lot of frustration, and frustration is bad for business.

- For partners, the current situation means fewer customer upgrade opportunities, so less revenue but also less contact with customers.

Best regards,

Simon

It would be neat to add a feature to the Output tool that allows grouping rows, with all the data related to each group viewable under a drop-down on the selected field.

I've heard this is possible with Power Pivot, but it would be a nice feature in Alteryx.

Example: for a listing of all customers in a specific city, grouping by the "Neighborhood" column would output a list of all neighborhoods in the city, with a drop-down on each neighborhood to see its residents and their relevant data.
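The grouped view described above is essentially a group-by on one column, with each group's rows kept as the drop-down contents. This minimal sketch uses made-up customer rows. `group_rows` is hypothetical and only shows the data shape, not how an Output tool would render it.

```python
from collections import defaultdict


def group_rows(rows, key):
    """Group row dicts by the value of `key`, keeping first-seen group order
    and the original row order within each group."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row)
    return dict(groups)
```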

Thanks!

Hello All,

I believe a new tool needs to be added to Alteryx. I frequently encounter cases where zero records feed a workflow stream, which causes my workflows to fail. Because of this, I have to put in fail-safes to prevent it.

There should be a tool such that, if no records pass into it, nothing after that tool fails.

For example, within a workflow I use a Dynamic Input tool to pull a dynamic file. The file is not always there, and the workflow should be able to run whether or not the file exists. If the Dynamic Input and other tools could process zero records without failing, that would also solve the issue.

It would be nice to have a tool that blocks off the stream when zero records pass through it.
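In code terms, the requested tool is a guard that short-circuits the rest of the stream when no records arrive. This is a hedged Python sketch. `guard_empty`, `downstream`, and `on_empty` are hypothetical names used only for illustration.

```python
def guard_empty(records, downstream, on_empty=None):
    """Run downstream(records) only if at least one record arrived.

    When the stream is empty, the downstream steps are skipped instead of
    failing; on_empty, if given, is called so the skip can be logged."""
    records = list(records)
    if not records:
        if on_empty is not None:
            on_empty()
        return None
    return downstream(records)
```

Skipping is different from processing zero records. Tools downstream of the guard never run, so they cannot fail on a missing file or an empty schema.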

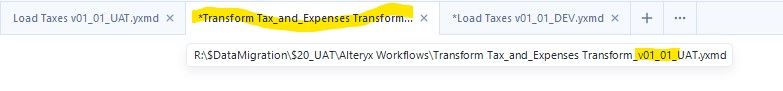

I would like to suggest that right-clicking a tab give the user the ability to EDIT the workflow name/path and save the update by pressing ENTER.

Cheers,

Mark

My Backstory:

I am currently what you would call an "independent" data analyst. I work for a major US-based bank and am trying to move into a data analytics role within the company. Many of our data analytics teams use (or are migrating to) Alteryx. I love the program myself, and I have now earned the Core certification. As I have yet to be hired as a data analyst, I am learning and building skills independently... but right now, data analytics is more of a hobby.

Issue:

I think there are a lot of people in my shoes: people moving into a data analytics role, former analysts who just want to keep doing what they enjoy, or freelancers. Alteryx is an amazing tool. But the big issue is that we can get the free certification/license... and after a while, we lose access, unless we find an employer who can purchase a license for us.

How it affects the parties involved:

As stated above, I love Alteryx, and I would absolutely love to continue using it. But I am not in a place right now where my company is considering me for a data analytics role. I also barely make enough to get by, and it would probably take me a lifetime to raise the funds for a full license on my own. In the end, if I never get a data analytics role with my current employer or with a new employer that could give me access, I would have to seriously consider abandoning Alteryx, as I cannot spend $5,000 on what is currently a hobby. After my learner license expires... what do I do?

As for how it affects Alteryx, I would think that having people choose whether or not to use the software for financial reasons isn't good for business.

A Possible Fix:

A monthly subscription license. You could have a lite version where the advanced tools are not available, or even a tiered subscription model: for example, a Core subscription with the core learner tools and perhaps some other tools that would allow someone to do basic analysis, then a more advanced tier with more tools at a higher monthly rate, and so on...

This would allow people like me to continue using Alteryx... and to spread the good word about it to others. It would also let people keep working toward truly mastering the software. I imagine this could also make Alteryx more of a name brand within the data community... and bring it to the attention of other corporations, which would then have users joining the company WITH the advanced skills to use the system already built in, rather than adopting software and then having to train the users and go through the growing pains of that. Again, we would-be monthly subscribers wouldn't need all the fancy tools.

To wrap it all up, I love Alteryx, and I wish I could continue using it. But as my learner license end date approaches, I have to think: how am I going to continue? Do I just hope and pray I eventually find a department or company that will let me back into the cool kids' club? Or do I look for something similar and move away from Alteryx simply because that is what I have access to?

I appreciate you taking the time to hear me out. 😀

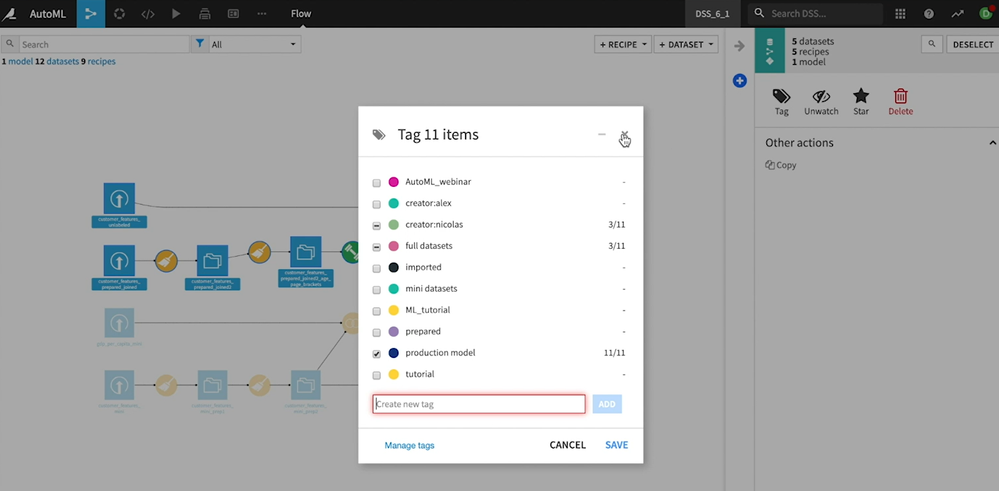

Hello,

I've been working with Dataiku DSS, which has a cool feature: you can tag tools and parts of a workflow, and then highlight the tagged tools.

Best regards,

Simon

Problem statement -

Currently we store our Alteryx data in the .yxdb file format. Whenever we want to fetch data, the whole dataset is first loaded into memory, and only then can we apply a Filter tool to get the required subset. This is a waste of time and resources.

Solution -

My idea is to introduce a YXDB SQL statement tool that could be used directly in a workflow to fetch only the required data from a .yxdb file. I hope this would reduce the overall workflow runtime and memory consumption, so users get the data they need much faster.
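The underlying idea is to apply the filter and column selection while reading, so that only the matching subset is ever held in memory. The .yxdb reader API is not public here, so this sketch uses CSV via Python's `csv` module purely as a stand-in format. `filter_while_reading` is a hypothetical helper. A real SQL-over-file tool could go further (indexes, skipping unneeded columns at the storage level), which this sketch does not attempt.

```python
import csv
import io


def filter_while_reading(lines, predicate, columns=None):
    """Yield only rows that satisfy `predicate`, optionally keeping only
    `columns`, without materializing the full dataset in memory."""
    for row in csv.DictReader(lines):
        if predicate(row):
            yield {c: row[c] for c in columns} if columns else row


sample = io.StringIO("id,region,amount\n1,EU,10\n2,US,20\n3,EU,30\n")
# Roughly: SELECT id, amount FROM sample WHERE region = 'EU'
for row in filter_while_reading(sample, lambda r: r["region"] == "EU", ["id", "amount"]):
    print(row)
```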

Hello all,

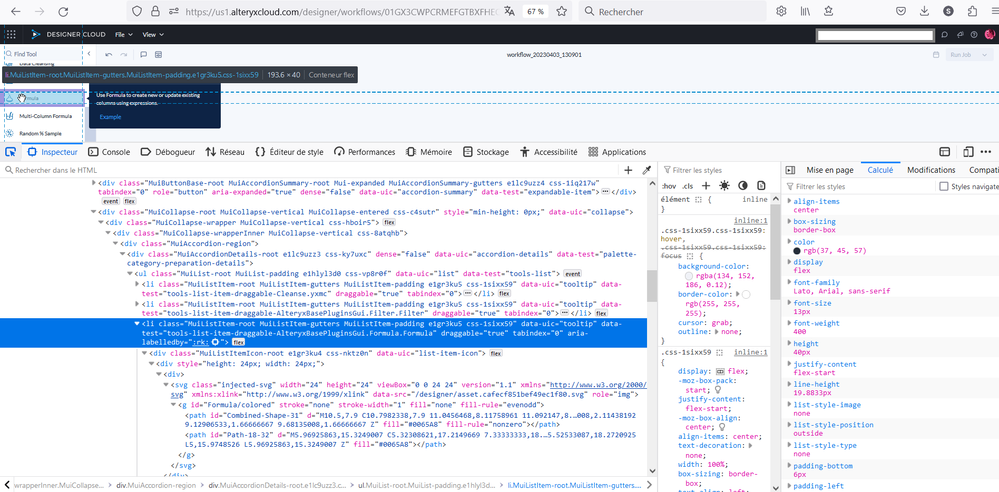

A few years ago, I asked for SVG support in Alteryx (https://community.alteryx.com/t5/Alteryx-Designer-Desktop-Ideas/svg-support-for-icon-comment-image-e... ). Now Alteryx Designer Cloud has other icons... already in SVG!

So I think it would be great to harmonize Designer and Cloud.

Best regards,

Simon

Hello all,

We all know that != is the Alteryx inequality operator. However, I suggest also implementing <> as an inequality operator. Why?

<> is a very common operator in many languages and tools, such as SQL, Qlik, or Tableau. It is far more intuitive than !=, and it would help interoperability and the copy/paste of expressions between tools, or between In-Database and in-memory mode.
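Supporting both spellings could be as simple as normalizing `<>` to `!=` before parsing, taking care not to touch string literals. The following is a minimal Python sketch under that assumption. It does not handle escaped quotes inside strings, and `normalize_inequality` is a hypothetical helper, not part of Alteryx.

```python
def normalize_inequality(expression):
    """Rewrite the SQL-style '<>' operator as '!=' outside string literals,
    so both spellings could be accepted by the same expression parser."""
    out = []
    quote = None  # quote character of the string literal we are inside, if any
    i = 0
    while i < len(expression):
        ch = expression[i]
        if quote:
            out.append(ch)
            if ch == quote:
                quote = None  # closing quote ends the literal
            i += 1
        elif ch in ("'", '"'):
            quote = ch
            out.append(ch)
            i += 1
        elif expression.startswith("<>", i):
            out.append("!=")
            i += 2
        else:
            out.append(ch)
            i += 1
    return "".join(out)
```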

Best regards,

Simon
