Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you, in the future, allow the user to choose between CPU and GPU usage?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


Hello all,

According to Wikipedia:

https://en.wikipedia.org/wiki/Embedded_database

An embedded database system is a database management system (DBMS) which is tightly integrated with an application software; it is embedded in the application.

It's often a single file or DLL that you can use inside an application without the user having to connect to it, or at least configure it (it's all done inside the application). So it's widely portable.

Why does it matter?

As of today, there is not a single example In-Database workflow, because all the supported databases need the user to:

1/ install an ODBC driver (most of the time, he won't have the rights to do so)

2/ configure an ODBC connection (sometimes, he doesn't have the rights to)

3/ configure a connection in Alteryx (OK, he can)

So it requires IT action, which can take a long time (in many organizations, several weeks!). And even with all of that, the users must be granted privileges to access the database, and the customer needs to develop its own examples and write its own specific documentation.

Well, this is not efficient.

What I suggest is for Alteryx to use an embedded database for training support and one-tool examples. SQLite seems good; maybe a more analytics-oriented one (like DuckDB) would be more efficient.

The requirements are, I think, the following:

-OpenSource and free

-Fast

-SQL compliant

-With a bulk load ability
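To illustrate the zero-configuration point, here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for whichever embedded engine might be chosen. The `sales` table, the sample rows, and the `total_by_region` helper are made up for illustration, and nothing here reflects how Designer's In-DB tools work internally.

```python
import sqlite3

# Hypothetical sample rows, standing in for a small training dataset.
SAMPLE_ROWS = [
    ("North", 120.0),
    ("North", 80.0),
    ("South", 50.0),
]


def total_by_region(rows):
    """Load rows into an in-memory SQLite database and aggregate them in SQL."""
    # No driver install, no ODBC DSN, no server: the database lives in memory.
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
        # executemany inserts all rows in one call (a simple bulk load).
        conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
        cursor = conn.execute(
            "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
        )
        return cursor.fetchall()
    finally:
        conn.close()


totals = total_by_region(SAMPLE_ROWS)
```

The whole round trip (create, bulk load, query) runs without any setup outside the script, which is the property that would make shipped In-DB examples possible.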

Best regards,

Simon

Add Unicode category to the cleansing tool

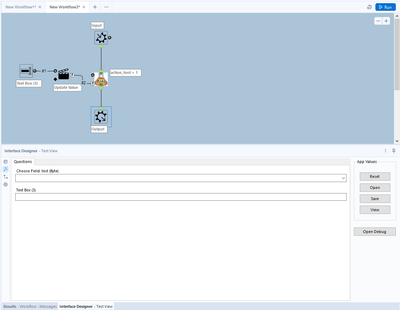

I have developed many workflows, macros, and apps, and I have always had to find a workaround for displaying information on the user config page or user interface.

For example, I want to input 'Default text' into the Text Box interface tool, but the problem is that it does not accept any external connection.

It would be great if this tool had a Q input anchor that could accept data from a connected tool (in either single-line or multi-line mode) or from an external input (such as a file for the Drop Down or List Box tools).

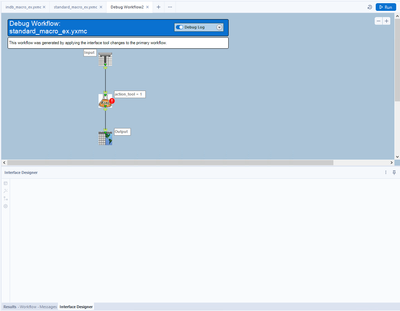

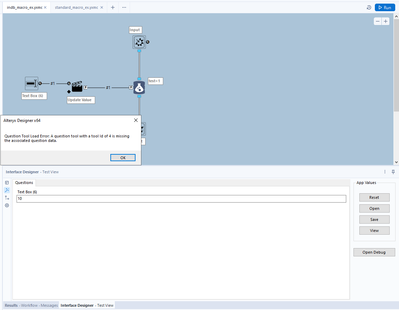

When making any type of macro, it's important to test the functionality of the macro via a debug. This is accomplished successfully with normal tools, however there's a bug that will not allow the user to debug In-DB macros that use either of the following standard Alteryx tools:

- Macro Input In-DB

- Macro Output In-DB

If either of these tools is included in the macro you are building, an error message appears and prevents you from opening a debug.

Error message: Question Tool Load Error: A question tool with a tool id of XXX is missing the associated question data.

Of course, Macro Input and Output tools do not require any specific action/question tool associated with them. This is a bug. A user pointed out the XML issue almost 3 years ago here:

In summary: "It appears that the tool itself inserts a hidden Question attribute into the XML which can also be seen in Workflow Configuration"

Source:

Examples....

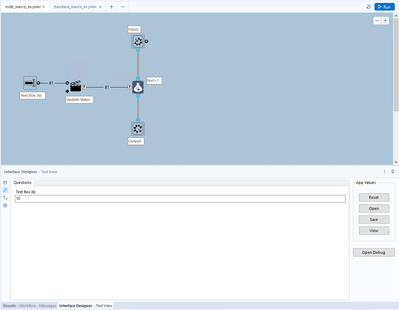

A normal macro, using standard tools:

After debugging a standard macro, the Macro Input/Output tools correctly change to a Text Input and a Browse tool. This allows the macro author to test the macro.

However, when trying the same thing with In-DB tools in a macro, an error message appears:

In-DB macro 1:

In-DB Macro error message (after clicking "Open Debug"):

It would be great if we could add example workflows to our macros, accessible in the same way as from the original tools (example hyperlink shown after single-clicking on a tool in the tool palette or when searching in the search bar).

There is a post on how to do it for custom tools: How to add an example link in the custom tool (alteryx.com). The approach described there has limitations and does not seem to work on macros: I was able to get the link to show up, but nothing happens when I click it.

My suggestion: make it as easy to add an example workflow to a macro as it is to change the logo or add a help link.

I would love a tool to be created for looking up a value in a table based on a condition. It could be called "Lookup." One input to the tool would be the lookup list, the other is the main database. Inside the tool you could enter functions that can query the lookup table and return the results either as an overwrite of an existing field in the main DB or as a new field in the main DB, similar to the options in the Multi-Row Formula tool.

Here is a link to my post in Community that explains the problem. The solution, in a nutshell, was to create a Join (which resulted in millions of additional rows), run the conditional formula, then filter to get rid of the millions of rows that were created by the Join so only those that met the condition remained (the original database rows).

Here is the text of my Community post describing my project (slightly modified for clarity):

Table 1: A list of Pay Dates (the lookup table)

Table 2: Daily timekeeper data with Week Start and Week End Date fields.

The goal: To find the Pay Date in Table 1 that is greater than the Week Start Date in Table 2 and no more than 13 days after the Week End Date in Table 2.

[Table 2: Week Start Date] < [Table 1: Pay Date]

and [Table 2: Week End Date] < [Table 1: Pay Date]

and DateTimeDiff([Table 1: Pay Date], [Table 2: Week End Date], 'Days') <= 13
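As a sketch of what such a conditional lookup could do without the intermediate cross join, the three conditions above can be evaluated against a sorted lookup list with a binary search. The function name `find_pay_date`, the sample dates, and the choice to return the earliest matching pay date are assumptions made for illustration.

```python
from bisect import bisect_right
from datetime import date, timedelta


def find_pay_date(pay_dates, week_start, week_end, max_days=13):
    """Return the earliest pay date that is after both week dates and at most
    max_days after week_end, or None if no pay date qualifies.

    pay_dates must be sorted in ascending order.
    """
    # Index of the first pay date strictly greater than both week dates.
    idx = bisect_right(pay_dates, max(week_start, week_end))
    if idx == len(pay_dates):
        return None
    candidate = pay_dates[idx]
    # If the earliest candidate is too far away, every later one is too.
    if candidate - week_end <= timedelta(days=max_days):
        return candidate
    return None


# Hypothetical lookup table (Table 1) and one timekeeper row (Table 2).
pay_dates = [date(2023, 1, 13), date(2023, 1, 27), date(2023, 2, 10)]
match = find_pay_date(pay_dates, date(2023, 1, 1), date(2023, 1, 7))
```

Each row of the main table costs one binary search in the lookup list, so no extra rows are created and no filter is needed afterwards.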

There are many different flows I could use this type of tool for that would save time and simplify the flow.

Thanks!

Our company has a need to link a new data source in Athena. We have been able to establish a connection using the input functionality however the connection is so slow it is unusable. We need to have Alteryx build an In Database option for Athena to allow us to link our data lake to Alteryx.

Hey all,

At present, if you have an existing canvas and you want to move to a DCM Connection - you are asked something like "this will reset all of your connection details - are you sure". If you have complex queries; or pre+post SQL - then you first have to copy all of this out into Notepad before you can convert to DCM and then reconfigure it all again.

However, if you are not using DCM, you can change data sources in Workflow Dependencies without losing your queries etc.

Could we revisit the user experience of changing to or from a DCM connection to eliminate this "start from scratch" phenomenon? If you are converting from an existing SQL ODBC or ODB or SSVB connection to a SQL connection via DCM, then it should allow you to make this conversion without losing your current configuration; and the same for any other database type.

cc: @mbarone

Hi

The action of the 'tab' key in configuration window recently appears to have changed from indenting to a navigation function.

The user should be able to select which action the tab key performs.

Alternatively, tab should indent and shift-tab (or alternative) navigate. I'm not the only one who would appreciate the choice.

PuffinPanic

- TEXT TO COLUMN TOOL : Check Mark for “Output/No-Output” next to “OUTPUT ROOT NAME”

Most of the time I don't want/need the column that I parsed. Provide a check box for if you want the root column output.

Hi!

Just thought up a simple improvement to the US Geocoder macro that could potentially speed up the results. I'm doing an analysis on some technician data where they visit the same locations over & over again. I'm doing a full year analysis (200k + records) & the geocoder takes a bit to churn thru that much data. In the case of my data though, it's the same addresses over & over again & the geocoder will go thru each one individually.

What I did in my process & could be added to the macro is to put a Unique tool into the process based off address, city, state, zip, then geocode the reduced list, then simply join back to the original data stream using a join based off the address, city, state, zip fields (or use the Record ID tool to create a unique process id to join off).

In my case, the 200k records were reduced to 25k, which Alteryx completed in under a minute, then joined back so my output was still the 200k records (all geocoded now).
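The unique-then-join-back pattern can be sketched in a few lines of Python. This is only an illustration of the idea: `geocode_with_dedup` and `fake_geocode` are hypothetical names, the field names are assumed, and the placeholder geocoder returns dummy coordinates rather than calling any real service or the US Geocoder macro.

```python
def geocode_with_dedup(records, geocode,
                       key_fields=("address", "city", "state", "zip")):
    """Geocode each distinct address once, then attach the result to every record."""
    cache = {}
    results = []
    for record in records:
        key = tuple(record[field] for field in key_fields)
        if key not in cache:
            # The expensive call happens only for addresses not seen before.
            cache[key] = geocode(*key)
        results.append({**record, "location": cache[key]})
    return results


calls = []


def fake_geocode(address, city, state, zip_code):
    """Placeholder geocoder: records the call and returns dummy coordinates."""
    calls.append(address)
    return (0.0, 0.0)


visits = [
    {"tech": "A", "address": "1 Main St", "city": "Springfield", "state": "IL", "zip": "62701"},
    {"tech": "B", "address": "1 Main St", "city": "Springfield", "state": "IL", "zip": "62701"},
    {"tech": "A", "address": "9 Oak Ave", "city": "Springfield", "state": "IL", "zip": "62702"},
]
geocoded = geocode_with_dedup(visits, fake_geocode)
```

All three visit records come back with a location, while the geocoder itself is called only for the two distinct addresses.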

Not everyone will have this many duplicates, but I'd bet most data has a few, & every little bit of time savings helps when management is waiting on the results haha!

Alteryx gods,

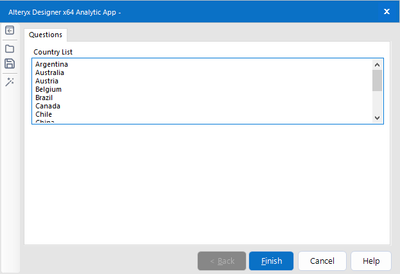

It would make me even happier than I am now if it were possible to tailor the completion messaging in the Interface Designer when an analytic app completes.

Currently, we use rendering etc, but sometimes we simply want to be able to create a bespoke completion message.

My example is as follows:

In the app you have the option to download files, or have them emailed to you. If you choose download, the final display is the render tool with the documents listed, however, if you choose email I want nothing to show but the final window with the message "Please check your email" or something. There may be more than one option, and so being able to dynamically change these messages would be very useful.

Help me Alteryx gods, you're my only hope.

*beep boop boop*

Currently when debug mode is entered in analytic apps and macros, the direct inputs to the app/macro when the error occurred are hardcoded into a workflow in debug mode, so that errors can be more easily detected.

However, inputs into analytic apps also create global variables which can be used in the more code-heavy aspects of Alteryx such as the Formula Tool. These are not updated in the same way which can cause workflows to break in debug mode - it would be really helpful if global variables could be updated in the same way as the inputs into tools are.

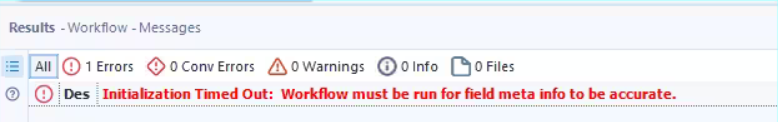

Hello all,

Sometimes, when retrieving your tables' metadata takes too long, you can get this message:

Initialization Timed Out: Workflow must be run for field meta info to be accurate.

From what I understand, it's Alteryx and the source system that drive the timeout value. However, I have some cases where the long time is "normal", and that really hurts the user experience.

So, I would like the ability to change the default value in the settings.

Best regards,

Simon

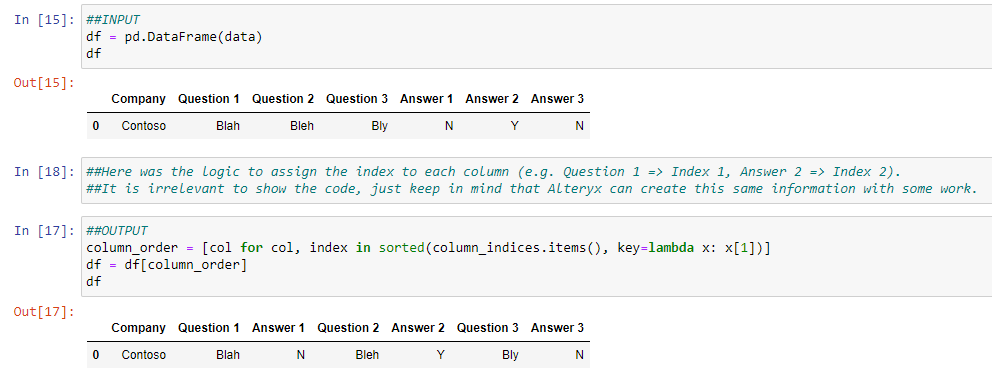

Problem: In certain workflows, it becomes necessary to arrange columns in a specific order for the output. While achieving the desired order for a fixed number of columns is feasible using the select tool, difficulties arise when dealing with dynamic outputs that introduce new columns during each workflow run.

Example: Consider the following scenario: the INPUT data for the select tool includes a set of Question/Answer columns. However, with every run of the workflow, new columns of this type are introduced. The challenge is to ensure that Question N and Answer N columns are grouped together in the OUTPUT dynamically. Unfortunately, this task is not easily accomplished using the current capabilities of Alteryx.

INPUT:

| Company | Question 1 | Question 2 | Question 3 | Answer 1 | Answer 2 | Answer 3 |

| Contoso | Blah | Bleh | Bly | N | Y | N |

DESIRED OUTPUT:

| Company | Question 1 | Answer 1 | Question 2 | Answer 2 | Question 3 | Answer 3 |

| Contoso | Blah | N | Bleh | Y | Bly | N |

With Python/Pandas, this problem can be easily resolved by assigning index values to each column and then sorting the columns based on the assigned index:

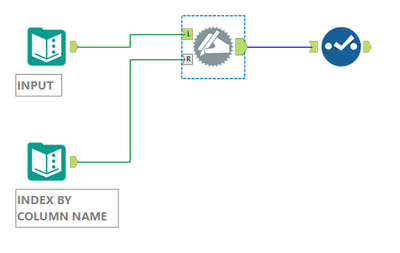

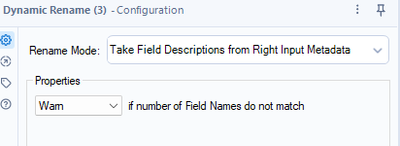

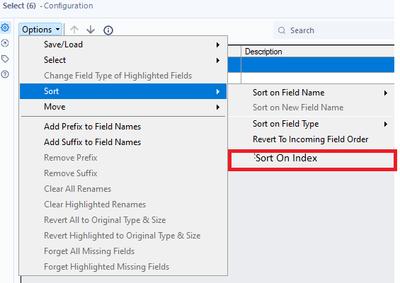
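One way to express the index-and-sort idea in text form, as a rough sketch rather than the poster's exact code: give each column a sort key derived from its name, then sort on that key. The helper name `reorder_columns` and the assumption that the dynamic columns are always named "Question N" or "Answer N" are illustrative only.

```python
import re

# Assumed naming convention for the dynamic columns.
_PATTERN = re.compile(r"^(Question|Answer)\s+(\d+)$")
_KIND_RANK = {"Question": 0, "Answer": 1}


def reorder_columns(columns):
    """Keep fixed columns first (in their original order), then group
    Question N / Answer N pairs by N."""

    def sort_key(item):
        position, name = item
        match = _PATTERN.match(name)
        if match is None:
            # Fixed columns sort before all Question/Answer columns.
            return (0, 0, position)
        kind, number = match.groups()
        # Numeric sort on N, so "Question 10" comes after "Question 9".
        return (1, int(number), _KIND_RANK[kind])

    return [name for _, name in sorted(enumerate(columns), key=sort_key)]


ordered = reorder_columns([
    "Company", "Question 1", "Question 2", "Question 3",
    "Answer 1", "Answer 2", "Answer 3",
])
```

With a pandas DataFrame, the resulting list can be used to select the columns in the new order, for example `df[reorder_columns(list(df.columns))]`. New Question/Answer pairs are picked up on each run without touching the configuration.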

So, based on the Python solution, it would be great if Alteryx could do the same. I personally think that if the Dynamic Rename tool could hold the index info, and the Select tool could also hold a sort option, this would work.

Dynamic Rename: Can already hold Description info, could hold index info.

Select tool: Could sort by index and hold this info when the workflow is saved.

Hope this all makes sense.

Thanks.

In the Record ID tool, provide additional options for the creation of the ID; specifically, allow the ID to be generated in intervals.

For example, Record ID every 10, meaning instead of creating an ID of 1, 2, 3, 4, 5 ... you could create an interval of your choosing. The most obvious would be 10 or 100, so your IDs would then be 10, 20, 30, 40 ... or 100, 200, 300, 400, 500 ... etc.
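The requested behaviour amounts to a start value plus a step. A small Python sketch of that sequence, where `record_ids` and its parameter names are hypothetical and not options of the existing tool:

```python
from itertools import count, islice


def record_ids(start=1, step=1):
    """Return an endless iterator of record IDs: start, start + step, ..."""
    return count(start, step)


# First five IDs with an interval of 10.
ids_by_ten = list(islice(record_ids(start=10, step=10), 5))
```

Leaving gaps between IDs like this makes it possible to insert rows later without renumbering the existing ones.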

How about a “Temporarily Disable Tool” feature that disables a single tool? It would work the same way as "Disable All Tools that Write Output", but would apply only to the specific tool you select, so you would not have to delete or cut the tool and connect around it (which can be tedious). The feature could be applied to various preparation tools (and potentially more) to help save time.

For example, there are occasions when I have a filter applied and want to temporarily disable it just to see all results. This has been the case when I wanted to include hospital wards I was filtering out, to review them in the summarized totals.

The disabled tool could have the same hashed marking as with "Disable All Tools that Write Output". The "Temporarily Disable Tool" feature could be listed when the specific tool is right-clicked. The workflow could also show a prompt to remind the user that a tool is "disabled".

Edit: Spelling

This is a feature request based on my comment submitted here: Email Tool: Format "From" field to accommodate "Di... - Alteryx Community

It would be great to provide an option in the Designer Email Tool to allow us to specify a "Display Name" when sending emails. The "Display Name" is a common part of the email specs listed here: RFC2822 - Section 3.4 (Address Specification)

The email gateway/service that I'm using will send emails, but the "From" line will reflect only the email address.

For example, it will show an email as being from "john.smith@example.com" where I would love for it to show up as from "Smith, John". This would make emails appear like other internal company emails in our company Outlook clients, and in general provides more useful flexibility for the Email tool.

Many other email clients support using Display Name, but it appears that Alteryx currently doesn't.

The format of an email address with Display Name is something like "Smith, John" <john.smith@example.com> (with or without the quotes).
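For reference, Python's standard library already builds and parses this format, which shows the quoting behaviour a "Display Name" option would need. This is a sketch of the address format only, not of how the Designer Email tool works; the name and address are the example values from above.

```python
from email.utils import formataddr, parseaddr

# Build a From value from a display name and an address.
# formataddr adds the quotes because the name contains a comma.
from_header = formataddr(("Smith, John", "john.smith@example.com"))

# Parsing splits the value back into its two parts.
display_name, address = parseaddr(from_header)
```

The quotes matter here: without them, the comma in "Smith, John" would be read as a separator between two addresses.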

Ability to ‘name’ the point created in the “Create Points” tool.

Instead of sticking a select tool after it to rename it from ‘centroid’ to Starting Location or Store location or whatever.

Hi,

Would be helpful to have an Input and Output Tool for ProjectOnline like the SharePoint and OneDrive Tools.

This way we can read the projects in a tabular form and automate our project management tasks.

Thank you.
