Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (in order to have an equivalent number of images for each label),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


I am working with complex workflows which use multiple files as input, located on network drives. Input tools are Input Data, Directory, Wildcard Input, Wildcard XLSX Input (from CReW macros).

Designer is regularly very slow when I work on these workflows, and the tools mentioned above run slowly, especially when I work from home. Switching off Auto Configure did not really help because the column list sometimes does not converge even after pressing F5 multiple times, and when actively working on workflows, I have to press F5 all the time...

In order to speed up both working on workflows and running them, I would like to propose a function "Cache all File Inputs" which loads and caches all file inputs at once. To reach this state today, I have to use Cache and Run Workflow once for every file input.

When loading multiple sheets from an Excel file with either the Input Data tool or the Dynamic Input tool, I usually want a field to identify which sheet the data came from. Currently I have to import the Full Path and then remove everything except the SheetName.

It would be great if there was an option to output the SheetName as a field.
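Until such an option exists, the current workaround can be sketched in Python. This is only an illustration: it assumes the Full Path value has the shape `path|||` followed by the sheet name, optionally quoted and ending in `$`, and the function name `sheet_name_from_full_path` is hypothetical. Adjust the parsing if your values look different.

```python
def sheet_name_from_full_path(full_path: str) -> str:
    """Extract the sheet name from a value shaped like 'book.xlsx|||`Sheet1$`'.

    The '|||' separator and the trailing '$' are assumptions about how the
    Full Path field is populated for Excel inputs.
    """
    _, separator, sheet = full_path.partition("|||")
    if not separator:
        return ""  # no sheet part in this value
    sheet = sheet.strip("`'")  # drop optional quoting around the sheet name
    return sheet[:-1] if sheet.endswith("$") else sheet


print(sheet_name_from_full_path(r"C:\data\sales.xlsx|||`Q1 Sales$`"))
```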

Alteryx Designer is slow when using In-DB tools.

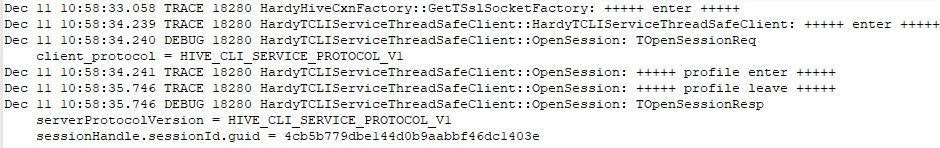

We use Alteryx 2019.1 on Hive/Hortonworks with the Simba ODBC Driver configured with SSL enabled.

Here is a comparison of In-DB and in-memory:

We found that Alteryx opens a new connection for each action:

- First link to joiner = 1 connection.

- Second link to joiner = 1 connection.

- Click on the canvas = 1 connection.

Each connection takes about 2.5 seconds, which really slows down Designer:

Please keep the first connection alive instead of closing it and creating a new one for each action in Designer.

I love this tool, but think it would be improved by including an option to create a column per delimiting character. This could be added in the number of columns selector box. In the case where 1 row has more delimiters than another, null columns can be created. Without this option you have to Regex count the delimiters, select the max and then embed the Text to columns tools in a macro and then pass the max columns as a param. Would be nice to resolve all this in the main tool.

Thanks, nick
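The behaviour requested above (one column per delimiter, with nulls where a row has fewer delimiters) can be sketched in Python. This is a minimal illustration of the idea, not how the Text To Columns tool works internally; `split_to_max_columns` is a hypothetical helper name.

```python
def split_to_max_columns(rows, delimiter=","):
    """Split every row on the delimiter and pad short rows with None."""
    split_rows = [row.split(delimiter) for row in rows]
    # The widest row decides how many output columns are needed.
    width = max((len(parts) for parts in split_rows), default=0)
    return [parts + [None] * (width - len(parts)) for parts in split_rows]


table = split_to_max_columns(["a,b,c", "d", "e,f"])
for row in table:
    print(row)
```

The maximum is computed in one pass over the data, which is the step that currently requires a separate Regex count and a macro parameter.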

It would be great if we could have a Windows Active Directory data connector tool added to the standard Alteryx toolset.

MS Excel Power Query and PowerBI both can connect to Active Directory for use as a data source, but are both very cumbersome to use. Having a connector in Alteryx that can read AD data into a workflow would be super helpful for a long list of use cases. A couple that are top of mind for me are:

-Leveraging group membership info for dynamic distribution of reports or datasets

-Being able to build reporting and dashboards about the organization (useful for Tech audit, HR, etc.)

I've seen links to an old project on GitHub where someone started development on this, but the method (just copy these random .dlls into your program directory) is seriously frowned upon by any enterprise IT. It would be great if Alteryx could pick up that work, polish it a bit and add it to the actual Alteryx Designer toolset.

Many files I use are in .xlsb format.

I work with data where milliseconds are my saviour when I count distinct datetimes to get the number of events. Alteryx ignores the millisecond part (as do lots of other BI tool providers; I don't know where the idea comes from that milliseconds are not needed). Yes, I can convert it to a string, but it's not best practice to create duplicate fields just so that I have a date part for date-related calculations (plotting, time difference) and a string value for quick and easy counting.

- moving or renaming a file after importing it

- deleting a file after importing it

- moving or copying a file after successfully exporting it

- writing a temporary file (i.e. batch file for RunCommand tool), then deleting it when finished

The JOIN tool could use some love. Let's consider merging the JOIN and UNION functions into a single tool. Instead of strictly L, J, and R outputs, we could have an option to allow for all standard SQL joins:

- Cross Join (Warning!!!)

- Inner Join (boring)

- Left Outer Join (saves time configuring Union)

- Right Outer Join (saves time ...)

- Full Outer Join (saves time ...)

Being able to JOIN on case-insensitive values is a big bonus (resisted urge to BOLD and change font size).

Being able to JOIN on date-range is often requested.

Being able to JOIN on numeric-range is often requested.

If we are combining tools, getting UNIQUE on L or R (or both) inputs would also save time. Most JOIN errors are because the incoming (R) data contains duplicates by KEY.

cheers,

Mark

Hi @NicoleJ

Working in the accounting department, this has come up too many times now to ignore!

Would LOVE LOVE LOVE to see a new formula available in the DateTime formula suite that mimics the function of the EOMONTH() formula when working with dates in Excel.

The beauty of the EOMONTH() formula in Excel is that I can just give it a date, and then tell it how many months in the future or past I would like it to add/subtract... Alternatively, in Alteryx, this can require 2 or 3 nested DateTime functions to arrive at the same answer.

Example: To find the end of the month 2 months in the future from today's date, I would use the following formula...

Excel = EOMONTH(Today(),2)

Alteryx = DateTimeAdd(DateTimeAdd(DateTimeTrim(DateTimeToday(),"month"),3,"months"),-1,"days")

Seems much more complicated than it needs to be in Alteryx, and easy to get lost in the nested formulas & non-intuitive adding/subtracting of months/days! I can see a new formula (something like DateTimeEOMonth?) being structured as follows in Alteryx: DateTimeEOMonth([Field],increment)

Please consider! Our accounting department thanks you heartily in advance... 🙂

Cheers,

NJ
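For reference, the end-of-month arithmetic described above can be sketched in Python. This is only an illustration of the requested behaviour, assuming the same semantics as Excel's EOMONTH (last day of the month that is `months` months away from the start date); the name `eomonth` is hypothetical and is not an Alteryx function.

```python
import calendar
from datetime import date


def eomonth(start: date, months: int) -> date:
    """Return the last day of the month `months` months after `start`."""
    # Work with a running month index so negative offsets also roll over years.
    index = start.year * 12 + (start.month - 1) + months
    year, month_zero_based = divmod(index, 12)
    month = month_zero_based + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, last_day)


print(eomonth(date(2024, 1, 15), 2))   # end of March 2024
print(eomonth(date(2024, 1, 15), -1))  # end of December 2023
```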

This request is super simple! I love how Alteryx displays the row count and size of the data passing through each tool at run time. Can you set the default formatting for the row count indicators to be #,###? Without the commas, it's hard to easily check the row count once you get more than 6-9 digits.

In the example below, it would be so much more readable if it displayed as 75,640,320.

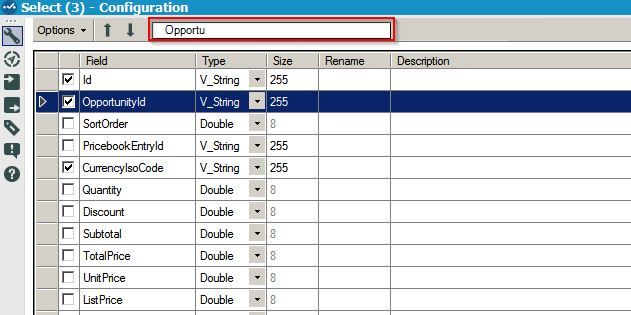
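The requested #,### formatting is ordinary thousands grouping. As a small sketch of the intended output (Python is used here purely for illustration, and `format_row_count` is a hypothetical name):

```python
def format_row_count(count: int) -> str:
    """Format a row count with comma thousands separators."""
    return f"{count:,}"


print(format_row_count(75640320))
```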

It would be very useful to be able to search the field by typing the name instead of scrolling up and down looking for it among a few hundred fields.

When I use the Comment Tool it's difficult to select the tools inside it, but when I use the Container Tool the Container Text doesn't support Font Sizes and doesn't support multiple lines of text, so I end up moving the Comment into the Container but still have problems selecting a group of tools.

So a combined Comment and Container Tool would be wonderful!

Bonus: If the Comment Tool could support Multiple Font Sizes.

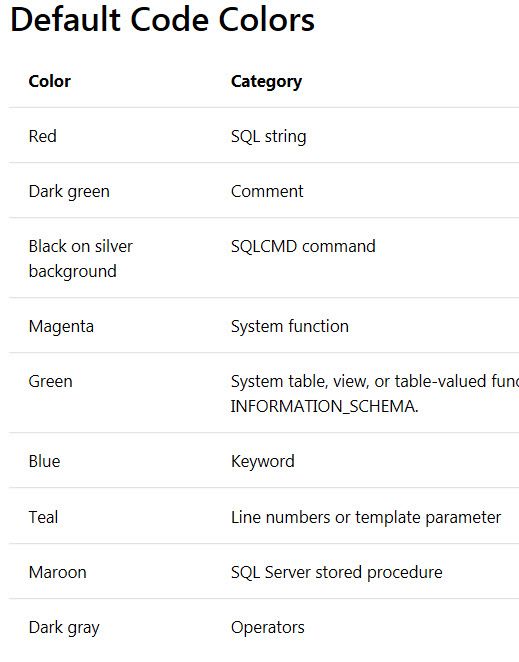

We need color coding in the SQL Editor window for input tools. We always have to pull our code out of there and copy it into a Teradata window so that it is easier to read and troubleshoot. This would save us some time and hassle and would improve the Alteryx user experience. (I think you've used a couple of my ideas already. This one is a good one too.)

It would be great if there was an option in the configuration of the Output Tool to create the output directory if it doesn't already exist. Maybe also to append instead of overwrite for all file types too?
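As an illustration of the requested behaviour, here is a minimal Python sketch that creates the missing output directory before writing and optionally appends instead of overwriting. It is not how the Output tool works; `write_output` and its parameters are hypothetical names.

```python
from pathlib import Path


def write_output(path: str, text: str, append: bool = False) -> None:
    """Write text to path, creating any missing parent directories first."""
    target = Path(path)
    # exist_ok=True makes this a no-op when the directory is already there.
    target.parent.mkdir(parents=True, exist_ok=True)
    mode = "a" if append else "w"
    with target.open(mode, encoding="utf-8") as handle:
        handle.write(text)
```

Calling `write_output("reports/2024/out.csv", "a,b\n")` would create `reports/2024` if needed, and a second call with `append=True` would add rows to the same file.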

We all love seeing this. And, it's fairly easy to fix, just go find the macro and insert a new copy. But, then you have to remember the configuration and hope that it was simple.

With the tool that's there, the XML still contains the configuration, all that's missing is the tool path. It would be great to be able to right click and repair the path from the context of the missing macro.

The DateTime tool is a great way to convert various string arrangements into a Date/Time field type. However, this tool has two simple, but annoying, shortcomings :

- Convert Multiple Fields: Each DateTime tool only lets you convert one field. Many Alteryx tools (MultiField, Auto Field, etc.) allow you to choose what field(s) are affected by the tool. If I have a database with a large number of string fields all with the same format (such as MM/DD/YYYY), I should be able to use one DateTime tool to convert them all!

- Overwrite Existing Field: The DateTime tool always creates a new field that contains your converted date/time. I ALWAYS have to delete the original string field that was converted and rename the newly created date/time field to match the original string field's name. A simple checkbox (like the "output imputed values as a separate field" checkbox in the Imputation tool) could give the flexibility of choosing to have a separate field (like how it is now) or overwrite the string field with the converted date/time field (keeping the name the same).
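Both requests can be sketched together in Python: one call converts several string fields that share a format, and a flag chooses between overwriting the original field and writing a separate one. This is an illustration of the requested behaviour only; `convert_date_fields`, the `overwrite` flag and the `_date` suffix are hypothetical.

```python
from datetime import datetime


def convert_date_fields(record, fields, fmt="%m/%d/%Y", overwrite=True):
    """Convert the named string fields of one record to date values."""
    converted = dict(record)  # leave the input record untouched
    for name in fields:
        parsed = datetime.strptime(record[name], fmt).date()
        # Either replace the string field in place or add a separate field.
        converted[name if overwrite else f"{name}_date"] = parsed
    return converted


row = {"start": "01/31/2024", "end": "02/29/2024", "id": "7"}
print(convert_date_fields(row, ["start", "end"]))
```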

Alteryx is overall an amazing data blending software. I recognize that both of these shortcomings can be worked around with combinations of other Alteryx tools (or LOTS of DateTime tools), but the simplicity of these missing features demonstrates to me that this data blending tool is not sufficiently developed. These enhancements can greatly improve the efficiency of date handling in Alteryx.

STAR this post if you dislike the inflexibility of the DateTime tool! Thank you!

It would be extremely helpful if Alteryx could add quick filters to the data browsing windows that would allow you to filter the contents of the window based on each column. Essentially just replicate what you can do with quick filters in Excel.

Without this, I'm often forced to copy my data out into Excel in order to do any detailed trouble-shooting, and often there's too much data to copy, which prevents me from quickly getting to what I need.

Of course you can create a whole separate filter object, but that's cumbersome and requires re-running the workflow.

I surprisingly couldn't find this anywhere else as I know it's been discussed in person on many occasions.

Basically the Formula tool needs to be smarter in many ways, but this particular post focuses on the Data Type component.

The Formula tool should not always default to V_String as the data type when data or a formula is entered; it should look at the data and estimate the most likely type.

I know there are times where the logical type might not be consistent in all fields, but the Data Preview and the Function of the formula should be used to determine the most likely option.

E.G. If I type a number or a date directly into the formula tool, then Alteryx should be smart enough to change the data type from the standard V_String to Int, Double or date.
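The kind of guess described above can be sketched in Python. This is a deliberately simple illustration, not a proposal for the actual rules: it only looks at a typed literal, assumes dates are written as YYYY-MM-DD, and uses the type labels from this post. The name `infer_field_type` is hypothetical.

```python
import re
from datetime import datetime

INT_PATTERN = re.compile(r"[+-]?\d+")
DOUBLE_PATTERN = re.compile(r"[+-]?(\d+\.\d*|\.\d+)")


def infer_field_type(literal: str) -> str:
    """Guess a field type for a typed literal, falling back to V_String."""
    text = literal.strip()
    if INT_PATTERN.fullmatch(text):
        return "Int"
    if DOUBLE_PATTERN.fullmatch(text):
        return "Double"
    try:
        # strptime rejects impossible dates such as 2023-02-30.
        datetime.strptime(text, "%Y-%m-%d")
        return "Date"
    except ValueError:
        return "V_String"


for value in ["42", "3.14", "2024-02-29", "hello"]:
    print(value, "->", infer_field_type(value))
```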

This is an extension to the ideas posted here:
