Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at least, allow the tool to use black & white images (I wanted to test it on the MNIST, but the tool tells me that it necessarily needs RGB images) ?

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool, it is certainly perfectible, but very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible thanks to image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))
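
For the second suggestion above (an equal number of images per label), here is a minimal sketch of what such a balancing step could do, written in plain Python. It is not how the Image Recognition tool works; the function name `balanced_sample` and the file names are hypothetical, and it simply downsamples every label to the size of the smallest one.

```python
import random
from collections import defaultdict

def balanced_sample(items, seed=0):
    """Return (path, label) pairs with the same number of items per label.

    Every label is downsampled to the size of the smallest label.
    """
    by_label = defaultdict(list)
    for path, label in items:
        by_label[label].append(path)

    smallest = min(len(paths) for paths in by_label.values())
    rng = random.Random(seed)  # fixed seed so the sample is repeatable

    balanced = []
    for label, paths in sorted(by_label.items()):
        for path in rng.sample(paths, smallest):
            balanced.append((path, label))
    return balanced

# Hypothetical input: three "cat" images and two "dog" images.
images = [
    ("a1.png", "cat"), ("a2.png", "cat"), ("a3.png", "cat"),
    ("b1.png", "dog"), ("b2.png", "dog"),
]
balanced = balanced_sample(images)
```

With this input, each label ends up with two images.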

Because Alteryx Designer uses basic authentication (not our standards, such as Kerberos and NTLM), it fails to pass our proxy authentication. Our network security support team decided to use the user agent to identify requests coming from Alteryx Designer and let them through authentication. We then realized that Alteryx Designer also does not set the user agent for HTTPS requests. We are requesting that Alteryx set the user agent value for the Download tool's HTTPS requests.
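
To illustrate what is being asked for, here is a minimal Python sketch of a request that carries an explicit User-Agent header, which is the value a proxy could match on. The user agent string `AlteryxDesigner` and the URL are placeholders chosen for this example, not values Alteryx actually sends. The request is only built here, not sent.

```python
import urllib.request

def build_request(url, user_agent="AlteryxDesigner"):
    # "AlteryxDesigner" is a hypothetical value; the real string would be
    # whatever the vendor and the proxy team agree on.
    return urllib.request.Request(url, headers={"User-Agent": user_agent})

req = build_request("https://example.com/data.csv")

# urllib stores header names capitalized as "User-agent".
print(req.get_header("User-agent"))
```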

Would be nice to select a bunch of consecutive fields, and cut them and paste them to a different area. Currently, the only options are to Move to Top or Move to Bottom. If you want to move somewhere in between, you have to scroll through the whole list.

Hello! I use Alteryx for lots of spatial data blending, and 99% of the time that works perfectly. However, when I try to analyze data in Puerto Rico or some other USA territories, Alteryx cannot read the data and I have to reproject it in another tool so it can. Can support for all common projections be added to Alteryx so the data can be read in natively? The GDAL Python code data file "gcs.csv" contains all of the projections I think would be needed.

I get a lot of requests to replicate the Excel table format in Alteryx output. When I use the Reporting Table tool, I have options to choose the border and set its color and size, but there is no dotted border option, nor any of the other border formats that Excel offers.

It would be great if Tool Container margins were adjusted so tools inside could snap to the grid perfectly. Right now they are just a pixel or so off and it creates slight crooks in connectors. (Minor I know, but it would go a long way to make canvases look clean)

Example:

When choosing "In List" values in a CYDB input, the normal Windows selection shortcuts do not work (Shift+click, Ctrl+A, Ctrl+click, etc.).

When having to choose, say, 20 values, it is a big annoyance to have to click each value (20 clicks).

Have been told this is a bug so I wanted to put it on your radar for a fix.

When a user adds a column in the Formula tool, show the data type directly underneath the column name, not below the expression. It's important for the user to set the data type and then build the expression. Otherwise, the user neglects to and then minutes or hours later sees strange behaviour as a result of having the default V_WString. Make the data type prominent because it is important and part of the metadata.

Hello,

Recently I used the optimization tool and it's awesome. However, there is no option for sensitivity analysis, and it would be great to see it in a future version. Thank you!

Kind regards,

Currently working through an assignment on the Udacity Nano-degree related to A/B testing (thank you for the great course content @PatrickN )

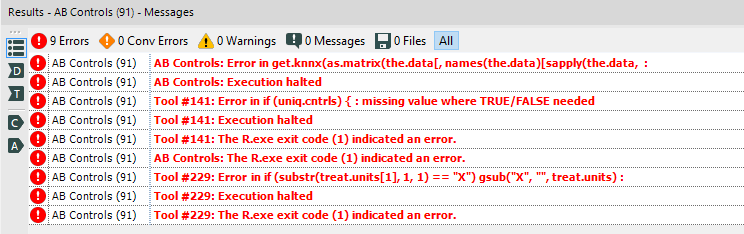

Unfortunately - when using the AB testing tools for the first time, I encountered several cryptic error messages.

This request is not to help diagnose this error message, but rather to wrap these error messages in a way that gives users some useful info so that they can solve this problem themselves.

As you can see from the error message below - the error provided does not give the user any hints on how to go about fixing the problem.

I've attached the workflow with embedded data, so this should be reproducible.

Hello Alteryx Team,

It is very important to be able to upload JSON strings to Snowflake. The reason is to build Data Vault 2.0 on the Snowflake side in order to have a scalable DWH. I tried many different ways to do this, but nothing works for me. Could you please implement this feature for the Output tool?

best regards

Jan

This is currently possible with xlsx and xlsm files but not xlsb files.

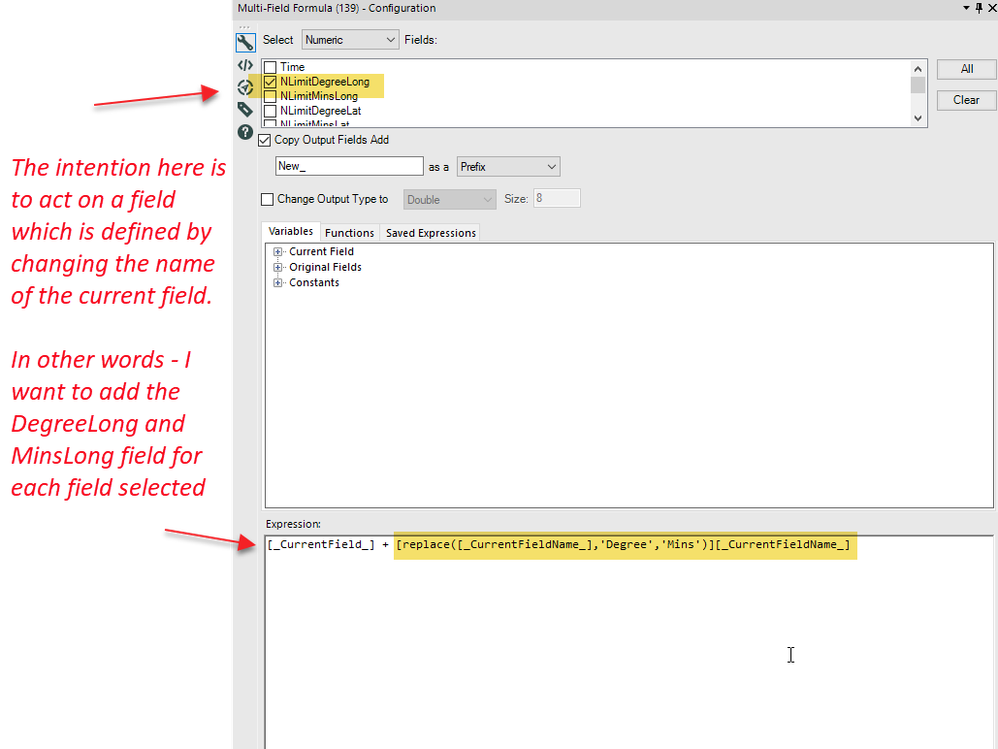

There's a common need to perform the same function on many fields, where you want to bring in data from a secondary field which is defined by the current column name.

So - for example:

Input:

- Prod1UnitWeight: 10

- Prod1Qty: 10

- Prod2UnitWeight: 15

- Prod2Qty: 1

Output

- Prod1TotalWeight: 100

- Prod2TotalWeight: 15

So it would be useful to be able to have an indirect function where you can create a string which contains the field you want to use; and then indirect to it.

For example:

- Multi-field formula on Prod1Qty and Prod2Qty

- CreateNewField Prod1TotalWeight

- [_CurrentField_] * indirect(replace([_CurrentFieldName_],"Qty","UnitWeight"))

- which would resolve to Prod1Qty * indirect("Prod1UnitWeight")
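
The behaviour requested above can be sketched in plain Python, where a dictionary lookup by a computed name plays the role of the proposed `indirect` function. This is only an illustration of the idea, not Alteryx formula syntax; `total_weights` is a hypothetical helper name.

```python
def total_weights(record):
    """For every field ending in "Qty", multiply it by the matching
    "UnitWeight" field, found by building the field name as a string."""
    totals = {}
    for name, qty in record.items():
        if not name.endswith("Qty"):
            continue
        # Equivalent of replace([_CurrentFieldName_], "Qty", "UnitWeight")
        weight_field = name.replace("Qty", "UnitWeight")
        prefix = name[: -len("Qty")]
        # record[weight_field] is the "indirect" lookup by name
        totals[prefix + "TotalWeight"] = qty * record[weight_field]
    return totals

row = {"Prod1UnitWeight": 10, "Prod1Qty": 10, "Prod2UnitWeight": 15, "Prod2Qty": 1}
totals = total_weights(row)
print(totals)  # {'Prod1TotalWeight': 100, 'Prod2TotalWeight': 15}
```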

Given that Redshift prefers many small files for bulk loading, it would be good to have a max record limit within the S3 Upload tool (similar to the functionality in S3 Download).

Another useful feature for the S3 Upload tool would be the ability to append a suffix to file names based on a date-time stamp (datetimestamp_001, _002, _003, etc.), similar to the current Output tool.
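
As a rough sketch of the two requests above, the following Python splits a list of records into parts of at most `max_records` rows and gives each part a numbered file name. The function name, the `csv` extension, and the timestamp format are assumptions made for this example; no upload is performed.

```python
def chunk_with_names(records, max_records, base_name, ext="csv"):
    """Split records into parts of at most max_records rows and pair
    each part with a name like <base_name>_001.<ext>."""
    chunks = []
    for start in range(0, len(records), max_records):
        part_number = start // max_records + 1
        file_name = f"{base_name}_{part_number:03d}.{ext}"
        chunks.append((file_name, records[start:start + max_records]))
    return chunks

# Hypothetical date-time stamp used as the base file name.
chunks = chunk_with_names(list(range(5)), 2, "20240101T000000")
for file_name, rows in chunks:
    print(file_name, rows)
```

Each resulting part could then be uploaded as its own object.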

I think it would be great to add metadata to a yxdb. For example, I was backtracking and trying to figure out which module/app I used to create an old yxdb. Right now I use Notepad++ and do a "Find in Files" search. Wouldn't it be great if the module path were available when you look at the properties of a yxdb in Alteryx?

It would be nice to be able to use something like $Field rather than repeating the field name in the Condition and Loop expressions within the Generate Rows tool.

I have several .yxdb files that I’ve been appending to daily from a SQL Server table in order to extend the length of time that data is retained.

They’re massive tables, but I may only need one or two rows.

I had hoped to decrease the time it takes to get data from them by running a query on them (or a dynamic query/input) as opposed to using a filter or joining on an existing data set which would have equal values that would produce the same result as a filter.

Essentially, the input of .yxdb would have the option of inputting the full table or a SQL query just like a data connection.

As per this discussion, I'd like to create constants that stay with me as I create new workflows rather than creating a user constant across multiple workflows.

This could perhaps be done by editing an xml file in the bin.

Hi there,

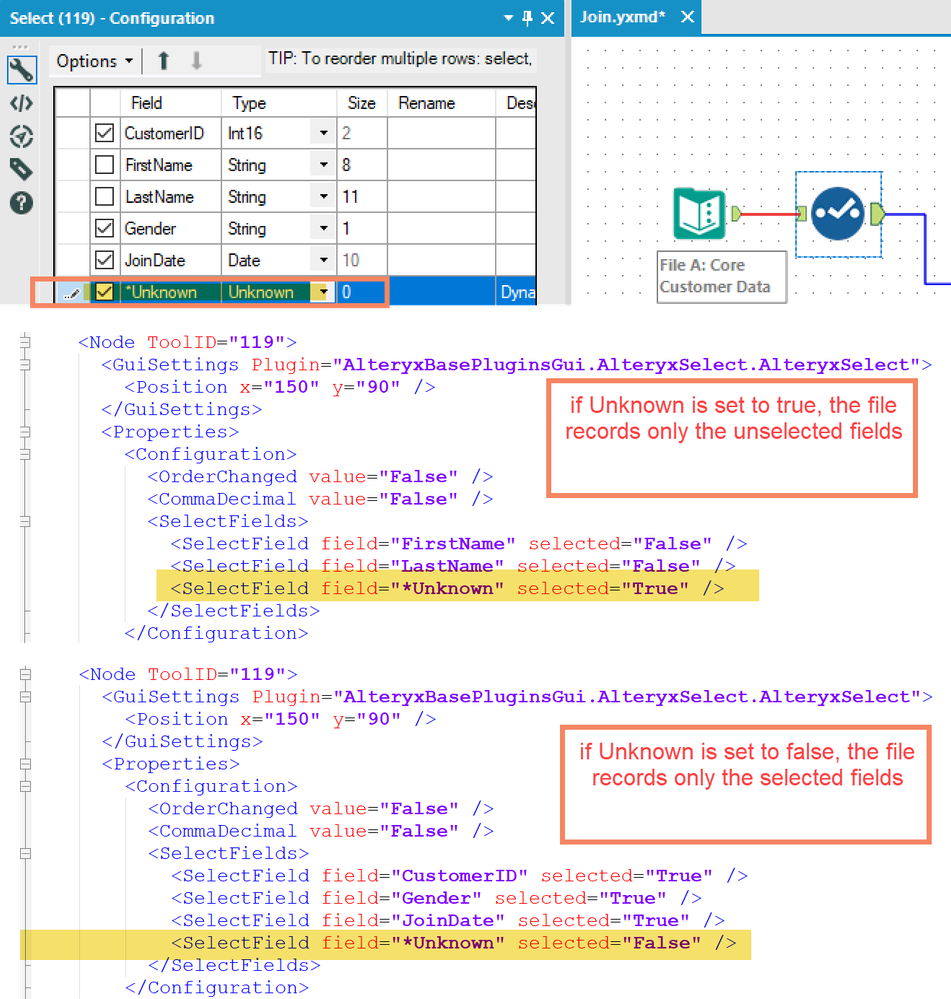

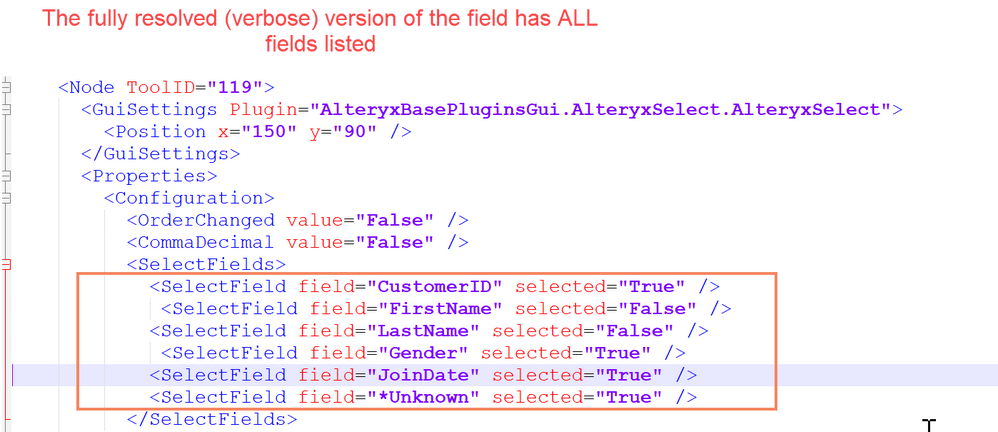

The select tool writes a different configuration to the underlying XML file for the Alteryx flow depending on whether the "Unknown" value is selected.

- if unknown is true:

- Only fields that are deselected (or renamed /modified) are written to the XML file. This is understandable since if no field is de-selected, and "Unknown" is true, then whatever goes through the tool is passed on

- if unknown is false:

- Only fields that are selected (or modified) are written to the XML file. Again, this is logically understandable.

However - we are hoping to scan our Alteryx jobs to spot where fields are not used and should be trimmed out earlier - and because of this behaviour of select-type tools (Select; join; etc), we cannot get a full view of all the fields known by the tool.

Can we please give the user an option to write ALL fields to the XML file irrespective of the "Unknown" flag? This will give the added benefit of enabling every tool to know its fields on a fresh reload without having to rerun.

Example:

Desired State:

cc: @Ned
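
To show the kind of scan described above, here is a minimal Python sketch that reads a Select tool configuration and reports which fields are listed and whether "Unknown" is selected. The XML layout (`SelectField` elements with `field` and `selected` attributes, and a `*Unknown` entry) is an assumption for this example and should be checked against a real workflow file; the field name is made up.

```python
import xml.etree.ElementTree as ET

# Assumed shape of a Select tool configuration; verify against a real .yxmd.
SAMPLE = """
<Configuration>
  <SelectFields>
    <SelectField field="CustomerID" selected="False" />
    <SelectField field="*Unknown" selected="True" />
  </SelectFields>
</Configuration>
"""

def listed_fields(config_xml):
    """Return ({field_name: selected}, unknown_selected) for one tool."""
    root = ET.fromstring(config_xml.strip())
    fields = {}
    unknown = None
    for element in root.iter("SelectField"):
        name = element.get("field")
        selected = element.get("selected") == "True"
        if name == "*Unknown":
            unknown = selected
        else:
            fields[name] = selected
    return fields, unknown

fields, unknown = listed_fields(SAMPLE)
print(fields, unknown)
```

With "Unknown" selected, only the deselected field appears in the result, which is exactly the gap this idea describes: the fields that pass through untouched are not visible to a scan like this.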

There should be a built-in option in the Email tool to send only one email instead of sending one email per record in the attached file.

Currently, this requires adding a Unique tool before the Email tool.
