Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
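To illustrate the second point, here is a rough Python sketch of the kind of balancing I mean. It is not how the Image Recognition tool works; the function name `balance_by_label` and the (item, label) pair layout are made up for the example, and it simply downsamples every label to the size of the rarest one.

```python
import random
from collections import defaultdict


def balance_by_label(samples, seed=0):
    """Downsample so every label keeps as many items as the rarest label.

    `samples` is an iterable of (item, label) pairs (a hypothetical layout).
    """
    by_label = defaultdict(list)
    for item, label in samples:
        by_label[label].append(item)
    if not by_label:
        return {}
    smallest = min(len(items) for items in by_label.values())
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    return {label: rng.sample(items, smallest) for label, items in by_label.items()}
```

Oversampling the rare labels instead of downsampling the common ones would be an equally valid choice; the point is only that each label ends up with the same number of images.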

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help more people understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


My team uses a shared macro repository (say F:\AlteryxMacros), and we recently ran into an issue with the default save location for macros. While we save most macros to our repository, there are times when folks save their macros elsewhere (let's say C:\MyAwesomeWorkflow). The issue we've encountered is that if you go to File >> Save As with a macro, it will ALWAYS default to the macro repository, even when the macro is currently saved elsewhere (C:\MyAwesomeWorkflow). Speaking for a friend, people have accidentally saved things to the macro repository. Or, they waste time navigating from the macro repository to their current folder.

If a macro is already saved somewhere, please change File >> Save As to default to the macro's current folder. Thanks!

- Feature Request

- General

Hi,

With multiple Workflows open, I'd like to be able to grab one of the Workflow tabs and drag it out on to the desktop. This act would then cause a new Alteryx Window to open up with the Workflow that was pulled out. Just like when you have multiple tabs open in I.E. and you drag a tab out and drop it on the desktop - you end up getting another I.E. opened up and the tab you dragged out is in the newly opened I.E.

This would be handy because I'm often wanting to copy/paste tools, formulas, etc. and it would be nice to do that w/o flipping from one tab to another.

I know I can right-click and open another Alteryx but when opening several - they all open in the same one.

Thanks,

Brad

- Feature Request

- General

I like the new cache option in 2018.3, but I would like a user setting added that would allow me to 1) write the cache files to a local drive and 2) have them persist when I re-open Alteryx. Currently, the files are written to the user's default temp space and don't persist when Alteryx is closed down. Thanks!

- Feature Request

Can we have an option to save a workflow in a prior version for backward compatibility? I think Tableau offers this functionality.

Example:

If I have 2019.4.8 and a colleague has 2019.1.x, I cannot share my workflows because my colleague will receive a notice that the workflow was built in a newer version. I want to be able to save my workflow in 2019.1.x and send to my colleague.

This is predicated on the workflow not containing any tools/features not present in the older version. In that case, give me a warning about the specific tools/features that are not backward compatible. Thank you.

- Engine

- Feature Request

- General

- User Experience Design

The email tool, such a great tool! And such a minefield. Both of the problems below could and maybe should be remedied on the SMTP side, but that's applying a pretty broad brush for a budding Alteryx community at a big company. Read on!

"NOOOOOOOOOOOOOOOOOOO!"

What I said the first time I ran the email tool without testing it first.

1. Can I get a thumbs up if you ever connected a datasource directly to an email tool thinking "this is how I attach my data to the email" and instead sent hundreds... or millions of emails? Oops. Alteryx, what if you put an expected limit as is done with the append tool. "Warn or Error if sending more than "n" emails." (super cool if it could detect more than "n" emails to the same address, but not holding my breath).

2. Make spoofing harder. It's super useful, but... well, my company frowns on this kind of thing.
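For point 1, the check I have in mind is roughly the following. This is only a Python sketch of the idea, not anything the Email tool does today; `check_email_batch`, `max_total` and `max_per_address` are names invented for the example.

```python
from collections import Counter


def check_email_batch(recipients, max_total, max_per_address=None):
    """Raise ValueError if a batch is larger than the sender expected."""
    if len(recipients) > max_total:
        raise ValueError(
            f"{len(recipients)} emails queued, expected at most {max_total}"
        )
    if max_per_address is not None:
        # Normalise addresses so "A@x.com " and "a@x.com" count as the same one.
        counts = Counter(address.strip().lower() for address in recipients)
        for address, count in counts.items():
            if count > max_per_address:
                raise ValueError(
                    f"{count} emails queued for {address}, "
                    f"expected at most {max_per_address}"
                )
```

Running a check like this before anything is sent would turn the "millions of emails" accident into an error message instead.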

- Category Reporting

- Desktop Experience

- Feature Request

- Tool Improvement

While Challenge 41 was a fun way to calculate the weekdays between two dates, there should be a formula similar to NETWORKDAYS in Excel that does the same thing.
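For anyone unfamiliar with it, the behaviour being asked for can be sketched in a few lines of Python. This is an illustration, not an existing Alteryx function; `network_days` is a made-up name, and it assumes an inclusive count of Monday to Friday with an optional set of holidays.

```python
from datetime import date, timedelta


def network_days(start, end, holidays=frozenset()):
    """Count weekdays (Mon-Fri) from start to end inclusive, skipping holidays."""
    if start > end:
        # Reversed ranges give a negative count.
        return -network_days(end, start, holidays)
    count = 0
    current = start
    while current <= end:
        if current.weekday() < 5 and current not in holidays:
            count += 1
        current += timedelta(days=1)
    return count
```

For example, `network_days(date(2024, 1, 1), date(2024, 1, 7))` covers one Monday-to-Sunday week and counts five working days.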

- Feature Request

Hello Dev Gurus -

The message tool is nice, but anything you want to learn about what is happening is problematic because the messages you are writing to try to understand your workflow are lost in a sea of other messages. This is especially problematic when you are trying to understand what is happening within a macro and you enable 'show all macro messages' in the runtime options.

That being said, what would really help is for messages created with the message tool to be tagged as user-created messages. Then, at message evaluation time, you get all errors / all conversion warnings / all warnings / all user-defined messages. In this way, when you write an iterative macro and are giving yourself the state of the data on a run-by-run basis, you can just go to a panel that shows you only your messages, and not the entire syslog, which is like drinking out of a fire hose.

Thank you for attending my TED Talk regarding Message Tool Improvements.

- API SDK

- Category Developer

- Feature Request

- Tool Improvement

Hello,

Well, the title is pretty simple: the tendency right now seems to be to have a web version of any software on a server.

A few notes about that:

- A lot of Alteryx competitors are already in this mode, and it's hard to sell a product that is still desktop-only for design, even if the product is far better.

- A good approach is the one used by Qlik with Qlik Sense: they still have a desktop and a web version of Sense, but the desktop works mainly as a hidden browser plus an engine. The web version is cool too because you can make your own application, your own data connection, etc.

-the main interest of a web implementation of Alteryx would be to reduce installation on client computers (and that means packaging the installer, managing the data connection, the paths, the access to macros... etc) and to have a better control of the users.

PS : this idea is soooo simple and so obvious I'm surprised I didn't find it. It may be a duplicate.

- Feature Request

- General

We need an option to schedule a continuous bi-weekly payroll workflow. It runs every two weeks, starting on a certain day of the week. Some months have three runs and others have two. If we had an option to select the starting day in a particular month and then select a bi-weekly (or every 2 weeks) option from that date, it would be perfect. Thanks
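To show why a plain "day of month" schedule can't cover this, here is a small Python sketch of the cadence. It is only an illustration; `biweekly_dates` is a made-up name and the start date of Friday, 5 January 2024 is an arbitrary example.

```python
from datetime import date, timedelta
from itertools import islice


def biweekly_dates(start):
    """Yield the start date and then every 14th day after it, indefinitely."""
    current = start
    while True:
        yield current
        current += timedelta(weeks=2)


# First seven runs for a schedule that starts on Friday, 5 January 2024.
runs = list(islice(biweekly_dates(date(2024, 1, 5)), 7))
```

With that start date, January and February 2024 each get two runs while March gets three, which is exactly the pattern a monthly scheduler can't express.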

- Feature Request

Hi All,

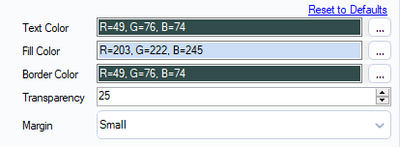

I always use a colour standard within my workflows.

I suggest two small features:

(a) RGB Colour Picker from the screen

(b) Copy and Paste function -> so I can paste the whole path (R=203, G=222, B=245) in the specific field.
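For (b), the paste handling could be as simple as the Python sketch below. It assumes the pasted text looks exactly like the example above; `parse_rgb` and `to_hex` are hypothetical names, not part of Designer.

```python
import re

# Matches text such as "R=203, G=222, B=245".
_RGB_PATTERN = re.compile(r"\s*R=(\d{1,3}),\s*G=(\d{1,3}),\s*B=(\d{1,3})\s*")


def parse_rgb(text):
    """Turn 'R=203, G=222, B=245' into the tuple (203, 222, 245)."""
    match = _RGB_PATTERN.fullmatch(text)
    if match is None:
        raise ValueError(f"not an R=..., G=..., B=... string: {text!r}")
    red, green, blue = (int(part) for part in match.groups())
    if max(red, green, blue) > 255:
        raise ValueError("each channel must be between 0 and 255")
    return red, green, blue


def to_hex(rgb):
    """Format an (R, G, B) tuple as a hex colour such as '#CBDEF5'."""
    return "#{:02X}{:02X}{:02X}".format(*rgb)
```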

Thanks!

- Feature Request

I just downloaded Alteryx Designer 2019.2 yesterday and got busy straight away. While I like the general look and feel of the tool and its design language, I couldn't help but notice that configuring the tools I work with now requires a lot of scrolling.

Could we add the ability to set the zoom level of the configuration pane, like we can in the workflow window, or have some form of control over how the configuration pane sizes its contents?

I have attached the config panes using the crosstab tool as an example with 2018.4 on the left and the new 2019.2 on the right. I took care to snapshot both versions the same dimension for a more apples to apples comparison.

- Feature Request

- General

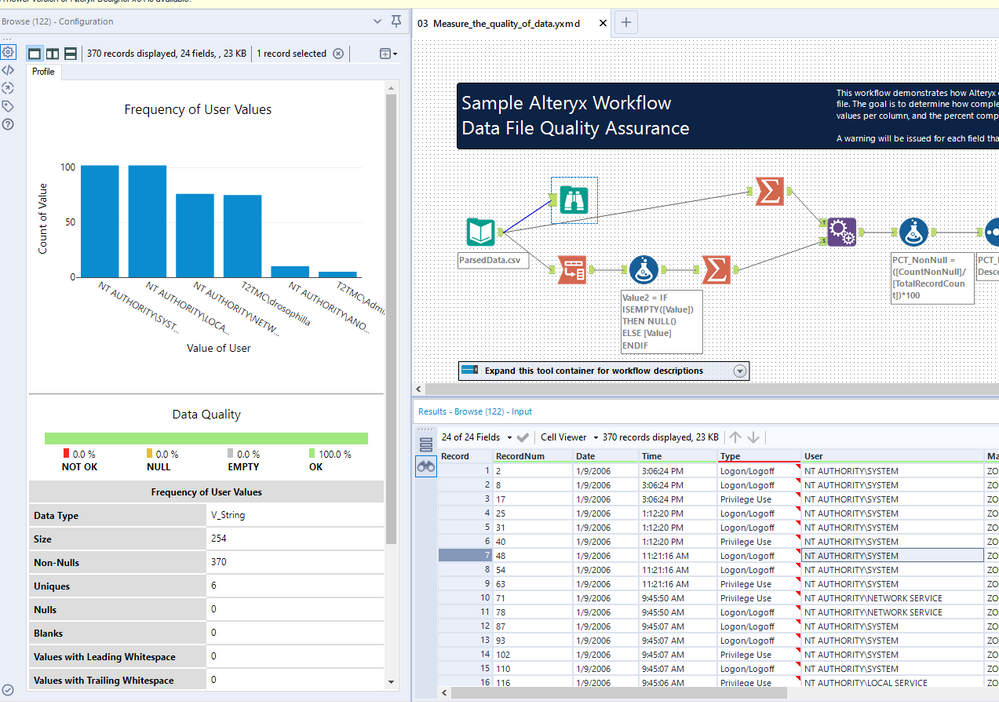

Now that 2019.2 is officially released I'll raise this here as I know it was raised as part of the beta testing. With the new interactive browse tool when filtering results the record numbering restarts.

For example in this window from a weekly challenge, I originally have this:

Then when I filter on the Allocated column for records where the Allocated amount is 0, I get this:

As you can see, the Record column on the left-hand side is numbered 1 - 15, so when trying to locate one of these lines to check that the formula is working as expected, it is difficult to isolate. Whereas if I knew that filtered record 10 was actually record 394 in the data, I could scroll to that point.

I know a solution to this would be to add a record ID field to the data, but this is not always needed.
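In other words, the filter should carry the original record number along rather than renumbering. A tiny Python sketch of that behaviour (just an illustration; `filter_keep_record_numbers` is a made-up name and the rows are plain dictionaries):

```python
def filter_keep_record_numbers(rows, predicate):
    """Return (original_record_number, row) pairs for the rows that match."""
    return [
        (number, row)
        for number, row in enumerate(rows, start=1)  # number before filtering
        if predicate(row)
    ]


rows = [{"Allocated": 5}, {"Allocated": 0}, {"Allocated": 3}, {"Allocated": 0}]
zero_allocated = filter_keep_record_numbers(rows, lambda row: row["Allocated"] == 0)
```

Here the two matching rows keep their original positions, 2 and 4, instead of being shown as 1 and 2.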

- Feature Request

- General

The new Paste Before/After feature is awesome, as is the Cut & Connect Around.

https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Paste-Before-After/idc-p/510292#M12071

What would be even better is to allow the combination of the two. For example, it is not currently possible to copy or cut multiple tools and paste them before/after, as this functionality only works for a single copied tool.

Thanks,

Joe

- Feature Request

- General

- Tool Improvement

- User Experience Design

I see many posts where users want to view numeric or string data as monetary values. I think that it would be friendly to have a masking option (like Excel) where you could choose a format or customize one for display. The next step is to apply the formatting to the workflow so that folks who want to export the data can do so.

cheers,

mark

- Feature Request

- Localization

- Tool Improvement

- User Experience Design

So far, Alteryx products are offered in 6 different languages, which is a great thing indeed!

However, there is no toggle option to effortlessly switch the interface to a different language.

As a standard feature, users should be allowed to switch languages without re-installing the product (applicable to all Alteryx products).

- Feature Request

- General

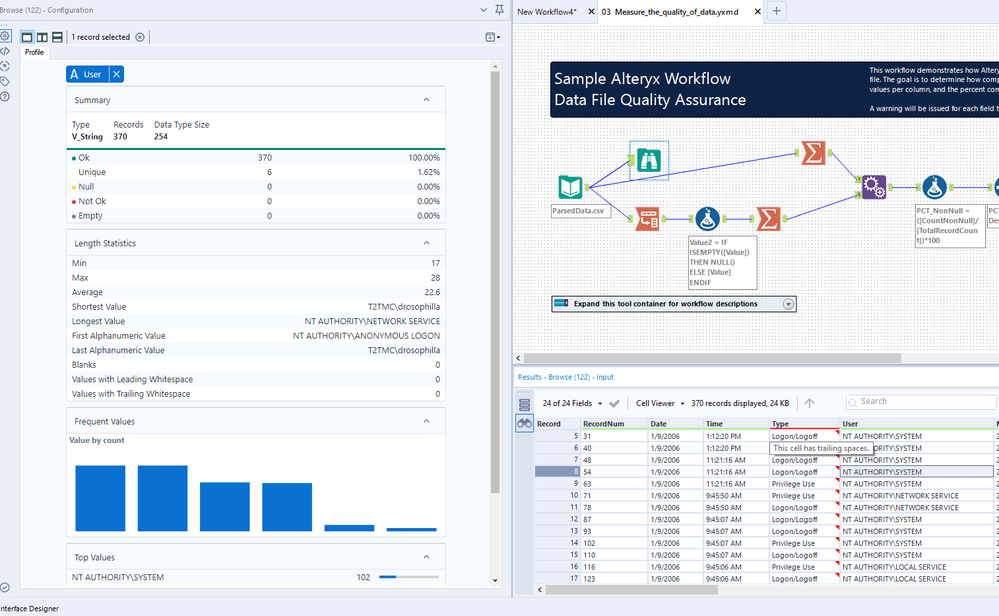

I'm digging the new holistic data view in the browse; however, there is one aspect of the old view that I miss: I liked the list of top values to be available without scrolling. Here is the current view of the new browse:

What are my top values? I either need to hover over the blue bars, or scroll down to see the list at the bottom. I would like the top values list moved to the top. For reference, here is what the old view looked like:

My top values are available right there at the top.

- Feature Request

- User Experience Design

Working across a large organisation inevitably leads to people using different drive letters when mapping drives/folders. This makes sharing workflows and macros with other teams more difficult and the first thing I do when creating a new workflow is change the dependencies to All UNC.

This suggestion is to offer the option to default all workflows to UNC via the user settings. Acknowledging that some users will prefer listing files by drive letter and others by UNC, adding the option could make life a little bit easier for everyone.
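What the setting would do to each dependency is roughly the following. This is a Python sketch under assumptions: `to_unc` is a made-up name, and the drive map (with its `\\fileserver\teamshare` server and share) is a hypothetical stand-in for whatever the machine's mapped drives actually are.

```python
def to_unc(path, drive_map):
    """Replace a mapped drive letter with its UNC root, if one is known.

    `drive_map` maps drive letters such as "F:" to UNC roots (hypothetical values).
    Paths without a known drive letter are returned unchanged.
    """
    drive, separator, rest = path.partition(":")
    if separator and len(drive) == 1:
        unc_root = drive_map.get(drive.upper() + ":")
        if unc_root:
            return unc_root.rstrip("\\") + rest
    return path


drive_map = {"F:": r"\\fileserver\teamshare"}
converted = to_unc(r"F:\AlteryxMacros\clean.yxmc", drive_map)
```

Paths on unmapped drives, and paths that are already UNC, are left as they are.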

- Feature Request

- User Experience Design

I'd consider myself a power user of most of the tools I use. No matter what program it is, I try to learn most of the useful shortcuts and code them into my mouse or keypad.

It's probably pretty uncommon for someone to use a mouse with 12 extra keys or a keypad, but I think many people would be happy to have the option to define shortcuts for everything. I'm not asking for default shortcuts for everything, but a menu like the one Microsoft Word has would be great.

For reference:

Microsoft Word has a menu where nearly every possible action is listed, and you are able to define/assign shortcuts (one or more) for every available action.

(Sorry it's German. Path: File > Options > Customize Ribbon > Customize)

- Feature Request

- General

- User Experience Design

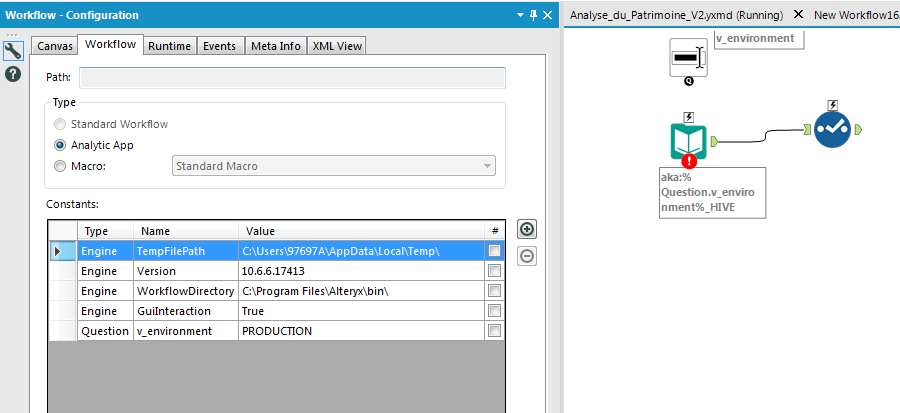

Hello,

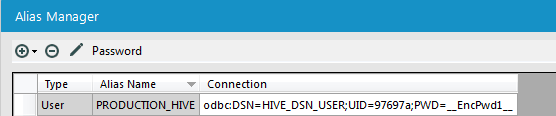

We have several environments in our organization: dev, recept, production.

To make switching between them safe, we intend to create several connections (standard aliases) like

PRODUCTION_HIVE

DEV_HIVE

RECEPT_HIVE

In our workflows, we want to use aka:%Question.v_environment%HIVE

Sadly, this solution does not work, despite the default value.

- Engine

- Feature Request

In an enterprise environment, or a reasonably sized BI team - you want a degree of consistency on how workflows look and feel. This increases maintainability; portability; and also increases the safety (because like well structured source-code - it's easier to read, so it's easier to peer-review)

Looking at all the samples provided by Alteryx, they all have a similar template, which makes them very easy to use.

Could we add the capability for larger BI teams to create a default canvas template (or a set of templates) which enforce the company / team's style-guide; layout; and required look-and-feel?

Thank you

Sean

- Feature Request

- General
