Alteryx Designer Desktop Ideas

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by listing the dimensional constraints next to each of the pre-trained models,

> by adding a real tool to split the training data correctly (in order to have an equivalent number of images for each of the labels),

> by at least allowing the tool to use black-and-white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool, it is certainly perfectible, but very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible thanks to image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

Just as there is a search bar in the Select tool, there should be one in the Data Cleansing tool as well.

- Category Transform

- Desktop Experience

- Enhancement

Hi, currently, if you use the Cross Tab tool and the names of the new fields contain special characters, those characters are replaced with underscores ("_") in the new headers, which then have to be corrected in some way. It would be great if this were all handled in the tool; in other words, the new headers would keep the special characters as desired.

- Category Transform

- Enhancement

Is it possible to add a search feature to the Summarize Tool that is similar to the search feature in the Select Tool? Selecting specific fields to summarize in small datasets is fine, but if I am dealing with a table that has 200 fields, searching for a specific field can be cumbersome. Typing in a few key letters to filter the available fields would be helpful.

- Category Transform

- Desktop Experience

Looking for a tool to replicate the Goal Seek functionality built into Excel.

It seems this could be solved by using R or iterative macros; however, a dedicated tool would make life much easier.
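For illustration, Goal Seek amounts to one-dimensional root finding. The following is a minimal sketch in Python using bisection, assuming the formula is continuous and the target value lies between the results at the two starting guesses. The `goal_seek` name and the example formula are hypothetical and are not part of any Alteryx tool.

```python
from typing import Callable


def goal_seek(f: Callable[[float], float], target: float,
              lo: float, hi: float, tol: float = 1e-9,
              max_iter: int = 200) -> float:
    """Find x in [lo, hi] such that f(x) is approximately `target` (bisection)."""
    g_lo = f(lo) - target
    g_hi = f(hi) - target
    if g_lo == 0:
        return lo
    if g_hi == 0:
        return hi
    if g_lo * g_hi > 0:
        raise ValueError("target is not bracketed by lo and hi")
    for _ in range(max_iter):
        mid = (lo + hi) / 2
        g_mid = f(mid) - target
        if abs(g_mid) < tol or (hi - lo) / 2 < tol:
            return mid
        # Keep the half-interval whose endpoints still bracket the target.
        if g_lo * g_mid < 0:
            hi = mid
        else:
            lo, g_lo = mid, g_mid
    return (lo + hi) / 2


# Example: which x in [0, 10] makes x**2 + x equal to 6?
x = goal_seek(lambda v: v * v + v, target=6.0, lo=0.0, hi=10.0)
print(round(x, 6))
```

The same loop is what an iterative macro would have to reproduce: evaluate, compare with the target, narrow the interval, repeat.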

- Category Transform

- New Request

We had a workflow where we needed to count business days. The standard solution of generating a row for each day between the dates wouldn't work, as it would slow down the workflow too much.

Something that takes 5 seconds in Excel turned into a tremendous pain.

It would be really nice to have a built-in tool where you can specify:

- the start date and end date (or the fields they are tied to);

- which days of the week are considered business days and which are not;

- which holidays should be excluded, with the ability to add custom holidays.
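As a rough sketch of how such a count can avoid generating one row per day, the Python below counts whole weeks arithmetically and only inspects the leftover days and the holiday list. The `business_days` function, its parameters, and the inclusive date range are assumptions made for illustration, not an existing Alteryx feature.

```python
from datetime import date
from typing import AbstractSet, Iterable


def business_days(start: date, end: date,
                  workdays: AbstractSet[int] = frozenset({0, 1, 2, 3, 4}),
                  holidays: Iterable[date] = ()) -> int:
    """Count business days in [start, end] inclusive, without enumerating every day.

    workdays uses date.weekday() numbering: Monday=0 ... Sunday=6.
    """
    if end < start:
        return 0
    total_days = (end - start).days + 1
    full_weeks, remainder = divmod(total_days, 7)
    count = full_weeks * len(workdays)
    # Only the leftover (fewer than 7) days need to be checked individually.
    first_weekday = start.weekday()
    for offset in range(remainder):
        if (first_weekday + offset) % 7 in workdays:
            count += 1
    # Remove holidays that fall in range on an otherwise working day.
    for h in set(holidays):
        if start <= h <= end and h.weekday() in workdays:
            count -= 1
    return count


# January 2024 starts on a Monday and has 23 weekdays.
print(business_days(date(2024, 1, 1), date(2024, 1, 31)))
print(business_days(date(2024, 1, 1), date(2024, 1, 31),
                    holidays=[date(2024, 1, 1)]))
```

The cost per row depends on the number of holidays rather than on the length of the date range, which is the property the row-generation approach lacks.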

- Category Transform

- New Request

It would be nice to be able to concatenate numeric values (integers, doubles, etc.) directly in the Summarize Tool.

I know this would involve converting it to a string on the backend, but I don't believe there would be any data loss when going from numeric to string. I know this can be done by using other tools such as Select or Formula to convert to string before the Summarize, but I don't see any reason why this couldn't be accomplished in a single tool.

Thanks,

Paul

- Category Transform

- Desktop Experience

Why doesn't Alteryx have an easier way (drag, drop, click, and run) to calculate moving averages with a specified lookback? There are so many things that one has to adjust before calculating moving averages for a simple numeric column.

I understand that there is a CReW macro called "Moving Summarize" that does this, but it is limited to a lookback period of 100. If you have data with millions of rows and need a lookback in the thousands, there is no easy solution.

Does anyone know whether this feature is in the making? Moving averages are bread and butter for analysts like me. I am urging the Alteryx developers to build this tool ASAP; it would bring a lot of comfort to my troubled soul.

Maybe I am missing something here; please enlighten me!

Thank you!
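To make the request concrete, here is a small Python sketch of a trailing moving average with an arbitrary lookback. It keeps a running sum, so the work per row does not grow with the lookback. The `moving_average` function is hypothetical and only illustrates the calculation; it says nothing about how a future Alteryx tool would be built.

```python
from typing import List, Optional, Sequence


def moving_average(values: Sequence[float], lookback: int) -> List[Optional[float]]:
    """Trailing moving average over the last `lookback` rows.

    Rows without a full window yet yield None. Runs in O(n) for any lookback
    by updating a running sum instead of re-summing each window.
    """
    if lookback < 1:
        raise ValueError("lookback must be at least 1")
    result: List[Optional[float]] = []
    running = 0.0
    for i, v in enumerate(values):
        running += v
        if i >= lookback:
            running -= values[i - lookback]  # drop the row that left the window
        result.append(running / lookback if i >= lookback - 1 else None)
    return result


print(moving_average([1, 2, 3, 4, 5], lookback=3))
```

With floating-point inputs over millions of rows, a running sum can accumulate rounding error, so a production version might periodically recompute the window sum.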

- Category Transform

- Desktop Experience

For the Summarize tool, allow the data type of each output field to be defined.

- Category Transform

- Desktop Experience

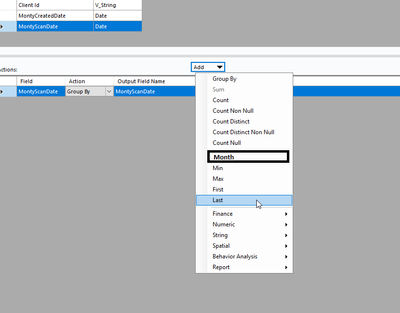

Hi,

I spend too much time creating functions and supporting fields to help me aggregate based on dates. I think that it would be very useful if the Summarize (US spellings....!!!) tool could be extended to include built-in date aggregations, such as using a Date field to group by year, month, or date.

I think that this would be a very easy update to the existing functionality, and it would be time well spent to provide all the usual date/time aggregations pre-made for users.

Kind regards,

Peter
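As an illustration of the kind of date aggregation being requested, the Python sketch below groups rows by calendar month and sums a value. The `sum_by_month` function and the sample rows are hypothetical; in Designer today this typically needs a helper field built with a formula before the Summarize tool.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, Iterable, Tuple


def sum_by_month(rows: Iterable[Tuple[date, float]]) -> Dict[str, float]:
    """Group (date, amount) rows by calendar month and sum the amounts."""
    totals: Dict[str, float] = defaultdict(float)
    for day, amount in rows:
        totals[day.strftime("%Y-%m")] += amount  # month key, e.g. "2023-01"
    return dict(sorted(totals.items()))


# Made-up sample data for the sketch.
rows = [
    (date(2023, 1, 5), 100.0),
    (date(2023, 1, 20), 50.0),
    (date(2023, 2, 3), 25.0),
]
monthly = sum_by_month(rows)
print(monthly)
```

Swapping the format string for `"%Y"` or `"%Y-%m-%d"` gives the year and date groupings mentioned in the post.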

- Category Transform

- Desktop Experience

As in the title: it would be helpful to be able to define custom field names when using the Transpose tool, instead of the default "Name" and "Value".

- Category Transform

- Desktop Experience

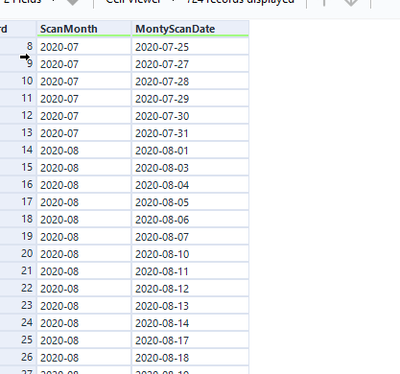

Hi

In all its simplicity, I would like to be able to group by month based on dates:

To achieve something like this:

- Category Transform

- Desktop Experience

A simple quality-of-life improvement that I would love is the ability to rename the output fields of the Transpose tool in its configuration, rather than only having 'Name' and 'Value'.

Would just let me drop the renaming of these fields afterwards :)

- Category Transform

- Desktop Experience

The Summarize tool returns NULL when performing a Mode operation. This doesn't seem to be documented anywhere in the Alteryx documentation or on the Community. Please fix this behaviour.

- AMP Engine

- Category Transform

- Desktop Experience

- Engine

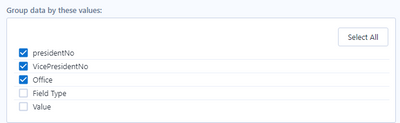

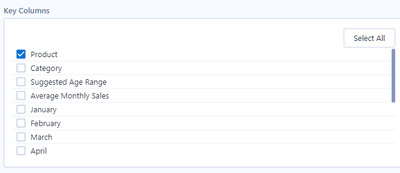

When using the Transpose and Cross Tab tools, I find that I frequently need to use a Select tool to reorder the columns selected in the "Key Columns" and "Group data by these values" sections of the respective tools. It would be helpful to give users the ability to reorder the fields displayed in these tools, similar to the functionality provided in the Select, Join, Append, Summarize tools, etc. Currently the tools default to outputting these columns in the order they arrive in the incoming data stream.

- Category Transform

- Desktop Experience

Lots of use cases involve concatenating values based on group-by clauses within the Summarize tool.

It would be great to have Concatenate Unique as an aggregation method, so that each value appears only once in the result.

An option to choose whether the values are sorted would be awesome as well.

- Category Transform

- Desktop Experience

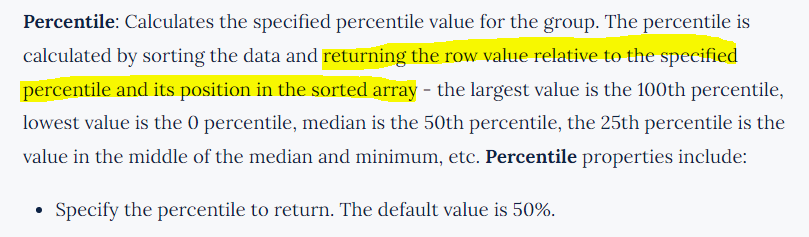

I tested the 10th percentile for {1,2,3,4,5,6,7,8,9}, for which Alteryx gives 1.8, while it should be 1.

According to the help, it should return the value of the target row, which should not produce a decimal in this case.
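One possible explanation, offered as an assumption rather than a statement about Alteryx internals: 1.8 is exactly what linear interpolation between ranks produces for this data, while a nearest-rank definition returns 1. The Python sketch below computes both; the function names are hypothetical.

```python
import math
from typing import Sequence


def percentile_nearest_rank(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: always returns one of the input values."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered)))  # 1-based rank
    return ordered[rank - 1]


def percentile_linear(values: Sequence[float], p: float) -> float:
    """Percentile with linear interpolation between the two closest ranks."""
    ordered = sorted(values)
    pos = p * (len(ordered) - 1)  # fractional 0-based position
    lower = math.floor(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (pos - lower) * (ordered[upper] - ordered[lower])


data = [1, 2, 3, 4, 5, 6, 7, 8, 9]
nearest = percentile_nearest_rank(data, 0.10)   # rank ceil(0.9) = 1, value 1
interpolated = percentile_linear(data, 0.10)    # position 0.8 between 1 and 2
print(nearest, interpolated)
```

If this is indeed the cause, the mismatch is between the documented behaviour (value of the target row) and an interpolating implementation.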

- Category Transform

- Desktop Experience

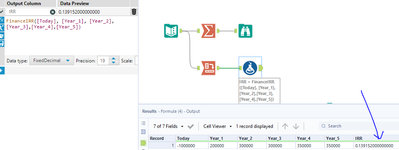

Currently, both the Formula and Summarize tools round to 6 decimal places (d.p.) for finance calculations such as IRR. People coming from Excel will be used to a higher precision than this. It would be great to raise the precision to 8 d.p. or more, in line with other platforms.

- Category Transform

- Desktop Experience

Hello --

Many times, I want to summarize data by grouping it, but to really reduce the number of rows, some data needs to be concatenated.

The problem is that some of the grouped data is repeated, so concatenating it will double or triple the values, or otherwise produce a very large concatenated field.

As an example:

| Name | State |
| --- | --- |
| A | New York |
| A | New York |
| A | New Jersey |
| B | Florida |
| B | Florida |
| B | Florida |

If we group by Name and concatenate State, the above would look like:

| Name | State |
| --- | --- |
| A | New York, New York, New Jersey |
| B | Florida, Florida, Florida |

What I propose is a new option called Concatenate Unique so I would get:

| Name | State |
| --- | --- |
| A | New York, New Jersey |
| B | Florida |

This would prevent us from having to use a Regex formula to make the column unique.

Thanks,

Seth
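The proposed behaviour can be sketched in a few lines of Python. This is only an illustration of the requested aggregation using the example rows above; the `concatenate_unique` function and its `sort_values` option are hypothetical names, not an existing Summarize option.

```python
from typing import Dict, Iterable, List, Tuple


def concatenate_unique(rows: Iterable[Tuple[str, str]],
                       separator: str = ", ",
                       sort_values: bool = False) -> Dict[str, str]:
    """Group rows by key and join each group's distinct values.

    Values keep their first-seen order unless sort_values is True.
    """
    groups: Dict[str, List[str]] = {}
    for key, value in rows:
        seen = groups.setdefault(key, [])
        if value not in seen:  # skip repeats within the group
            seen.append(value)
    return {
        key: separator.join(sorted(vals) if sort_values else vals)
        for key, vals in groups.items()
    }


rows = [
    ("A", "New York"), ("A", "New York"), ("A", "New Jersey"),
    ("B", "Florida"), ("B", "Florida"), ("B", "Florida"),
]
print(concatenate_unique(rows))
print(concatenate_unique(rows, sort_values=True))
```

The first call keeps first-seen order and the second sorts within each group, which covers both variants of the request.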

- Category Transform

- Desktop Experience

Issue

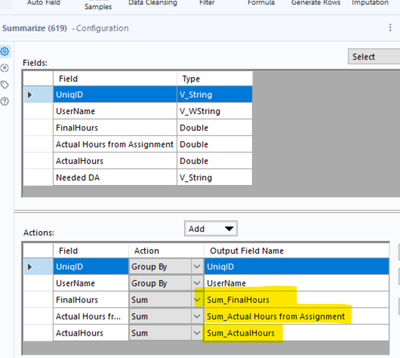

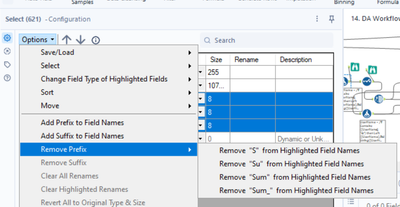

Whenever a Summarize tool is used, it renames the output field (e.g., sales becomes SUM_sales or AVG_sales).

Proposal

I think a reasonable compromise is not to rename fields in the Summarize Tool by default, but to include an option (in the tool, or in global settings) to allow for renaming.

Rationale

I have yet to come across a use case where automatic renaming of aggregated fields is desirable. What I have come across is the annoyance of renaming the fields back to what they were with a Dynamic Rename tool, and sometimes having to do this multiple times (e.g., converting SUM_SUM_SUM_sales back to sales). Additionally, automatic field renaming causes workflow errors when workflows are later modified by adding or removing a Summarize tool (e.g., if you later add a Summarize tool, all downstream steps will expect the "sales" field and not know to use the "Sum_sales" field).

Automatic Renaming feels very much like historic Excel with Pivot Tables field renaming and not reflective of modern code-based workflow best practices.

I appreciate you considering this improvement.
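For reference, the renaming workaround described above boils down to stripping leading aggregation prefixes from field names. A minimal Python sketch follows; the prefix list and the `strip_aggregation_prefixes` name are assumptions made for illustration and do not come from Alteryx.

```python
import re

# Hypothetical list of aggregation prefixes; extend it to match your workflow.
_PREFIX = re.compile(r"^(?:(?:sum|avg|min|max|count)_)+", re.IGNORECASE)


def strip_aggregation_prefixes(field_name: str) -> str:
    """Remove one or more leading aggregation prefixes from a field name."""
    return _PREFIX.sub("", field_name)


for name in ["SUM_SUM_SUM_sales", "Avg_sales", "sales"]:
    print(name, "->", strip_aggregation_prefixes(name))
```

A field that legitimately starts with one of these prefixes (for example `sum_total`) would also be shortened, which is one reason an opt-in setting in the tool itself would be safer than post-hoc renaming.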

- Category Transform

- Desktop Experience
