Alteryx Designer Desktop Ideas


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> by at least allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
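To illustrate the second point, here is a minimal sketch of what a balanced split could look like outside the tool. It is not how the Image Recognition tool works internally; the function name `balanced_split`, the `(path, label)` input shape, and the 80/20 default are all assumptions made for the example.

```python
import random
from collections import defaultdict


def balanced_split(samples, train_fraction=0.8, seed=0):
    """Split (path, label) pairs so every label contributes the same number of images.

    Hypothetical helper for illustration only.
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append(path)
    if not by_label:
        return [], []

    # Cap every label at the size of the rarest label so the classes stay balanced.
    per_label = min(len(paths) for paths in by_label.values())
    n_train = int(per_label * train_fraction)

    rng = random.Random(seed)
    train, test = [], []
    for label in sorted(by_label):
        paths = list(by_label[label])
        rng.shuffle(paths)
        chosen = paths[:per_label]
        train += [(p, label) for p in chosen[:n_train]]
        test += [(p, label) for p in chosen[n_train:]]
    return train, test
```

The trade-off in this sketch is that images from the larger classes are simply dropped; oversampling the smaller classes would be another option.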

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


Hey all,

At present, if you have an existing canvas and you want to move to a DCM Connection - you are asked something like "this will reset all of your connection details - are you sure". If you have complex queries; or pre+post SQL - then you first have to copy all of this out into Notepad before you can convert to DCM and then reconfigure it all again.

However, if you are not using DCM you can change data sources when you go into Workflow Dependencies without losing your queries etc.

Could we revisit the user experience of changing to or from a DCM connection to eliminate this "start from scratch" phenomenon - if you are converting from an existing SQL ODBC or ODB or SSVB connection to a SQL connection via DCM then it should allow you to make this conversion without losing your current configuration; and the same for any other database type.

cc: @mbarone

Labels: Category Connectors, Data Connectors

Request: Google Drive Output Tool to be able to set the maximum records per file and create multiple files

For the regular Alteryx Output Tool, we're able to set maximum records per file. This is helpful in a variety of ways - we use it as part of a workflow where the output gets uploaded into SalesForce and we can only load 5,000 records at a time. I also use this to split up large csv files to be under Excel's ~1M line limit so my teammates without Alteryx can open their reports and not lose data.

The Google Drive Output does not have this ability to split based on the number of records. If I use the RecordID Tool plus a Filter, it crashes Alteryx due to a Bug with RecordID + GDrive Output (it's currently in Accepted Defect stage)

It would be very helpful to have the same functionality that we have with the regular Output Tool.
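For anyone needing an interim workaround outside the Google Drive Output tool, the splitting behaviour described above can be sketched in plain Python. This is only an illustration of the "maximum records per file" idea, not the Output Tool's implementation; the function name `write_in_chunks`, the file naming pattern, and the 5,000 default are assumptions.

```python
import csv
from pathlib import Path


def write_in_chunks(rows, header, out_dir, base_name, max_records=5000):
    """Write rows to numbered CSV files with at most max_records data rows each.

    Hypothetical helper; returns the list of files written.
    """
    if max_records < 1:
        raise ValueError("max_records must be at least 1")
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)

    written = []
    handle = writer = None
    count = 0
    try:
        for row in rows:
            # Start a new file at the beginning and whenever the current one is full.
            if writer is None or count == max_records:
                if handle is not None:
                    handle.close()
                path = out_dir / f"{base_name}_{len(written) + 1}.csv"
                handle = path.open("w", newline="", encoding="utf-8")
                writer = csv.writer(handle)
                writer.writerow(header)  # repeat the header in every file
                written.append(path)
                count = 0
            writer.writerow(row)
            count += 1
    finally:
        if handle is not None:
            handle.close()
    return written
```

The resulting files would still need to be uploaded to Google Drive separately, which is why having this option in the tool itself would be preferable.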

Labels: Category Connectors, Data Connectors

Hi there,

When you connect to a DB using a connection string or an alias - this shows up in the Workflow Dependencies in a way that is very useful to allow you to identify impacts if a DB is moved or migrated.

However - in 2023.1, if you use DCM then the database dependencies just show up as .\ which makes dependency management much more difficult.

Please could you add the capability to view the DCM dependencies correctly in the dependency window?

BTW - this Workflow Dependency window would be a great place to build a simple process to move existing DB connections to a DCM connection!

CC: @wesley-siu @_PavelP

Labels: Category Connectors, Enhancement, New Request, Scheduler

When you start using DCM - you may have existing canvasses which use regular old connection strings which you want to migrate to DCM.

Currently (in 2023.1.1.123) - when you select "Use Data Connection Manager" - it shreds the configuration of your input tool, which makes it difficult to just convert these from an existing connection to a DCM connection.

The only way to make sure that you don't lose any configuration on the tool is to use the XML editing functionality of the tools and copy across your old configuration.

Could you please add the capability to keep my current tool configuration, but just change from using a regular old connection string to using DCM?

Many thanks

Sean

cc: @wesley-siu @_PavelP

Labels: Category Connectors, Enhancement, New Request, Scheduler

Hi there,

When connecting to data sources using DCM - could we please add the ability to make JDBC connections?

see:

https://community.alteryx.com/t5/Engine-Works/JDBC-Connections-in-Alteryx/ba-p/968782

As mentioned in these threads - JDBC is very common in large enterprises - and in many cases is better supported by the technology teams / developer community and so is much easier to make a connection. Added to this - there are many databases (e.g. DB2) where JDBC connections are just much easier

Please could you add JDBC connections to the DCM tooling?

Thank you

Sean

cc: @wesley-siu @_PavelP

Labels: Category Connectors, Enhancement, New Request, Scheduler

When creating a connection using DCM (example being ODBC for SQL) - the process requires an ODBC Data Source Name (see screenshot 1 below).

However, when you use the alias manager (another way to make database connections) - this does allow for DSN-free connections which are essential for large enterprises (see screenshot 2 below).

NOTE: the connection manager screens do have another option - Quick Connect - which seems to allow for DSN-free connections, but this is non-intuitive; and you're asked to type in the name of the driver yourself which seems to be an obvious failure point (especially since the list of all installed drivers can be read straight from the registry)

Please could we change DCM to use the same interfaces / concepts as the alias screens so that all DCM connections can easily be created without requiring an ODBC DSN; and so that DSN-free connections are the default mode of operation?
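For readers less familiar with the term, a DSN-free connection simply names the driver and server directly in the connection string instead of pointing at a pre-configured DSN. The sketch below builds such a string in Python; it is a generic ODBC illustration, not what DCM or the alias manager generates, and the function name and the server/database values are made up for the example.

```python
def dsn_free_connection_string(driver, server, database, trusted=True, **extra):
    """Build an ODBC connection string that names the driver instead of a DSN.

    Hypothetical helper for illustration; extra keyword arguments are appended
    as additional key=value pairs.
    """
    parts = {
        "Driver": "{" + driver + "}",  # driver name as installed on the machine
        "Server": server,
        "Database": database,
    }
    if trusted:
        # SQL Server keyword for Windows integrated authentication.
        parts["Trusted_Connection"] = "yes"
    parts.update(extra)
    return ";".join(f"{key}={value}" for key, value in parts.items()) + ";"


# Example with placeholder server and database names.
print(dsn_free_connection_string("ODBC Driver 17 for SQL Server", "myserver", "mydb"))
```

Because nothing here depends on a DSN being defined on each machine, the same string can be used on a desktop and on a server as long as the driver is installed on both.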

Screenshot 1: DCM connection:

screenshot 2

cc: @wesley-siu @_PavelP @ToddTarney

Labels: Category Connectors, Data Connectors

For companies that have migrated to OneDrive/Teams for data storage, employees need to be able to dynamically input and output data within their workflows in order to schedule a workflow on Alteryx Server and avoid building batch MACROs.

With many organizations migrating to OneDrive, a Dynamic Input/Output tool for OneDrive and SharePoint is needed.

- The existing Directory and Dynamic Input tools only work with UNC path and cannot be leveraged for OneDrive or SharePoint.

- The existing OneDrive and SharePoint tools do not have a dynamic input or output component to them.

- Users have to build workarounds and custom MACROS for a common problem/challenge.

- Users have to map the OneDrive folders to their machine (and server if published to the Gallery)

- This option generates a lot of maintenance, especially on Server, to free up space consumed by the local version when outputting the data.

The enhancement should have the following components:

OneDrive/SharePoint Directory Tool

- Ability to read one folder, with the option to include/exclude subfolders within OneDrive

- Ability to retrieve Creation Date

- Ability to retrieve Last Modified Date

- Ability to identify file type (e.g. .xlsx)

- Ability to read Author

- Ability to read last modified by

- Ability to generate the specific web path for the files

OneDrive/SharePoint Dynamic Input Tool

- Receive the input from the OneDrive/SharePoint Directory Tool and retrieve the data.

Dynamic OneDrive/SharePoint Output Tool

- Dynamically write the output from the workflow to a specific directory as individual files in the same location

- Dynamically write the output to multiple tabs on the same file within the directory.

- Dynamically write the output to a new folder within the directory

Labels: Category Connectors, Category Input Output, Data Connectors

I would like to raise the idea of creating a feature that resolves the repetitive authentication problem between Alteryx and Snowflake

This is the same issue that was raised in the community forum on 11/6/18: https://community.alteryx.com/t5/Alteryx-Designer-Desktop-Discussions/ODBC-Connection-with-ExternalB...

Can a feature be added to store the authentication during the session and eliminate the popup browser? The proposed solution eliminates the prompt for credentials; however, it does not eliminate the browser pop-ups. For the Input/Output function, this opens four new browser windows, one for each time Alteryx tests the connection.

Labels: Category Connectors, Data Connectors

As an Alteryx Designer user I would like the ability to write .hyper files to a subdirectory on Tableau Server to make my Tableau site easier to manage.

Labels: Category Connectors, Data Connectors

Hi Team,

With SharePoint Tool 2.3.0, we are unable to connect to SharePoint Lists with Service Principal authentication, as it requires the SharePoint application permissions Sites.Read.All and Sites.ReadWrite.All in the Microsoft Azure app. However, as those permissions grant access to all sites in the organization, it is impossible for any company to provide them because it compromises data security. Kindly provide an alternative or a change in the permissions required for SharePoint connectivity with thumbprint in Alteryx.

Reference case with Alteryx Support: Case #00619824

Thanks & Regards

Vamsi Krishna

Labels: Category Connectors, Enhancement

Hi,

Not sure if this Idea was already posted (I was not able to find an answer), but let me try to explain.

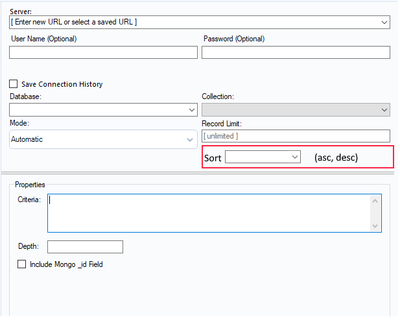

When I use the MongoDB Input tool to query the AlteryxService MongoDB (in order to identify issues on the Gallery), I have to extract all data from the collection AS_Result.

The problem is that there is a huge amount of data here, and extracting and then parsing _ServiceData_ (blob) consumes time and system resources.

The solution I am proposing is to add a sorting option to the MongoDB Input tool: a simple choice of ASC or DESC order.

With that, I could extract, for example, the last 200 records and do my investigation instead of extracting everything.

In addition, it would be much easier to estimate the daily workload and extract (via Scheduler) only the amount of data we need to analyze every day, and load the results to an external DB.

Thanks,

Sebastian

Labels: Category Connectors, Data Connectors

Connect to PowerPivot data model or an Atomsvc file

Labels: Category Connectors, Data Connectors

My organization uses the SharePoint Files Input and SharePoint Files Output tools (v2.1.0) and connects with the Client ID, Client Secret, and Tenant ID. After a workflow is saved and scheduled on the server, users receive the error "Failed to connect to SharePoint AADSTS700082: The refresh token has expired due to inactivity" every 90 days. My organization is not able to extend the 90-day limit or create non-expiring tokens.

It would be great if the SharePoint connectors could automatically refresh the token when it expires so users don't have to open the workflow and do it manually.

Labels: Category Connectors, Data Connectors

When importing a csv file, I sometimes use a "TAB" as the delimiter. In section 5, Delimiters, I would like that as an option.

I have learned that it is possible to write "\t", but a normal choice in the list would be nice.
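For comparison, this is how the same "\t" convention looks when reading a tab-delimited file with Python's standard `csv` module. It is only meant to show what the escape sequence stands for; the function name `read_tab_delimited` is made up for the example and has nothing to do with the Alteryx Input tool.

```python
import csv
import io


def read_tab_delimited(text):
    """Parse tab-delimited text into a list of rows (each row is a list of strings)."""
    # "\t" is the escape sequence for a single TAB character.
    return list(csv.reader(io.StringIO(text), delimiter="\t"))


print(read_tab_delimited("name\tcity\nAnna\tOslo\n"))
```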

Labels: Category Connectors, Data Connectors

Connecting to Smartsheets using Alteryx Desktop (and by extension, Alteryx Server) is extremely cumbersome. If a user wants to read data from Smartsheet, they are required to get an API token (preferred) or use a username/password

Then do one of the following to read data from Smartsheets:

1. a. Install an ODBC driver

b. Configure a DSN connection for ODBC

c. Use the Input Data tool with a generic ODBC connection

or

2. Use Python

To write data to Smartsheets, a user can use Python or upload the data using an API call - both very hard for end users to use especially if they're not Python developers.

Regardless, all of these are problematic. On the server I manage, I have over 15 ODBC connections to Smartsheets and it's getting very hard to upgrade the server hardware because of them. Creating a native connector for input/output of data to Smartsheets will eliminate a headache of managing ODBC connections, and make it simple for Alteryx Desktop users to read and write data.

Labels: Category Connectors, Data Connectors

When an email body is imported using the latest version of the Outlook 365 tool, the tool removes the new line separators from the message body, which makes it difficult to parse relevant information out of it. The new line separators are there before the message is imported into Alteryx, as can be verified by importing the same message using different tools (for example, Python or Power Automate). Without new line separators it is not possible to accurately parse the message body using Alteryx. Please enhance the Outlook 365 tool so that it doesn't remove new line separators from the message body.

This limitation of the Outlook 365 tool has been discussed in the community

Labels: Category Connectors, Data Connectors

Hyperion Smartview Connect

Labels: Category Connectors, Data Connectors

Hi all,

Currently, only the SharePoint List tool (deprecated) works with DCM. It would be amazing if the SharePoint Files Input/Output tools also worked with DCM.

Thank you,

Fernando Vizcaino

Labels: Category Connectors, Data Connectors

https://community.alteryx.com/t5/Alteryx-Designer-Discussions/BigQuery-Input-Error/td-p/440641

The BigQuery Input Tool utilizes the TableData.List JSON API method to pull data from Google Big Query. Per Google here:

- You cannot use the TableDataList JSON API method to retrieve data from a view. For more information, see Tabledata: list.

This is not currently supported functionality of the tool. You can post this on our product ideas page to see if it can be implemented in a future product release. For now, I would recommend pulling the data from the original table itself in BigQuery.

I need to be able to query tables and views. I am not sure I know how to use the TableDataList JSON API.

Labels: Category Connectors, Data Connectors

Hello all,

HDFS (Hadoop Distributed File System) connection is widely used to load data efficiently on Hadoop, for Hive, Spark or Impala. However, it's not compatible with the new DCM.

Best regards,

Simon

Labels: Category Connectors, Data Connectors
