Alteryx Designer Desktop Discussions

Find answers, ask questions, and share expertise about Alteryx Designer Desktop and Intelligence Suite.

Stop workflow processing when Iterative Macro hits error condition

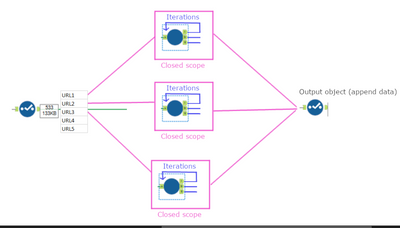

I provide a list of URLs in a workflow to an Iterative Macro that will then query an API.

Some of the URLs need multiple iterations; most finish without any (iteration = 0).

It all worked well until I started hitting a request limit. I am now trying to stop processing any further URLs once I am within a certain percentage of that limit. I have no problem stopping the macro from iterating, but I cannot stop it from processing each URL at least once.

My first thought was that I might be able to loop the 'E'(rror) output back to a filter like so, but I assume that this backward loop does not make sense.

Since the Iterative Macro 'resets' with every new row, I do not know how I can stop the workflow from running.

I am sending an Error Message from the macro and have set it up to cancel the workflow on error.

The macro still works through all the rows of its input.

Any suggestions would be very welcome.

Labels: Iterative Macro

Hi @SvenD,

Do you know the rate limit in advance? If so, you could have your iterative macro exit the process once it reaches a certain limit. You probably have the macro set up right now to exit once it finishes all the URLs, right? An iterative macro will stop processing once there are no records left in the loop. So instead of having it process all records, keep a cumulative iteration count in the loop. Append the column to all the URLs at the beginning or end of the macro, then have a filter tool remove all remaining URLs once the limit is reached.
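To make the suggestion concrete, here is a minimal Python simulation (not Alteryx itself, which is configured visually) of an iterative macro that handles one URL per pass, appends a cumulative call count to every remaining record, and filters everything out once a cap is reached. The function name `run_with_call_cap` and the `fetch` callback are hypothetical stand-ins for the macro body and the Download tool.

```python
from typing import Callable


def run_with_call_cap(urls: list[str], max_calls: int,
                      fetch: Callable[[str], str]) -> list[tuple[str, str]]:
    """Simulate an iterative macro that fetches one URL per iteration and
    stops looping the remaining URLs once `max_calls` requests have been made."""
    results: list[tuple[str, str]] = []
    # Each looped record carries its URL plus the appended cumulative count.
    records = [(url, 0) for url in urls]
    while records:  # the macro ends when nothing reaches the iteration output
        (url, calls), rest = records[0], records[1:]
        results.append((url, fetch(url)))
        calls += 1
        # Append the updated count to every remaining record ...
        records = [(u, calls) for u, _ in rest]
        # ... and filter them all out once the cap has been reached.
        records = [(u, c) for u, c in records if c < max_calls]
    return results


print(run_with_call_cap(["a", "b", "c", "d"], 2, str.upper))
# [('a', 'A'), ('b', 'B')]
```

As written, the first URL is always fetched; a cap of 0 would need an extra check before the loop.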

I have accepted your suggestion as a solution too early. I should have had my tea first ;)

The problem I have is that I cannot pass any information to the next row - the problem is not happening between iterations, it is happening between rows.

This is what I imagine is happening in the Alteryx engine at the moment:

A list of URLs is passed on to the macro. A new instance of the macro (#1) is created with one URL as its parameter. It runs through its iterations and then outputs the data. Then the instance (#1) of the macro is deleted.

Then the next URL from the list starts a new instance (#2) of the macro. It runs through its iterations and then outputs the data (appended to the output of instance #1). Then the instance (#2) of the macro is deleted.

I cannot pass any information between the instances. My solution would have been either a global variable (which is not available) or some kind of feedback/extra column (like you would normally do between iterations).

I am thinking about wrapping the iterative macro in another iterative macro, which should give me a way of 'interrupting' the processing after each row as far as I understand.

Again any suggestions are welcome, including redesigning the process.

Hello @clmc9601,

Thank you for taking the time to reply. It has been very helpful to think about my solution. I have not found a way to fix it, but I will continue to work on it.

Please find my answers below.

>Do you know the rate limit in advance?

I do not know the limit in advance. The remaining rate limit is sent in the reply header and is shared across the company.

>If so, you could have your iterative macro exit the process once it reaches a certain limit.

The problem I have is that I cannot stop the iterative macro from processing future rows. I can stop it from iterating for each URL, so it only runs once per URL, but I cannot stop it from running again for the next URL once an API error has been received.

Each time I output [Engine.IterationNumber], it comes back as '0' (except in the rare cases where I have to make 2 API requests for the same URL, i.e. paged results). To me that means that the iterative macro 'resets' for each row, making it impossible to feed any data back (such as a 'do not process' flag).

>You probably have the macro set up right now to exit once it finishes all the URLs, right?

It is set up to finish once all data has been downloaded from the API for one URL - it only ever sees one URL and not the whole list.

Looking at my message log, it appears, as described above, that the iterative macro is not iterating through the list but rather creating new instances.

The log file shows Iteration '0' ([Engine.IterationNumber]) for each URL.

>So instead of having it process all records, keep a cumulative iteration count in the loop.

I don't seem to be able to have anything cumulative as I don't seem to be able to pass any data from one instance to the next. I can pass data from one iteration to the next (which is needed for getting paged results).

>Append the column to all the URLs at the beginning or end of the macro, then have a filter tool remove all remaining URLs once the limit is reached.

Somehow I cannot get the API result to a filter in front of the macro.

Is it correct to say that iterative macros work through the URLs one row at a time when the input is given a list of URLs?

Does that mean the macro only sees the data in that one row and runs within its closed scope? I can feed data back via the 'iterative output', but only within that specific row. Is it unable to access the list of URLs yet to be processed? Does it only get the next row once processing for the current row has completed?

Hi @SvenD,

If you put all your URLs through a download tool inside the iterative macro, then yes it will process them all at once. That being said, you can force it to process one at a time and evaluate against the limit after each one. This is more computationally expensive, but it can help prevent overage. I created a skeletal iterative macro that shows my idea for looping one at a time-- do you think this would help your use case?

To answer your other questions at the bottom of the previous post:

If you were using a batch macro, that would be exactly the execution process. Batch macros take one incoming line at a time, at least for the Control input. The download tool itself acts similarly: it processes each row individually. However, iterative macros behave differently. Iterative macros will evaluate all rows sequentially and together, then evaluate against a condition, then loop the rest of the records again. I wonder if your iterative macro isn't functioning like an iterative macro, especially if all your iteration numbers are 0. Sounds like your condition is causing the macro to act just like a standard macro, or something to that effect.
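The difference between the two execution models can be sketched in Python. This is a simplified simulation under stated assumptions, not how the Alteryx engine is implemented: the hypothetical `run_batch_macro` reruns a body once per control row, while the hypothetical `run_iterative_macro` hands the body every current record at once and loops whatever it sends to the iteration output. The page counts in `PAGES` are made up to mimic the paged API results mentioned earlier in the thread, and the `max_iterations` stop is only this sketch's safeguard.

```python
def run_batch_macro(control_rows: list, body) -> list:
    """Batch macro: one complete run of `body` per control row, results unioned."""
    output: list = []
    for row in control_rows:
        output.extend(body(row))
    return output


def run_iterative_macro(records: list, body, max_iterations: int = 100) -> list:
    """Iterative macro: `body` sees ALL current records in one pass and returns
    (output_records, iteration_output_records). Looping stops when the
    iteration output is empty (or, in this sketch, at max_iterations)."""
    output: list = []
    iteration = 0  # plays the role of [Engine.IterationNumber]
    while records and iteration < max_iterations:
        done, records = body(records, iteration)
        output.extend(done)
        iteration += 1
    return output


# Hypothetical data: how many result pages each URL has.
PAGES = {"u1": 1, "u2": 2, "u3": 1}


def paging_body(records: list, iteration: int):
    done, again = [], []
    for rec in records:  # every record in this pass is handled together
        done.append({**rec, "iteration": iteration})
        if rec["page"] < PAGES[rec["url"]]:  # more pages: loop this URL again
            again.append({**rec, "page": rec["page"] + 1})
    return done, again


result = run_iterative_macro([{"url": u, "page": 1} for u in PAGES], paging_body)
print([(r["url"], r["page"], r["iteration"]) for r in result])
# [('u1', 1, 0), ('u2', 1, 0), ('u3', 1, 0), ('u2', 2, 1)]
```

In this sketch most records finish in iteration 0, and only the URL with a second page reaches iteration 1.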

Is your API in Download or in Python (or something else)? If my attached skeleton doesn't help, could you please attach a sample of your macro?

Hello @clmc9601

Thank you so much for the reply.

I will have to check the iterative macro - it might be the case that I once added a control input and it now behaves like a batch macro.

It's time to knock off here. I hope you have a wonderful weekend, and I'll check your workflow on Monday morning.

Hi @SvenD,

Yes, adding a control parameter automatically changes the workflow from an iterative macro to a batch macro. Only batch macros can contain control parameters.

Sounds good! Have a great weekend.

Hello @clmc9601,

I use the Download tool. The batch-like nature of that tool is what caused all that confusion. All my debug messages also triggered after each URL was processed by the Download tool (as I had set them up to send messages "Before Rows Where..."), leading me to think that something wasn't right with the iterative macro.

My macro does indeed do 1 iteration (or 2 runs) for the very few URLs that need to request a 2nd page, and all of them are processed in that first iteration, as these were the only ones passing the filter to the iteration output.

Now that I have a better understanding of the iterative macro, I have looked at your great example.

I have used your idea of the 'First' and 'Skip First' row to only download one URL per iteration.

I also use the feedback from the download tool (the API returns remaining vs limit). There are probably better ways to do this, but for my example, I ended up adding a column that tracks limit issues. I then summarise over that column and update all rows with the maximum.

So in the next iteration I can filter all URLs out once a limit issue has been encountered.

I can cut that down to just the 'append field' tool, but I leave that as an exercise for the reader ;)
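A minimal Python sketch of this design, under these assumptions: `download` is a hypothetical stand-in for the Download tool plus parsing the API's remaining/limit headers, `threshold` is the fraction of the limit below which processing should stop, and the Summarize-and-update route is used rather than the Append Fields shortcut. Each pass filters out flagged rows, takes the first row ('First') and loops the rest ('Skip First'), downloads one URL, sets the limit flag, and writes the maximum flag onto every looped row.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Row:
    url: str
    limit_hit: int = 0  # extra column that tracks limit issues (0 or 1)


def macro_body(rows: list[Row], threshold: float,
               download: Callable[[str], tuple[int, int]]):
    """One pass of the iterative macro; returns (output, iteration_output)."""
    rows = [r for r in rows if r.limit_hit == 0]  # Filter: drop flagged URLs
    first, rest = rows[:1], rows[1:]              # 'First' / 'Skip First' split
    output = []
    for row in first:                             # at most one download per pass
        remaining, limit = download(row.url)
        row.limit_hit = int(remaining / limit < threshold)
        output.append((row.url, remaining))
    # Summarize the flag with Max, then update every looped row with that value.
    flag = max((r.limit_hit for r in first + rest), default=0)
    return output, [Row(r.url, flag) for r in rest]


def run_macro(urls: list[str], threshold: float, download) -> list[tuple[str, int]]:
    rows, results = [Row(u) for u in urls], []
    while rows:  # stops once nothing reaches the iteration output
        out, rows = macro_body(rows, threshold, download)
        results.extend(out)
    return results


def make_fake_api(remaining: int, limit: int):
    """Hypothetical API: each call uses one request and reports (remaining, limit)."""
    state = {"remaining": remaining}

    def download(url: str) -> tuple[int, int]:
        state["remaining"] -= 1
        return state["remaining"], limit

    return download


results = run_macro([f"u{i}" for i in range(1, 7)], 0.25, make_fake_api(5, 10))
print(results)  # [('u1', 4), ('u2', 3), ('u3', 2)]
```

The URL that trips the threshold (`u3` here) is still downloaded, because its request has already been sent; only the URLs after it are skipped.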

Regards,

Sven

“It is practically impossible to teach good programming to students that have had a prior exposure to BASIC: as potential programmers they are mentally mutilated beyond hope of regeneration” Edsger W. Dijkstra, 18 June 1975

I'm glad to hear you got it working! Thanks for the description of batch behavior of the Download tool-- I'm sure that will help future question-askers. I agree, the batch behavior of the Download tool was difficult for me to understand at first, too. Happy solving!