Alteryx Designer Desktop Discussions


Quote Marks in CSV File


I have a CSV file where the fields are not enclosed in quote marks but some text fields contain quote marks as part of the data - for example 5" X 3" Cards. Rows with this kind of value don't import correctly. I've tried all four settings of Option 9 of the Input Data tool "Ignore Delimiters in" without success.

The odd thing is that this file opens up perfectly in Excel. So my workaround is to open the CSV in Excel and then save it as an Excel document and use that as my input.

If I were to guess at a solution, it might be:

- Automate the conversion of the CSV file to an Excel document.

- Otherwise transform the CSV file before importing it as data.

- Try something I haven't thought of.

The attached sample produces the kind of error I'm getting. Any ideas?

Thanks!


I would probably approach this in one of two ways, depending on what else is in the file.

Option 1:

- Open the CSV file in Alteryx as a non-delimited (e.g. delimiter == \0) format

- Replace all commas with ','

- Use Run Command to write out the modified file and re-import as a standard CSV

Option 2:

- Open the CSV file in Alteryx as a non-delimited format

- Replace all quotation marks with something else (e.g. ")

- Use Run Command to write out the modified file and re-import as a standard CSV


Hi @ericlaug,

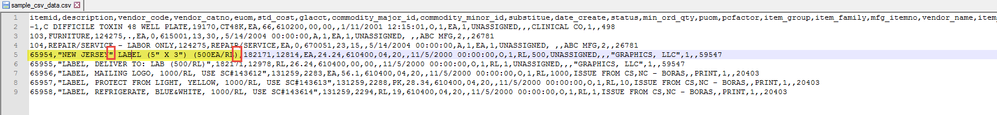

I'm taking a look at this, and I notice a strange format in one of these rows when I open the file in Notepad.

Row 5, which is the one I get the error on, has new jersey enclosed in quotes but no quote at the end of the string. If I remove the quotes, the string reads in for me without a warning message in Alteryx.

For me, this row is the culprit, because if I delete it in Notepad, the file also reads in OK.

Manager, Technical Account Management | Alteryx


Thanks for the suggestion, Tom, but neither option will work in my particular case. Some of my values contain commas, some contain quotes, and some contain a combination of both.

The software that creates this output is proprietary. It does seem to know enough to enclose the entire value in double quotes when the data contains commas, for example "LABEL, MAILING LOGO, 1000/RL, USE SC#143612".


Hi Jessica,

It looks like you missed the full value of the field. The actual value is "NEW JERSEY" LABEL (5" X 3") (500EA/RL). But you are correct that this is a row that causes the problem. Unfortunately, my actual data set contains several hundred thousand rows, and deleting or editing the offending rows (or even finding them) is not really practical.

Thanks,

Eric
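For readers who do want to locate such rows in a large file, here is a rough Python sketch, offered as a heuristic rather than a definitive check. It flags any line containing a double quote that sits neither directly after a comma (or line start) nor directly before a comma (or line end). The function name `find_suspect_rows` and the `ID,Description` header are hypothetical; the sample values are the ones quoted in this thread.

```python
def find_suspect_rows(lines, delimiter=",", quote='"'):
    """Return 1-based line numbers that contain a quote away from a field boundary."""
    suspects = []
    for line_number, raw in enumerate(lines, start=1):
        line = raw.rstrip("\r\n")
        for i, ch in enumerate(line):
            if ch != quote:
                continue
            # A "boundary" quote touches the line start/end or a delimiter.
            at_start = i == 0 or line[i - 1] == delimiter
            at_end = i == len(line) - 1 or line[i + 1] == delimiter
            if not (at_start or at_end):
                suspects.append(line_number)
                break  # one hit is enough to flag the line
    return suspects


# Hypothetical header; values taken from the thread.
sample = [
    'ID,Description\n',
    '1,"LABEL, MAILING LOGO, 1000/RL, USE SC#143612"\n',
    '2,"NEW JERSEY" LABEL (5" X 3") (500EA/RL)\n',
    '3,5" X 3" Cards\n',
]
suspect_lines = find_suspect_rows(sample)
```

Because this only looks at the characters next to each quote, it can misfire on unusual values (for instance a quote that happens to sit next to a comma inside a quoted field), so treat the result as a list of candidates to inspect.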


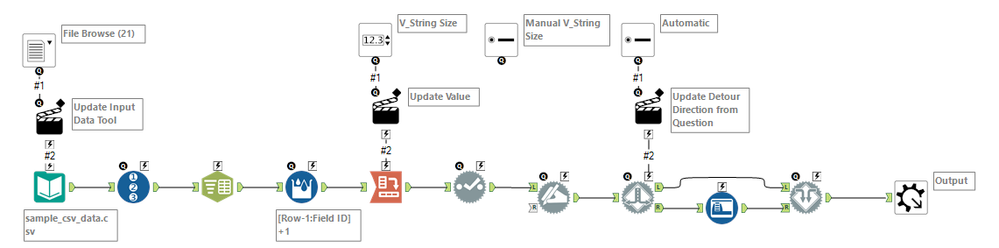

I think you might be able to trick the system in the actual Input tool... try this:

1. Change the delimiter type to something other than a comma (the default). For example, a pipe | might do the trick, as long as you don't have pipes in your data (seems like you wouldn't based on the sample, but hard to say). I would also uncheck the box for First Row Contains Names. This will bring everything into one field (because it won't find any delimiters to split on).

2. Use the Text to Columns tool on the single field to split it into your 22 columns based on the comma delimiter, ignoring delimiters in quotes.

3. Select tool to remove the initial column that had everything in it, then Dynamic Rename to take the column names from the first row.

See attached... might be a weirdly simple solution that actually does what you need it to do?? To spot check it, you could filter for a column that you know needed to contain specific data (numbers, a word in a specified list, etc.) to make sure there are no more rogue transactions.

Hope that helps! :)

NJ
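Outside of Alteryx, the same idea (read each line as one field, split on commas while ignoring commas between quote marks, then take the names from the first row) can be sketched in Python. This is only an illustration of the logic, not a description of how Text to Columns is implemented. It assumes every quote inside a field is paired, keeps quote characters as literal data, and uses hypothetical names (`split_csv_line`, `read_table`) and a hypothetical three-column header.

```python
def split_csv_line(line, delimiter=",", quote='"'):
    """Split one line on the delimiter, ignoring delimiters between quote pairs.

    Quote characters are kept in the output instead of being stripped.
    """
    fields = []
    current = []
    in_quotes = False
    for ch in line:
        if ch == quote:
            in_quotes = not in_quotes  # toggle on every quote mark
            current.append(ch)
        elif ch == delimiter and not in_quotes:
            fields.append("".join(current))
            current = []
        else:
            current.append(ch)
    fields.append("".join(current))
    return fields


def read_table(lines):
    """Split every non-empty line, then use the first row as column names."""
    rows = [split_csv_line(l.rstrip("\r\n")) for l in lines if l.strip()]
    header, data = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in data]


# Hypothetical header; the Description values come from the thread.
sample = [
    'Item,Description,Qty\n',
    '1,"NEW JERSEY" LABEL (5" X 3") (500EA/RL),10\n',
    '2,"LABEL, MAILING LOGO, 1000/RL, USE SC#143612",5\n',
]
records = read_table(sample)
```

A field with an odd number of quote marks (say a lone 5" with no matching 3") would leave the toggle stuck "inside quotes" and swallow the following commas, so this approach depends on quotes arriving in pairs.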


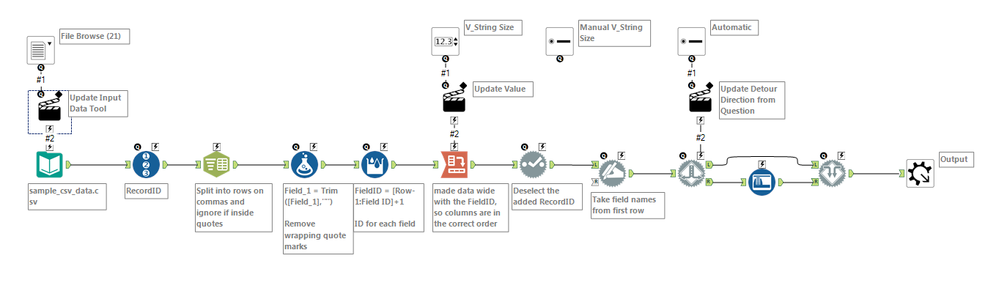

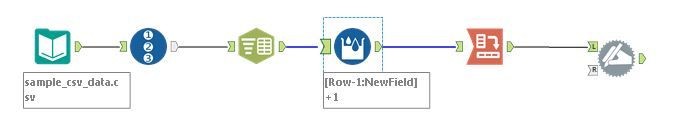

@NicoleJohnson We can use \0 as the delimiter, and that will tell the Input tool not to split a line into columns.

Nice work using so few tools. To take it a step further, what is the smallest number of tools needed so that we do not have to know or specify the number of columns beforehand?


Even better, @Joe_Mako!! That's exactly the "trick" I was looking for, \0. Definitely going to log that one away... (Lordy, I love this place.)

Okay, 5 tool solution to get to the right number of columns without knowing the number of columns ahead of time: RecordID > Text to Columns (split to rows) > Multi-Row Tool w/ Group By RecordID to generate "column numbers" (I'm sure you could use Tile tool, but I personally like Multi-Row better) > Cross-Tab > Dynamic Rename. Can it be fewer tools than that?? Ready, set, go. :)

NJ
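The five-tool chain above (RecordID, split to rows, number the fields within each record, Cross-Tab, Dynamic Rename) can be mirrored in a short Python sketch that never needs the column count up front. The function names and the two-column sample header are hypothetical, and the splitting rule is the same assumption as before: commas between paired quote marks are not treated as delimiters.

```python
from collections import defaultdict


def split_to_rows(lines):
    """Yield (record_id, column_number, value): one output row per field."""
    for record_id, line in enumerate(lines, start=1):
        in_quotes = False
        column_number = 1
        current = []
        for ch in line.rstrip("\r\n"):
            if ch == '"':
                in_quotes = not in_quotes
                current.append(ch)
            elif ch == "," and not in_quotes:
                yield record_id, column_number, "".join(current)
                column_number += 1
                current = []
            else:
                current.append(ch)
        yield record_id, column_number, "".join(current)


def cross_tab(long_rows):
    """Pivot the long rows back to one {column_number: value} dict per record."""
    table = defaultdict(dict)
    for record_id, column_number, value in long_rows:
        table[record_id][column_number] = value
    return [table[record_id] for record_id in sorted(table)]


def rename_from_first_row(wide_rows):
    """Use the first record as column names, like Dynamic Rename."""
    header, data = wide_rows[0], wide_rows[1:]
    return [
        {header.get(col, f"Column{col}"): val for col, val in row.items()}
        for row in data
    ]


# Hypothetical header and values for illustration.
sample = ['Item,Description\n', '1,5" X 3" Cards\n', '2,"A, B"\n']
result = rename_from_first_row(cross_tab(split_to_rows(sample)))
```

Rows with more fields than the header fall back to a generated name such as Column3, which makes ragged rows easy to spot afterwards.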
