Alteryx Designer Desktop Discussions

Add File Creation Date as Column From Directory Input.

Hi All,

I am trying to build an archive of data files stored in several sub-folders of one master folder. There are 70+ files in total, each averaging about 20k rows, and most of the files have the same name because they were generated by the same cloud system. It is a database of customer lead scores over a period of time, so most of the file entries are duplicates. The only thing we can use to differentiate them is the date each file was created/generated from the cloud. So far I have been able to merge all the files from all the sub-folders into a single database file using a batch macro. But in order to differentiate each entry in the master file, I need to add the file creation date, which is not a column in each file. Any ideas on how I can do this?

Thank you.

Hi @abdulib

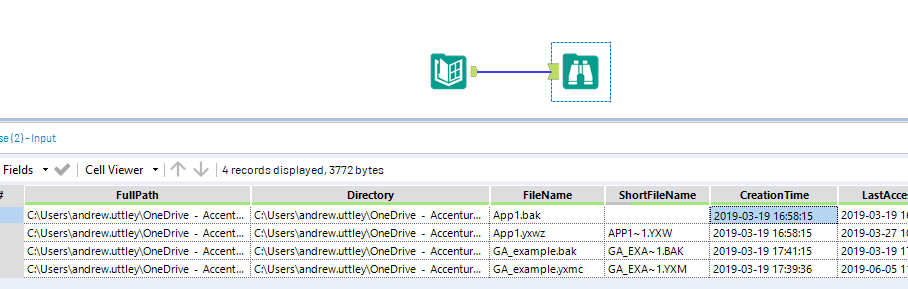

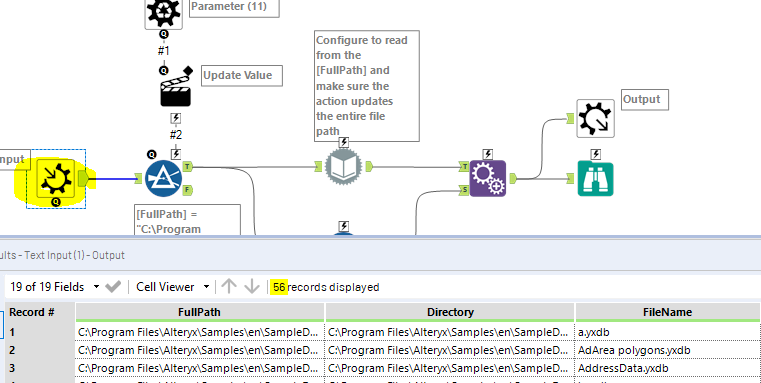

Where are you reading these files from? If it's from a local/network drive you can just use a Directory input at the start, which will give you the info you need downstream:

...after which, you could use the dynamic input tool to bring in your files along with the [CreationTime] field as part of your batch process.

Or are you reading from a database?

Andy

Thank you for your response @andyuttley.

I am reading the files in from a local/network drive.

Can you explain further how to use the Dynamic Input tool to bring in the files along with the [CreationTime] field as part of the batch process?

This was the original batch macro I used to merge the files.

No problem.

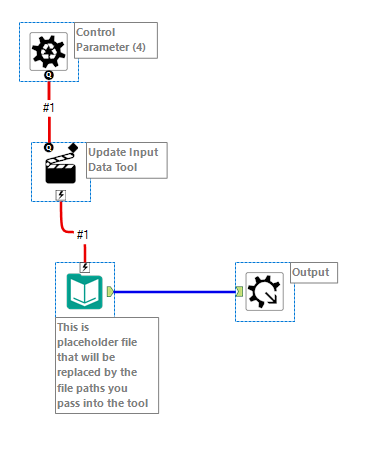

Assuming your control parameter is just feeding a list of file paths, you could instead retrieve these paths from the Directory tool, as this will give you the extra info you need, such as [CreationTime] (and others).

You can then maintain this information once you've brought the actual data in (in the example below I'm using a Dynamic Input):

Note that I've just used a select records tool (of 1 row) to replicate what would be your batch macro.
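For readers who think in code, the idea above can be sketched in Python. This is only a rough analogue of the Directory tool plus Dynamic Input, not what Alteryx does internally. It assumes the files are CSVs with a header row; the function names (`list_files`, `read_with_creation_time`, `build_archive`) are hypothetical, and the column names `FullPath` and `CreationTime` simply mirror the Directory tool's fields.

```python
import csv
import os
from datetime import datetime


def list_files(root, suffix=".csv"):
    """Walk root and its sub-folders, returning one record per matching file."""
    records = []
    for folder, _subfolders, names in os.walk(root):
        for name in sorted(names):
            if not name.lower().endswith(suffix):
                continue
            full_path = os.path.join(folder, name)
            info = os.stat(full_path)
            # Assumption: st_birthtime is used where the platform exposes it.
            # On Windows st_ctime is the creation time; on Linux it is the last
            # metadata change, so it is only an approximation there.
            created = getattr(info, "st_birthtime", info.st_ctime)
            records.append(
                {"FullPath": full_path, "CreationTime": datetime.fromtimestamp(created)}
            )
    return records


def read_with_creation_time(record):
    """Read one CSV and stamp every row with the file's path and creation time."""
    with open(record["FullPath"], newline="", encoding="utf-8") as handle:
        rows = list(csv.DictReader(handle))
    for row in rows:
        row["FullPath"] = record["FullPath"]
        row["CreationTime"] = record["CreationTime"].isoformat(sep=" ", timespec="seconds")
    return rows


def build_archive(root):
    """Combine every file under root into one list of rows."""
    archive = []
    for record in list_files(root):
        archive.extend(read_with_creation_time(record))
    return archive
```

Because each row keeps both `FullPath` and `CreationTime`, files that share a name across sub-folders stay distinguishable after they are merged.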

There's also a great demo provided in your Alteryx Designer if you want to see some additional examples, just go to: Help -> Sample Workflows -> Learn One Tool at a Time -> Developer -> Dynamic Input

Hope that helps

Andy

Thank you very much @andyuttley

The solution works but it only imports and appends the creation time to the first file in the directory.

Apologies if my questions seem very basic. I only started using Alteryx last week.

Hey @abdulib

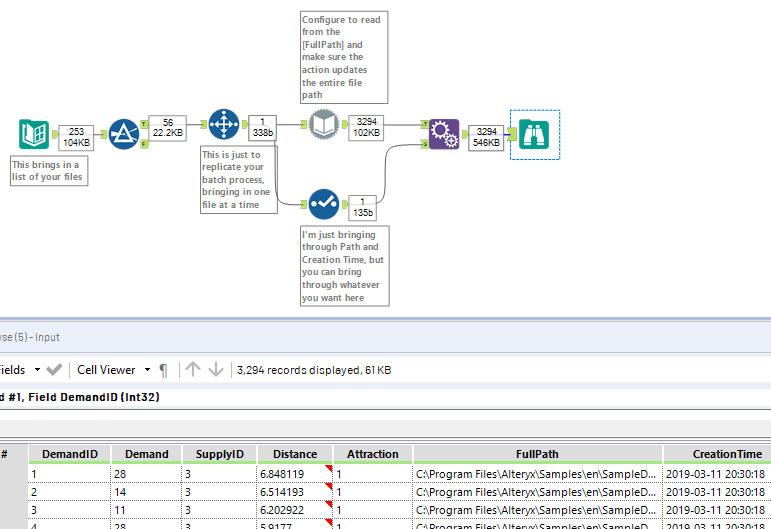

Great, pleased it works. Yes, that'll work just as well in a batch macro, which will run for all paths/files...

I've included a full example here, including batch macro, which I'm hoping you can just point at your directory and plug straight in.

....all I've done here is take the workflow from our example above and embed it in the macro so that it loops through all records (not just one)

Hope that helps!

Andy

Hi @andyuttley,

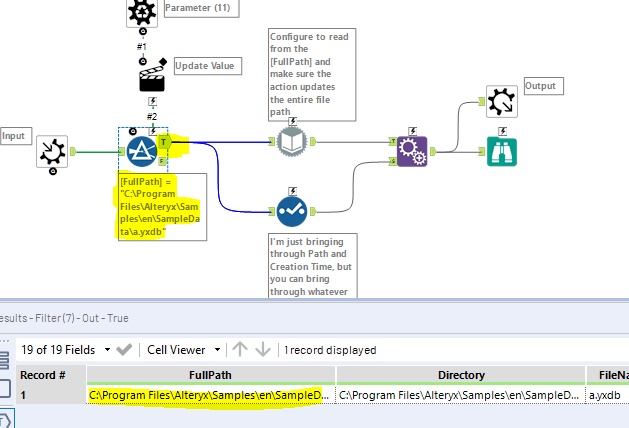

I can't seem to get it working for some reason. Can you explain how the filter works with the control parameter, and how it is configured in the action tool?

Hi @abdulib

Did you download my attached yxzp file above and run it? Hopefully you can see everything I’m referring to here.

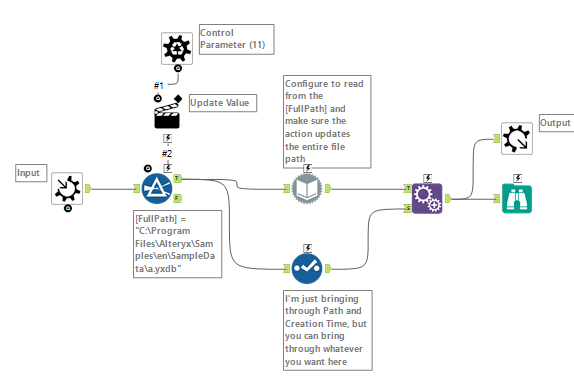

The control parameter doesn’t actually need any configuration; this tool is what makes the macro a batch macro, and will say ‘run it for every record you have feeding this’. The upside-down '?' you see feeding the batch macro is what's going into our control parameter.

The macro input itself (shown below) will contain many rows, but we only want to run one row at a time. If your data is structured slightly differently you’ll find that the dynamic input tool will start to skip files because they don’t match the source input. This is why we’re batching it; basically saying run it independently as many times as there are rows, and then just union them together.

The filter is only in place to ensure we’re just taking one row at a time. The way it’s configured is arbitrary, because it is the control parameter that will update this (via the action tool) for every single run:

The action tool is needed for the control parameter to update the filter so it matches the path of row 1 on run 1, row 2 on run 2, etc. It’s fairly simple to configure: just leave it as ‘update value’ and choose the static value in your filter (which is why that value was arbitrary); the action will update it on each run, as shown below:

![p3.PNG Here we're telling the action tool (on each run) to replace the value in [FullPath]=[Value] every time it runs. Hence the macro being run as many times as there are rows going into your control parameter (the upside-down '?')](https://community.alteryx.com/t5/image/serverpage/image-id/68226i1CB768279B167D57/image-size/large?v=v2&px=999)
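The control parameter, filter and action tool described above can be approximated in plain Python. This is only an illustrative sketch, not how Alteryx implements batch macros. The function `run_batch` and its `read_file` argument are hypothetical names, and the directory listing is assumed to be a list of dictionaries with `FullPath` and `CreationTime` keys.

```python
def run_batch(directory_rows, read_file):
    """Emulate the batch macro: one run per control value, then union the results."""
    all_rows, columns = [], []
    # The control parameter feeds one file path per run.
    for control_value in [row["FullPath"] for row in directory_rows]:
        # Action tool step: the filter's placeholder value is swapped for the
        # current control value, so only the matching row passes through.
        selected = [row for row in directory_rows if row["FullPath"] == control_value]
        for record in selected:
            for data_row in read_file(record["FullPath"]):
                stamped = dict(
                    data_row,
                    FullPath=record["FullPath"],
                    CreationTime=record["CreationTime"],
                )
                all_rows.append(stamped)
                # Track every column name seen, in first-seen order.
                for name in stamped:
                    if name not in columns:
                        columns.append(name)
    # Union by field name: a column missing from one file is filled with None.
    return [{name: row.get(name) for name in columns} for row in all_rows]
```

Reading each file in its own run is what lets files with slightly different columns coexist: nothing is skipped for failing to match the first file's layout, and the final step lines the columns up by name.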

Hopefully that answers your question? If yours still isn't working feel free to package it and I can take a look.

Andy

Hi,

I need help!

I am bringing in data from various folders and sub-folders using a Directory tool for a specific file name.

I need the full path and creation date along with it so that I can identify which location each line item came from.

For some reason the above method doesn't work for me.

Can you please help?

Thanks

Yogi
