Alteryx Designer Desktop Knowledge Base


Automating File Transfer Protocol (FTP) Downloads


on 04-20-2016 01:36 PM - edited on 07-27-2021 11:41 PM by APIUserOpsDM

So we’re now downloading all the network-shared documents we want thanks to the instructions posted on our Knowledge Base, and we’re on our way to mastering FTP in Alteryx. But what if we want to take it a step further? Many of our users rely on FTP as a drop zone for datasets that are generated periodically (e.g., weekly, monthly, or quarterly datasets). We should then be able to schedule a workflow to coincide with those updates, automatically select the most recent dataset, crank out all the sweet data blending and analytics in our scheduled workflow, and get on with the rest of our lives, right? Right. We can do just that, and with a little work up front, you can automate your FTP download and analysis to run while you’re enjoying the finer things in life. Here’s how in v10.1:

Say the file you want to download is ftp://ftp.url.here/FTP Example File.xlsx.

Each time period, this file is listed at the URL ftp://ftp.url.here/:

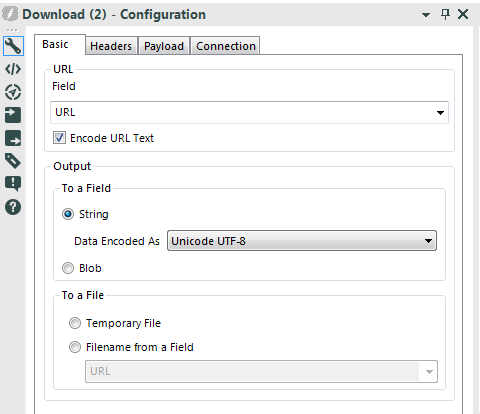

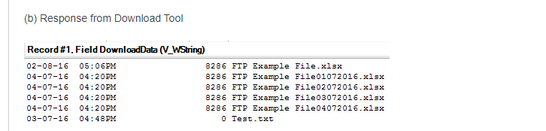

If we use this URL in our Download tool, set the Basic tab to output to a string field (a), and otherwise keep our configuration the same, most servers will return a listing of all the files at that URL (b).

(a) Basic Tab Configuration

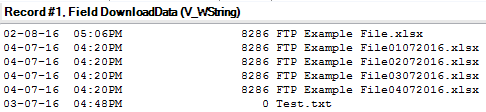

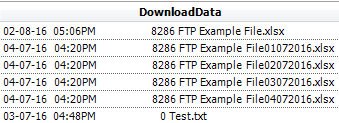

(b) Response from Download Tool

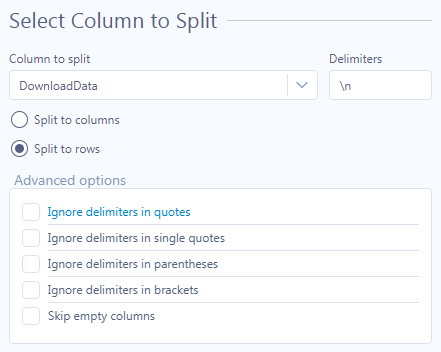

While the most recent version of the file can usually be identified by the latest date and/or time in the first two fields (we’ll parse those out, too), we’re going to use the date stamps in the file names instead, since that’s more difficult and we’re hardcore. The approach will vary depending on the formats here, but we’ll use regex in this case. First, let’s split the single response cell into multiple rows using the Text To Columns tool:

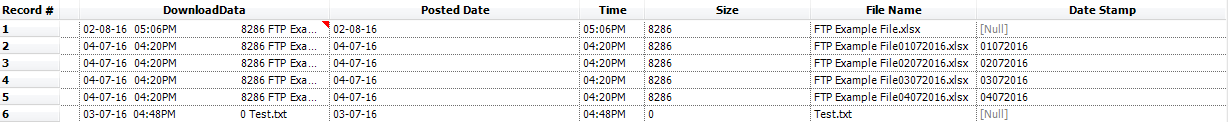

The file list will now look like the following:

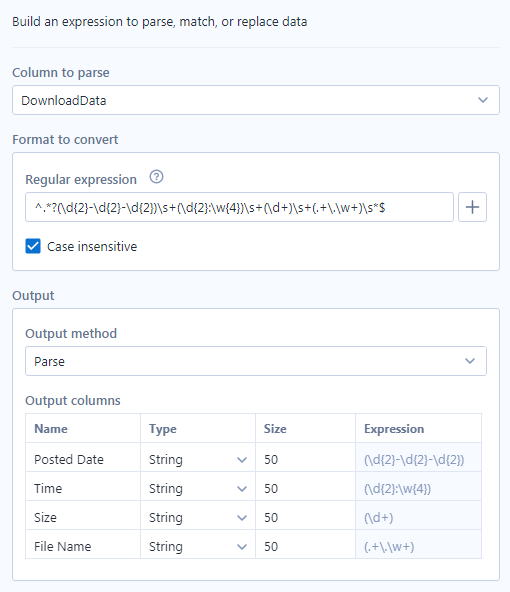
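For anyone who wants to prototype this step outside Designer, here is a minimal Python sketch of what Text To Columns does when it splits the single DownloadData cell into rows on line breaks. The listing text is a hypothetical IIS-style (MS-DOS format) example with made-up file names; your server's output will look different.

```python
# Hypothetical DownloadData value: one string holding an IIS-style
# (MS-DOS format) directory listing. Real server output will differ.
RAW_LISTING = (
    "02-08-16  09:12AM              10240 FTP Example File 20160208.xlsx\r\n"
    "01-11-16  10:05AM              10112 FTP Example File 20160111.xlsx\r\n"
    "\r\n"
    "12-07-15  08:47AM               9984 FTP Example File 20151207.xlsx\r\n"
)


def split_listing(raw: str) -> list[str]:
    """Return one entry per listing line, like Text To Columns split to rows.

    str.splitlines() handles both \\r\\n and \\n endings; blank lines are dropped.
    """
    return [line for line in raw.splitlines() if line.strip()]


if __name__ == "__main__":
    for row in split_listing(RAW_LISTING):
        print(row)
```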

Now let’s unleash the power of regex:

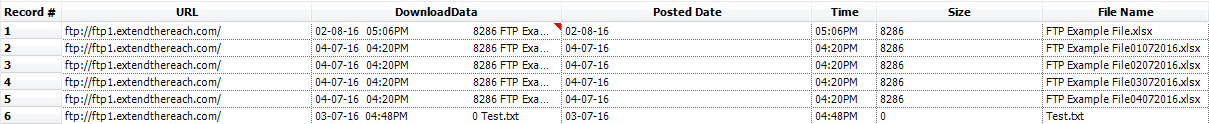

After the parsing above, the file list will look like the below:

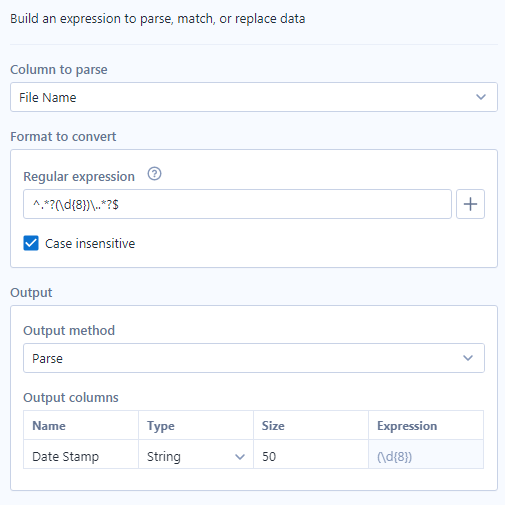
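As a sketch of the parsing step, the regular expression below splits one MS-DOS-style listing line into posted date, time, size (or `<DIR>`), and file name. It assumes that layout, which matches dates like "02-08-16" in the example response; it is not necessarily the exact expression used in the workflow above, and Unix-style listings need a different pattern.

```python
import re
from typing import Optional

# Assumed MS-DOS/IIS listing layout: MM-DD-YY, time, <DIR> or size, name.
LINE_RE = re.compile(
    r"^(?P<date>\d{2}-\d{2}-\d{2})\s+"
    r"(?P<time>\d{1,2}:\d{2}[AP]M)\s+"
    r"(?P<size><DIR>|\d+)\s+"
    r"(?P<name>.+)$"
)


def parse_line(line: str) -> Optional[dict]:
    """Split one listing line into named fields, or return None if it doesn't fit."""
    match = LINE_RE.match(line.strip())
    return match.groupdict() if match else None


if __name__ == "__main__":
    # Hypothetical listing line; the name keeps its internal spaces.
    print(parse_line("02-08-16  09:12AM       10240 FTP Example File 20160208.xlsx"))
```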

Just apply one more regular expression to get the date stamps out of the file names. The result looks like this:

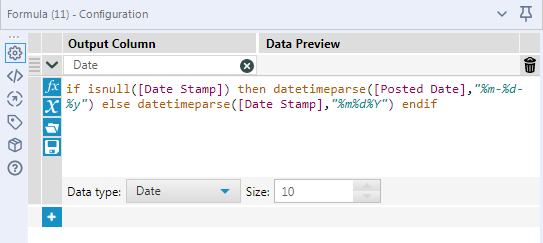
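The date-stamp extraction can be sketched the same way in Python. The pattern assumes a hypothetical naming convention in which the stamp is written as YYYYMMDD, YYYY-MM-DD, or YYYY_MM_DD; adjust it to match your own file names. Converting the match into a real date also rejects eight-digit runs that are not valid dates.

```python
import re
from datetime import date
from typing import Optional

# Hypothetical convention: YYYYMMDD, YYYY-MM-DD or YYYY_MM_DD, not embedded
# inside a longer run of digits.
STAMP_RE = re.compile(r"(?<!\d)(\d{4})[-_]?(\d{2})[-_]?(\d{2})(?!\d)")


def stamp_from_name(name: str) -> Optional[date]:
    """Return the date stamped in a file name, or None if there isn't a valid one."""
    match = STAMP_RE.search(name)
    if not match:
        return None
    year, month, day = (int(group) for group in match.groups())
    try:
        return date(year, month, day)
    except ValueError:  # e.g. "20161345" has eight digits but is not a date
        return None


if __name__ == "__main__":
    print(stamp_from_name("FTP Example File 20160208.xlsx"))
```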

Then we’ll throw in a little logic to use either a posted date or a file name date stamp, if provided (both converted to date types for sorting later):

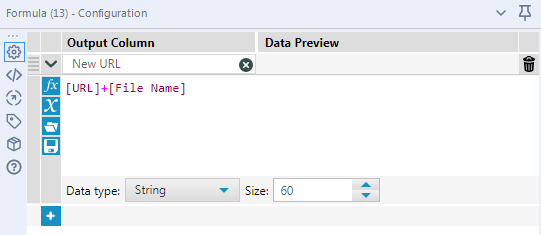
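Here is a hedged Python version of that fallback logic. It assumes the file name's date stamp takes priority and that the server's posted date (MM-DD-YY, as in the example response) is used otherwise; both end up as `date` objects so they sort correctly. The function names are hypothetical.

```python
from datetime import date, datetime
from typing import Optional


def posted_date(mm_dd_yy: str) -> date:
    """Convert an MS-DOS-style listing date such as '02-08-16' to a date."""
    # %y maps 00-68 to 2000-2068 and 69-99 to 1969-1999
    return datetime.strptime(mm_dd_yy, "%m-%d-%y").date()


def choose_date(name_stamp: Optional[date], posted: str) -> date:
    """Prefer the file-name stamp; fall back to the server's posted date."""
    return name_stamp if name_stamp is not None else posted_date(posted)


if __name__ == "__main__":
    print(choose_date(None, "02-08-16"))
```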

Now that we’ve isolated the dates we’ll use to determine which file to open, we can simply sort descending by the Date field, sample the first record, and create a new URL for our FTP request using the file name corresponding to that date.

Once that’s done, all we need to do is follow the FTP instructions we had before and schedule the workflow to run just after the files are dropped!
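The sort, sample, and URL steps can be sketched in Python as follows. `files` is a hypothetical list of (file name, date) pairs produced by the earlier steps, and the base URL is the example folder from this article. Percent-encoding the name (spaces become `%20`) is an assumption: some servers and clients expect it, others accept the raw name, so the sketch makes it optional.

```python
from datetime import date
from urllib.parse import quote

# Example folder URL from the article; replace with your own.
BASE_URL = "ftp://ftp.url.here/"


def latest_file_url(files: list[tuple[str, date]],
                    base_url: str = BASE_URL,
                    encode: bool = True) -> str:
    """Sort (name, date) pairs newest first, take the first one (like Sample),
    and append its name to the folder URL."""
    if not files:
        raise ValueError("no files to choose from")
    newest_name, _newest_date = sorted(files, key=lambda f: f[1], reverse=True)[0]
    # quote() percent-encodes spaces and other unsafe characters ("/" is kept)
    name = quote(newest_name) if encode else newest_name
    return base_url + name


if __name__ == "__main__":
    listing = [
        ("FTP Example File 20160111.xlsx", date(2016, 1, 11)),  # hypothetical
        ("FTP Example File 20160208.xlsx", date(2016, 2, 8)),   # hypothetical
    ]
    print(latest_file_url(listing))
```

Sorting and taking the first element mirrors the Sort and Sample tools; `max(files, key=...)` would give the same file in a single pass.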


Awesome, thanks for sharing! This is exactly what I was looking for. Now if I can just get the external vendor's SFTP, our firewall, and the Alteryx software all working together, I'll be in business!


Hi,

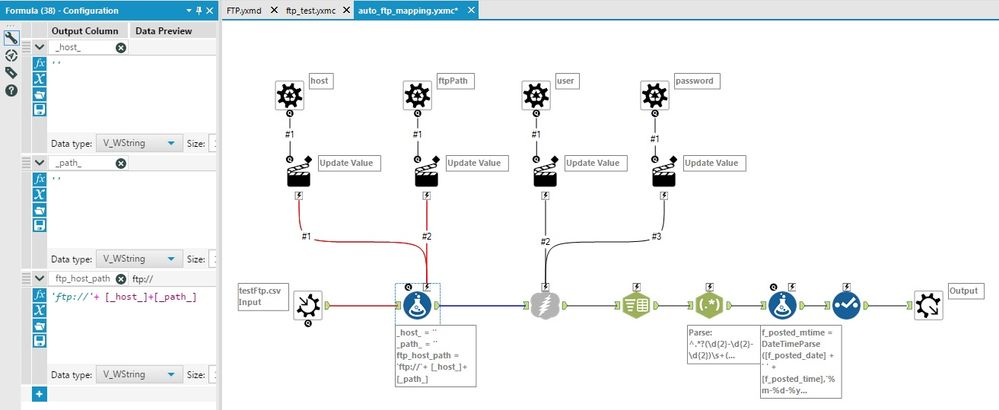

I was following the process (more or less) and it works, but what I really want is to create a macro that updates the input parameters (host, user, pass, folderPath) of an FTP transfer connector, supplied beforehand in different columns, and outputs the list of files on those FTP servers. The idea is to create a mapping for the input parameters, where the user can later choose, in the macro itself, which columns feed each parameter.

This works when I pass the URL (host+folderPath) in before the macro and update only the user and password, but it does not work when host+folderPath is built dynamically.

Some help would be very much appreciated. I'm unable to attach a workflow, so I've posted an image of the macro.

All the Control Parameter actions update only the expression value (the default).

The Formula tool creates the URL string from the updated inputs (host+folderPath) before it is passed into the FTP connector, but I suspect this is the part that doesn't work as a batch macro.
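One way a dynamically built URL can fail is a missing or doubled slash between host and folderPath, or a scheme typed into the host column as well as added by the formula. We can't tell from the post whether that is the cause here, but the Python sketch below shows the string logic such a Formula tool would need. `host`, `folder_path`, and the `ftp_folder_url` helper are hypothetical stand-ins for the columns described; the trailing slash matches the folder URL format used in the article (ftp://ftp.url.here/).

```python
def ftp_folder_url(host: str, folder_path: str, scheme: str = "ftp") -> str:
    """Join host and folderPath into one folder URL with single slashes.

    Hypothetical helper: strips whitespace, drops a scheme already present
    in the host value, and ends the URL with a slash like the article's
    example folder URL.
    """
    host = host.strip().rstrip("/")
    if "://" in host:
        host = host.split("://", 1)[1]
    path = folder_path.strip().strip("/")
    return f"{scheme}://{host}/{path}/" if path else f"{scheme}://{host}/"


if __name__ == "__main__":
    print(ftp_folder_url("ftp.url.here", "/reports/weekly"))
```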


Hi @MattD!

This concept looks cool. I have a use case that is a little different and would like to know some benchmarks or best practices that could help.

1. What if my input files are 20-40 GB in size and sit on different shared drives across networks (not hosted FTP locations)?

2. The process is slow to read such a huge file, which cannot be broken down.

3. This usually happens when I pick files from a distant server (overseas: the servers are physically in a different country, within organization limits).

4. What would be an alternative way to handle such files? We read these universe files frequently as they get updated, so copying them locally every time is also slow.

So:

1. Does the Download tool save time for such operations?

2. Does it allow such huge files residing on an FTP server to be read quickly?

3. Would you suggest moving such files from the shared network to FTP to ease the process?

Eagerly waiting for answers and advice.

Thanks in advance.


Hi guys,

Thank you for the information. It's really useful. I have another situation where I download files from FTP locations and have sorted the list of files by date. Now I want to upload the sorted files to the shared drive. How should I achieve this? I tried using the Download tool, but in vain. Any quick help?

Thanks

Harsh


Hi,

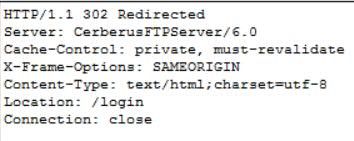

I am having an issue with the download tool. I have followed the instructions, and even tested the URL to ensure that it works, but when the tool goes to actually download the files from the FTP I get the following:

Any idea how to fix this issue?


Hi,

In the Text Input I inserted a second column indicating the path where the data should be saved, and then in the Download tool's Basic tab I selected "filename from a field" and chose the column with the specified path. But when I run the workflow, I get the following error message:

"Error: Download (1): Error Opening file: C:\Users\Documents\download: Access denied. (5)".

I modified the path and added the names of the files to download, but the zip file that is generated is empty, and reading the result in the Browse tool shows this:

"DownloadHeaders"

"HTTP/1.1 401 Unauthorized"

Thank you in advance for your help.


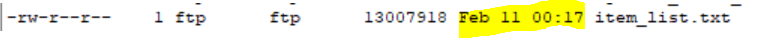

How do you get the FTP download to show the full file upload date? Mine never shows the year, only the month, day, and time. I have tried multiple FTP servers, and all of them show the year in FileZilla but not in Alteryx.


@MattD, any thoughts on my comment above? In your screenshot, the date shows as "02-08-16". Notice that in my screenshot below, the date appears like this:

How did you get your date to show the year in the Download tool? I have tried multiple FTP servers for clients (ours and theirs), and they all appear like this in Alteryx.


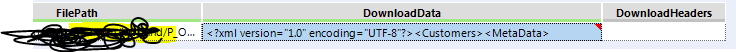

Hi @MattD, thanks for your answers, they help a lot. I have a question: once I have the full path, like this:

sftp://sftpxxxx.com/xxx/123xxxxabcdefg.XML, I get a file that looks like this, with all the data crowded into one column named "DownloadData". How can I load this file into Alteryx memory, or how can I download this XML file in its original format?

Thanks.


If you want it in Alteryx memory, you need to parse the data somehow. Typical tools for this include Tokenize, Text To Columns, etc.
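If the DownloadData cell holds a complete XML document, parsing it means turning repeated elements into rows. Alteryx also has an XML Parse tool for this kind of data. The Python sketch below shows the idea with the standard library's `xml.etree.ElementTree`; the `<records>`/`<record>` structure and the sample values are hypothetical, so substitute the row element name from your own file.

```python
import xml.etree.ElementTree as ET

# Hypothetical DownloadData content; the real file's tags will differ.
DOWNLOAD_DATA = """<?xml version="1.0"?>
<records>
  <record><id>1</id><amount>10.5</amount></record>
  <record><id>2</id><amount>7.25</amount></record>
</records>"""


def xml_to_rows(xml_text: str, row_tag: str) -> list[dict[str, str]]:
    """Turn each <row_tag> element into a dict of child tag -> text."""
    root = ET.fromstring(xml_text)
    rows = []
    for element in root.iter(row_tag):
        rows.append({child.tag: (child.text or "").strip() for child in element})
    return rows


if __name__ == "__main__":
    for row in xml_to_rows(DOWNLOAD_DATA, "record"):
        print(row)
```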

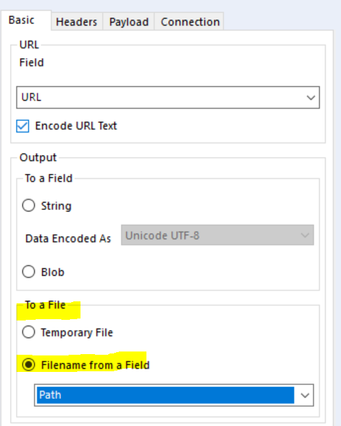

If you prefer to output the file and then use it in a different workflow, you can output it using this configuration in the Download tool:


Great, thanks a lot!


I've been able to download a file from an FTP server as outlined here, but I am unable to output to a "string" as outlined in this automated process.

In the Text Input tool that precedes the Download tool, I enter the URL pointing to the FTP location (the particular file is not included in this URL path).

In the Download tool, I select Output -> To a Field -> String, as outlined above.

This is where things get wonky: the "Response from Download Tool (b)" in my workflow looks nothing like the screenshot, which shows clear line items for each file, each including a date and time stamp.

From the procedure outlined above

From my workflow

You can see that all the files in the FTP location are contained in the "DownloadData" cell, but they do not include a date/time stamp, and I'm unsure of how to parse this data to reference the particular file I'm interested in...

Any ideas? I'm trying to automate a daily pull of a report and conceptually this process seems like a good fit; just having a tough time getting going 😛


Yeah, I had the same concern when I started. Every FTP site I have ever connected to looks like yours, and I have always wished it looked like the screenshot posted in this thread. In the end, what I noticed is that if a file is not from the current year, the listing includes the year; otherwise, it shows a time instead, and the file is from the current year.

To parse it, I just use Text To Columns and split on spaces, then select the columns I need and move on. The only time it is different is when you pull a file from a different year, so you can add some logic to account for that.

---------

As for your other issue: unless you are pulling the directory to learn the file name (or whether it has been updated), I am not sure why you need to parse the FTP directory at all. If you want the file's data in a string, you need to include the file name in your URL. If the directory listing is being returned to you, your URL doesn't include the file name.


Hi @Paulo1300,

Did you find a solution to your problem? I am getting the same response from the FTP server.


@Rahul3 No, I still use the process above (Text To Columns, split by space). If that one column contains a time, the file is from the current year; otherwise, the column holds the year.

For the date, I basically do the following (after a Text To Columns tool split on spaces):

```
IF Contains([DownloadData8], ":")
THEN [DownloadData6] + " " + [DownloadData7] + " " + Left(DateTimeNow(), 4)
ELSE [DownloadData6] + " " + [DownloadData7] + " " + [DownloadData8]
ENDIF
```

Then I use another formula to convert that output ([File Date]) to an Alteryx date, with %b for the abbreviated month name and %Y for the four-digit year:

```
DateTimeParse([File Date], "%b %d %Y")
```

One thing that doesn't work with this process: if the file name contains spaces, it gets spread across multiple columns. The way I get around that is to set Text To Columns to 9 columns and choose "Leave extra in last column". This works because every server I pull from returns 9 columns, and the last column is always the file name.
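Here is a Python sketch of the same approach for Unix-style (`ls -l`) listings, with a hypothetical `listing_date` helper. Splitting into at most 9 fields plays the role of "Leave extra in last column", so file names with spaces stay intact. One deviation from the formula above is an assumption worth stating: in the common `ls -l` convention, a time replaces the year for recently modified files (roughly the last six months), not strictly for files from the current year. A December file listed in January would therefore be dated in the future by the current-year rule, so the sketch rolls such dates back one year.

```python
from datetime import date, datetime
from typing import Optional


def listing_date(line: str, today: Optional[date] = None) -> tuple[str, date]:
    """Return (file name, modification date) from one Unix-style listing line.

    Assumes the 9-column `ls -l` layout: permissions, links, owner, group,
    size, month, day, time-or-year, name.
    """
    if today is None:
        today = date.today()
    # maxsplit=8 gives at most 9 fields; spaces inside the name are preserved
    parts = line.strip().split(None, 8)
    if len(parts) != 9:
        raise ValueError(f"unexpected listing line: {line!r}")
    month, day, time_or_year, name = parts[5], parts[6], parts[7], parts[8]
    if ":" in time_or_year:
        # A time instead of a year: start from the current year...
        parsed = datetime.strptime(f"{month} {day} {today.year}", "%b %d %Y").date()
        # ...and step back a year if that would put the file in the future
        if parsed > today:
            parsed = parsed.replace(year=parsed.year - 1)
    else:
        parsed = datetime.strptime(f"{month} {day} {time_or_year}", "%b %d %Y").date()
    return name, parsed


if __name__ == "__main__":
    sample = "-rw-r--r--   1 owner group   10240 Feb  8 09:12 FTP Example File.xlsx"
    print(listing_date(sample, today=date(2016, 3, 1)))
```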
