Hi, I'm using the in database tools and coming across a performance issue, wondering if anyone has experienced similar?

This isn't the exact scenario, but shows a close enough example.

There are 2 tables in an Amazon AWS Redshift server:

ProductRecords

--------------------

personid | product type | startdate | enddate

ActionRecords

--------------------

personid | action type | action date

I need to find out what product type was active at the time of action records.

So I need to join the actions to the product records, by personid and also ensure that action date falls between ProductRecords.startdate and ProductRecords.enddate.

If there is no corresponding Product record for the action, I still want to keep the action record.

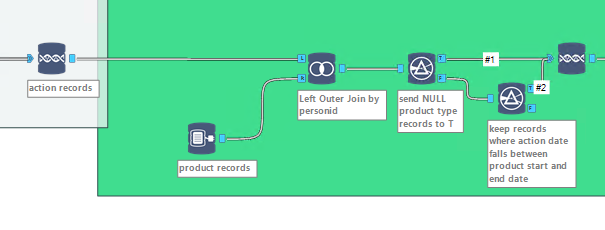

Alteryx doesn't yet allow conditional joins, so I do the following:

1. Use In DB join tool (Left outer) to join the 2 tables by person id, left side being the action records so the action is still kept if there are no product record for the personid

2. Use a filter tool on the results from step 1, in order to send the records with NULL product type value to T output

3. For the records sent to F output in step 2, they are sent to another filter tool so only records where actiondate falls between startdate and end date are kept

4. Union the T output from step 2, with the results from filter in step 3 It works fast up until the union step in step

Even using a small subset of data (less than 10,000 rows), it takes atleast 5 minutes for the union tool to show as complete. It must be the way the nested sql statements are being created in the background?

I've also tried doing something similar by using multiple joins and came across same bottleneck.

I'm also getting the performance bottlenecks when using in DB unions later on in the workflow

Any ideas? It would be great if it was possible to see what SQL statements are being generated in the background which could assist in figuring out where the problem is.