I have a pretty simple workflow here: I join an input data file to one database query, join anything that doesn't match to a second database query instead, then bring the two streams back together and write the result to a table.

This all happens in-db, so nothing is streaming back down to my computer that would slow it down.

I am also restricting the first query to just the top 1000 records, so only 1000 records pass through the whole workflow.

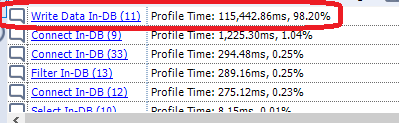
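For anyone who prefers to read the logic as SQL, here is a minimal sketch of roughly what the workflow does, using Python's built-in `sqlite3` so it runs anywhere. The table and column names (`input_data`, `query_a`, `query_b`, `output`, `id`, `val`, `info`) are made up for illustration, and I'm assuming the 1000-row limit applies to the rows that drive the joins. This is not the SQL the in-db tools actually generate, just the same shape of logic.

```python
import sqlite3

# In-memory database with tiny hypothetical tables standing in for the real sources.
conn = sqlite3.connect(":memory:")
cur = conn.cursor()
cur.executescript("""
CREATE TABLE input_data (id INTEGER, val TEXT);
CREATE TABLE query_a (id INTEGER, a_info TEXT);
CREATE TABLE query_b (id INTEGER, b_info TEXT);
INSERT INTO input_data VALUES (1, 'x'), (2, 'y'), (3, 'z');
INSERT INTO query_a VALUES (1, 'from A');
INSERT INTO query_b VALUES (2, 'from B'), (3, 'from B');
""")

# Join to the first query, send unmatched rows to the second query,
# union both results, and write them to a table, all inside the database.
cur.execute("""
CREATE TABLE output AS
WITH first_n AS (
    SELECT id, val FROM input_data ORDER BY id LIMIT 1000
),
matched AS (
    SELECT i.id, i.val, a.a_info AS info
    FROM first_n i JOIN query_a a ON a.id = i.id
),
unmatched AS (
    SELECT i.id, i.val
    FROM first_n i LEFT JOIN query_a a ON a.id = i.id
    WHERE a.id IS NULL
),
fallback AS (
    SELECT u.id, u.val, b.b_info AS info
    FROM unmatched u JOIN query_b b ON b.id = u.id
)
SELECT id, val, info FROM matched
UNION ALL
SELECT id, val, info FROM fallback
""")

rows = cur.execute("SELECT id, val, info FROM output ORDER BY id").fetchall()
print(rows)
```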

With only 1000 records, the workflow runs in 4 seconds... until I add a Write Data In-DB tool at the end. It's literally just putting those 1000 records into a table, and it isn't even streaming them from my computer, since it's all in-db. That one extra tool takes the run time from 4 seconds to 2-3 minutes. 2-3 minutes just to write 1000 rows that are already in-db into a table? My full starting dataset has 1.5 million records. If the run time scales linearly, the whole thing would take two to three days at that pace.
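As a rough sanity check on the full run, here is a back-of-the-envelope extrapolation. It assumes the write time scales linearly with row count, which may well not hold (some of those 2-3 minutes could be fixed overhead rather than per-row cost), so treat it as an upper-end guess rather than a measurement.

```python
# Figures from the test run described above.
rows_tested = 1_000
rows_total = 1_500_000
write_minutes_low, write_minutes_high = 2, 3

# Assumption: cost is proportional to rows, i.e. 1500 "batches" of 1000 rows.
batches = rows_total / rows_tested
hours_low = batches * write_minutes_low / 60
hours_high = batches * write_minutes_high / 60

print(f"{hours_low:.0f} to {hours_high:.0f} hours "
      f"({hours_low / 24:.1f} to {hours_high / 24:.1f} days)")
# prints "50 to 75 hours (2.1 to 3.1 days)"
```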