Hello,

I'm wondering if it's possible for the Blob Input to read a full file path when that path is an HDFS file path?

I have a use-case whereby I want to output a file into HDFS. I also want to be able to do a checksum process against this same file, hence I thought to use the Blob Input.

However, the Blob Input doesn't seem to accept an HDFS location as a file path. I'm wondering whether this is a limitation of the tool itself or whether I just have access issues.

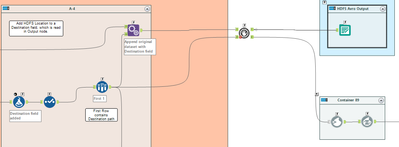

1. The dataset has a Destination field appended, which updates the HDFS file path to include changes to the file name.

2. The Output node uses the Destination field as the file path when outputting to HDFS.

Ideal Goal:

3. Once the above output is generated in HDFS, the second step of the Parallel Block Until Done begins.

4. The Destination field is also fed into the Blob Input, so that I can run a Blob Convert against the generated Blob field.

5. The resulting hash is then output to a separate location in HDFS.
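
For reference, here is a rough Python sketch of the checksum I'm after in steps 4 and 5, done outside the workflow. It assumes the HDFS file can be opened as a binary stream by whatever HDFS client is available. The `checksum` function name, the SHA-256 default, and the in-memory stand-in for the HDFS file are all illustrative assumptions and are not part of the Blob Input or Blob Convert tools.

```python
import hashlib
import io


def checksum(stream, algorithm="sha256", chunk_size=1024 * 1024):
    """Return the hex digest of a binary file-like object, read in chunks.

    Hypothetical helper: `stream` is anything with a .read(n) method that
    returns bytes, such as a file handle from an HDFS client library.
    """
    digest = hashlib.new(algorithm)
    # Read fixed-size chunks so a large file never has to fit in memory.
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


# Stand-in for the file written to HDFS in step 3 (assumption: a real
# run would pass the stream returned by the HDFS client instead).
sample = io.BytesIO(b"hello world")
file_hash = checksum(sample)
print(file_hash)
```

The hex string returned here is what would be written to the separate HDFS location in step 5. Reading in chunks gives the same digest as hashing the whole file at once, so the chunk size only affects memory use.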