Hi all,

I’m looking for advice on the best approach for triggering a series of SQL update statements on snowflake (one part of a wider process where we use more diverse Alteryx functions). These SQL statements don’t require data to be input or output, so we really just need to execute them in sequence, with some control on order and dependencies. I know you can use pre/post SQL on an input or output tool, but these can’t, as far as I know, be chained together. General requirements:

- Stays as close to pure SQL as possible, for easier updating of queries created elsewhere;

- Update statements can be chained together with flow control and dependencies;

- Low maintenance;

- Relatively easy to understand the flow visually

Some options I’ve considered:

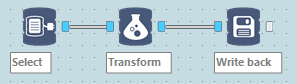

1. Using standard in-db tools to select, transform and write back data – not ideal for transfer-ability to/from other tools, and since full SQL statements already exist which could theoretically be executed in a single tool. The example below would equate to a single update statement, but dozens are required.

2. Using the ‘run’ tool with snowsql CLI tool to run SQL stored in text files – possibly the best option, but a bit fiddly? Workflow would look a bit like option 5, but with 'run' tool instead of custom macro

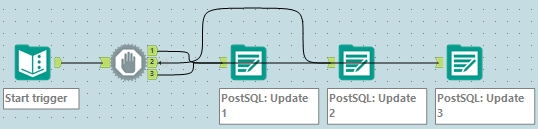

3. Chaining together output tools, using write to a dummy table, with the SQL Update query stored in the pre or post-SQL, like below

4. Using the CREW runner macros to run workflows one after another. This would require creating too many separate workflows, and reduce direct transparency

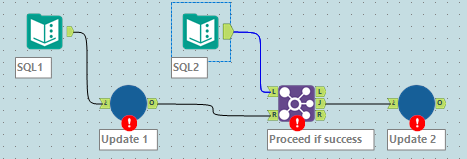

5. Creating a chain-able macro with one SQL input and one ‘trigger’ input, plus a success/fail output that is just dynamically setting the Post SQL on an output node (like the CREW conditional runner macro). Please ignore the errors on my mock up below:

Do these approaches make sense, or is there some vastly simpler way that I’m overlooking? I’d love it if Alteryx could provide a single ‘Run SQL’ tool, but this doesn’t seem to exist.

Thanks in advance!