Hello,

I'm trying to read files from an HDFS environment via HTTPFS using the built-in Hadoop connector in the Input Data tool. I have Kerberos authentication set up as SSPI. I'm able to read (and write) from my local 2019.1 Designer using my Windows credentials. I'm now trying to test this connection on Alteryx Gallery (also 2019.1), but I get errors both when publishing the workflow and when running it in Gallery.

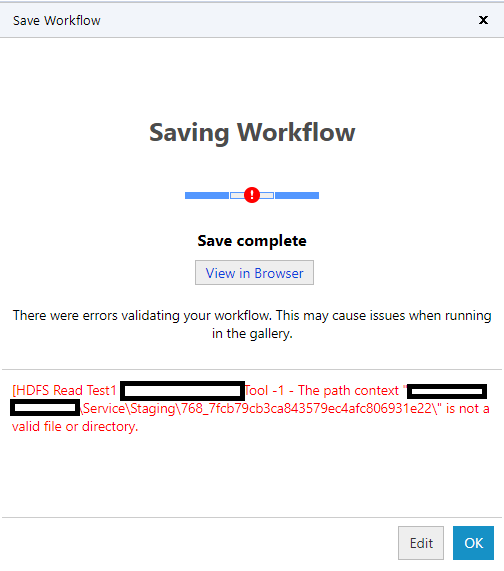

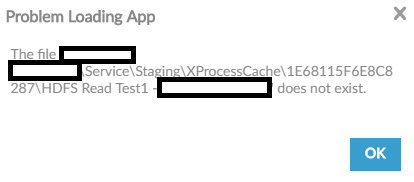

I've set up Workflow Credentials to run the workflow as a Service Account in Gallery. That Service Account has the necessary "run-as" permissions on Alteryx Server and has read/write access to HDFS. I get the errors shown above when I try to publish or run the workflow. As I understand it, workflows are pulled from MongoDB at run time and stored temporarily in the XProcessCache folder in the Service\Staging directory. I'm not sure what the process is trying to find in the XProcessCache folder when it runs the workflow. A couple of other users have hit this issue as well, not just me. I'm also not sure whether this has anything to do with Kerberos.

Has anybody else faced this issue? Thanks.