Hi everyone,

I'm having a real headache with a particular file (literally).

The problem: the data is provided as a single-field text file. The field is about 9,000 characters wide, and I have about 500,000 records.

The data does come with a datamap that I can upload into Alteryx, and thanks to a very kind member of this community (@BobMoss) I can get that to parse my data.

The issue I'm having relates to the RegEx tokenize function. To map the data, every character in the datafile is parsed out into its own column, and then regrouped and transformed into the end result I want. But because of that level of parsing, everything grinds to a halt when I run the whole file of hundreds of thousands of records. The file size balloons to hundreds of gigabytes, and things get a little impractical.

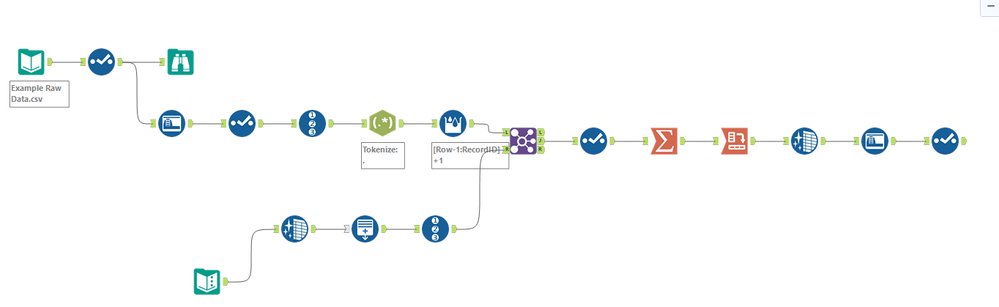

So, I was wondering if there is another way to do this? I've attached my workflow and sample file (note, the sample file is only 500 records, so you'll be able to see how nicely the workflow works with small files).
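
To show the kind of alternative I have in mind, here is a rough Python sketch that slices each field straight out of the record by start position and length, one record at a time, instead of splitting every character into its own column. It is only an illustration under assumptions: the datamap layout (field name, 1-based start, length), the field names, and the sample records are all made up, and my real datamap may look different.

```python
import csv
import io

# Hypothetical datamap: (field name, 1-based start position, length).
# The real datamap's columns and values are assumed, not taken from my file.
DATAMAP = [
    ("id", 1, 4),
    ("name", 5, 10),
    ("amount", 15, 6),
]


def parse_record(line, datamap):
    """Slice one fixed-width record into named fields by position."""
    return {
        name: line[start - 1 : start - 1 + length].strip()
        for name, start, length in datamap
    }


def parse_stream(lines, datamap):
    """Yield one parsed record per input line, so only one line is held at a time."""
    for line in lines:
        line = line.rstrip("\r\n")
        if line:  # skip blank lines
            yield parse_record(line, datamap)


def convert(src, dst, datamap):
    """Read fixed-width records from src, write them to dst as CSV, return the row count."""
    writer = csv.DictWriter(dst, fieldnames=[name for name, _, _ in datamap])
    writer.writeheader()
    count = 0
    for row in parse_stream(src, datamap):
        writer.writerow(row)
        count += 1
    return count


# Made-up sample records, padded to match the hypothetical datamap above.
sample_text = (
    "0001" + "Alice".ljust(10) + "001250" + "\n"
    + "0002" + "Bob".ljust(10) + "000075" + "\n"
)
out = io.StringIO()
rows_written = convert(io.StringIO(sample_text), out, DATAMAP)
```

The point of the sketch is that the work per record scales with the number of fields in the datamap rather than with the 9,000 characters, and nothing wider than the final output is ever materialised. I don't know whether something equivalent can be done inside the workflow itself.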

Any ideas?