Alteryx beginner here. I have about 1.6 million records in my dataset. For each record, I need to generate a random number, add a couple of calculated fields, and then summarize the data by a few different fields. I then need to run all 1.6 million records again and again, generating a new random number each time and recomputing the calculated fields and the summary, for a total of 10,000 simulations. I am currently using an iterative macro for this, and it runs very slowly. I ran all 10,000 simulations last night, and it looked like there was 3.3 TB of data in the macro step. Is there a more efficient way of doing this?
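
For a sense of scale, here is a quick back-of-the-envelope check in Python (not Alteryx). It assumes the 3.3 TB figure is a cumulative total across all iterations and that "TB" means decimal terabytes; both are guesses on my part.

```python
# Rough scale check using the approximate figures from this post.
records = 1_600_000        # rows in the dataset
simulations = 10_000       # iterations of the macro
total_bytes = 3.3e12       # 3.3 TB, assuming decimal terabytes

# Every record is processed once per simulation.
row_passes = records * simulations

# If the 3.3 TB is cumulative, this is the average size of one row per pass.
bytes_per_row_pass = total_bytes / row_passes

print(row_passes)          # 16000000000
print(bytes_per_row_pass)  # 206.25
```

That works out to about 16 billion row passes at roughly 200 bytes each, which would fit with the full record set being carried through the macro on every iteration rather than only the summaries.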

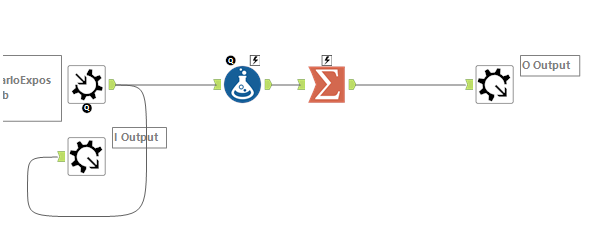

Below is the macro workflow. It's very simple: I read in the data, calculate my random number and the other fields, then summarize that particular run and output the summary. The same data that was read in is then fed back into the macro for the next iteration. The maximum number of iterations is set to 10,000 to get my 10,000 simulations. Any ideas as to what might be causing Alteryx to hold so much data in memory? I'm having some trouble attaching my workflows, so I hope this summary is helpful enough.
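
Since I can't attach the workflow, here is the same logic as a minimal Python sketch. It is not Alteryx code, and the names are placeholders I made up: `run_simulations`, the `(group, value)` record layout, and the `value * r` calculated field only stand in for my real fields and formulas.

```python
import random
from collections import defaultdict


def run_simulations(records, n_sims, seed=0):
    """Run n_sims passes over records and return one summary per pass.

    records is a list of (group, value) tuples (hypothetical layout).
    """
    rng = random.Random(seed)  # seeded so the runs are reproducible
    summaries = []
    for _ in range(n_sims):
        totals = defaultdict(float)
        for group, value in records:
            r = rng.random()        # new random number for each record
            calc = value * r        # placeholder for the calculated fields
            totals[group] += calc   # summarize by the grouping field
        # Only the small per-run summary is kept; the detail rows are not.
        summaries.append(dict(totals))
    return summaries


# Tiny stand-in for the 1.6 million records.
records = [("A", 10.0), ("A", 5.0), ("B", 2.0)]
out = run_simulations(records, n_sims=3)
print(len(out))  # 3
```

In this sketch the input records never change between runs; the only thing that accumulates is one small summary per simulation.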