Hi all,

I just stumbled over an issue where the Dynamic Rename tool destroys my data and massively increases the data size.

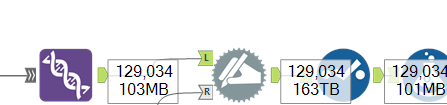

The production workflow looks like this:

The incoming data is about 100 MB. After the Dynamic Input it is 163 TB, and after a single Select tool (with no configuration applied) it is back at 100 MB.

Data points are missing after the conversion, and while debugging I saw garbled characters coming out of the tool, but unfortunately I have no screenshot.

In simple terms, my workflow maps a data file, using a mapping file, into a predefined data model with given types.
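To make the intent concrete outside of Alteryx, here is a rough Python sketch of what the mapping step is supposed to do. The column names, mapping entries, and target types are made up for illustration and are not taken from my actual workflow.

```python
# Hypothetical mapping: source column -> (target field name, target type).
MAPPING = {
    "cust_id": ("CustomerID", int),
    "amt": ("Amount", float),
    "note": ("Comment", str),
}

def apply_mapping(rows, mapping):
    """Rename each mapped column and cast its value to the target type."""
    result = []
    for row in rows:
        mapped = {}
        for source, (target, cast) in mapping.items():
            value = row.get(source)
            # Keep missing or empty values as None instead of casting them.
            mapped[target] = cast(value) if value not in (None, "") else None
        result.append(mapped)
    return result

rows = [{"cust_id": "7", "amt": "12.50", "note": "ok"}]
print(apply_mapping(rows, MAPPING))
# -> [{'CustomerID': 7, 'Amount': 12.5, 'Comment': 'ok'}]
```

The point is that the output should hold exactly the same values as the input, only renamed and retyped, so the data size should stay in the same range.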

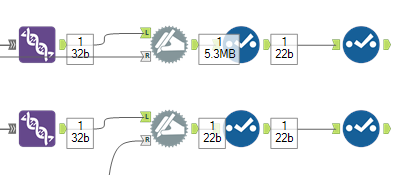

For this, I set the types with the Dynamic Rename tool shown above, using the configuration "Change field type and size". This only works when the mapping comes from a Text Input tool, not when it comes from my calculations.

I have no idea what is happening here and I suppose this is some kind of bug unfortunately.

The attached workflow contains two examples. One of them causes the issue, and the size increases drastically from 22b to 5.3MB:

Has anyone experienced something similar, or can anyone spot the issue here? I would love to use this method for my production workload ...

I am using version 2022.1.1.42590 and also tested with 2023.1. If it works in another version, that would be fine for me too.

Thanks a lot in advance!

Max