I have a data set that has multiple rows of data and multiple fields. Not all fields may have a value and some fields may have the same value in multiple rows. I am trying to create a single row of related data "rollup" and then in each field concatenate if needed and deduplicate the concatenated list of value.

What I have been doing so far is to create a unique key based on a combination of fields. Then I test if there is actually values in those fields and if not -- I send those rows around the process... Say for example the unique key is First Name Last Name DOB. If there is not DOB in a row then that row goes around the process.... I dont want to have a unique key of something like johnsmith as I might have lots of Johnsmith but different people. So for the rows that I can make a unique key, I then group by the key and concatenate the fields with a summary tool. I then add a record ID and then to deduplicate each concated list I use a select tool send the record ID and field , text to columns (rows) based on my delimiter, use the unique tool to deduplicate and then resummarize. In some cases I may also use a filter tool to remove null values. I then multi join back all the fields based on the record ID and rejoin to the bypass rows.... I might then create a different unique key and repeat this process until I've "vertically" consolidated as much as possible.

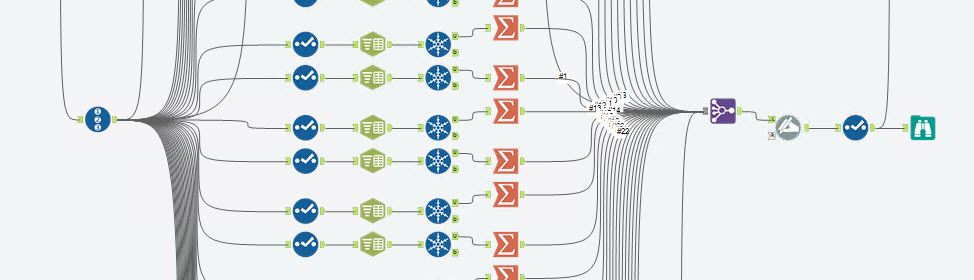

the process looks a bit like this... each row in the container represents a field. So if I have 50 different fields, I have 50 different rows, each one doing the same thing but the field name changes.

If I have a lot of fields its a lot of manual.... I figure there is a macro way to do this....but I'm drawing a blank on how to start. I figure the marco would consist of the SELECT, TEXT TO COLUMN (ROWS), UNIQUE, FILTER for NOT NULL and then SUMMARIZE group by record and concate value.

Should be simple...but struggling with how I would send each different field into the macro and then have it all join back up again.

For each project the fields might called something different as well and the number may vary...

There might also be a better way to solve this that I'm also not thinking about. I'm dealing with sensitive data so I cant share a table...but hopefully you get what I'm trying to do.