INTRO

This macro may prove useful to anyone sending data to ChatGPT. It truncates a string to a given number of tokens, cutting at a space so that the result ends on a whole word.

ChatGPT charges by the token: you pay for the tokens you send and the tokens you receive. Tokens are parts of words, so the number of tokens in a string is typically less than the number of letters but more than the number of words. You might think of tokens as similar to spoken syllables. That gives you a feel for tokens, but it is not precise enough to work to a limit.

PYTHON

OpenAI recommend using a Python library called "TikToken" to calculate token lengths for strings. It is unrelated to TikTok. This macro uses the TikToken library to iterate towards a string that comes under the token limit provided. The library is installed locally on your PC in the User area, so the macro should work for users who do not have Admin-level access to their machines.
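
The iteration described above can be sketched in plain Python. This is a minimal illustration and not the macro's actual code: the names truncate_to_token_limit and tiktoken_counter are hypothetical, the "cl100k_base" encoding is an assumed choice, and both the one-word-per-pass trimming and the "at or below the limit" comparison are assumptions about how the iteration behaves. The token counter is passed in as a function so the logic can be tried without the library installed.

```python
from typing import Callable, Tuple


def tiktoken_counter(encoding_name: str = "cl100k_base") -> Callable[[str], int]:
    """Return a token counter backed by the tiktoken library (imported lazily).

    The encoding name is an assumption; choose the one that matches your model.
    """
    import tiktoken  # third-party package, must be installed separately

    encoding = tiktoken.get_encoding(encoding_name)
    return lambda text: len(encoding.encode(text))


def truncate_to_token_limit(
    text: str, token_limit: int, count_tokens: Callable[[str], int]
) -> Tuple[str, int]:
    """Drop trailing words until the token count is within the limit."""
    truncated = text
    count = count_tokens(truncated)
    while count > token_limit and truncated:
        # Cut at the last space so the result never ends mid-word.
        cut = truncated.rfind(" ")
        truncated = truncated[:cut].rstrip() if cut != -1 else ""
        count = count_tokens(truncated)
    return truncated, count


def word_counter(text: str) -> int:
    """Stand-in counter (one token per word) for trying the loop offline."""
    return len(text.split())


print(truncate_to_token_limit("Mary had a little lamb", 4, word_counter))
```

With the library installed, passing tiktoken_counter() in place of word_counter gives real token counts. Passing a very large limit returns the text unchanged together with its count.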

QUICK START

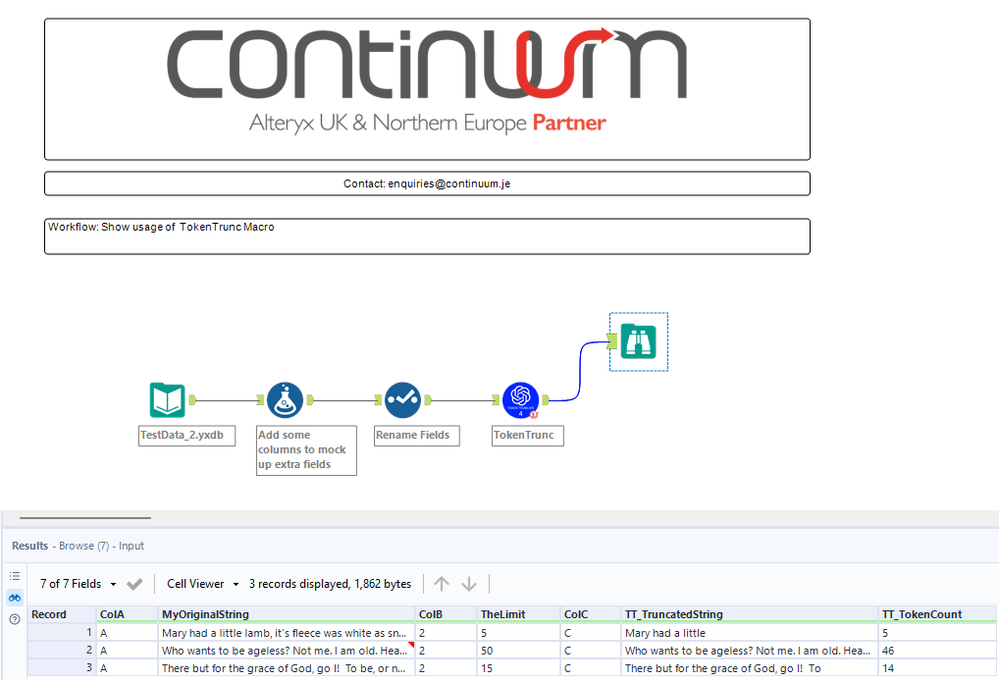

Download and unzip the macro to your working folder. Pass a string and an integer token limit to the macro. The macro will output "TT_TruncatedString", which is the string trimmed to fall under the limit, and "TT_TokenCount", which is the length of the truncated string in tokens.

IN DEPTH GUIDE

The macro relies on Python, which brings potential gotchas around installing libraries. To reduce these, the library is installed to the User folder, which does not need Admin rights, but this may not be foolproof.
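
For reference, a per-user install of a Python package is commonly done with pip's --user flag, which writes to the user's own site-packages folder rather than a system location. The sketch below only builds that command, with a separate function to run it. Whether the macro installs the library in exactly this way is an assumption, and user_install_command and install_for_user are hypothetical helper names.

```python
import subprocess
import sys
from typing import List


def user_install_command(package: str) -> List[str]:
    """Build a pip command that installs into the current user's site-packages."""
    # sys.executable makes pip run under the same interpreter as this script.
    return [sys.executable, "-m", "pip", "install", "--user", package]


def install_for_user(package: str) -> None:
    """Run the per-user install; raises CalledProcessError if pip fails."""
    subprocess.check_call(user_install_command(package))


# Show the command without running it.
print(" ".join(user_install_command("tiktoken")))
```

If the install fails, running the printed command by hand in a terminal usually gives a clearer error message than the workflow log.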

Pass a string and an integer token limit into the macro. The macro preserves your input string and any columns passing through it, and adds a "TT_TruncatedString" field and a "TT_TokenCount" field. TT_TruncatedString is cut at the last space, so that it does not end midway through a word. You may still want to edit the string further so that it ends at a full stop (or period). [To do that, use a Formula tool to reverse the string, find the position of the first (i.e. last in the original) period, use SubString() to trim, and then re-reverse the string.] TT_TokenCount tells you the size of the truncated string in tokens.
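
Outside of a Formula tool, the same end-at-a-full-stop tidy-up can be sketched in Python, where a search from the right-hand end replaces the reverse, find, and re-reverse steps. The function name trim_to_last_full_stop is hypothetical, and returning the string unchanged when it contains no full stop is an assumed design choice.

```python
def trim_to_last_full_stop(text: str) -> str:
    """Cut the text just after its last full stop, if there is one."""
    last_stop = text.rfind(".")  # searches from the right-hand end
    if last_stop == -1:
        return text  # no full stop found: leave the string untouched
    return text[: last_stop + 1]


print(trim_to_last_full_stop("It was white. Everywhere that Mary"))  # prints "It was white."
```

Note that a full stop inside a number or an abbreviation would also match, so check the results on your own data.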

If you only want to know the token length of a string, set the token limit very high. The macro will then perform no truncation, but will still report the token length.

The macro is a wrapper that calls an iterative sub-macro. If you move or copy the main macro, make sure you also take the sub-macro.

EXAMPLE

In the image above, MyOriginalString has been trimmed from "Mary had a little lamb, its fleece was white as snow" to "Mary had a little". The original columns passed into the macro persist in their original order, and two TT_-prefixed columns are appended.
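
The column behaviour described above can be mimicked on a plain Python dictionary standing in for one record. This is only an illustration: append_tt_fields is a hypothetical helper, the "ID" column is made up, and the token count is a placeholder supplied by hand rather than a measured value.

```python
from typing import Any, Dict


def append_tt_fields(
    record: Dict[str, Any], truncated: str, token_count: int
) -> Dict[str, Any]:
    """Return a copy of the record with the two TT_ fields added at the end."""
    output = dict(record)  # the copy keeps the original columns and their order
    output["TT_TruncatedString"] = truncated
    output["TT_TokenCount"] = token_count
    return output


row = {"ID": 1, "MyOriginalString": "Mary had a little lamb, its fleece was white as snow"}
placeholder_count = 4  # illustrative only, not a measured token count
result = append_tt_fields(row, "Mary had a little", placeholder_count)
print(list(result))
```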

CONTINUUM

This macro is the product of research by Continuum Jersey. We specialise in applying cutting-edge technology to process automation, using Alteryx and AI tech for creative solutions. If you would like to know how your business could apply and benefit from Alteryx, and the agility and efficiency it provides, we would like to talk to you. Please visit dubDubDub dot Continuum dot JE, or send an email to enquiries at Continuum dot JE.

DISCLAIMER

This macro is free for any use, commercial or otherwise. Resale of this macro is not permitted, either on its own or as a component of a larger macro package or product. Please bear in mind that you use it at your own risk. Continuum accepts no risk or exposure to liability in the use of this macro.